卷積神經網絡遷移學習-Inception

? 有論文依據表明可以保留訓練好的inception模型中所有卷積層的參數,只替換最后一層全連接層。在最后 這一層全連接層之前的網絡稱為瓶頸層。

? 原理:在訓練好的inception模型中,因為將瓶頸層的輸出再通過一個單層的全連接層,神經網絡可以很好 的區分1000種類別的圖像,所以可以認為瓶頸層輸出的節點向量可以被作為任何圖像的一個更具有表達能 力的特征向量。于是在新的數據集上可以直接利用這個訓練好的神經網絡對圖像進行特征提取,然后將提 取得到的特征向量作為輸入來訓練一個全新的單層全連接神經網絡處理新的分類問題。

? 一般來說在數據量足夠的情況下,遷移學習的效果不如完全重新訓練。但是遷移學習所需要的訓練時間和 訓練樣本要遠遠小于訓練完整的模型。

? 這其中說到inception模型,其實它是和Alexnet結構完全不同的卷積神經網絡。在Alexnet模型中,不同卷積 層通過串聯的方式連接在一起,而inception模型中的inception結構是將不同的卷積層通過并聯的方式結合 在一起

Inception

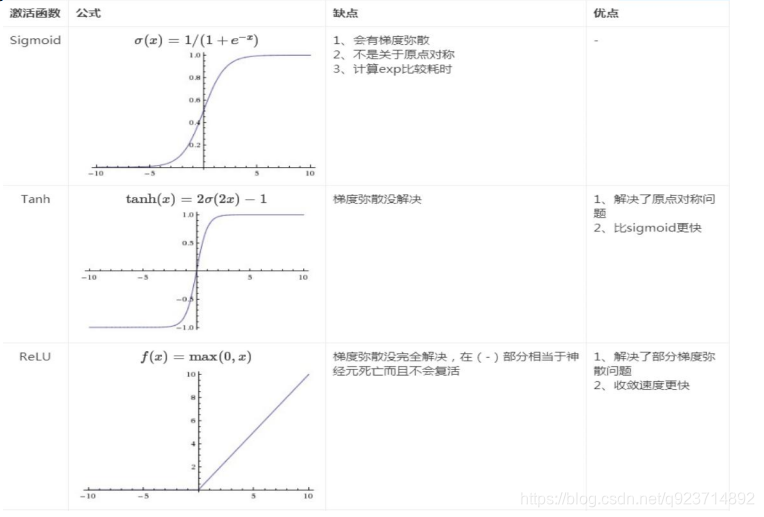

Inception 網絡是 CNN 發展史上一個重要的里程碑。在 Inception 出現之前,大部分流行 CNN 僅僅是把卷積層堆疊得越來越多,使網絡越來越深,以此希望能夠得到更好的性能。但是存 在以下問題:

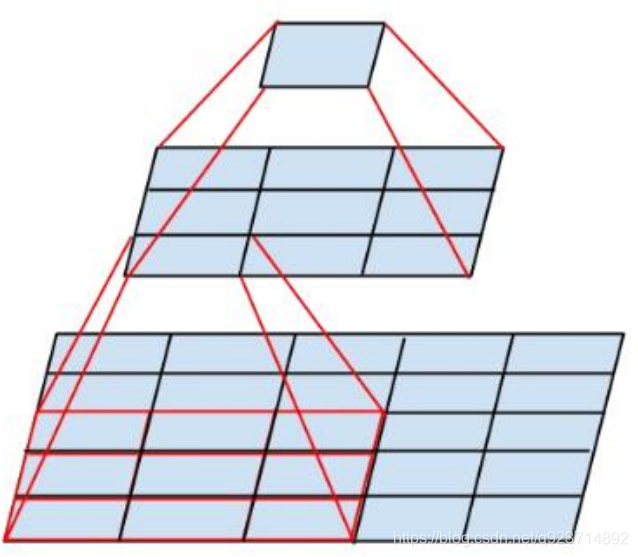

- 圖像中突出部分的大小差別很大。

- 由于信息位置的巨大差異,為卷積操作選擇合適的卷積核大小就比較困難。信息分布更全局性的圖像偏好較大的卷積核,信息分布比較局部的圖像偏好較小的卷積核。

- 非常深的網絡更容易過擬合。將梯度更新傳輸到整個網絡是很困難的。

- 簡單地堆疊較大的卷積層非常消耗計算資源。

狗在各個圖片的占比不同~可能想要得到的部分在圖中占比很小。

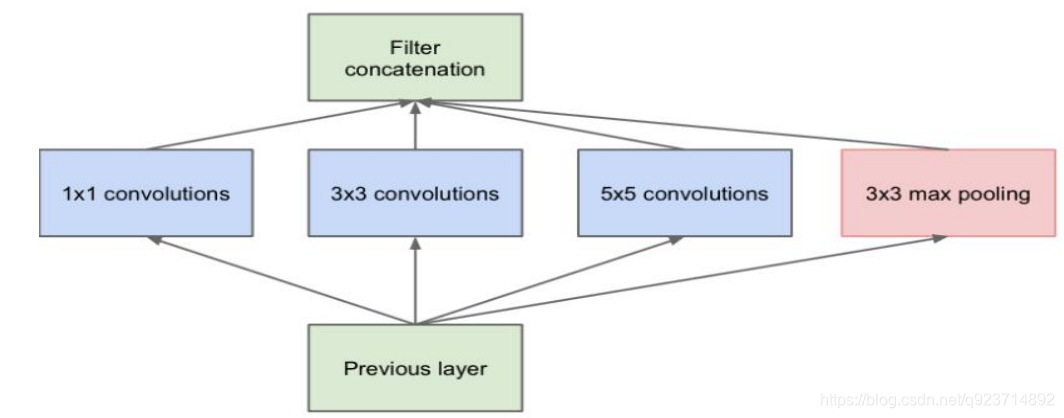

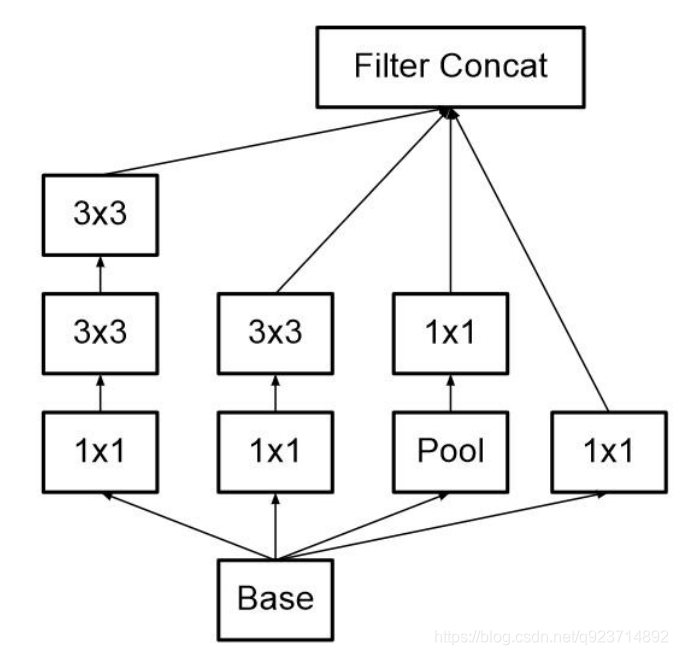

Inception module

解決方案:

為什么不在同一層級上運行具備多個尺寸的濾波器呢?網絡本質上會變得稍微「寬一些」,而不是 「更深」。

作者因此設計了 Inception 模塊。

Inception 模塊( Inception module ):

它使用 3 個不同大小的濾波器(1x1、3x3、5x5)對輸入執 行卷積操作,此外它還會執行最大池化。所有子層的輸出最后會被級聯起來,并傳送至下一個 Inception 模塊。 一方面增加了網絡的寬度,另一方面增加了網絡對尺度的適應性

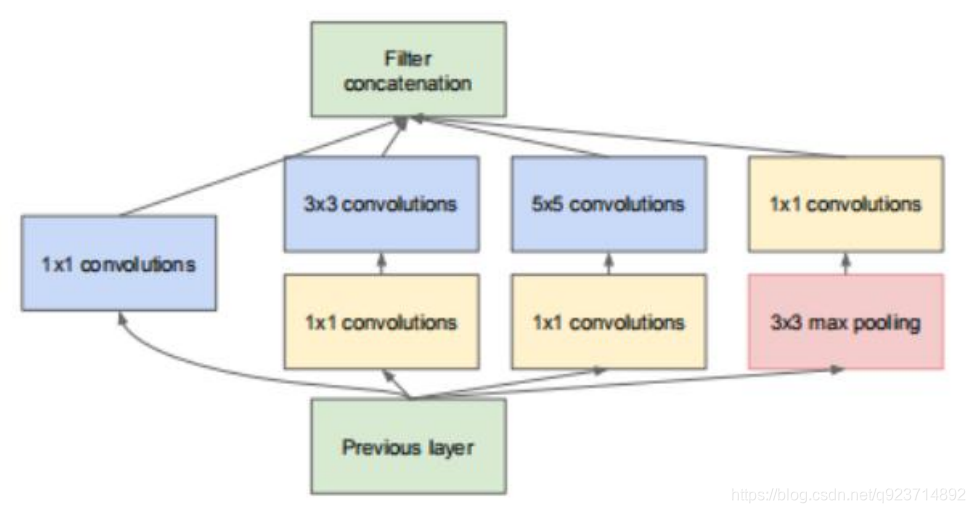

實現降維的 Inception 模塊:

如前所述,深度神經網絡需要耗費大量計算資源。為了降低算力成 本,作者在 3x3 和 5x5 卷積層之前添加額外的 1x1 卷積層,來限制輸入通道的數量。盡管添加額 外的卷積操作似乎是反直覺的,但是 1x1 卷積比 5x5 卷積要廉價很多,而且輸入通道數量減少也 有利于降低算力成本。

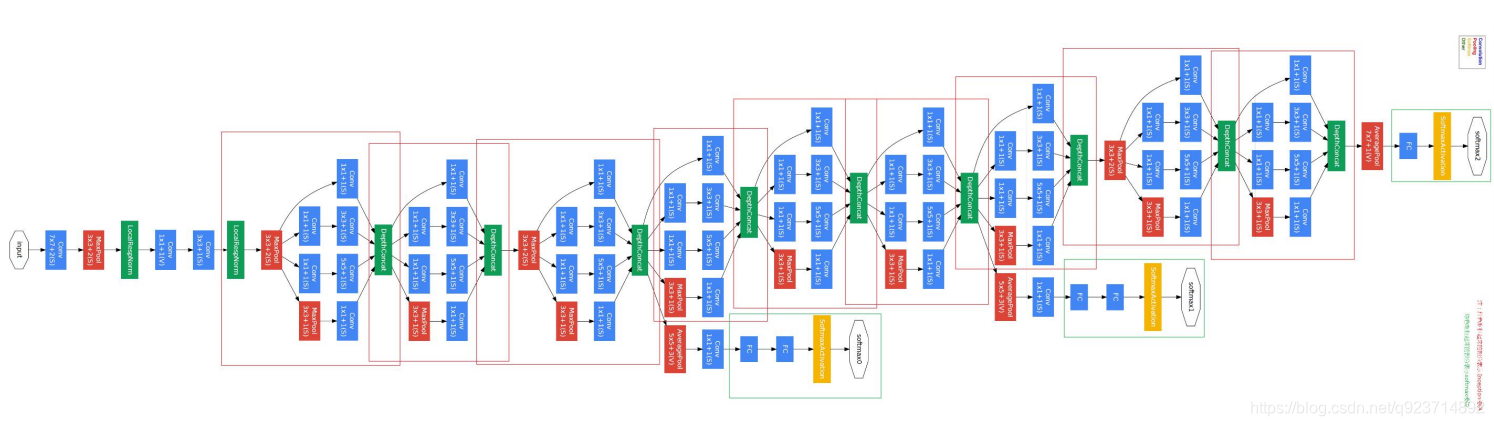

InceptionV1–Googlenet

- GoogLeNet采用了Inception模塊化(9個)的結構,共22層;

- 為了避免梯度消失,網絡額外增加了2個輔助的softmax用于向前傳導梯度。

V1改進版–InceptionV2

改進一:

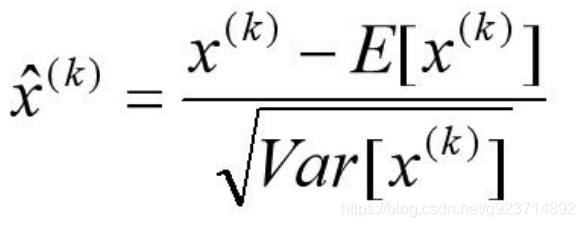

Inception V2 在輸入的時候增加了BatchNormalization:

? 所有輸出保證在0~1之間。

? 所有輸出數據的均值接近0,標準差接近1的正太分布。使其落入激活函數的敏感區,避免梯度 消失,加快收斂。 ? 加快模型收斂速度,并且具有一定的泛化能力。

? 可以減少dropout的使用。

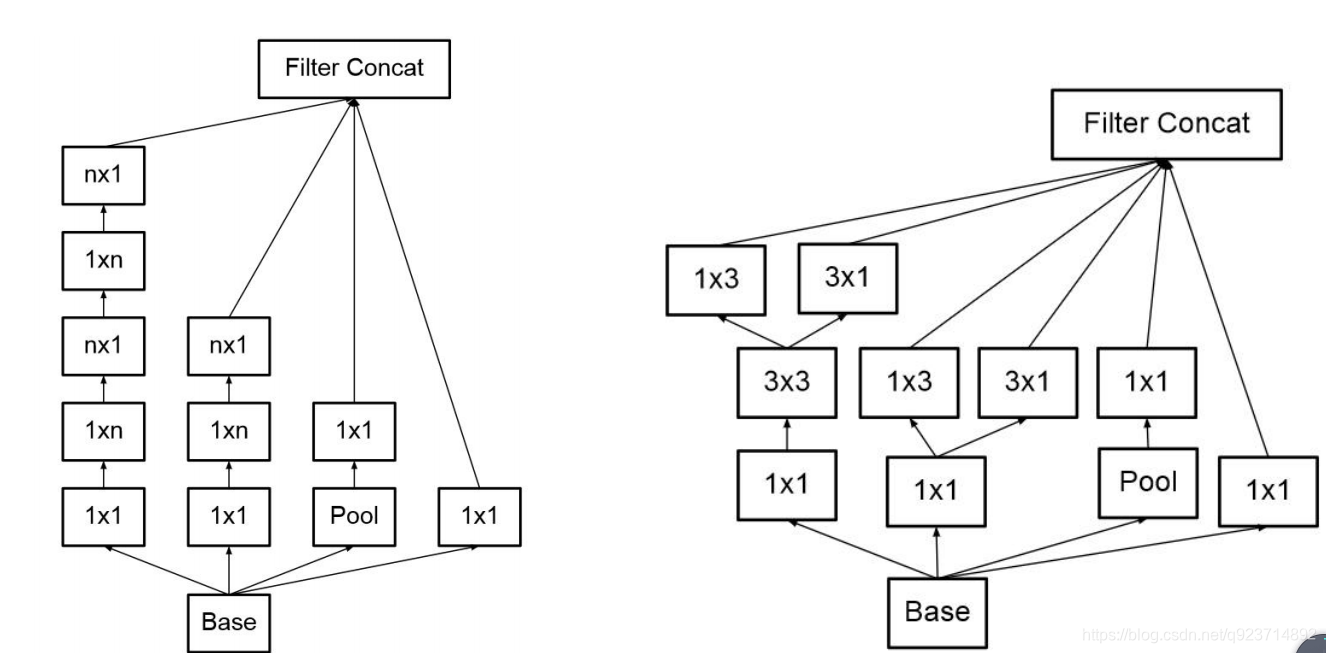

改進二:

? 作者提出可以用2個連續的3x3卷積層(stride=1)組成的小網絡來代替單個的5x5卷積 層,這便是Inception V2結構。

? 5x5卷積核參數是3x3卷積核的25/9=2.78倍。

改進三:

此外,作者將 n*n 的卷積核尺寸分解為 1×n 和 n×1 兩個卷積。

前面三個原則用來構建三種不同類型 的 Inception 模塊。

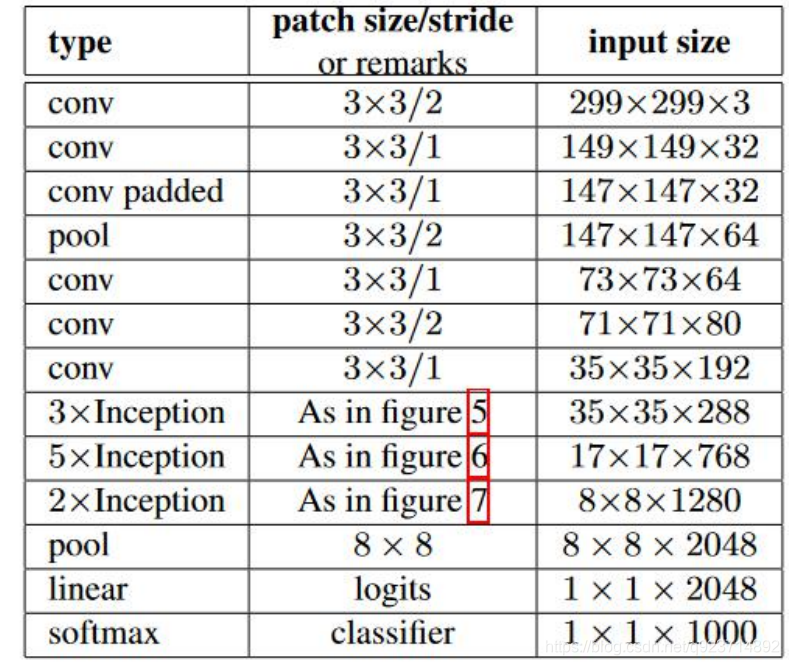

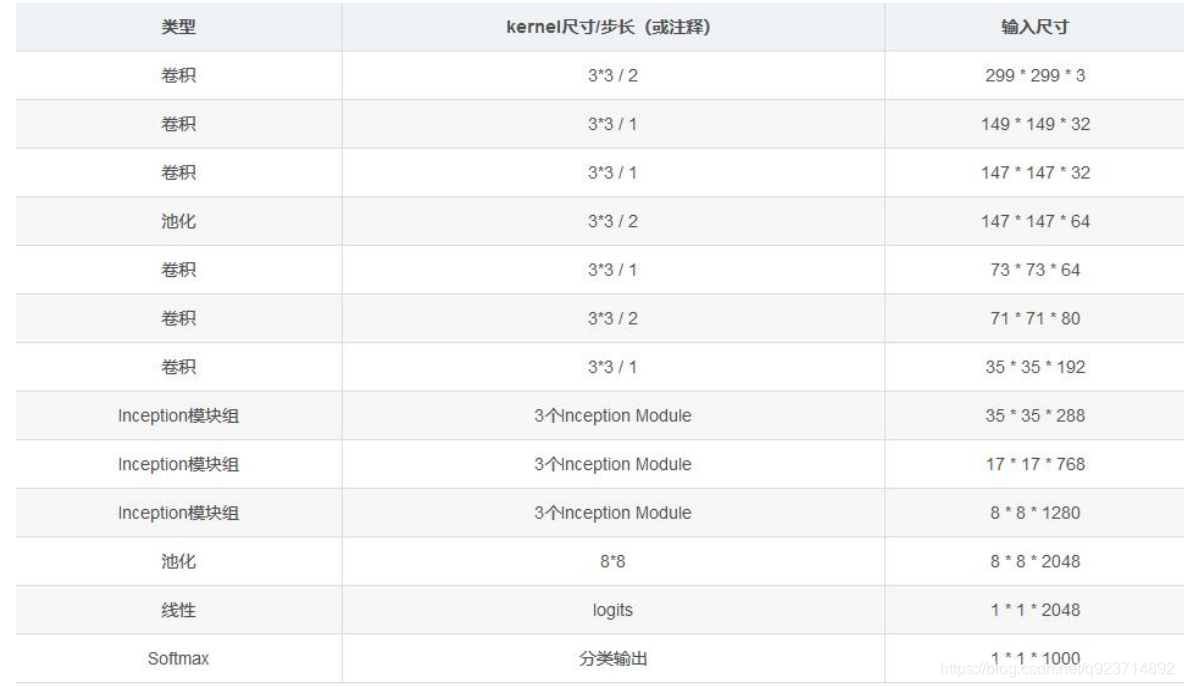

V2改進版–InceptionV3

改進:

InceptionV3 整合了前面 Inception v2 中提到的所有升級,還使用了7x7 卷積

思想和Trick:

Inception V3設計思想和Trick:

(1)分解成小卷積很有效,可以降低參數量,減輕過擬合,增加網絡非線性的表達能力。

(2)卷積網絡從輸入到輸出,應該讓圖片尺寸逐漸減小,輸出通道數逐漸增加,即讓空間結 構化,將空間信息轉化為高階抽象的特征信息。

(3)Inception Module用多個分支提取不同抽象程度的高階特征的思路很有效,可以豐富網絡 的表達能力

InceptionV3代碼實現

網絡部分:

#-------------------------------------------------------------#

# InceptionV3的網絡部分

#-------------------------------------------------------------#

from __future__ import print_function

from __future__ import absolute_importimport warnings

import numpy as npfrom keras.models import Model

from keras import layers

from keras.layers import Activation,Dense,Input,BatchNormalization,Conv2D,MaxPooling2D,AveragePooling2D

from keras.layers import GlobalAveragePooling2D,GlobalMaxPooling2D

from keras.engine.topology import get_source_inputs

from keras.utils.layer_utils import convert_all_kernels_in_model

from keras.utils.data_utils import get_file

from keras import backend as K

from keras.applications.imagenet_utils import decode_predictions

from keras.preprocessing import imagedef conv2d_bn(x,filters,num_row,num_col,strides=(1, 1),padding='same',name=None):if name is not None:bn_name = name + '_bn'conv_name = name + '_conv'else:bn_name = Noneconv_name = Nonex = Conv2D(filters, (num_row, num_col),strides=strides,padding=padding,use_bias=False,name=conv_name)(x)x = BatchNormalization(scale=False, name=bn_name)(x)x = Activation('relu', name=name)(x)return xdef InceptionV3(input_shape=[299,299,3],classes=1000):img_input = Input(shape=input_shape)x = conv2d_bn(img_input, 32, 3, 3, strides=(2, 2), padding='valid')x = conv2d_bn(x, 32, 3, 3, padding='valid')x = conv2d_bn(x, 64, 3, 3)x = MaxPooling2D((3, 3), strides=(2, 2))(x)x = conv2d_bn(x, 80, 1, 1, padding='valid')x = conv2d_bn(x, 192, 3, 3, padding='valid')x = MaxPooling2D((3, 3), strides=(2, 2))(x)#--------------------------------## Block1 35x35#--------------------------------## Block1 part1# 35 x 35 x 192 -> 35 x 35 x 256branch1x1 = conv2d_bn(x, 64, 1, 1)branch5x5 = conv2d_bn(x, 48, 1, 1)branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)branch3x3dbl = conv2d_bn(x, 64, 1, 1)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 32, 1, 1)# 64+64+96+32 = 256x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool],axis=3,name='mixed0')# Block1 part2# 35 x 35 x 256 -> 35 x 35 x 288branch1x1 = conv2d_bn(x, 64, 1, 1)branch5x5 = conv2d_bn(x, 48, 1, 1)branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)branch3x3dbl = conv2d_bn(x, 64, 1, 1)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 64, 1, 1)# 64+64+96+64 = 288 x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool],axis=3,name='mixed1')# Block1 part3# 35 x 35 x 288 -> 35 x 35 x 288branch1x1 = conv2d_bn(x, 64, 1, 1)branch5x5 = conv2d_bn(x, 48, 1, 1)branch5x5 = conv2d_bn(branch5x5, 64, 5, 5)branch3x3dbl = conv2d_bn(x, 64, 1, 1)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 64, 1, 1)# 64+64+96+64 = 288 x = layers.concatenate([branch1x1, branch5x5, branch3x3dbl, branch_pool],axis=3,name='mixed2')#--------------------------------## Block2 17x17#--------------------------------## Block2 part1# 35 x 35 x 288 -> 17 x 17 x 768branch3x3 = conv2d_bn(x, 384, 3, 3, strides=(2, 2), padding='valid')branch3x3dbl = conv2d_bn(x, 64, 1, 1)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3)branch3x3dbl = conv2d_bn(branch3x3dbl, 96, 3, 3, strides=(2, 2), padding='valid')branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)x = layers.concatenate([branch3x3, branch3x3dbl, branch_pool], axis=3, name='mixed3')# Block2 part2# 17 x 17 x 768 -> 17 x 17 x 768branch1x1 = conv2d_bn(x, 192, 1, 1)branch7x7 = conv2d_bn(x, 128, 1, 1)branch7x7 = conv2d_bn(branch7x7, 128, 1, 7)branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)branch7x7dbl = conv2d_bn(x, 128, 1, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 1, 7)branch7x7dbl = conv2d_bn(branch7x7dbl, 128, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 192, 1, 1)x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool],axis=3,name='mixed4')# Block2 part3 and part4# 17 x 17 x 768 -> 17 x 17 x 768 -> 17 x 17 x 768for i in range(2):branch1x1 = conv2d_bn(x, 192, 1, 1)branch7x7 = conv2d_bn(x, 160, 1, 1)branch7x7 = conv2d_bn(branch7x7, 160, 1, 7)branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)branch7x7dbl = conv2d_bn(x, 160, 1, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 1, 7)branch7x7dbl = conv2d_bn(branch7x7dbl, 160, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 192, 1, 1)x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool],axis=3,name='mixed' + str(5 + i))# Block2 part5# 17 x 17 x 768 -> 17 x 17 x 768branch1x1 = conv2d_bn(x, 192, 1, 1)branch7x7 = conv2d_bn(x, 192, 1, 1)branch7x7 = conv2d_bn(branch7x7, 192, 1, 7)branch7x7 = conv2d_bn(branch7x7, 192, 7, 1)branch7x7dbl = conv2d_bn(x, 192, 1, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 7, 1)branch7x7dbl = conv2d_bn(branch7x7dbl, 192, 1, 7)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 192, 1, 1)x = layers.concatenate([branch1x1, branch7x7, branch7x7dbl, branch_pool],axis=3,name='mixed7')#--------------------------------## Block3 8x8#--------------------------------## Block3 part1# 17 x 17 x 768 -> 8 x 8 x 1280branch3x3 = conv2d_bn(x, 192, 1, 1)branch3x3 = conv2d_bn(branch3x3, 320, 3, 3,strides=(2, 2), padding='valid')branch7x7x3 = conv2d_bn(x, 192, 1, 1)branch7x7x3 = conv2d_bn(branch7x7x3, 192, 1, 7)branch7x7x3 = conv2d_bn(branch7x7x3, 192, 7, 1)branch7x7x3 = conv2d_bn(branch7x7x3, 192, 3, 3, strides=(2, 2), padding='valid')branch_pool = MaxPooling2D((3, 3), strides=(2, 2))(x)x = layers.concatenate([branch3x3, branch7x7x3, branch_pool], axis=3, name='mixed8')# Block3 part2 part3# 8 x 8 x 1280 -> 8 x 8 x 2048 -> 8 x 8 x 2048for i in range(2):branch1x1 = conv2d_bn(x, 320, 1, 1)branch3x3 = conv2d_bn(x, 384, 1, 1)branch3x3_1 = conv2d_bn(branch3x3, 384, 1, 3)branch3x3_2 = conv2d_bn(branch3x3, 384, 3, 1)branch3x3 = layers.concatenate([branch3x3_1, branch3x3_2], axis=3, name='mixed9_' + str(i))branch3x3dbl = conv2d_bn(x, 448, 1, 1)branch3x3dbl = conv2d_bn(branch3x3dbl, 384, 3, 3)branch3x3dbl_1 = conv2d_bn(branch3x3dbl, 384, 1, 3)branch3x3dbl_2 = conv2d_bn(branch3x3dbl, 384, 3, 1)branch3x3dbl = layers.concatenate([branch3x3dbl_1, branch3x3dbl_2], axis=3)branch_pool = AveragePooling2D((3, 3), strides=(1, 1), padding='same')(x)branch_pool = conv2d_bn(branch_pool, 192, 1, 1)x = layers.concatenate([branch1x1, branch3x3, branch3x3dbl, branch_pool],axis=3,name='mixed' + str(9 + i))# 平均池化后全連接。x = GlobalAveragePooling2D(name='avg_pool')(x)x = Dense(classes, activation='softmax', name='predictions')(x)inputs = img_inputmodel = Model(inputs, x, name='inception_v3')return modeldef preprocess_input(x):x /= 255.x -= 0.5x *= 2.return xif __name__ == '__main__':model = InceptionV3()model.load_weights("inception_v3_weights_tf_dim_ordering_tf_kernels.h5")img_path = 'elephant.jpg'img = image.load_img(img_path, target_size=(299, 299))x = image.img_to_array(img)x = np.expand_dims(x, axis=0)x = preprocess_input(x)preds = model.predict(x)print('Predicted:', decode_predictions(preds))Inception模型優勢:

- 采用了1x1卷積核,性價比高,用很少的計算量既可以增加一層的特征變換和非線性變換。

- 提出Batch Normalization,通過一定的手段,把每層神經元的輸入值分布拉到均值0方差1 的正態分布,使其落入激活函數的敏感區,避免梯度消失,加快收斂。

- 引入Inception module,4個分支結合的結構。

)

有偏見)