
Original article: http://my.oschina.net/lanzp/blog/309078

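Contents

- 1. Development and deployment environment

- 2. Hadoop server configuration (Master node)

- 3. Setting up an Eclipse-based Hadoop 2.x development environment

- 4. Running a Hadoop program and viewing the logs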

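1. Development and deployment environment

Development environment: Win7 (64-bit) + Eclipse (Kepler Service Release 2)

Deployment environment: Ubuntu Server 14.04.1 LTS (64-bit only)

Tools: WinSCP + PuTTY

Hadoop version: 2.5.0

Hadoop Eclipse plugin (for 2.x): http://pan.baidu.com/s/1eQy49sm

Server-side JDK: OpenJDK 7

Download and install all of the tools above yourself.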

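2. Hadoop server configuration (Master node)

I have been working through the Hadoop 2 configuration recently. Hadoop 2 adjusted some of the original framework APIs but remains compatible with the old version (configuration included). I like trying new things, so of course I had to give it a go. There are still few tutorials online for the new version, so here is my own hands-on experience; corrections are welcome :).

I assume Ubuntu Server, OpenJDK and SSH are already installed. If not, install them first (tutorials are easy to find online). Here I only cover passwordless SSH login. First run

```
$ ssh localhost
```

to check whether passwordless login already works. If it does not, you will be prompted for a password. The following commands set it up (search online if you want the details of how key-based login works):

```
$ ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa
$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys
```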

Next, install Hadoop (assuming OpenJDK is installed and its environment variables are set). Download Hadoop 2.5.0 from the Hadoop website; it is a tar.gz package of a little over 100 MB. Extract it after downloading. I put it under /usr/mywind, so the full path of the Hadoop home directory is /usr/mywind/hadoop. Choose whatever path you prefer.

After extracting, open etc/hadoop/hadoop-env.sh under the Hadoop home directory and append the following:

```
# set to the root of your Java installation
export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-amd64

# Assuming your installation directory is /usr/mywind/hadoop
export HADOOP_PREFIX=/usr/mywind/hadoop
```

For convenience, I suggest also adding Hadoop's bin and sbin directories to PATH. I edited Ubuntu's /etc/environment file directly; its contents are:

```
PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/usr/lib/jvm/java-7-openjdk-amd64/bin:/usr/mywind/hadoop/bin:/usr/mywind/hadoop/sbin"
JAVA_HOME="/usr/lib/jvm/java-7-openjdk-amd64"
CLASSPATH=".:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar"
```

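You can also do this by editing your profile; it is a matter of habit. Once the settings above are in place, test the hadoop command on the command line, as in the screenshot below:

If you see the result above, congratulations, Hadoop is installed. Next we set up pseudo-distributed mode (Hadoop can run on a single node in pseudo-distributed mode).

We need to configure four files, all under /usr/mywind/hadoop/etc/hadoop: yarn-site.xml, mapred-site.xml, hdfs-site.xml and core-site.xml. (Note: this release ships no mapred-site.xml by default, only a mapred-site.xml.template file; copy or rename it to mapred-site.xml.) For the new YARN features, see the official site or this article: http://www.ibm.com/developerworks/cn/opensource/os-cn-hadoop-yarn/.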

First, core-site.xml sets the HDFS address and the temporary directory (the default temporary directory is wiped on reboot):

```xml
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://192.168.8.184:9000</value>
        <description>same as fs.default.name</description>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/mywind/tmp</value>
        <description>A base for other temporary directories.</description>
    </property>
</configuration>
```
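All four site files share the same shape: a `<configuration>` root holding `<property>` elements, each with a `<name>` and a `<value>`. As a quick way to double-check what a file actually sets, here is a minimal sketch that reads those pairs with the JDK's own DOM parser. `SiteXmlReader` and `readProperties` are hypothetical names made up for this illustration; this is not Hadoop's `Configuration` class and it ignores features such as variable substitution.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilder;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical helper: reads <property> name/value pairs from Hadoop-style XML. */
final class SiteXmlReader {

    /** Returns name -> value for every property that has both elements. */
    static Map<String, String> readProperties(String xml) {
        Document doc;
        try {
            DocumentBuilder builder = DocumentBuilderFactory.newInstance().newDocumentBuilder();
            doc = builder.parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        } catch (Exception e) {
            throw new IllegalArgumentException("not well-formed configuration XML", e);
        }
        Map<String, String> props = new LinkedHashMap<>();
        NodeList nodes = doc.getElementsByTagName("property");
        for (int i = 0; i < nodes.getLength(); i++) {
            Element property = (Element) nodes.item(i);
            String name = textOf(property, "name");
            String value = textOf(property, "value");
            if (name != null && value != null) {
                props.put(name, value);
            }
        }
        return props;
    }

    /** Trimmed text of the first descendant element with the given tag, or null if absent. */
    private static String textOf(Element parent, String tag) {
        NodeList children = parent.getElementsByTagName(tag);
        return children.getLength() == 0 ? null : children.item(0).getTextContent().trim();
    }

    public static void main(String[] args) {
        String xml = "<configuration>"
                + "<property><name>fs.defaultFS</name><value>hdfs://192.168.8.184:9000</value></property>"
                + "<property><name>hadoop.tmp.dir</name><value>/usr/mywind/tmp</value></property>"
                + "</configuration>";
        Map<String, String> props = readProperties(xml);
        System.out.println("fs.defaultFS   = " + props.get("fs.defaultFS"));
        System.out.println("hadoop.tmp.dir = " + props.get("hadoop.tmp.dir"));
    }
}
```

In practice you would pass in the contents of the real file rather than an inline string; the inline XML just keeps the sketch self-contained.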

Next, hdfs-site.xml sets the replication factor plus a few optional settings such as the NameNode and DataNode directories:

```xml
<configuration>
    <property>
        <name>dfs.namenode.name.dir</name>
        <value>/usr/mywind/name</value>
        <description>same as dfs.name.dir</description>
    </property>
    <property>
        <name>dfs.datanode.data.dir</name>
        <value>/usr/mywind/data</value>
        <description>same as dfs.data.dir</description>
    </property>
    <property>
        <name>dfs.replication</name>
        <value>1</value>
        <description>same as old frame,recommend set the value as the cluster DataNode host numbers!</description>
    </property>
</configuration>
```

Then mapred-site.xml enables the YARN framework:

```xml
<configuration>
    <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>
</configuration>
```

Finally, yarn-site.xml configures the NodeManager:

```xml
<configuration>
    <!-- Site specific YARN configuration properties -->
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
</configuration>
```

Note that older tutorials online may give this value as mapreduce.shuffle, so watch out for that. All the configuration files are now done. Next, format the HDFS filesystem:

```
$ hdfs namenode -format
```

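Then start the HDFS daemons (NameNode and DataNode) with start-dfs.sh, followed by the YARN daemons (ResourceManager and NodeManager) with start-yarn.sh:

```
$ start-dfs.sh
$ start-yarn.sh
```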

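Then create the HDFS directories:

```
$ hdfs dfs -mkdir /user
$ hdfs dfs -mkdir /user/a01513
```

Note that a01513 is my user name on Ubuntu. It is best to keep this directory name the same as the system user name; a mismatch reportedly causes permission problems. I once tried a different name and got errors, so to save trouble just use the system user name.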

Then put the test input file into the filesystem:

```
$ hdfs dfs -put /usr/mywind/psa input
```

The file contains data in the style of Hadoop's classic weather example:

```
12345679867623119010123456798676231190101234567986762311901012345679867623119010123456+001212345678903456
12345679867623119010123456798676231190101234567986762311901012345679867623119010123456+011212345678903456
12345679867623119010123456798676231190101234567986762311901012345679867623119010123456+021212345678903456
12345679867623119010123456798676231190101234567986762311901012345679867623119010123456+003212345678903456
12345679867623119020123456798676231190101234567986762311901012345679867623119010123456+004212345678903456
12345679867623119020123456798676231190101234567986762311901012345679867623119010123456+010212345678903456
12345679867623119020123456798676231190101234567986762311901012345679867623119010123456+011212345678903456
12345679867623119050123456798676231190101234567986762311901012345679867623119010123456+041212345678903456
12345679867623119050123456798676231190101234567986762311901012345679867623119010123456+008212345678903456
```

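After copying the file to HDFS, we can view the files and some status information in a browser:

http://192.168.8.184:50070/

Use the actual IP address of your own Hadoop server here.

All the Hadoop back-end services and the test data are now ready, so it is time to start writing our M/R program. Before writing it, though, we need to set up the development environment.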

3、基于Eclipse的Hadoop2.x開發環境配置

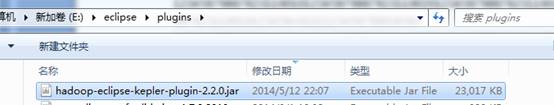

關于JDK及ECLIPSE的安裝我就不再介紹了,相信能玩Hadoop的人對這種配置都已經再熟悉不過了,如果實在不懂建議到谷歌百度去搜索一下教程。假設你已經把Hadoop的Eclipse插件下載下來了,然后解壓把jar文件放到Eclipse的plugins文件夾里面:

重啟Eclipse即可。

然后我們再安裝Hadoop到Win7下,在這不再詳細說明,跟安裝JDK大同小異,在這個例子中我安裝到了E:\hadoop。

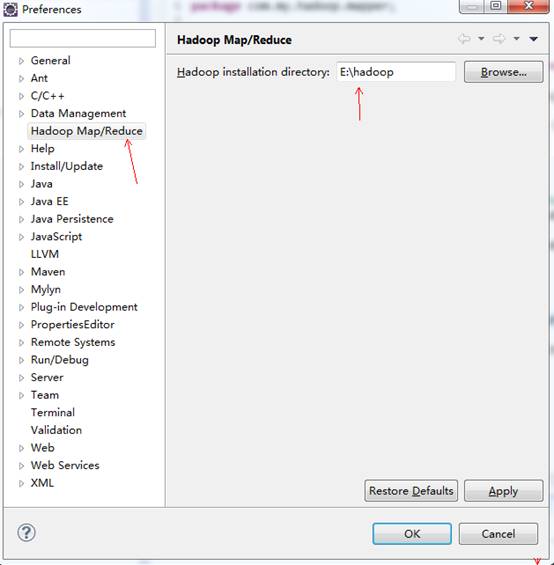

啟動Eclipse,點擊菜單欄的【Windows/窗口】→【Preferences/首選項】→【Hadoop Map/Reduce】,把Hadoop Installation Directory設置成開發機上的Hadoop主目錄:

點擊OK。

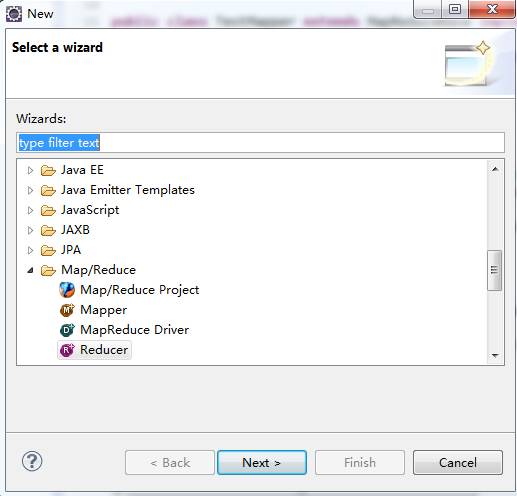

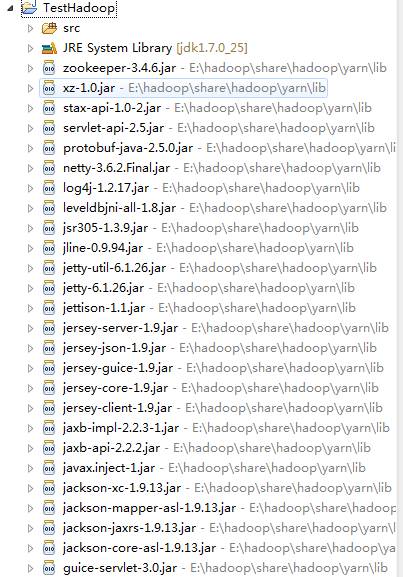

開發環境配置完成,下面我們可以新建一個測試Hadoop項目,右鍵【NEW/新建】→【Others、其他】,選擇Map/Reduce Project

?

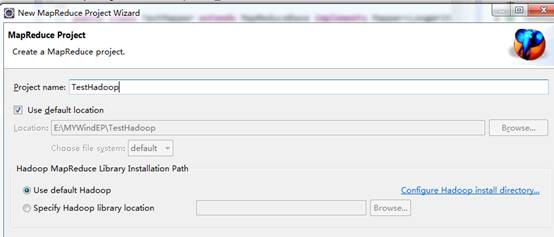

輸入項目名稱點擊【Finish/完成】:

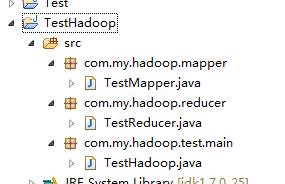

創建完成后可以看到如下目錄:

然后在SRC下建立下面包及類:

以下是代碼內容:

TestMapper.java

```java
package com.my.hadoop.mapper;

import java.io.IOException;

import org.apache.commons.logging.Log;
import org.apache.commons.logging.LogFactory;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.Mapper;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reporter;

public class TestMapper extends MapReduceBase implements Mapper<LongWritable, Text, Text, IntWritable> {
    private static final int MISSING = 9999;
    private static final Log LOG = LogFactory.getLog(TestMapper.class);

    public void map(LongWritable key, Text value, OutputCollector<Text, IntWritable> output, Reporter reporter)
            throws IOException {
        String line = value.toString();
        String year = line.substring(15, 19);
        int airTemperature;
        if (line.charAt(87) == '+') { // parseInt doesn't like leading plus signs
            airTemperature = Integer.parseInt(line.substring(88, 92));
        } else {
            airTemperature = Integer.parseInt(line.substring(87, 92));
        }
        LOG.info("loki:" + airTemperature);
        String quality = line.substring(92, 93);
        LOG.info("loki2:" + quality);
        if (airTemperature != MISSING && quality.matches("[012459]")) {
            LOG.info("loki3:" + quality);
            output.collect(new Text(year), new IntWritable(airTemperature));
        }
    }
}
```
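The mapper treats every input line as a fixed-width record: the year sits at offsets 15 to 19, the signed temperature at 87 to 92, and a one-character quality code at 92. To try that slicing without a cluster, here is a minimal plain-Java sketch of the same field extraction. `RecordFields` and `sampleRecord` are hypothetical names for this illustration only, and the record is synthetic, padded with zeros so that the sign lands at offset 87 where the mapper looks for it.

```java
/** Hypothetical stand-alone version of the field extraction done in TestMapper.map. */
final class RecordFields {
    static final int MISSING = 9999;

    final String year;
    final int airTemperature;
    final String quality;

    RecordFields(String line) {
        year = line.substring(15, 19);
        // Skip a leading '+' before parsing, exactly as the mapper does.
        if (line.charAt(87) == '+') {
            airTemperature = Integer.parseInt(line.substring(88, 92));
        } else {
            airTemperature = Integer.parseInt(line.substring(87, 92));
        }
        quality = line.substring(92, 93);
    }

    /** Same filter as the mapper: drop missing readings and unaccepted quality codes. */
    boolean isValid() {
        return airTemperature != MISSING && quality.matches("[012459]");
    }

    /** Builds a synthetic 93-character record; signedTemp must be 5 characters, e.g. "+0022". */
    static String sampleRecord(String year, String signedTemp, char quality) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < 93; i++) {
            sb.append('0');
        }
        sb.replace(15, 19, year);
        sb.replace(87, 92, signedTemp);
        sb.setCharAt(92, quality);
        return sb.toString();
    }

    public static void main(String[] args) {
        RecordFields f = new RecordFields(sampleRecord("1950", "+0022", '1'));
        System.out.println(f.year + "\t" + f.airTemperature + "\t" + f.isValid());
    }
}
```

Running a few of your own input lines through a helper like this is a cheap way to confirm that the offsets match your data before submitting a job.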

TestReducer.java

```java
package com.my.hadoop.reducer;

import java.io.IOException;
import java.util.Iterator;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reporter;
import org.apache.hadoop.mapred.Reducer;

public class TestReducer extends MapReduceBase implements Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    public void reduce(Text key, Iterator<IntWritable> values, OutputCollector<Text, IntWritable> output, Reporter reporter)
            throws IOException {
        int maxValue = Integer.MIN_VALUE;
        while (values.hasNext()) {
            maxValue = Math.max(maxValue, values.next().get());
        }
        output.collect(key, new IntWritable(maxValue));
    }
}
```
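Between the mapper and the reducer, the framework groups all values emitted under the same key and hands each group to reduce as an iterator. The sketch below imitates that flow in plain Java, assuming the mapper has already produced (year, temperature) pairs. `MaxPerYearSketch`, `groupByKey` and `reduceMax` are hypothetical names, and the in-memory grouping only stands in for Hadoop's real shuffle.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical in-memory imitation of the group-then-reduce flow. */
final class MaxPerYearSketch {

    /** Stand-in for the shuffle: collects every value emitted under the same key. */
    static Map<String, List<Integer>> groupByKey(String[] keys, int[] values) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (int i = 0; i < keys.length; i++) {
            List<Integer> bucket = grouped.get(keys[i]);
            if (bucket == null) {
                bucket = new ArrayList<>();
                grouped.put(keys[i], bucket);
            }
            bucket.add(values[i]);
        }
        return grouped;
    }

    /** Same loop as TestReducer.reduce, minus the Hadoop types. */
    static int reduceMax(Iterator<Integer> values) {
        int maxValue = Integer.MIN_VALUE;
        while (values.hasNext()) {
            maxValue = Math.max(maxValue, values.next());
        }
        return maxValue;
    }

    public static void main(String[] args) {
        // Made-up mapper output: one (year, temperature) pair per index.
        String[] years = {"1949", "1950", "1949", "1950"};
        int[] temps = {111, 0, 78, 22};
        for (Map.Entry<String, List<Integer>> e : groupByKey(years, temps).entrySet()) {
            System.out.println(e.getKey() + "\t" + reduceMax(e.getValue().iterator()));
        }
    }
}
```

Each printed line has the same key, tab, value layout as the job's output file.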

TestHadoop.java

```java
package com.my.hadoop.test.main;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileInputFormat;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobClient;
import org.apache.hadoop.mapred.JobConf;

import com.my.hadoop.mapper.TestMapper;
import com.my.hadoop.reducer.TestReducer;

public class TestHadoop {

    public static void main(String[] args) throws Exception {
        if (args.length != 2) {
            System.err.println("Usage: MaxTemperature <input path> <output path>");
            System.exit(-1);
        }
        JobConf job = new JobConf(TestHadoop.class);
        job.setJobName("Max temperature");
        FileInputFormat.addInputPath(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        job.setMapperClass(TestMapper.class);
        job.setReducerClass(TestReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        JobClient.runJob(job);
    }
}
```

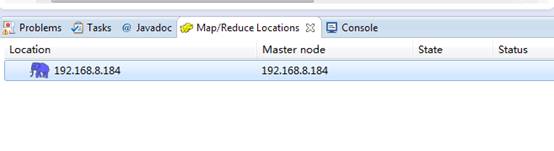

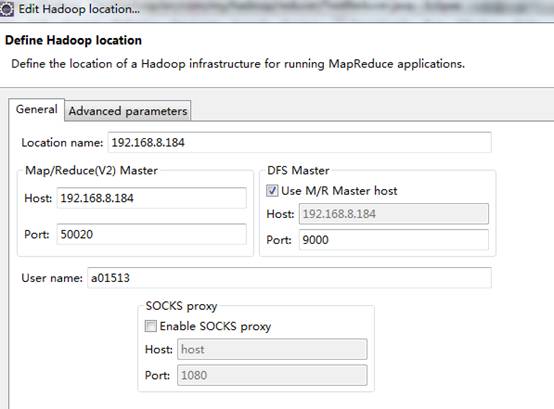

為了方便對于Hadoop的HDFS文件系統操作,我們可以在Eclipse下面的Map/Reduce Locations窗口與Hadoop建立連接,直接右鍵新建Hadoop連接即可:

連接配置如下:

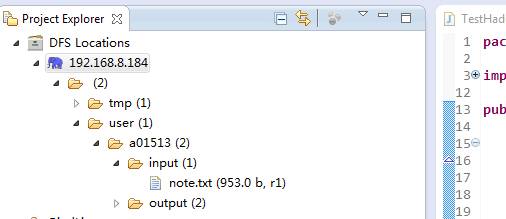

然后點擊完成即可,新建完成后,我們可以在左側目錄中看到HDFS的文件系統目錄:

這里不僅可以顯示目錄結構,還可以對文件及目錄進行刪除、新增等操作,非常方便。

?

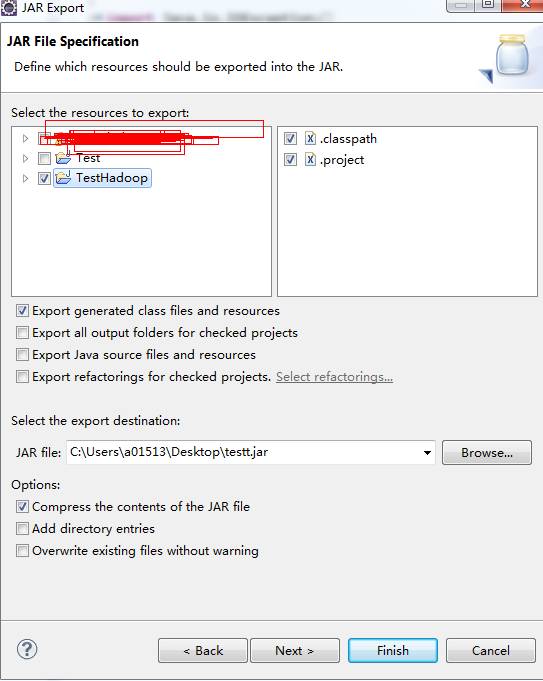

當上面的工作都做好之后,就可以把這個項目導出來了(導成jar文件放到Hadoop服務器上運行):

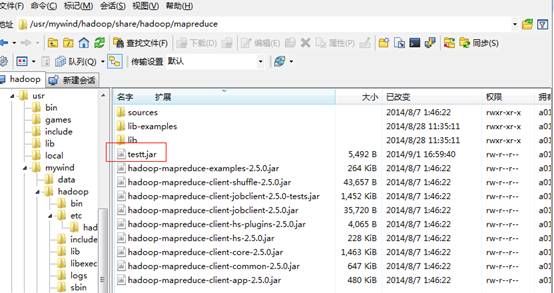

點擊完成,然后把這個testt.jar文件上傳到Hadoop服務器(192.168.8.184)上,目錄(其實可以放到其他目錄,你自己喜歡)是:

| 1 | /usr/mywind/hadoop/share/hadoop/mapreduce |

?

如下圖:

?

4. Running a Hadoop program and viewing the logs

With the preparation done, running our own Hadoop program is simple:

```
$ hadoop jar /usr/mywind/hadoop/share/hadoop/mapreduce/testt.jar com.my.hadoop.test.main.TestHadoop input output
```

Note that the output directory must not already exist. Running the job once creates an output directory in HDFS, so before a second run either delete it ($ hdfs dfs -rm -r /user/a01513/output) or change output in the command to another name such as output1 or output2.

If you see output like the following, the run succeeded:

```
a01513@hadoop:~$ hadoop jar /usr/mywind/hadoop/share/hadoop/mapreduce/testt.jar com.my.hadoop.test.main.TestHadoop input output
14/09/02 11:14:03 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
14/09/02 11:14:04 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
14/09/02 11:14:04 WARN mapreduce.JobSubmitter: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.
14/09/02 11:14:04 INFO mapred.FileInputFormat: Total input paths to process : 1
14/09/02 11:14:04 INFO mapreduce.JobSubmitter: number of splits:2
14/09/02 11:14:05 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1409386620927_0015
14/09/02 11:14:05 INFO impl.YarnClientImpl: Submitted application application_1409386620927_0015
14/09/02 11:14:05 INFO mapreduce.Job: The url to track the job: http://hadoop:8088/proxy/application_1409386620927_0015/
14/09/02 11:14:05 INFO mapreduce.Job: Running job: job_1409386620927_0015
14/09/02 11:14:12 INFO mapreduce.Job: Job job_1409386620927_0015 running in uber mode : false
14/09/02 11:14:12 INFO mapreduce.Job:  map 0% reduce 0%
14/09/02 11:14:21 INFO mapreduce.Job:  map 100% reduce 0%
14/09/02 11:14:28 INFO mapreduce.Job:  map 100% reduce 100%
14/09/02 11:14:28 INFO mapreduce.Job: Job job_1409386620927_0015 completed successfully
14/09/02 11:14:29 INFO mapreduce.Job: Counters: 49
        File System Counters
                FILE: Number of bytes read=105
                FILE: Number of bytes written=289816
                FILE: Number of read operations=0
                FILE: Number of large read operations=0
                FILE: Number of write operations=0
                HDFS: Number of bytes read=1638
                HDFS: Number of bytes written=10
                HDFS: Number of read operations=9
                HDFS: Number of large read operations=0
                HDFS: Number of write operations=2
        Job Counters
                Launched map tasks=2
                Launched reduce tasks=1
                Data-local map tasks=2
                Total time spent by all maps in occupied slots (ms)=14817
                Total time spent by all reduces in occupied slots (ms)=4500
                Total time spent by all map tasks (ms)=14817
                Total time spent by all reduce tasks (ms)=4500
                Total vcore-seconds taken by all map tasks=14817
                Total vcore-seconds taken by all reduce tasks=4500
                Total megabyte-seconds taken by all map tasks=15172608
                Total megabyte-seconds taken by all reduce tasks=4608000
        Map-Reduce Framework
                Map input records=9
                Map output records=9
                Map output bytes=81
                Map output materialized bytes=111
                Input split bytes=208
                Combine input records=0
                Combine output records=0
                Reduce input groups=1
                Reduce shuffle bytes=111
                Reduce input records=9
                Reduce output records=1
                Spilled Records=18
                Shuffled Maps =2
                Failed Shuffles=0
                Merged Map outputs=2
                GC time elapsed (ms)=115
                CPU time spent (ms)=1990
                Physical memory (bytes) snapshot=655314944
                Virtual memory (bytes) snapshot=2480295936
                Total committed heap usage (bytes)=466616320
        Shuffle Errors
                BAD_ID=0
                CONNECTION=0
                IO_ERROR=0
                WRONG_LENGTH=0
                WRONG_MAP=0
                WRONG_REDUCE=0
        File Input Format Counters
                Bytes Read=1430
        File Output Format Counters
                Bytes Written=10
a01513@hadoop:~$
```
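The counter section at the end of this output is a list of name=value lines, and a few of them make a quick sanity check. For instance, a Map output records value of 0 would mean the mapper filtered out every record. Here is a small sketch for pulling those lines out of saved console output. `CounterLines` is a hypothetical helper written for this post and simply matches on the text; it does not use Hadoop's own counter API.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: extracts "name=value" counter lines from saved job console output. */
final class CounterLines {

    /** Keeps lines that end in "=<digits>"; everything else (log lines, group titles) is ignored. */
    static Map<String, Long> parse(String consoleOutput) {
        Map<String, Long> counters = new LinkedHashMap<>();
        for (String raw : consoleOutput.split("\\r?\\n")) {
            String line = raw.trim();
            int eq = line.lastIndexOf('=');
            if (eq <= 0 || eq == line.length() - 1) {
                continue;
            }
            String value = line.substring(eq + 1);
            if (!value.matches("\\d+")) {
                continue;
            }
            counters.put(line.substring(0, eq).trim(), Long.parseLong(value));
        }
        return counters;
    }

    public static void main(String[] args) {
        // A few lines copied from the job output shown above.
        String sample = "        Map-Reduce Framework\n"
                + "                Map input records=9\n"
                + "                Map output records=9\n"
                + "                Reduce output records=1\n";
        Map<String, Long> counters = parse(sample);
        if (counters.get("Map output records") == 0L) {
            System.out.println("The mapper emitted nothing; check the parsing offsets and the filter.");
        } else {
            System.out.println("Map output records: " + counters.get("Map output records"));
        }
    }
}
```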

?

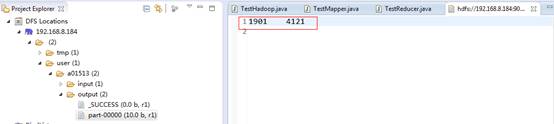

我們可以到Eclipse查看輸出的結果:

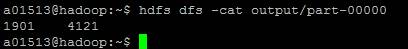

或者用命令行查看:

????????

| 1 2 | ??$?hdfs?dfs?-cat?output/part-00000 |

?

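If the result turns out to be empty, look in the log directory for the corresponding LOG.info output. The logs are under /usr/mywind/hadoop/logs/userlogs.

That's it. I don't much like typing, so this is the whole process. Feedback and corrections are welcome.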
