@浙大疏錦行

DAY 40 訓練和測試的規范寫法

知識點回顧:

1.? 彩色和灰度圖片測試和訓練的規范寫法:封裝在函數中

2.? 展平操作:除第一個維度batchsize外全部展平

3.? dropout操作:訓練階段隨機丟棄神經元,測試階段eval模式關閉dropout

作業:仔細學習下測試和訓練代碼的邏輯,這是基礎,這個代碼框架后續會一直沿用,后續的重點慢慢就是轉向模型定義階段了。

# 先繼續之前的代碼

import torch

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import DataLoader , Dataset # DataLoader 是 PyTorch 中用于加載數據的工具

from torchvision import datasets, transforms # torchvision 是一個用于計算機視覺的庫,datasets 和 transforms 是其中的模塊

import matplotlib.pyplot as plt

import warnings

# 忽略警告信息

warnings.filterwarnings("ignore")

# 設置隨機種子,確保結果可復現

torch.manual_seed(42)

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(f"使用設備: {device}")

# 1. 數據預處理

transform = transforms.Compose([transforms.ToTensor(), # 轉換為張量并歸一化到[0,1]transforms.Normalize((0.1307,), (0.3081,)) # MNIST數據集的均值和標準差

])# 2. 加載MNIST數據集

train_dataset = datasets.MNIST(root='./data',train=True,download=True,transform=transform

)test_dataset = datasets.MNIST(root='./data',train=False,transform=transform

)# 3. 創建數據加載器

batch_size = 64 # 每批處理64個樣本

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)# 4. 定義模型、損失函數和優化器

class MLP(nn.Module):def __init__(self):super(MLP, self).__init__()self.flatten = nn.Flatten() # 將28x28的圖像展平為784維向量self.layer1 = nn.Linear(784, 128) # 第一層:784個輸入,128個神經元self.relu = nn.ReLU() # 激活函數self.layer2 = nn.Linear(128, 10) # 第二層:128個輸入,10個輸出(對應10個數字類別)def forward(self, x):x = self.flatten(x) # 展平圖像x = self.layer1(x) # 第一層線性變換x = self.relu(x) # 應用ReLU激活函數x = self.layer2(x) # 第二層線性變換,輸出logitsreturn x# 初始化模型

model = MLP()

model = model.to(device) # 將模型移至GPU(如果可用)# from torchsummary import summary # 導入torchsummary庫

# print("\n模型結構信息:")

# summary(model, input_size=(1, 28, 28)) # 輸入尺寸為MNIST圖像尺寸criterion = nn.CrossEntropyLoss() # 交叉熵損失函數,適用于多分類問題

optimizer = optim.Adam(model.parameters(), lr=0.001) # Adam優化器

# 5. 訓練模型(記錄每個 iteration 的損失)

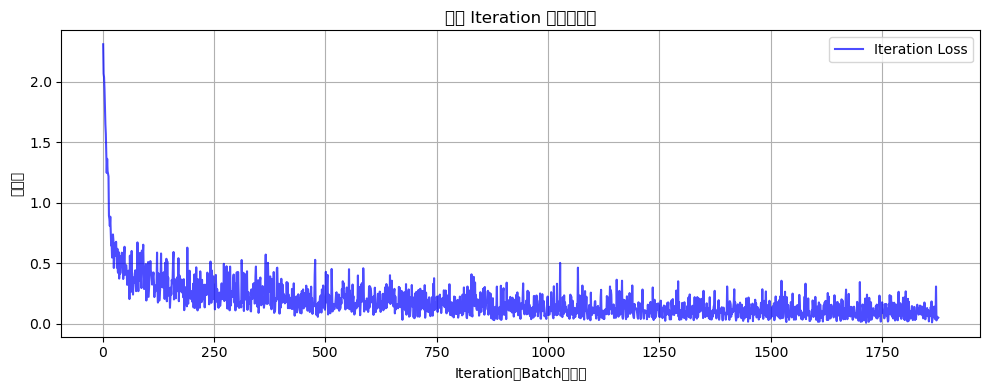

def train(model, train_loader, test_loader, criterion, optimizer, device, epochs):model.train() # 設置為訓練模式# 新增:記錄每個 iteration 的損失all_iter_losses = [] # 存儲所有 batch 的損失iter_indices = [] # 存儲 iteration 序號(從1開始)for epoch in range(epochs):running_loss = 0.0correct = 0total = 0for batch_idx, (data, target) in enumerate(train_loader):# enumerate() 是 Python 內置函數,用于遍歷可迭代對象(如列表、元組)并同時獲取索引和值。# batch_idx:當前批次的索引(從 0 開始)# (data, target):當前批次的樣本數據和對應的標簽,是一個元組,這是因為dataloader內置的getitem方法返回的是一個元組,包含數據和標簽。# 只需要記住這種固定寫法即可data, target = data.to(device), target.to(device) # 移至GPU(如果可用)optimizer.zero_grad() # 梯度清零output = model(data) # 前向傳播loss = criterion(output, target) # 計算損失loss.backward() # 反向傳播optimizer.step() # 更新參數# 記錄當前 iteration 的損失(注意:這里直接使用單 batch 損失,而非累加平均)iter_loss = loss.item()all_iter_losses.append(iter_loss)iter_indices.append(epoch * len(train_loader) + batch_idx + 1) # iteration 序號從1開始# 統計準確率和損失running_loss += loss.item() #將loss轉化為標量值并且累加到running_loss中,計算總損失_, predicted = output.max(1) # output:是模型的輸出(logits),形狀為 [batch_size, 10](MNIST 有 10 個類別)# 獲取預測結果,max(1) 返回每行(即每個樣本)的最大值和對應的索引,這里我們只需要索引total += target.size(0) # target.size(0) 返回當前批次的樣本數量,即 batch_size,累加所有批次的樣本數,最終等于訓練集的總樣本數correct += predicted.eq(target).sum().item() # 返回一個布爾張量,表示預測是否正確,sum() 計算正確預測的數量,item() 將結果轉換為 Python 數字# 每100個批次打印一次訓練信息(可選:同時打印單 batch 損失)if (batch_idx + 1) % 100 == 0:print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} 'f'| 單Batch損失: {iter_loss:.4f} | 累計平均損失: {running_loss/(batch_idx+1):.4f}')# 測試、打印 epoch 結果epoch_train_loss = running_loss / len(train_loader)epoch_train_acc = 100. * correct / totalepoch_test_loss, epoch_test_acc = test(model, test_loader, criterion, device)print(f'Epoch {epoch+1}/{epochs} 完成 | 訓練準確率: {epoch_train_acc:.2f}% | 測試準確率: {epoch_test_acc:.2f}%')# 繪制所有 iteration 的損失曲線plot_iter_losses(all_iter_losses, iter_indices)# 保留原 epoch 級曲線(可選)# plot_metrics(train_losses, test_losses, train_accuracies, test_accuracies, epochs)return epoch_test_acc # 返回最終測試準確率

# 6. 測試模型(不變)

def test(model, test_loader, criterion, device):model.eval() # 設置為評估模式test_loss = 0correct = 0total = 0with torch.no_grad(): # 不計算梯度,節省內存和計算資源for data, target in test_loader:data, target = data.to(device), target.to(device)output = model(data)test_loss += criterion(output, target).item()_, predicted = output.max(1)total += target.size(0)correct += predicted.eq(target).sum().item()avg_loss = test_loss / len(test_loader)accuracy = 100. * correct / totalreturn avg_loss, accuracy # 返回損失和準確率

# 7. 繪制每個 iteration 的損失曲線

def plot_iter_losses(losses, indices):plt.figure(figsize=(10, 4))plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')plt.xlabel('Iteration(Batch序號)')plt.ylabel('損失值')plt.title('每個 Iteration 的訓練損失')plt.legend()plt.grid(True)plt.tight_layout()plt.show()

# 8. 執行訓練和測試(設置 epochs=2 驗證效果)

epochs = 2

print("開始訓練模型...")

final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, device, epochs)

print(f"訓練完成!最終測試準確率: {final_accuracy:.2f}%")

?

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

import matplotlib.pyplot as plt

import numpy as np# 設置中文字體支持

plt.rcParams["font.family"] = ["SimHei"]

plt.rcParams['axes.unicode_minus'] = False # 解決負號顯示問題# 1. 數據預處理

transform = transforms.Compose([transforms.ToTensor(), # 轉換為張量transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)) # 標準化處理

])# 2. 加載CIFAR-10數據集

train_dataset = datasets.CIFAR10(root='./data',train=True,download=True,transform=transform

)test_dataset = datasets.CIFAR10(root='./data',train=False,transform=transform

)# 3. 創建數據加載器

batch_size = 64

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)# 4. 定義MLP模型(適應CIFAR-10的輸入尺寸)

class MLP(nn.Module):def __init__(self):super(MLP, self).__init__()self.flatten = nn.Flatten() # 將3x32x32的圖像展平為3072維向量self.layer1 = nn.Linear(3072, 512) # 第一層:3072個輸入,512個神經元self.relu1 = nn.ReLU()self.dropout1 = nn.Dropout(0.2) # 添加Dropout防止過擬合self.layer2 = nn.Linear(512, 256) # 第二層:512個輸入,256個神經元self.relu2 = nn.ReLU()self.dropout2 = nn.Dropout(0.2)self.layer3 = nn.Linear(256, 10) # 輸出層:10個類別def forward(self, x):# 第一步:將輸入圖像展平為一維向量x = self.flatten(x) # 輸入尺寸: [batch_size, 3, 32, 32] → [batch_size, 3072]# 第一層全連接 + 激活 + Dropoutx = self.layer1(x) # 線性變換: [batch_size, 3072] → [batch_size, 512]x = self.relu1(x) # 應用ReLU激活函數x = self.dropout1(x) # 訓練時隨機丟棄部分神經元輸出# 第二層全連接 + 激活 + Dropoutx = self.layer2(x) # 線性變換: [batch_size, 512] → [batch_size, 256]x = self.relu2(x) # 應用ReLU激活函數x = self.dropout2(x) # 訓練時隨機丟棄部分神經元輸出# 第三層(輸出層)全連接x = self.layer3(x) # 線性變換: [batch_size, 256] → [batch_size, 10]return x # 返回未經過Softmax的logits# 檢查GPU是否可用

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")# 初始化模型

model = MLP()

model = model.to(device) # 將模型移至GPU(如果可用)criterion = nn.CrossEntropyLoss() # 交叉熵損失函數

optimizer = optim.Adam(model.parameters(), lr=0.001) # Adam優化器# 5. 訓練模型(記錄每個 iteration 的損失)

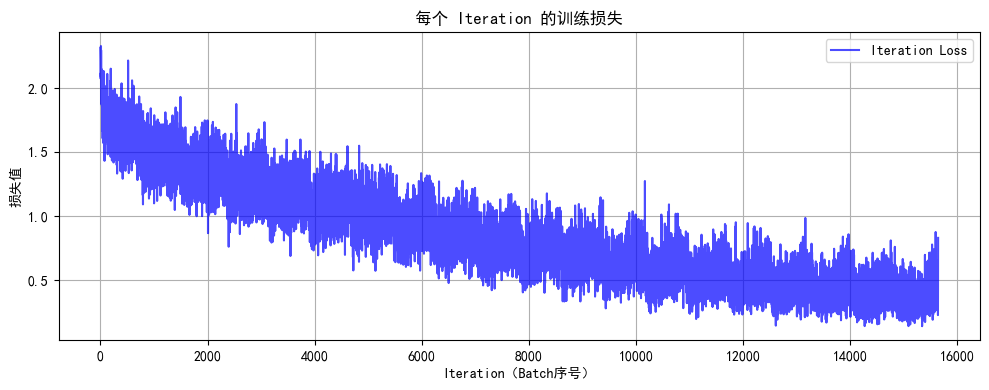

def train(model, train_loader, test_loader, criterion, optimizer, device, epochs):model.train() # 設置為訓練模式# 記錄每個 iteration 的損失all_iter_losses = [] # 存儲所有 batch 的損失iter_indices = [] # 存儲 iteration 序號for epoch in range(epochs):running_loss = 0.0correct = 0total = 0for batch_idx, (data, target) in enumerate(train_loader):data, target = data.to(device), target.to(device) # 移至GPUoptimizer.zero_grad() # 梯度清零output = model(data) # 前向傳播loss = criterion(output, target) # 計算損失loss.backward() # 反向傳播optimizer.step() # 更新參數# 記錄當前 iteration 的損失iter_loss = loss.item()all_iter_losses.append(iter_loss)iter_indices.append(epoch * len(train_loader) + batch_idx + 1)# 統計準確率和損失running_loss += iter_loss_, predicted = output.max(1)total += target.size(0)correct += predicted.eq(target).sum().item()# 每100個批次打印一次訓練信息if (batch_idx + 1) % 100 == 0:print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} 'f'| 單Batch損失: {iter_loss:.4f} | 累計平均損失: {running_loss/(batch_idx+1):.4f}')# 計算當前epoch的平均訓練損失和準確率epoch_train_loss = running_loss / len(train_loader)epoch_train_acc = 100. * correct / total# 測試階段model.eval() # 設置為評估模式test_loss = 0correct_test = 0total_test = 0with torch.no_grad():for data, target in test_loader:data, target = data.to(device), target.to(device)output = model(data)test_loss += criterion(output, target).item()_, predicted = output.max(1)total_test += target.size(0)correct_test += predicted.eq(target).sum().item()epoch_test_loss = test_loss / len(test_loader)epoch_test_acc = 100. * correct_test / total_testprint(f'Epoch {epoch+1}/{epochs} 完成 | 訓練準確率: {epoch_train_acc:.2f}% | 測試準確率: {epoch_test_acc:.2f}%')# 繪制所有 iteration 的損失曲線plot_iter_losses(all_iter_losses, iter_indices)return epoch_test_acc # 返回最終測試準確率# 6. 繪制每個 iteration 的損失曲線

def plot_iter_losses(losses, indices):plt.figure(figsize=(10, 4))plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')plt.xlabel('Iteration(Batch序號)')plt.ylabel('損失值')plt.title('每個 Iteration 的訓練損失')plt.legend()plt.grid(True)plt.tight_layout()plt.show()# 7. 執行訓練和測試

epochs = 20 # 增加訓練輪次以獲得更好效果

print("開始訓練模型...")

final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, device, epochs)

print(f"訓練完成!最終測試準確率: {final_accuracy:.2f}%")# # 保存模型

# torch.save(model.state_dict(), 'cifar10_mlp_model.pth')

# # print("模型已保存為: cifar10_mlp_model.pth")

:網絡組成與三種交換方式全解析)

![[總結]前端性能指標分析、性能監控與分析、Lighthouse性能評分分析](http://pic.xiahunao.cn/[總結]前端性能指標分析、性能監控與分析、Lighthouse性能評分分析)

:RAG下的ETL快速上手)

)