1 Install the required dependencies

yum install -y gcc gcc-c++ make m4 libtool boost-devel zlib-devel openssl-devel libcurl-devel

- yum: short for Yellowdog Updater, Modified; the package manager on RPM-based Linux distributions such as CentOS.

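- gcc: originally the GNU C Compiler, a C compiler only. As the project absorbed compilers for other languages, it was renamed the GNU Compiler Collection (GCC).

- gcc-c++: the C++ compiler in the GCC collection.

- make: a build automation tool (not a compiler) that reads a Makefile and runs the compile and link commands needed to bring the targets up to date.

- m4: a macro processor. It copies input to output while expanding macros, and supports including files, running commands, integer arithmetic, text manipulation, and loops. It can act as a front end to a compiler or be used on its own.

- libtool: a generic library support script. It handles library dependencies when building large software, offers a uniform interface, and hides differences in library naming across platforms. Installing libtool also pulls in automake and autoconf, which it depends on.

- autoconf: a tool that generates the configure script, which automatically configures the source code for the build system. The generated configure script runs independently of autoconf.

- automake: generates Makefile.in from the Makefile.am in the source tree, where macros and targets are defined. The configure script then turns Makefile.in into the Makefile, and make compiles the code.

- boost-devel, zlib-devel, openssl-devel, libcurl-devel: libraries needed at compile time.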
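Before moving on, it can help to confirm that the build tools are actually on the PATH. The following is a minimal sketch, not part of the original procedure; the helper name `missing_tools` is made up for illustration, and it assumes the gcc-c++ package provides the `g++` command.

```shell
#!/bin/sh
# Print every command from the argument list that is not found on PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Commands expected after the yum install above (g++ comes from gcc-c++).
missing=$(missing_tools gcc g++ make m4 libtool)
if [ -n "$missing" ]; then
    # $missing is left unquoted on purpose so the names print on one line.
    echo "missing build tools:" $missing
else
    echo "all build tools found"
fi
```

The -devel packages install headers and libraries rather than commands, so they are not covered by this check; `rpm -q boost-devel` and similar queries would be the way to verify those.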

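2 Download the source code

Tair depends on the tbsys and tbnet libraries, both of which are built and installed from tb-common-utils.

Install git:

yum install -y git

Download the tb-common-utils source code from Gitee:

git clone https://gitee.com/abc0317/tb-common-utils.git

Enter the tb-common-utils directory:

cd tb-common-utils/

Make the build script executable:

chmod u+x build.sh

Set the TBLIB_ROOT environment variable to the directory where the libraries should be installed:

export TBLIB_ROOT=/root/tairlib

From the source directory, run build.sh to build and install:

sh build.sh

Download the Tair source code from Gitee: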

cd ~

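git clone https://gitee.com/mirrors/Tair.git

3 Build and install Tair

cd Tair

Run the bootstrap script to prepare the build:

./bootstrap.sh

Check the environment and generate the Makefile (the default install location is ~/tair_bin; pass --prefix=<target directory> to change it):

./configure

Compile and install into the target directory:

make -j && make install

3 Configure Tair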

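This setup uses the MDB in-memory engine with a minimal configuration: a Tair cluster of one ConfigServer and one DataServer.

The MDB engine uses shared memory by default, so first check, and if necessary set, the size of the system's tmpfs. tmpfs is a memory-backed virtual file system on Linux/Unix.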

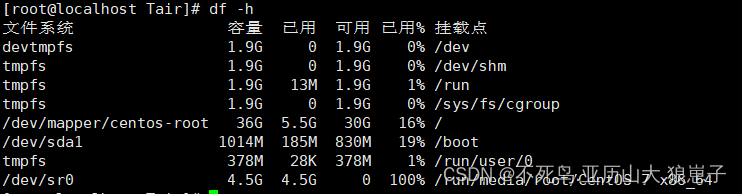

df -h
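The same check can be scripted. This is only a sketch: the helper name `mount_size_mb` is made up, and `required_mb=512` is an assumed threshold chosen to match the `slab_mem_size` used in this walkthrough; adjust it to your own setting.

```shell
#!/bin/sh
# Read `df -m` output on stdin and print the size (in MB) of the file system
# mounted at the given mount point (matched against the last column).
mount_size_mb() {
    awk -v mp="$1" 'NR > 1 && $NF == mp { print $2; exit }'
}

# Assumed requirement in MB; not a value mandated by Tair.
required_mb=512

# -P keeps every file system on a single line so the columns stay aligned.
shm_mb=$(df -P -m 2>/dev/null | mount_size_mb /dev/shm)
if [ -z "$shm_mb" ]; then
    echo "/dev/shm is not mounted"
elif [ "$shm_mb" -lt "$required_mb" ]; then
    echo "/dev/shm has ${shm_mb} MB, less than the required ${required_mb} MB"
else
    echo "/dev/shm has ${shm_mb} MB"
fi
```

Matching on the mount point instead of the word tmpfs matters on systems that mount several tmpfs file systems (for example /run), where a plain grep for tmpfs returns more than one row.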

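/dev/shm lives in memory rather than on disk, so it is very fast. Here we set its size to 1G.

Edit the following line in /etc/fstab; if it is not there, append it at the end of the file:

vim /etc/fstab

Change

tmpfs /dev/shm tmpfs defaults 0 0

to

tmpfs /dev/shm tmpfs defaults,size=1G 0 0

After the change, remount for it to take effect:

mount -o remount /dev/shm

Once it has taken effect, check again with df -h.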
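The manual /etc/fstab edit could also be scripted. The sketch below only previews the result on standard output and never writes to the real file; the function name `with_shm_size` is made up for illustration. Note that it rewrites the whole /dev/shm entry, so any other mount options on that line are dropped.

```shell
#!/bin/sh
# Read fstab content on stdin and print it with the /dev/shm tmpfs entry
# set to the given size; append the entry if none exists.
with_shm_size() {
    awk -v size="$1" '
        $1 !~ /^#/ && $2 == "/dev/shm" && $3 == "tmpfs" {
            print "tmpfs /dev/shm tmpfs defaults,size=" size " 0 0"
            seen = 1
            next
        }
        { print }
        END {
            if (!seen) print "tmpfs /dev/shm tmpfs defaults,size=" size " 0 0"
        }
    '
}

# Preview only: the real /etc/fstab is read but not modified.
if [ -r /etc/fstab ]; then
    with_shm_size 1G < /etc/fstab
fi
```

To apply the change, redirect the output to a temporary file, review it, and only then copy it over /etc/fstab, keeping a backup of the original.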

Switch to the Tair installation directory and rename the default configuration files so they can be edited:

cd /root/tair_bin/etc

mv configserver.conf.default configserver.conf

mv group.conf.default group.conf

mv dataserver.conf.default dataserver.conf

4 Edit configserver.conf

[public]

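# Addresses and ports of the master and slave ConfigServer: the first line is the master, the second the slave. This minimal cluster configures a single ConfigServer

#config_server=192.168.1.1:5198

#config_server=192.168.1.2:5198

config_server=192.168.222.154:5198

[configserver]

# Port the ConfigServer listens on; must match the entry above and the one in dataserver.conf

port=5198

# Log file location

log_file=logs/config.log

# PID file location

pid_file=logs/config.pid

# Default log level

log_level=warn

# Location of group.conf

group_file=etc/group.conf

# Where runtime state is persisted

data_dir=data/data

# Network interface to use; set it to the name of your own interface (the default is eth0)

# If it is not an existing interface, startup fails with 'local ip:0.0.0.0, check your `dev_name', `bond0' is preferred if present'

dev_name=ens33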
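Since a wrong dev_name prevents startup, it may be worth checking the value before launching anything. The following is a rough sketch, not part of Tair: `conf_get` is a made-up helper, the default path assumes the /root/tair_bin install location used in this walkthrough, and the check relies on Linux exposing interfaces under /sys/class/net.

```shell
#!/bin/sh
# Print the value of the first `key=value` line for the given key in an
# INI-style file, ignoring comment lines and spaces around `=`.
conf_get() {
    awk -F= -v key="$1" '
        /^[ \t]*#/ { next }
        index($0, "=") == 0 { next }
        {
            k = $1
            gsub(/[ \t]/, "", k)
            if (k == key) {
                v = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", v)
                print v
                exit
            }
        }
    ' "$2"
}

# Configuration file to check; the default path is an assumption.
conf=${1:-/root/tair_bin/etc/configserver.conf}
if [ -r "$conf" ]; then
    dev=$(conf_get dev_name "$conf")
    if [ -n "$dev" ] && [ -d "/sys/class/net/$dev" ]; then
        echo "interface $dev exists"
    else
        echo "interface '$dev' not found; fix dev_name in $conf"
    fi
fi
```

Saved under a name of your choice, the script can be pointed at dataserver.conf as well by passing its path as the first argument, since that file carries the same dev_name setting.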

5 Edit group.conf

#group name

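# Name of the cluster group. Tair lets one set of ConfigServers manage several clusters (not recommended, since the ConfigServer can become a traffic bottleneck)

#[group_1]

[group_test]

# data move is 1 means when some data server is down, the migration will start.

# default value is 0

# Whether data migration is allowed. With two copies of the data, set this to 1 (if the internal logic detects two copies, it forces the value to 1 anyway)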

_data_move=0

#_min_data_server_count: when data servers left in a group less than this value, config server will stop serve for this group

#default value is copy count.

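# Overload protection: when fewer DataServer nodes than this are available, the ConfigServer stops detecting failed nodes and rebuilding the routing table automatically (this avoids a cascading failure as nodes go down one by one)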

_min_data_server_count=1

#_plugIns_list=libStaticPlugIn.so

# Table-building algorithm and its parameters; the defaults are usually fine

_build_strategy=1 #1 normal 2 rack

_build_diff_ratio=0.6 #how much difference is allowed between different racks

# diff_ratio = |data_server_count_in_rack1 - data_server_count_in_rack2| / max (data_server_count_in_rack1, data_server_count_in_rack2)

# diff_ratio must be less than _build_diff_ratio

_pos_mask=65535 # 65535 is 0xffff; this is used to generate rack info. 64 bit serverId & _pos_mask is the rack info.

# Number of data copies. Note: once the cluster is initialized, this value cannot be changed

_copy_count=1

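# Number of virtual nodes (buckets); can be tuned when the cluster has many machines

# Note: once the cluster is initialized, this value cannot be changed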

_bucket_number=1023

# accept ds strategy. 1 means accept ds automatically

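# Changes to this file trigger an automatic reload by the ConfigServer. The parameter below controls whether DataServer nodes newly added to this file join the cluster automatically

# _min_data_server_count also matters: if the total number of nodes is below _min_data_server_count, they are not added automatically either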

_accept_strategy=1

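# Whether the failover mechanism is allowed

# It applies to clusters running the LDB engine. When _min_data_server_count is greater than the number of machines in the cluster (which prevents failed machines from being removed automatically)

# and this parameter is 1, the first node that goes down enters failover mode: its backup takes over reads and writes and keeps a recovery log. When the failed node comes back,

# it enters recovery mode automatically and replays the recovery log to catch up. If a second node goes down in the meantime, it is not handled automatically, so once the first failure raises an alert,

# intervene manually as soon as possible. **For failover to work, do_dup_depot in dataserver.conf must be 1**

_allow_failover_server=0

# DataServer node list follows. The "data center A/B" comments do not denote cluster groups

# A cluster group is the entire content of this file; to add another group, copy the whole content and append it to the end of this file

# Note: a node must not appear in two cluster groups! The configuration file does not check this.

# data center A

#_server_list=192.168.1.1:5191

#_server_list=192.168.1.2:5191

#_server_list=192.168.1.3:5191

#_server_list=192.168.1.4:5191

_server_list=192.168.222.154:5191

# data center B

#_server_list=192.168.2.1:5191

#_server_list=192.168.2.2:5191

#_server_list=192.168.2.3:5191

#_server_list=192.168.2.4:5191

#quota info

# Quota information

# With the MDB engine, this sets the memory quota of each Namespace, in bytes

# Tair supports Namespaces in the range 0~65535; keys in different Namespaces are isolated from each other

# "area" in the code is a synonym for Namespace

_areaCapacity_list=0,1124000;

6 Edit dataserver.conf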

#

# tair 2.3 --- tairserver config

#

[public]

# Addresses of the master and slave ConfigServer

#config_server=192.168.1.1:5198

#config_server=192.168.1.2:5198

config_server=192.168.222.154:5198

[tairserver]

#

#storage_engine:

#

# mdb

# ldb

#

# Storage engine to use; MDB and LDB are supported

storage_engine=mdb

local_mode=0

#

#mdb_type:

# mdb

# mdb_shm

#

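# With the MDB engine, choose between ordinary memory and shared memory

mdb_type=mdb_shm

# shm file prefix, located in /dev/shm/; the leading '/' is required

# Name prefix of the MDB instance; usually only changed when several nodes run on one machine

mdb_shm_path=/mdb_shm_inst

# (1<<mdb_inst_shift) would be the instance count

# Number of MDB engine instances; more instances reduce lock contention but add metadata and waste memory

#mdb_inst_shift=3

mdb_inst_shift=0

# (1<<mdb_hash_bucket_shift) would be the overall bucket count of hashtable

# (1<<mdb_hash_bucket_shift) * 8 bytes memory would be allocated as hashtable

# Number of buckets in the hash table inside an MDB instance; 24~27 all work (depending on the instance size)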

mdb_hash_bucket_shift=24

# milliseconds, time of one round of the checking in mdb lasts before having a break

mdb_check_granularity=15

# increase this factor when the check thread of mdb incurs heavy load

# cpu load would be around 1/(1+mdb_check_granularity_factor)

mdb_check_granularity_factor=10

#tairserver listen port

port=5191

supported_admin=0

# Number of IO threads and worker threads at runtime

#process_thread_num=12

process_thread_num=4

#io_thread_num=12

io_thread_num=4

# With two copies of the data, number of IO threads that write to the replica; must be 1

dup_io_thread_num=1

#

#mdb size in MB

#

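# Total size of the memory pool the MDB engine uses to store data. slab_mem_size sets the total pool size and mdb_inst_shift sets the number of instances, so each instance gets slab_mem_size/(1 << mdb_inst_shift) MB. Note that an instance must be larger than 512MB and smaller than 64GB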

#slab_mem_size=4096

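slab_mem_size=512

log_file=logs/server.log

pid_file=logs/server.pid

is_namespace_load=1

is_flowcontrol_load=1

tair_admin_file = etc/admin.conf

# Whether put also deletes already-expired items while it walks the hash-table collision chain

# This adds a little latency to put, but helps limit memory growth when there is a lot of expired data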

put_remove_expired=0

# set same number means to disable the memory merge, like 5-5

mem_merge_hour_range=5-5

# 1ms copy 300 items

mem_merge_move_count=300

log_level=warn

# Set this to your current network interface

# If it is not an existing interface, startup fails with 'local ip:0.0.0.0, check your `dev_name', `bond0' is preferred if present'

dev_name=ens33

ulog_dir=data/ulog

ulog_file_number=3

ulog_file_size=64

check_expired_hour_range=2-4

check_slab_hour_range=5-7

dup_sync=1

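dup_timeout=500

# Whether to use automatic data synchronization between LDB clusters

do_rsync=0

rsync_io_thread_num=1

rsync_task_thread_num=4

rsync_listen=1

# 0 means old version

# 1 means new version

# Must be 1 here

rsync_version=1

# Address of the detailed sync configuration; file:// can also be used to point to a configuration on local disk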

rsync_config_service=http://localhost:8080/hangzhou/group_1

rsync_config_update_interval=60

# much resemble json format

# one local cluster config and one or multi remote cluster config.

# {local:[master_cs_addr,slave_cs_addr,group_name,timeout_ms,queue_limit],remote:[...],remote:[...]}

# rsync_conf={local:[10.0.0.1:5198,10.0.0.2:5198,group_local,2000,1000],remote:[10.0.1.1:5198,10.0.1.2:5198,group_remote,2000,800]}

# if same data can be updated in local and remote cluster, then we need care modify time to

# reserve latest update when do rsync to each other.

rsync_mtime_care=0

# rsync data directory(retry_log/fail_log..)

rsync_data_dir=./data/remote

# max log file size to record failed rsync data, rotate to a new file when over the limit

rsync_fail_log_size=30000000

# when doing retry, size limit of retry log's memory use

rsync_retry_log_mem_size=100000000

# depot duplicate update when one server down

# DataServer-side switch for the failover mechanism; see the notes in group.conf

do_dup_depot=0

dup_depot_dir=./data/dupdepot

# Default flow-control settings; the total_* values are limits for the whole machine

[flow_control]

# default flow control setting

default_net_upper = 30000000

default_net_lower = 15000000

default_ops_upper = 30000

default_ops_lower = 20000

default_total_net_upper = 75000000

default_total_net_lower = 65000000

default_total_ops_upper = 50000

default_total_ops_lower = 40000

[ldb]

#### ldb manager config

## data dir prefix, db path will be data/ldbxx, "xx" means db instance index.

## so if ldb_db_instance_count = 2, then leveldb will init in

## /data/ldb1/ldb/, /data/ldb2/ldb/. We can mount each disk to

## data/ldb1, data/ldb2, so we can init each instance on each disk.

data_dir=data/ldb

## leveldb instance count, buckets will be well-distributed to instances

ldb_db_instance_count=1

## whether load backup version when startup.

## backup version may be created to maintain some db data of a specified version.

ldb_load_backup_version=0

## whether support version strategy.

## if yes, put will do get operation to update existed items's meta info(version .etc),

## get unexist item is expensive for leveldb. set 0 to disable if nobody even care version stuff.

ldb_db_version_care=1

## time range to compact for gc, 1-1 means do no compaction at all

ldb_compact_gc_range = 3-6

## background task check compact interval (s)

ldb_check_compact_interval = 120

## use cache count, 0 means NOT use cache,`ldb_use_cache_count should NOT be larger

## than `ldb_db_instance_count, and better to be a factor of `ldb_db_instance_count.

## each cache mdb's config depends on mdb's config item(mdb_type, slab_mem_size, etc)

ldb_use_cache_count=1

## cache stat can't report configserver, record stat locally, stat file size.

## file will be rotate when file size is over this.

ldb_cache_stat_file_size=20971520

## migrate item batch size one time (3145728 bytes = 3M)

ldb_migrate_batch_size = 3145728

## migrate item batch count.

## real batch migrate items depends on the smaller size/count

ldb_migrate_batch_count = 5000

## comparator_type bitcmp by default

# ldb_comparator_type=numeric

## numeric comparator: special compare method for user_key sorting in order to reducing compact

## parameters for numeric compare. format: [meta][prefix][delimiter][number][suffix]

## skip meta size in compare

# ldb_userkey_skip_meta_size=2

## delimiter between prefix and number

# ldb_userkey_num_delimiter=:

####

## use bloomfilter

ldb_use_bloomfilter=1

## use mmap to speed up random access to files (sstable); may cost much memory

ldb_use_mmap_random_access=0

## how many highest levels to limit compaction

ldb_limit_compact_level_count=0

## limit compaction ratio: allow doing one compaction every ldb_limit_compact_interval

## 0 means limit all compaction

ldb_limit_compact_count_interval=0

## limit compaction time interval

## 0 means limit all compaction

ldb_limit_compact_time_interval=0

## limit compaction time range, start == end means doing limit the whole day.

ldb_limit_compact_time_range=6-1

## limit delete obsolete files when finishing one compaction

ldb_limit_delete_obsolete_file_interval=5

## whether trigger compaction by seek

ldb_do_seek_compaction=0

## whether split mmt when compaction with user-define logic(bucket range, eg)

ldb_do_split_mmt_compaction=0

## do specify compact

## time range 24 hours

ldb_specify_compact_time_range=0-6

ldb_specify_compact_max_threshold=10000

## score threshold default = 1

ldb_specify_compact_score_threshold=1

#### following config effects on FastDump ####

## when ldb_db_instance_count > 1, bucket will be sharded to instance base on config strategy.

## current supported:

## hash : just do integer hash to bucket number then module to instance, instance's balance may be

## not perfect in small buckets set. same bucket will be sharded to same instance

## all the time, so data will be reused even if buckets owned by server changed(maybe cluster has changed),

## map : handle to get better balance among all instances. same bucket may be sharded to different instance based

## on different buckets set(data will be migrated among instances).

ldb_bucket_index_to_instance_strategy=map

## bucket index can be updated. this is useful if the cluster wouldn't change once started

## even server down/up accidently.

ldb_bucket_index_can_update=1

## strategy map will save bucket index statistics into file, this is the file's directory

ldb_bucket_index_file_dir=./data/bindex

## memory usage for memtable sharded by bucket when batch-put(especially for FastDump)

ldb_max_mem_usage_for_memtable=3221225472

######## leveldb config (Warning: you should know what you're doing.)

## one leveldb instance max open files(actually table_cache_ capacity, consider as working set, see `ldb_table_cache_size)

ldb_max_open_files=65535

## whether to return failure when an error occurs during db init/load, and

## if true, reads during compaction will verify checksums

ldb_paranoid_check=0

## memtable size

ldb_write_buffer_size=67108864

## sstable size

ldb_target_file_size=8388608

## max file size in each level. level-n (n > 0): (n - 1) * 10 * ldb_base_level_size

ldb_base_level_size=134217728

## sstable's block size

# ldb_block_size=4096

## sstable cache size (override `ldb_max_open_files)

ldb_table_cache_size=1073741824

##block cache size

ldb_block_cache_size=16777216

## arena used by memtable, arena block size

#ldb_arenablock_size=4096

## key is prefix-compressed period in block,

## this is period length(how many keys will be prefix-compressed period)

# ldb_block_restart_interval=16

## specified compression method (snappy only for now)

# ldb_compression=1

## compact when sstables count in level-0 is over this trigger

ldb_l0_compaction_trigger=1

## whether limit write with l0's filecount, if false

ldb_l0_limit_write_with_count=0

## write will slow down when sstables count in level-0 is over this trigger

## or sstables' filesize in level-0 is over trigger * ldb_write_buffer_size if ldb_l0_limit_write_with_count=0

ldb_l0_slowdown_write_trigger=32

## write will stop(wait until trigger down)

ldb_l0_stop_write_trigger=64

## when write memtable, max level to below maybe

ldb_max_memcompact_level=3

## read verify checksum

ldb_read_verify_checksums=0

## write sync log. (one write will sync log once, expensive)

ldb_write_sync=0

## bits per key when use bloom filter

#ldb_bloomfilter_bits_per_key=10

## filter data base logarithm. filterbasesize=1<<ldb_filter_base_logarithm

#ldb_filter_base_logarithm=12

[extras]

######## RT-related ########

#rt_oplist=1,2

# Threshold of latency beyond which the request would be dumped out.

rt_threshold=8000

# Enable RT Module at startup

rt_auto_enable=0

# How many requests would be subject to RT Module

rt_percent=100

# Interval to reset the latency statistics, by seconds

rt_reset_interval=10

######## HotKey-related ########

hotk_oplist=2

# Sample count

hotk_sample_max=50000

# Reap count

hotk_reap_max=32

# Whether to send client feedback response

hotk_need_feedback=0

# Whether to dump out packets, caches or hot keys

hotk_need_dump=0

# Whether to just Do Hot one round

hotk_one_shot=0

# Whether having hot key depends on: sigma >= (average * hotk_hot_factor)

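hotk_hot_factor=0.8

On CentOS 7, one line (line 55) of the tair.sh startup script in the installation directory needs to be changed:

tmpfs_size=`df -m |grep tmpfs | awk '{print $2}'`

# change that line to the following

tmpfs_size=`df -m |grep /dev/shm | awk '{print $2}'`

7 Start the Tair instances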

Tair must be started with an argument. Running ./tair.sh on its own produces the following:

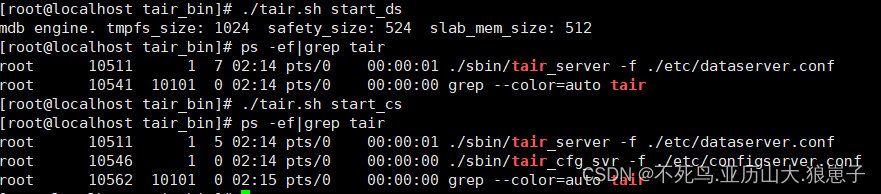

start_ds starts the data node:

./tair.sh start_ds

start_cs starts the config node:

./tair.sh start_cs

Check that the processes are running:

ps -ef | grep tair

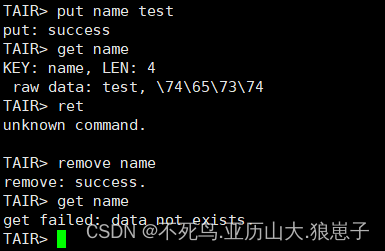
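Besides the process list, one can check that the two configured ports are accepting connections. This is a sketch under stated assumptions: it relies on the `ss` utility from iproute being available, the helper name `is_listening` is made up, and ports 5198 and 5191 are the ConfigServer and DataServer ports set in the configuration files above.

```shell
#!/bin/sh
# Read `ss -lnt` output on stdin; succeed if some socket listens on the port.
is_listening() {
    awk -v port="$1" '
        # Column 4 is "Local Address:Port"; the port is the part after the last colon.
        NR > 1 { n = split($4, a, ":"); if (a[n] == port) found = 1 }
        END { exit !found }
    '
}

for port in 5198 5191; do
    if ss -lnt 2>/dev/null | is_listening "$port"; then
        echo "port $port is listening"
    else
        echo "port $port is NOT listening"
    fi
done
```

If a port is reported as not listening, the log files configured earlier (logs/config.log and logs/server.log) are the first place to look.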

8 Test reads and writes with the bundled client

./sbin/tairclient -c 192.168.222.154:5198 -g group_test

Write some data to test:

9 Stop the Tair service

Stop the data node:

./tair.sh stop_ds

Stop the config node:

./tair.sh stop_cs
