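🍨 This post is a learning-log entry from the 🔗365-Day Deep Learning Training Camp

🍖 Original author: K同學啊

🏡 My environment:

Language: Python 3.10

Editor: Jupyter Lab

Deep learning environment: torch==2.5.1, torchvision==0.20.1

------------------------------Divider---------------------------------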

# Set up the GPU

import torch
import torch.nn as nn
import torch.nn.functional as F
import torchvision

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)

(The dataset is small, so everything runs smoothly on an ordinary laptop.)

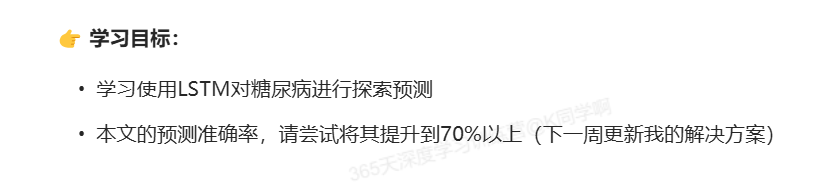

# Import the data

import numpy as np
import pandas as pd
import seaborn as sns
from sklearn.model_selection import train_test_split
import matplotlib.pyplot as plt

plt.rcParams['savefig.dpi'] = 500  # DPI of saved figures
plt.rcParams['figure.dpi'] = 500  # DPI of displayed figures
plt.rcParams['font.sans-serif'] = ['SimHei']  # render Chinese labels correctly

import warnings
warnings.filterwarnings('ignore')

DataFrame = pd.read_excel('./dia.xls')
print(DataFrame.head())
print(DataFrame.shape)

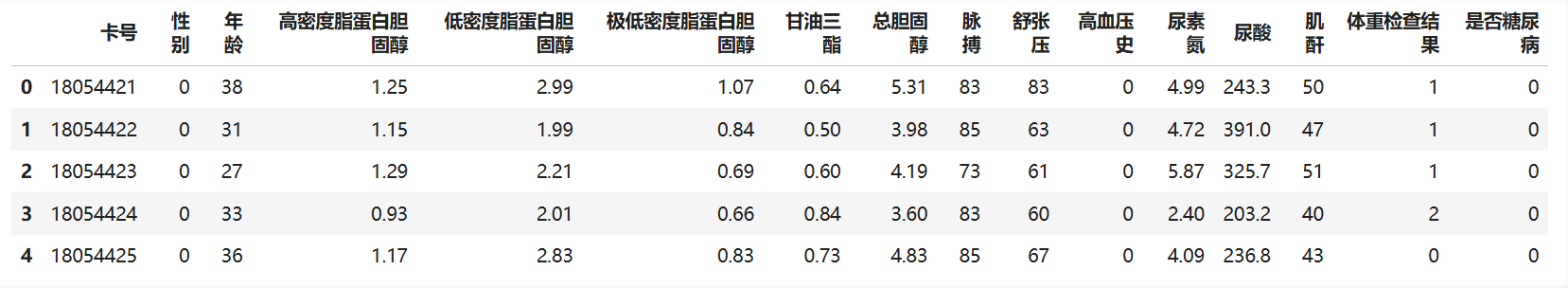

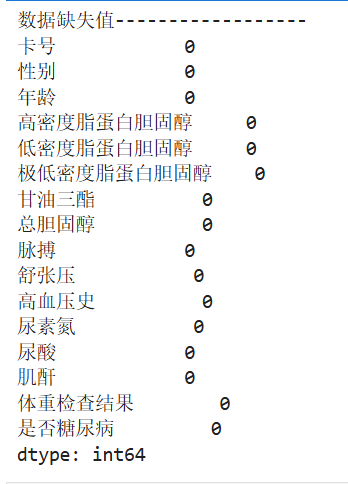

# Check for missing values
print("Missing values------------------")
print(DataFrame.isnull().sum())

# Check for duplicate rows
print("Duplicate rows------------------")
print(f'Number of duplicate rows: {DataFrame.duplicated().sum()}')

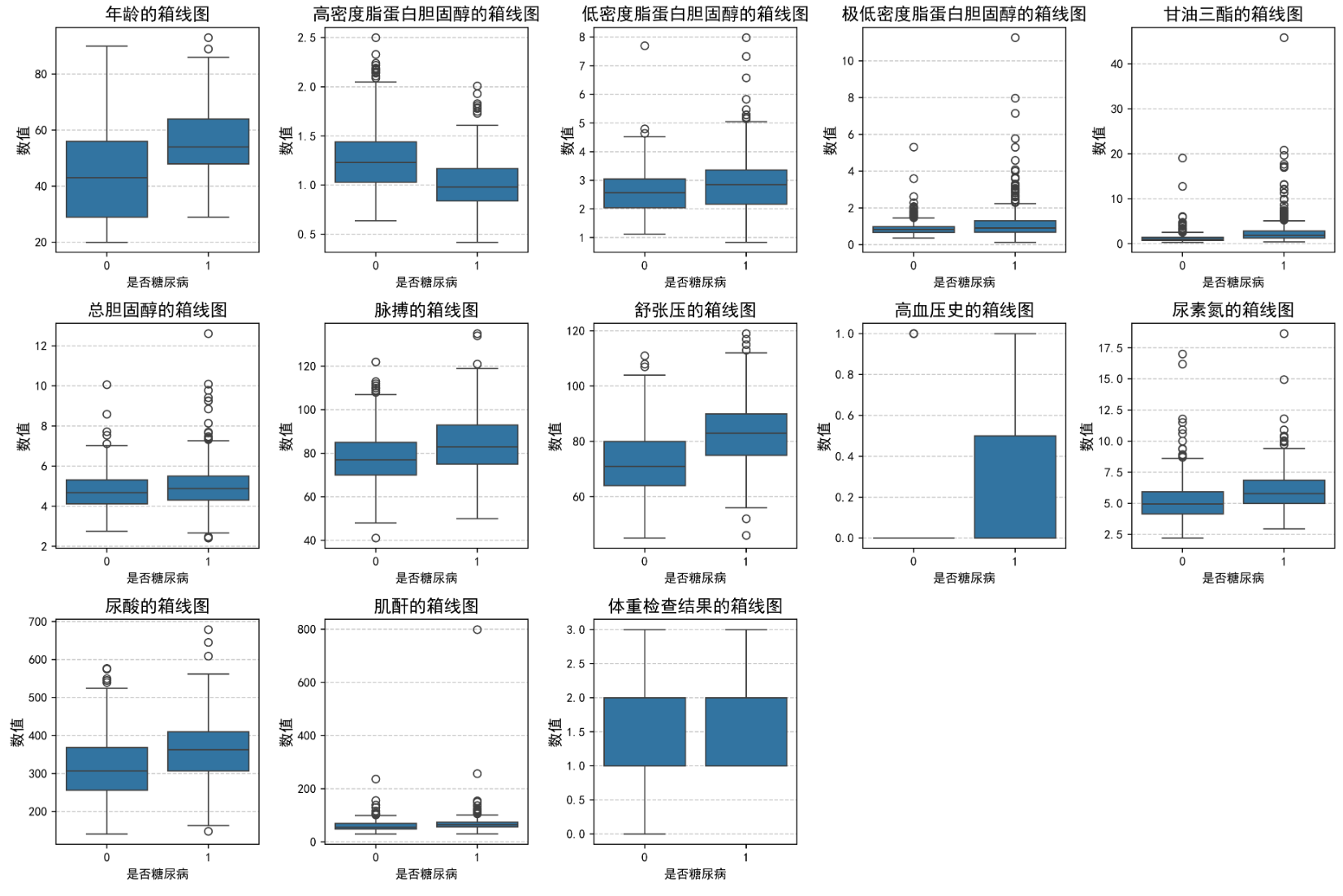
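To make the two checks above easier to read, here is a minimal sketch on a toy table. The column names and values are hypothetical and are not taken from `dia.xls`; the sketch only illustrates what `isnull().sum()` and `duplicated().sum()` count.

```python
import numpy as np
import pandas as pd

# Toy frame (hypothetical values): one missing entry and one duplicated row.
toy = pd.DataFrame({
    "age": [40, 52, 61, 61],
    "pulse": [72.0, np.nan, 80.0, 80.0],
})

missing_per_column = toy.isnull().sum()      # number of NaN entries per column
n_duplicates = int(toy.duplicated().sum())   # rows identical to an earlier row

print(missing_per_column)
print("Duplicate rows:", n_duplicates)

# Dropping duplicates keeps the first occurrence of each repeated row.
cleaned = toy.drop_duplicates().reset_index(drop=True)
print(cleaned.shape)
```

If the real dataset reports duplicates, the same `drop_duplicates().reset_index(drop=True)` call can be applied before building the tensors.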

feature_map = {
    '年齡': '年齡',
    '高密度脂蛋白膽固醇': '高密度脂蛋白膽固醇',
    '低密度脂蛋白膽固醇': '低密度脂蛋白膽固醇',
    '極低密度脂蛋白膽固醇': '極低密度脂蛋白膽固醇',
    '甘油三酯': '甘油三酯',
    '總膽固醇': '總膽固醇',
    '脈搏': '脈搏',
    '舒張壓': '舒張壓',
    '高血壓史': '高血壓史',
    '尿素氮': '尿素氮',
    '尿酸': '尿酸',
    '肌酐': '肌酐',
    '體重檢查結果': '體重檢查結果'
}

plt.figure(figsize=(15, 10))

# One box plot per feature, grouped by the diabetes label
for i, (col, col_name) in enumerate(feature_map.items(), 1):
    plt.subplot(3, 5, i)
    sns.boxplot(x=DataFrame['是否糖尿病'], y=DataFrame[col])
    plt.title(f'Box plot of {col_name}', fontsize=14)
    plt.ylabel('Value', fontsize=12)
    plt.grid(axis='y', linestyle='--', alpha=0.7)

plt.tight_layout()
plt.show()

# Build the dataset

from sklearn.preprocessing import StandardScaler

# The '高密度脂蛋白膽固醇' (HDL cholesterol) field is negatively correlated with diabetes, so it is dropped from X
X = DataFrame.drop(['卡號', '是否糖尿病', '高密度脂蛋白膽固醇'], axis=1)
y = DataFrame['是否糖尿病']

# sc_X = StandardScaler()
# X = sc_X.fit_transform(X)

X = torch.tensor(np.array(X), dtype=torch.float32)
y = torch.tensor(np.array(y), dtype=torch.int64)

train_X, test_X, train_y, test_y = train_test_split(X, y, test_size=0.2, random_state=1)
train_X.shape, train_y.shape

from torch.utils.data import TensorDataset, DataLoader

train_dl = DataLoader(TensorDataset(train_X, train_y), batch_size=64, shuffle=False)
test_dl = DataLoader(TensorDataset(test_X, test_y), batch_size=64, shuffle=False)

# Define the model

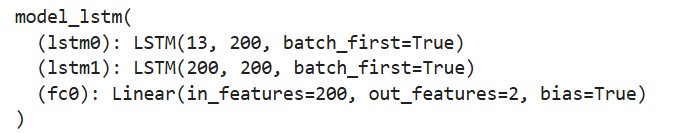

class model_lstm(nn.Module):
    def __init__(self):
        super(model_lstm, self).__init__()
        self.lstm0 = nn.LSTM(input_size=13, hidden_size=200,
                             num_layers=1, batch_first=True)
        self.lstm1 = nn.LSTM(input_size=200, hidden_size=200,
                             num_layers=1, batch_first=True)
        self.fc0 = nn.Linear(200, 2)  # two output classes

    def forward(self, x):
        # If x is 2D, convert it to a 3D tensor, assuming seq_len=1
        if x.dim() == 2:
            x = x.unsqueeze(1)  # [batch_size, 1, input_size]

        # First LSTM layer
        out, (h_n, c_n) = self.lstm0(x)

        # Second LSTM layer, initialized with the first layer's hidden state
        out, (h_n, c_n) = self.lstm1(out, (h_n, c_n))

        # Take the output of the last time step of the sequence
        out = out[:, -1, :]
        out = self.fc0(out)  # [batch_size, 2]
        return out

model = model_lstm().to(device)
print(model)
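Because each patient record is a single row, the model above treats it as a sequence of length 1. The following sketch traces the tensor shapes through one LSTM layer and the classifier head. The batch size of 4 and the random inputs are assumptions for illustration only; the layer sizes (13 features, 200 hidden units, 2 classes) follow the model above.

```python
import torch
import torch.nn as nn

batch_size, n_features, hidden = 4, 13, 200  # batch_size is arbitrary here

x = torch.randn(batch_size, n_features)  # one row per record: [batch, features]
x_seq = x.unsqueeze(1)                   # add a time axis: [batch, 1, features]

lstm = nn.LSTM(input_size=n_features, hidden_size=hidden, batch_first=True)
out, (h_n, c_n) = lstm(x_seq)            # out: [batch, 1, hidden]

last_step = out[:, -1, :]                # last (and only) time step: [batch, hidden]
logits = nn.Linear(hidden, 2)(last_step) # class scores: [batch, 2]

print(x_seq.shape, out.shape, h_n.shape, logits.shape)
```

With `seq_len=1` the last-step output is the same tensor as the final hidden state `h_n[0]`, so the recurrence never unrolls over time; the LSTM here acts as a gated feature transform rather than a sequence model.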

# Train the model

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # size of the training set
    num_batches = len(dataloader)  # number of batches
    train_loss, train_acc = 0, 0  # running loss and accuracy

    model.train()  # put the model in training mode
    for X, y in dataloader:  # fetch features and labels
        # If X is 2D, reshape it to 3D
        if X.dim() == 2:
            X = X.unsqueeze(1)  # [batch_size, 1, input_size], i.e. seq_len=1
        X, y = X.to(device), y.to(device)  # move the data to the device

        # Compute the prediction error
        pred = model(X)  # network output
        loss = loss_fn(pred, y)  # gap between the network output and the ground truth

        # Backpropagation
        optimizer.zero_grad()  # clear the gradients from the previous step
        loss.backward()  # backpropagate
        optimizer.step()  # update the weights

        # Record accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size  # mean accuracy
    train_loss /= num_batches  # mean loss
    return train_acc, train_loss

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # size of the test set
    num_batches = len(dataloader)  # number of batches (size / batch_size, rounded up)
    test_loss, test_acc = 0, 0

    # Disable gradient tracking when not training, to save memory
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss

loss_fn = nn.CrossEntropyLoss()  # create the loss function

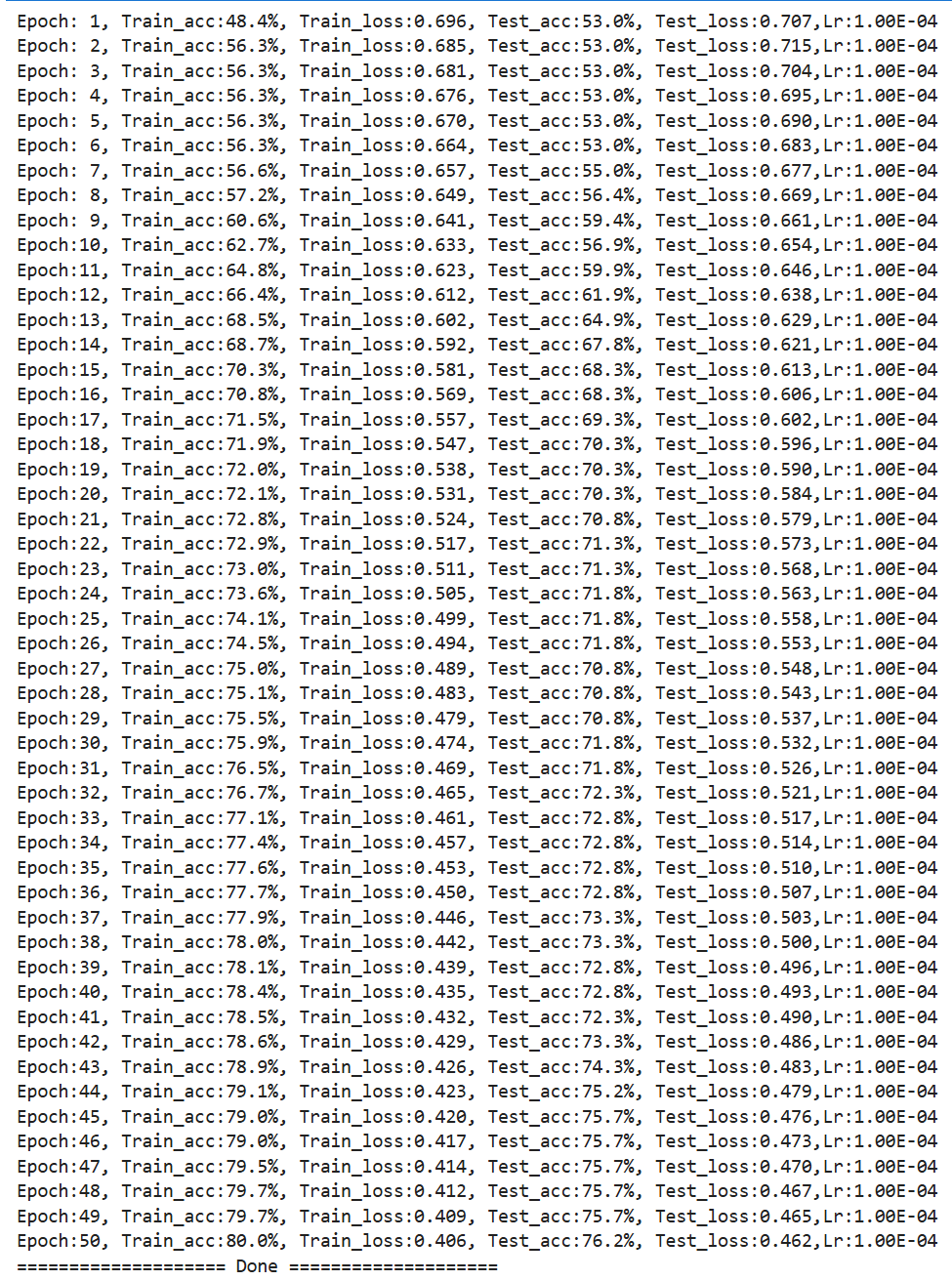

learn_rate = 1e-4  # learning rate
opt = torch.optim.Adam(model.parameters(), lr=learn_rate)
epochs = 50

train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Read the current learning rate
    lr = opt.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f},Lr:{:.2E}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss, epoch_test_acc * 100, epoch_test_loss, lr))

print("=" * 20, 'Done', "=" * 20)

import matplotlib.pyplot as plt

# Hide warnings
import warnings
warnings.filterwarnings("ignore")  # ignore warning messages

plt.rcParams['font.sans-serif'] = ['SimHei']  # render Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False  # render minus signs correctly
plt.rcParams['figure.dpi'] = 100  # resolution

from datetime import datetime

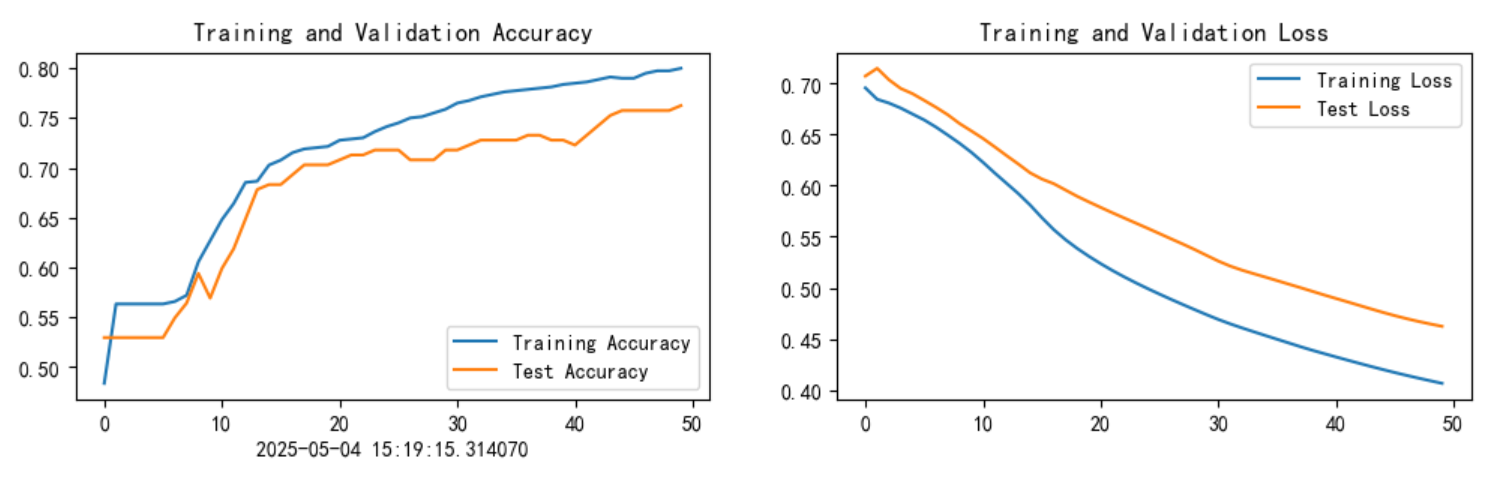

current_time = datetime.now()

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')
plt.xlabel(current_time)  # stamp the figure with the current time

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()

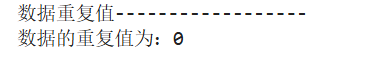

--------------Summary and ideas for improvement (completing the Week R7 task along the way)---------------------

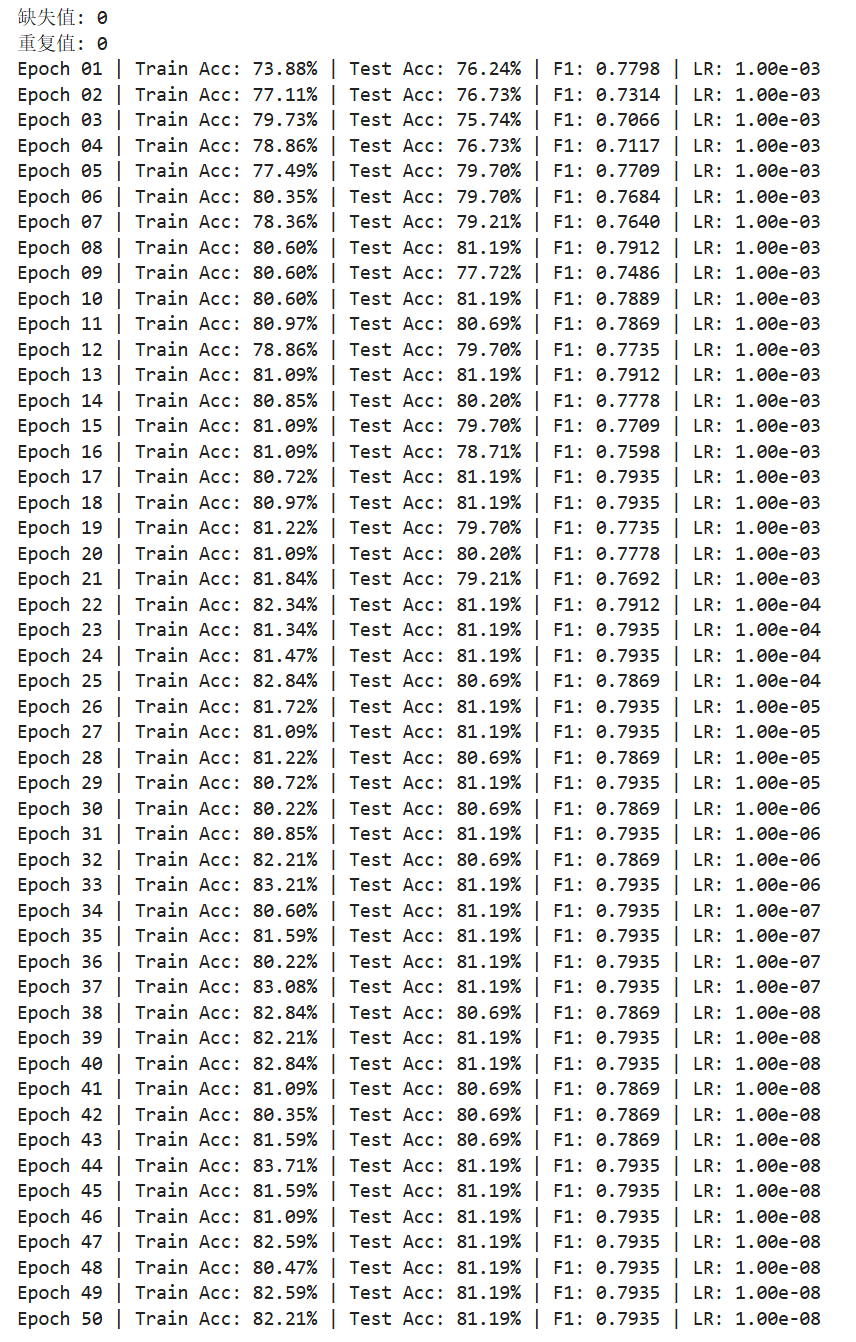

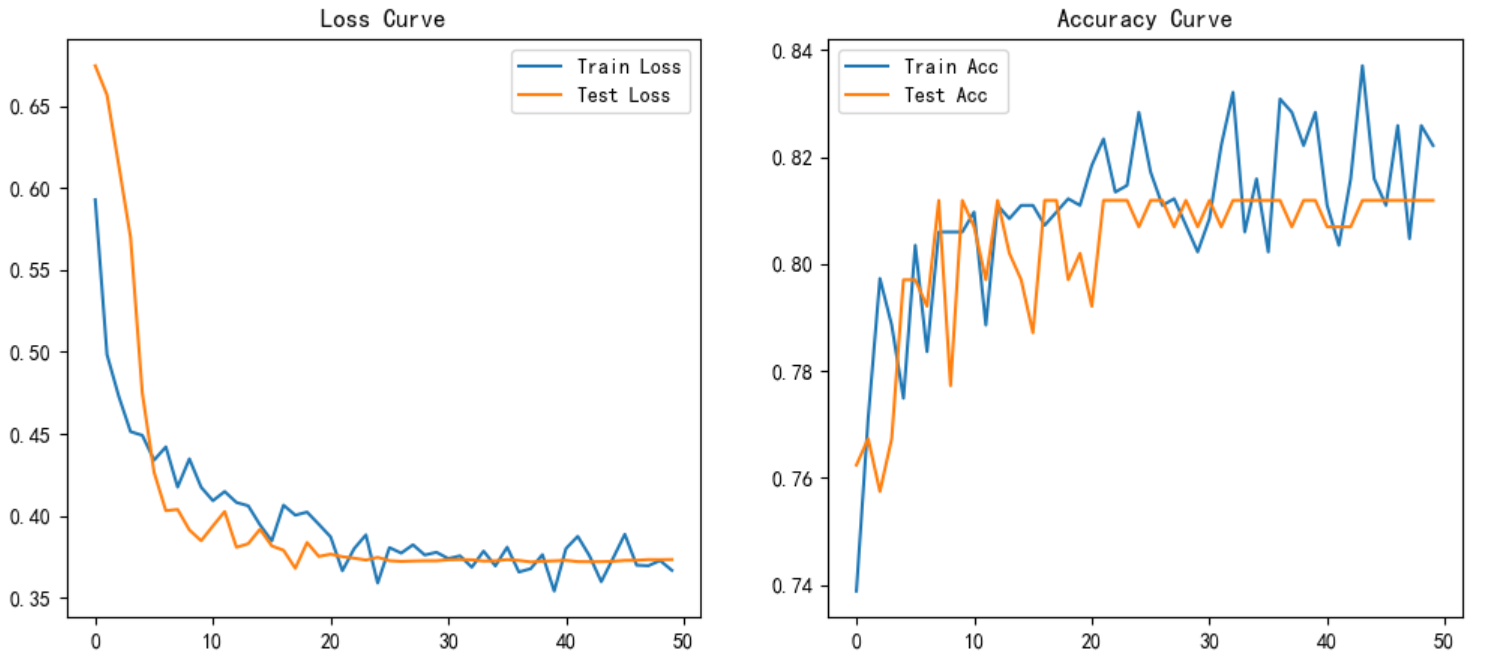

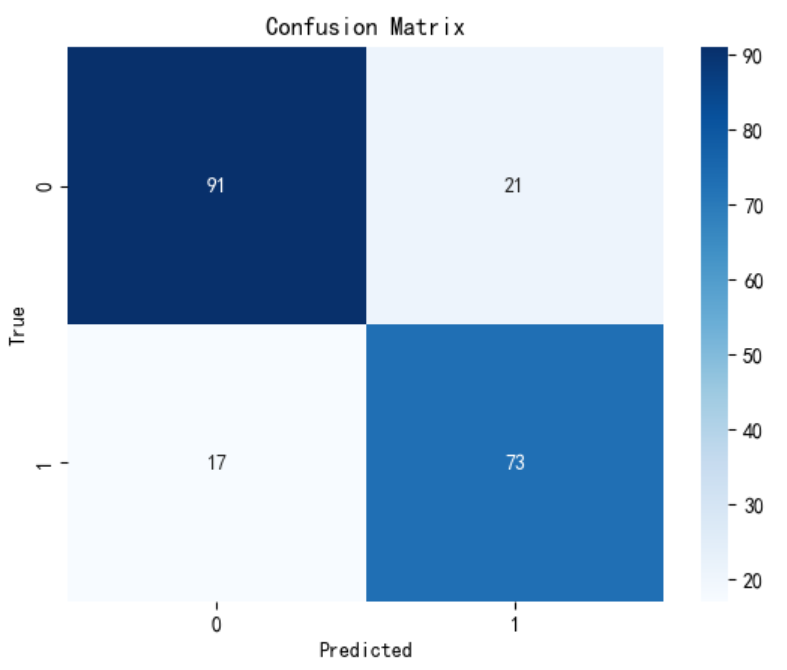

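The code above is improved mainly in the following respects:

- Performance: a bidirectional LSTM and Dropout strengthen feature extraction, while standardization and class weights mitigate problems in the data (differing feature scales and class imbalance).

- Preventing overfitting: BatchNorm is added before the fully connected layer to improve generalization.

- Training stability: a learning-rate scheduler, together with shuffling the training set (shuffle=True), makes training more stable.

With these optimizations the model keeps its core LSTM structure while handling tabular data more effectively and improving predictive performance; in the end, the training-set and test-set results each improved by 5 percentage points.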
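The class-weight idea from the list above can be sketched as follows. The labels here are hypothetical (six negatives, two positives), and the normalization step is an optional choice, not something the original code does. Note that `value_counts()` orders its result by frequency, so `sort_index()` is used to make position 0 correspond to class 0 and position 1 to class 1, which is the order `nn.CrossEntropyLoss(weight=...)` expects.

```python
import pandas as pd
import torch
import torch.nn as nn

# Hypothetical imbalanced labels: six samples of class 0, two of class 1.
y = pd.Series([0, 0, 0, 1, 0, 1, 0, 0])

# Count per class, ordered by class id (0, 1) rather than by frequency.
counts = y.value_counts().sort_index()

# Inverse-frequency weights: the rarer class gets the larger weight.
weights = torch.tensor((1.0 / counts).values, dtype=torch.float32)
weights = weights / weights.sum()  # optional normalization so the weights sum to 1

criterion = nn.CrossEntropyLoss(weight=weights)

# Uninformative logits, just to show the weighted loss can be evaluated.
logits = torch.zeros(len(y), 2)
targets = torch.tensor(y.values, dtype=torch.long)
loss = criterion(logits, targets)

print(weights, loss.item())
```

With these counts the minority class receives three times the weight of the majority class, so misclassifying a positive sample costs more during training.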

import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import TensorDataset, DataLoader
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import f1_score, confusion_matrix
import matplotlib.pyplot as plt
import seaborn as sns

# Environment setup
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device

plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['axes.unicode_minus'] = False

torch.manual_seed(42)  # fix the random seed so that results are reproducible

# Data loading and preprocessing

def load_data():
    df = pd.read_excel('./dia.xls')

    # Data cleaning
    print("Missing values:", df.isnull().sum().sum())
    print("Duplicate rows:", df.duplicated().sum())
    df = df.drop_duplicates().reset_index(drop=True)

    # Feature engineering
    X = df.drop(['卡號', '是否糖尿病', '高密度脂蛋白膽固醇'], axis=1)
    y = df['是否糖尿病']

    # Handle class imbalance with inverse-frequency weights.
    # value_counts() sorts by frequency, so sort_index() is needed to keep
    # the weights in class order (0, 1).
    class_counts = y.value_counts().sort_index().values
    class_weights = torch.tensor([1/count for count in class_counts], dtype=torch.float32).to(device)

    # Standardization (split first, then standardize)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=42)
    scaler = StandardScaler()
    X_train = scaler.fit_transform(X_train)
    X_test = scaler.transform(X_test)

    # Convert to tensors
    X_train = torch.tensor(X_train, dtype=torch.float32).to(device)
    X_test = torch.tensor(X_test, dtype=torch.float32).to(device)
    y_train = torch.tensor(y_train.values, dtype=torch.long).to(device)
    y_test = torch.tensor(y_test.values, dtype=torch.long).to(device)

    return X_train, X_test, y_train, y_test, class_weights

# Bidirectional LSTM model

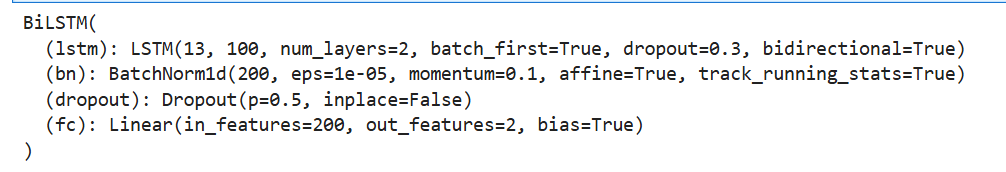
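The "split first, then standardize" order used in `load_data` matters because the scaler's mean and standard deviation should come from the training rows only; otherwise test-set statistics leak into training. A minimal sketch on synthetic data (the feature values and the 150/50 label split are made up for illustration):

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(loc=50.0, scale=10.0, size=(200, 3))  # hypothetical features
y = np.array([0] * 150 + [1] * 50)                    # hypothetical labels, 25% positive

# stratify=y keeps the class ratio the same in both splits.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

scaler = StandardScaler()
X_train_std = scaler.fit_transform(X_train)  # statistics estimated on training rows only
X_test_std = scaler.transform(X_test)        # the same mean / std reused on the test rows

print(X_train_std.mean(axis=0), X_train_std.std(axis=0))
print(y_train.mean(), y_test.mean())
```

The standardized training features have zero mean and unit variance by construction, while the test features are only approximately standardized, which is the expected behavior.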

class BiLSTM(nn.Module):
    def __init__(self, input_size=12, hidden_size=100, num_layers=2):
        super().__init__()
        self.lstm = nn.LSTM(
            input_size=input_size,
            hidden_size=hidden_size,
            num_layers=num_layers,
            bidirectional=True,
            batch_first=True,
            dropout=0.3 if num_layers>1 else 0
        )
        self.bn = nn.BatchNorm1d(hidden_size*2)
        self.dropout = nn.Dropout(0.5)
        self.fc = nn.Linear(hidden_size*2, 2)

    def forward(self, x):
        if x.dim() == 2:
            x = x.unsqueeze(1)
        out, _ = self.lstm(x)
        out = out[:, -1, :]  # take the last time step
        out = self.bn(out)
        out = self.dropout(out)
        return self.fc(out)

# Training and validation functions

def train_epoch(model, loader, criterion, optimizer):
    model.train()
    total_loss, correct = 0, 0
    for X, y in loader:
        X = X.unsqueeze(1) if X.dim()==2 else X
        optimizer.zero_grad()
        outputs = model(X)
        loss = criterion(outputs, y)
        loss.backward()
        optimizer.step()
        total_loss += loss.item()
        correct += (outputs.argmax(1) == y).sum().item()
    return correct/len(loader.dataset), total_loss/len(loader)

def evaluate(model, loader, criterion):
    model.eval()
    total_loss, correct = 0, 0
    all_preds, all_labels = [], []
    with torch.no_grad():
        for X, y in loader:
            X = X.unsqueeze(1) if X.dim()==2 else X
            outputs = model(X)
            loss = criterion(outputs, y)
            total_loss += loss.item()
            correct += (outputs.argmax(1) == y).sum().item()
            all_preds.extend(outputs.argmax(1).cpu().numpy())
            all_labels.extend(y.cpu().numpy())
    f1 = f1_score(all_labels, all_preds)
    cm = confusion_matrix(all_labels, all_preds)
    return correct/len(loader.dataset), total_loss/len(loader), f1, cm

# Data preparation
X_train, X_test, y_train, y_test, class_weights = load_data()
train_dataset = TensorDataset(X_train, y_train)
test_dataset = TensorDataset(X_test, y_test)
train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=64)

# Model initialization
model = BiLSTM(input_size=13).to(device)
print(model)  # moved here so that it prints the BiLSTM rather than the earlier model
criterion = nn.CrossEntropyLoss(weight=class_weights)
optimizer = optim.Adam(model.parameters(), lr=1e-3, weight_decay=1e-4)
scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer, 'min', patience=3)

# Training loop
best_f1 = 0
train_losses, test_losses = [], []
train_accs, test_accs = [], []

for epoch in range(50):
    train_acc, train_loss = train_epoch(model, train_loader, criterion, optimizer)
    test_acc, test_loss, f1, cm = evaluate(model, test_loader, criterion)

    # Adjust the learning rate
    scheduler.step(test_loss)

    # Record the metrics
    train_losses.append(train_loss)
    test_losses.append(test_loss)
    train_accs.append(train_acc)
    test_accs.append(test_acc)

    # Print progress
    print(f"Epoch {epoch+1:02d} | "
          f"Train Acc: {train_acc:.2%} | Test Acc: {test_acc:.2%} | "
          f"F1: {f1:.4f} | LR: {optimizer.param_groups[0]['lr']:.2e}")

# Visualization
plt.figure(figsize=(12,5))

plt.subplot(1,2,1)
plt.plot(train_losses, label='Train Loss')
plt.plot(test_losses, label='Test Loss')
plt.legend()
plt.title("Loss Curve")

plt.subplot(1,2,2)
plt.plot(train_accs, label='Train Acc')
plt.plot(test_accs, label='Test Acc')
plt.legend()
plt.title("Accuracy Curve")

plt.show()
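To see how `ReduceLROnPlateau` with `patience=3` behaves, the sketch below feeds it a monitored loss that never improves. The stand-in `nn.Linear` model and the constant loss value are assumptions for illustration; the scheduler settings match the training script, and the reduction factor is left at the library default of 0.1.

```python
import torch.nn as nn
import torch.optim as optim

model = nn.Linear(2, 2)  # stand-in model, only needed to create an optimizer
optimizer = optim.Adam(model.parameters(), lr=1e-3)
scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer, 'min', patience=3)

lrs = []
for epoch in range(5):
    fake_val_loss = 1.0            # hypothetical loss that never improves
    scheduler.step(fake_val_loss)  # the scheduler watches this value
    lrs.append(optimizer.param_groups[0]['lr'])

print(lrs)
```

The first call sets the best value; the next three calls are tolerated as "bad" epochs, and on the fourth bad epoch the learning rate is multiplied by 0.1. In the real training loop the monitored value is the test loss, so the learning rate only drops once that loss has stalled for more than three epochs.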
