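Model Overview

BERT, short for Bidirectional Encoder Representations from Transformers, is a language model that Google developed and released in late 2018. Pretrained language models such as BERT play an important role in many natural language processing tasks, including question answering, named entity recognition, natural language inference, and text classification. The model is built from the Encoder of the Transformer and is bidirectional, so a solid grasp of the Transformer Encoder structure is a prerequisite.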

BERT's main innovations lie in its pre-training method: it uses two objectives, Masked Language Model and Next Sentence Prediction, to capture word-level and sentence-level representations respectively.

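When BERT is trained with the Masked Language Model objective, 15% of the tokens in the corpus are randomly selected for masking. Each selected token is handled in one of three ways: 80% of the time it is replaced with [MASK], 10% of the time it is replaced with another random token, and 10% of the time it is left unchanged.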
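The 15% selection and the 80/10/10 split can be sketched in plain Python. This is a minimal illustration, not BERT's actual preprocessing code: the function name `mask_tokens`, the toy vocabulary, and the choice to select each position independently with probability 0.15 are all assumptions made for the example.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Apply BERT-style masking to a list of tokens (illustrative sketch).

    Returns (masked_tokens, labels). labels[i] holds the original token at
    positions selected for prediction and None everywhere else.
    """
    rng = rng or random.Random()
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            masked[i] = MASK_TOKEN         # 80%: replace with [MASK]
        elif roll < 0.9:
            masked[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return masked, labels


tokens = "the quick brown fox jumps over the lazy dog".split()
vocab = sorted(set(tokens))  # toy vocabulary, assumed for the example
masked, labels = mask_tokens(tokens, vocab, rng=random.Random(0))
print(masked)
print(labels)
```

The model is trained to predict the original token only at positions where `labels` is not None, which is why unchanged and randomly replaced tokens still contribute to the loss.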

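Because of tasks such as Question Answering (QA) and Natural Language Inference (NLI), a Next Sentence Prediction pre-training task was added so that the model learns the relationship between two sentences. Compared with Masked Language Model, Next Sentence Prediction is simpler: the training input is a pair of sentences A and B, where B has a 50% chance of being the sentence that actually follows A, and BERT predicts whether B is the next sentence after A.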
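A rough sketch of how such sentence pairs could be built is shown below. The helper name `make_nsp_pairs` and the separate `negatives` pool (assumed to hold sentences from other documents) are hypothetical and only serve the illustration.

```python
import random


def make_nsp_pairs(sentences, negatives, rng=None):
    """Build (sentence_a, sentence_b, is_next) examples (illustrative sketch).

    `sentences` is an ordered list of sentences from one document.
    `negatives` is a pool of sentences assumed to come from other documents.
    """
    rng = rng or random.Random()
    examples = []
    for i in range(len(sentences) - 1):
        sentence_a = sentences[i]
        if rng.random() < 0.5:
            # Positive example: B really is the sentence after A.
            examples.append((sentence_a, sentences[i + 1], True))
        else:
            # Negative example: B is drawn at random from the pool.
            examples.append((sentence_a, rng.choice(negatives), False))
    return examples


doc = ["I went out.", "It was raining.", "I came back for an umbrella."]
pool = ["Stocks fell on Monday.", "The recipe needs two eggs."]
for pair in make_nsp_pairs(doc, pool, rng=random.Random(0)):
    print(pair)
```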

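After pre-training, BERT's embedding table and its Transformer weights are saved: 12 layers for BERT-BASE or 24 layers for BERT-LARGE. The pretrained model can then be fine-tuned on downstream tasks such as text classification, similarity judgment, and reading comprehension.

Emotion Detection (EmoTect) in dialogue focuses on identifying the user's emotion in intelligent dialogue scenarios. Given a user's text, it automatically determines the emotion category and gives a corresponding confidence score. The categories are positive, negative, and neutral. Emotion detection applies to chat, customer service, and similar scenarios. It helps companies monitor conversation quality, improve the user interaction experience of their products, analyze customer service quality, and reduce the cost of manual quality inspection.

The following uses a text sentiment classification task to walk through the full process of applying BERT.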

    import os

    import mindspore
    from mindspore.dataset import text, GeneratorDataset, transforms
    from mindspore import nn, context

    from mindnlp._legacy.engine import Trainer, Evaluator
    from mindnlp._legacy.engine.callbacks import CheckpointCallback, BestModelCallback
    from mindnlp._legacy.metrics import Accuracy

    # prepare dataset
    class SentimentDataset:
        """Sentiment Dataset"""

        def __init__(self, path):
            self.path = path
            self._labels, self._text_a = [], []
            self._load()

        def _load(self):
            with open(self.path, "r", encoding="utf-8") as f:
                dataset = f.read()
            lines = dataset.split("\n")
            # skip the header row and the trailing empty line
            for line in lines[1:-1]:
                label, text_a = line.split("\t")
                self._labels.append(int(label))
                self._text_a.append(text_a)

        def __getitem__(self, index):
            return self._labels[index], self._text_a[index]

        def __len__(self):
            return len(self._labels)

Dataset

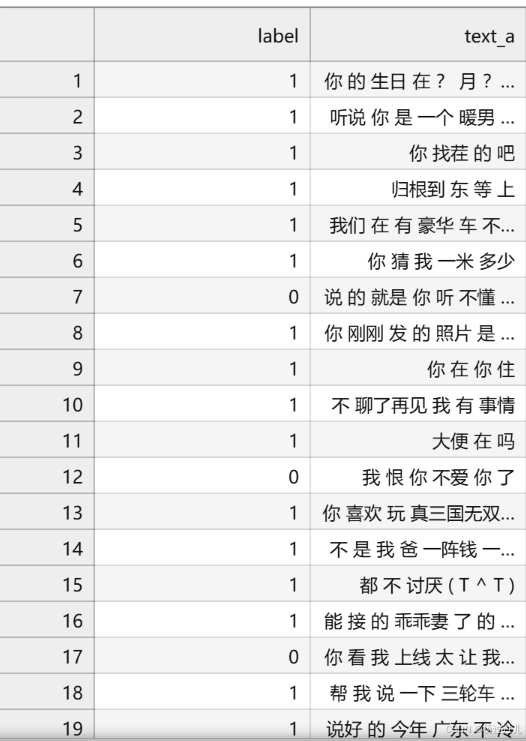

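A labeled, word-segmented chatbot dataset from the Baidu PaddlePaddle team is provided here. The data has two columns separated by a tab ('\t'): the first column is the emotion category (0 for negative, 1 for neutral, 2 for positive), and the second is Chinese text segmented by spaces. The file is UTF-8 encoded. A sample follows.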

label--text_a

0--誰罵人了?我從來不罵人,我罵的都不是人,你是人嗎 ?

1--我有事等會兒就回來和你聊

2--我見到你很高興謝謝你幫我
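As a small illustration of this two-column layout, the sketch below parses tab-separated rows from an in-memory string. The helper `parse_tsv` and the `LABEL_NAMES` mapping are names made up for this example, and the sample rows reuse the lines shown above with a real tab in place of `--`.

```python
import csv
import io

# English names for the three categories described above.
LABEL_NAMES = {0: "negative", 1: "neutral", 2: "positive"}


def parse_tsv(stream):
    """Yield (label, text) pairs from a tab-separated stream with a header row."""
    reader = csv.reader(stream, delimiter="\t", quoting=csv.QUOTE_NONE)
    next(reader, None)  # skip the "label<TAB>text_a" header if present
    for row in reader:
        if len(row) != 2:
            continue  # ignore blank or malformed lines
        label, text = row
        yield int(label), text


sample = io.StringIO(
    "label\ttext_a\n"
    "1\t我有事等會兒就回來和你聊\n"
    "2\t我見到你很高興謝謝你幫我\n"
)
for label, text in parse_tsv(sample):
    print(LABEL_NAMES[label], text)
```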

This part covers reading the dataset, converting the data format, tokenizing, and padding.

    # download dataset
    !wget https://baidu-nlp.bj.bcebos.com/emotion_detection-dataset-1.0.0.tar.gz -O emotion_detection.tar.gz
    !tar xvf emotion_detection.tar.gz

Data Loading and Preprocessing

Define a process_dataset function for data loading and preprocessing. See the comments in the code below for details.

    import numpy as np

    def process_dataset(source, tokenizer, max_seq_len=64, batch_size=32, shuffle=True):
        is_ascend = mindspore.get_context('device_target') == 'Ascend'

        column_names = ["label", "text_a"]

        dataset = GeneratorDataset(source, column_names=column_names, shuffle=shuffle)
        # transforms
        type_cast_op = transforms.TypeCast(mindspore.int32)

        def tokenize_and_pad(text):
            if is_ascend:
                # static shape: pad or truncate every sample to max_seq_len
                tokenized = tokenizer(text, padding='max_length', truncation=True, max_length=max_seq_len)
            else:
                # dynamic shape: padding is deferred to padded_batch below
                tokenized = tokenizer(text)
            return tokenized['input_ids'], tokenized['attention_mask']

        # map dataset
        dataset = dataset.map(operations=tokenize_and_pad, input_columns="text_a", output_columns=['input_ids', 'attention_mask'])
        dataset = dataset.map(operations=[type_cast_op], input_columns="label", output_columns='labels')
        # batch dataset
        if is_ascend:
            dataset = dataset.batch(batch_size)
        else:
            dataset = dataset.padded_batch(batch_size, pad_info={'input_ids': (None, tokenizer.pad_token_id),
                                                                 'attention_mask': (None, 0)})

        return dataset

Dynamic shapes are not yet supported on Ascend NPU, so the preprocessing uses static shapes in that environment:

    from mindnlp.transformers import BertTokenizer

    tokenizer = BertTokenizer.from_pretrained('bert-base-chinese')
    tokenizer.pad_token_id

    dataset_train = process_dataset(SentimentDataset("data/train.tsv"), tokenizer)
    dataset_val = process_dataset(SentimentDataset("data/dev.tsv"), tokenizer)
    dataset_test = process_dataset(SentimentDataset("data/test.tsv"), tokenizer, shuffle=False)

    dataset_train.get_col_names()

Output:

    ['input_ids', 'attention_mask', 'labels']

    print(next(dataset_train.create_tuple_iterator()))

Output:

    [Tensor(shape=[32, 64], dtype=Int64, value=
    [[ 101, 872, 679 ... 0, 0, 0],
     [ 101, 1557, 8024 ... 0, 0, 0],
     [ 101, 6929, 1168 ... 0, 0, 0],
     ...
     [ 101, 2828, 800 ... 0, 0, 0],
     [ 101, 1521, 1506 ... 0, 0, 0],
     [ 101, 6820, 1962 ... 0, 0, 0]]), Tensor(shape=[32, 64], dtype=Int64, value=
    [[1, 1, 1 ... 0, 0, 0],
     [1, 1, 1 ... 0, 0, 0],
     [1, 1, 1 ... 0, 0, 0],
     ...
     [1, 1, 1 ... 0, 0, 0],
     [1, 1, 1 ... 0, 0, 0],
     [1, 1, 1 ... 0, 0, 0]]), Tensor(shape=[32], dtype=Int32, value= [0, 0, 1, 1, 1, 1, 1, 2, 1, 1, 0, 0, 1, 1, 1, 2, 1, 0, 1, 1, 0, 1, 1, 2, 1, 1, 1, 1, 1, 1, 1, 0])]
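The difference between static-shape padding (every sample padded to a fixed max_seq_len, as in the [32, 64] batch above) and dynamic-shape padding (each batch padded only to its longest sample) can be illustrated without MindSpore. The sketch below is plain Python. The helpers `pad_to_length` and `pad_batch` are hypothetical, and pad id 0 is assumed, consistent with the trailing zeros in the printed batch.

```python
def pad_to_length(ids, length, pad_id=0):
    """Truncate or right-pad a list of token ids to exactly `length`.

    Returns (padded_ids, attention_mask). Truncation here simply cuts the
    tail; a real tokenizer would keep the closing [SEP] token.
    """
    ids = ids[:length]
    n_pad = length - len(ids)
    return ids + [pad_id] * n_pad, [1] * len(ids) + [0] * n_pad


def pad_batch(batch, pad_id=0, max_seq_len=None):
    """Pad a batch of id lists.

    With max_seq_len set, every batch has the same width (static shape).
    Without it, the width is the longest sample in this batch (dynamic shape).
    """
    target = max_seq_len if max_seq_len is not None else max(len(x) for x in batch)
    padded = [pad_to_length(x, target, pad_id) for x in batch]
    input_ids = [p[0] for p in padded]
    attention_mask = [p[1] for p in padded]
    return input_ids, attention_mask


batch = [[101, 872, 102], [101, 1557, 8024, 679, 102]]
static_ids, static_mask = pad_batch(batch, max_seq_len=8)
dynamic_ids, dynamic_mask = pad_batch(batch)
print(len(static_ids[0]), len(dynamic_ids[0]))
```

Static padding wastes some computation on pad positions but keeps tensor shapes constant across batches, which is the property the Ascend branch of process_dataset relies on.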

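Model Construction

Use BertForSequenceClassification to build the BERT model for sentiment classification: it loads the pretrained weights, and setting the number of labels to 3 builds the three-class model automatically. Automatic mixed precision is then applied to the model to speed up training. Next, the optimizer and the evaluation metric are instantiated and the checkpoint saving strategy is configured. Finally, the trainer is constructed and training begins.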

    from mindnlp.transformers import BertForSequenceClassification, BertModel
    from mindnlp._legacy.amp import auto_mixed_precision

    # set bert config and define parameters for training
    model = BertForSequenceClassification.from_pretrained('bert-base-chinese', num_labels=3)
    model = auto_mixed_precision(model, 'O1')

    optimizer = nn.Adam(model.trainable_params(), learning_rate=2e-5)

    metric = Accuracy()
    # define callbacks to save checkpoints
    ckpoint_cb = CheckpointCallback(save_path='checkpoint', ckpt_name='bert_emotect', epochs=1, keep_checkpoint_max=2)
    best_model_cb = BestModelCallback(save_path='checkpoint', ckpt_name='bert_emotect_best', auto_load=True)

    # The tail of this call was cut off in the source. epochs=5 and the two
    # callbacks are inferred from the training log (epochs 0 to 4, with both
    # checkpoint and best-model messages) and the optimizer defined above.
    trainer = Trainer(network=model, train_dataset=dataset_train,
                      eval_dataset=dataset_val, metrics=metric,
                      epochs=5, optimizer=optimizer, callbacks=[ckpoint_cb, best_model_cb])

    %%time

    # start training
    trainer.run(tgt_columns="labels")

Output:

    The train will start from the checkpoint saved in 'checkpoint'.
    Epoch 0: 100% 302/302 [04:12<00:00, 4.21s/it, loss=0.3291704]
    Checkpoint: 'bert_emotect_epoch_0.ckpt' has been saved in epoch: 0.
    Evaluate: 100% 34/34 [00:07<00:00, 1.10s/it]
    Evaluate Score: {'Accuracy': 0.9361111111111111}
    ---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 0.---------------
    Epoch 1: 100% 302/302 [02:33<00:00, 2.02it/s, loss=0.18864435]
    Checkpoint: 'bert_emotect_epoch_1.ckpt' has been saved in epoch: 1.
    Evaluate: 100% 34/34 [00:04<00:00, 7.94it/s]
    Evaluate Score: {'Accuracy': 0.9629629629629629}
    ---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 1.---------------
    Epoch 2: 100% 302/302 [02:34<00:00, 1.98it/s, loss=0.12532383]
    The maximum number of stored checkpoints has been reached.
    Checkpoint: 'bert_emotect_epoch_2.ckpt' has been saved in epoch: 2.
    Evaluate: 100% 34/34 [00:04<00:00, 8.05it/s]
    Evaluate Score: {'Accuracy': 0.9805555555555555}
    ---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 2.---------------
    Epoch 3: 100% 302/302 [02:34<00:00, 1.97it/s, loss=0.08664711]
    The maximum number of stored checkpoints has been reached.
    Checkpoint: 'bert_emotect_epoch_3.ckpt' has been saved in epoch: 3.
    Evaluate: 100% 34/34 [00:04<00:00, 8.11it/s]
    Evaluate Score: {'Accuracy': 0.9916666666666667}
    ---------------Best Model: 'bert_emotect_best.ckpt' has been saved in epoch: 3.---------------
    Epoch 4: 100% 302/302 [02:34<00:00, 1.97it/s, loss=0.060646668]
    The maximum number of stored checkpoints has been reached.
    Checkpoint: 'bert_emotect_epoch_4.ckpt' has been saved in epoch: 4.
    Evaluate: 100% 34/34 [00:04<00:00, 7.80it/s]
    Evaluate Score: {'Accuracy': 0.9879629629629629}
    Loading best model from 'checkpoint' with '['Accuracy']': [0.9916666666666667]...
    ---------------The model is already load the best model from 'bert_emotect_best.ckpt'.---------------
    CPU times: user 22min 1s, sys: 13min 27s, total: 35min 28s
    Wall time: 15min 9s

Model Validation

Run the trained model on the test dataset (dataset_test) to check how well it performs. The metric here is accuracy.

    evaluator = Evaluator(network=model, eval_dataset=dataset_test, metrics=metric)
    evaluator.run(tgt_columns="labels")

Output:

    Evaluate: 100% 33/33 [00:07<00:00, 1.12s/it]
    Evaluate Score: {'Accuracy': 0.8947876447876448}
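For reference, accuracy is the fraction of samples whose predicted label equals the true label. The sketch below computes it in plain Python and adds a per-class breakdown. The function names and the toy label lists are made up for the example and are not part of the MindNLP Accuracy metric.

```python
from collections import Counter


def accuracy(predictions, labels):
    """Fraction of positions where the prediction equals the label."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        return 0.0
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def per_class_recall(predictions, labels):
    """For each true class, the fraction of its samples predicted correctly."""
    total = Counter(labels)
    hit = Counter(y for p, y in zip(predictions, labels) if p == y)
    return {c: hit[c] / total[c] for c in sorted(total)}


# Toy data: 0 = negative, 1 = neutral, 2 = positive.
preds = [1, 1, 0, 2, 1, 1]
truth = [1, 1, 0, 1, 2, 1]
print(accuracy(preds, truth))
print(per_class_recall(preds, truth))
```

A per-class view can be useful when one category dominates the data, since overall accuracy alone may hide weak performance on the rarer classes.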

Model Inference

Iterate over the inference dataset and display each prediction alongside its label.

    from mindspore import Tensor

    dataset_infer = SentimentDataset("data/infer.tsv")

    def predict(text, label=None):
        # 消極 = negative, 中性 = neutral, 積極 = positive
        label_map = {0: "消極", 1: "中性", 2: "積極"}

        text_tokenized = Tensor([tokenizer(text).input_ids])
        logits = model(text_tokenized)
        predict_label = logits[0].asnumpy().argmax()
        info = f"inputs: '{text}', predict: '{label_map[predict_label]}'"
        if label is not None:
            info += f" , label: '{label_map[label]}'"
        print(info)

    for label, text in dataset_infer:
        predict(text, label)

Output:

    inputs: '我 要 客觀', predict: '中性' , label: '中性'
    inputs: '靠 你 真是 說 廢話 嗎', predict: '消極' , label: '消極'
    inputs: '口嗅 會', predict: '中性' , label: '中性'
    inputs: '每次 是 表妹 帶 窩 飛 因為 窩路癡', predict: '中性' , label: '中性'
    inputs: '別說 廢話 我 問 你 個 問題', predict: '消極' , label: '消極'
    inputs: '4967 是 新加坡 那 家 銀行', predict: '中性' , label: '中性'
    inputs: '是 我 喜歡 兔子', predict: '積極' , label: '積極'
    inputs: '你 寫 過 黃山 奇石 嗎', predict: '中性' , label: '中性'
    inputs: '一個一個 慢慢來', predict: '中性' , label: '中性'
    inputs: '我 玩 過 這個 一點 都 不 好玩', predict: '消極' , label: '消極'
    inputs: '網上 開發 女孩 的 QQ', predict: '中性' , label: '中性'
    inputs: '背 你 猜 對 了', predict: '中性' , label: '中性'
    inputs: '我 討厭 你 , 哼哼 哼 。 。', predict: '消極' , label: '消極'
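The predict function reports only the arg-max class. If a confidence score is also wanted, one common option is to pass the three logits through a softmax. The sketch below is plain Python and works on a list of floats. The names `softmax`, `top_label`, and `LABEL_MAP`, as well as the example logits, are assumptions for illustration and do not come from the model run above.

```python
import math

LABEL_MAP = {0: "negative", 1: "neutral", 2: "positive"}


def softmax(logits):
    """Convert raw logits to probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_label(logits):
    """Return (label_name, confidence) for the highest-probability class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABEL_MAP[best], probs[best]


name, confidence = top_label([-1.2, 3.4, 0.5])  # made-up logits
print(name, round(confidence, 3))
```

With the MindSpore model, the list of floats could plausibly be obtained from the first row of `logits[0].asnumpy()`, assuming that array has shape (1, 3).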

Custom Inference Input

Enter your own text to see how well the model generalizes.

    predict("家人們咱就是說一整個無語住了 絕絕子疊buff")

Output:

    inputs: '家人們咱就是說一整個無語住了 絕絕子疊buff', predict: '中性'
