文章目錄

- 目錄

- 1.殘差網絡基礎

- 1.1基本概念

- 1.2VGG19、ResNet34結構圖

- 1.3 梯度彌散和網絡退化

- 1.4 殘差塊變體

- 1.5 ResNet模型變體

- 1.6 Residual Network補充

- 1.7 1*1卷積核(補充)

- 2.殘差網絡介紹(何凱明)

- 3.ResNet-50(Ng)

- 3.1 非常深的神經網絡問題

- 3.2 建立一個殘差網絡

- 3.3first ResNet model(50layers)

- 3.4 代碼實現

- 4.ResNet 100-1000層

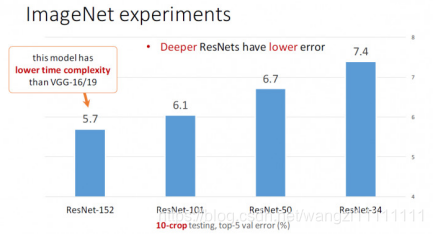

- 4.1 152層

目錄

1. Residual network basics

1.1 Basic concepts

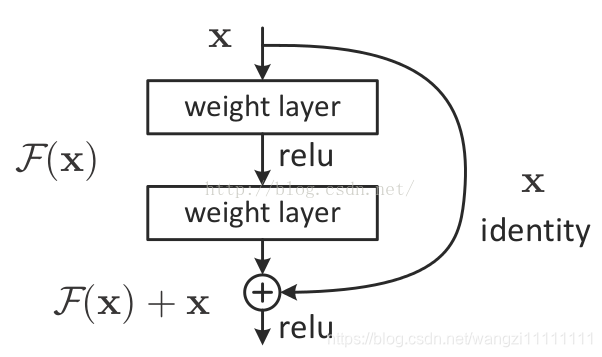

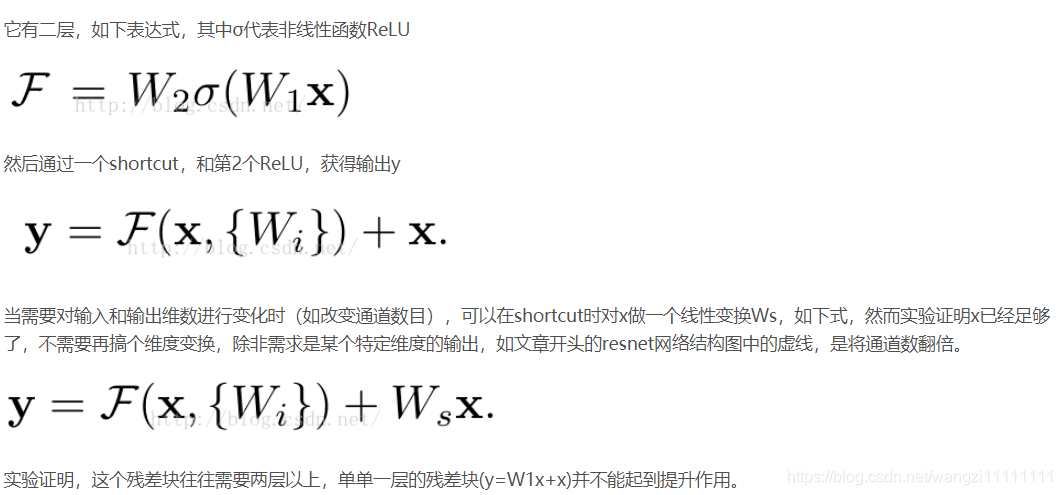

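Very deep neural networks are hard to train because of vanishing and exploding gradients. ResNets are built from residual blocks, so we first explain what a residual block is.

Residual block structure: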

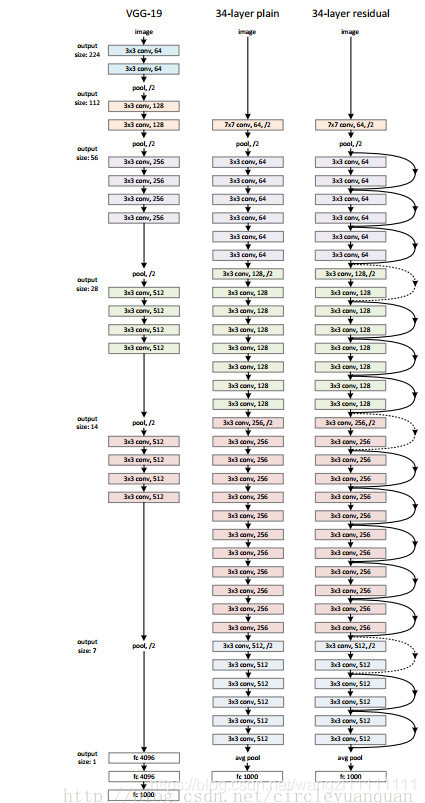
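In the notation commonly used for this kind of diagram (an assumption here, since the figure is not reproduced as text), let $a^{[l]}$ be the activation entering the block and $g$ the ReLU. A two-layer residual block can then be sketched as:

$$
\begin{aligned}
z^{[l+1]} &= W^{[l+1]} a^{[l]} + b^{[l+1]}, & a^{[l+1]} &= g\left(z^{[l+1]}\right) \\
z^{[l+2]} &= W^{[l+2]} a^{[l+1]} + b^{[l+2]}, & a^{[l+2]} &= g\left(z^{[l+2]} + a^{[l]}\right)
\end{aligned}
$$

The only difference from a plain two-layer stack is the $a^{[l]}$ term added before the final activation.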

1.2 VGG19 and ResNet34 architecture diagrams

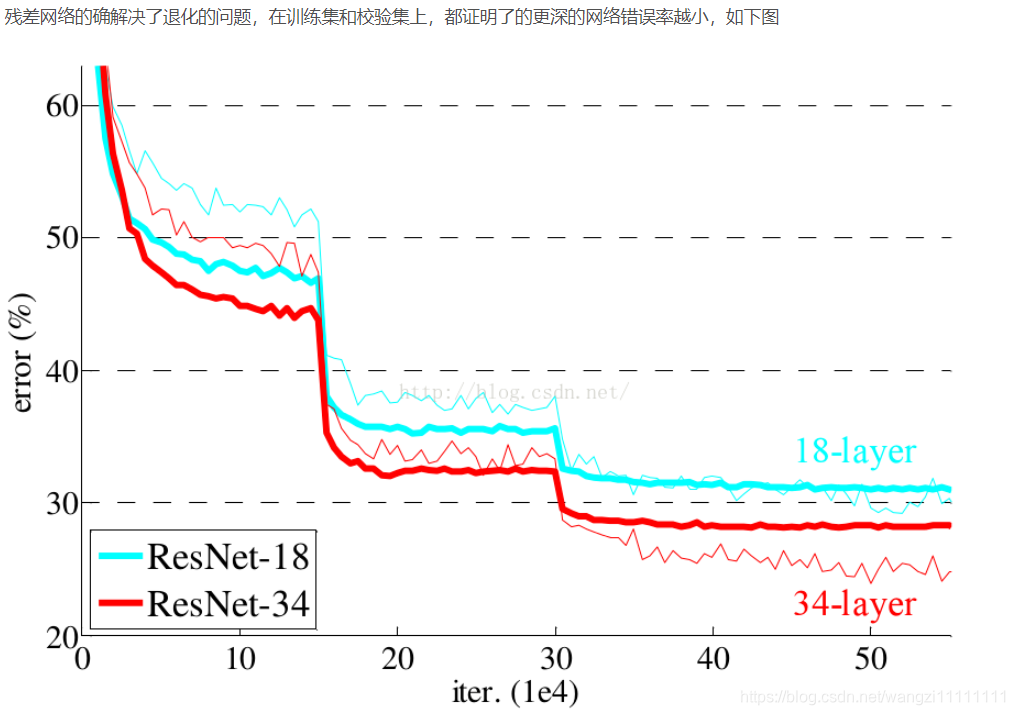

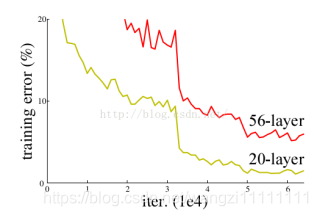

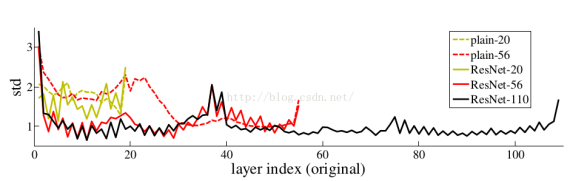

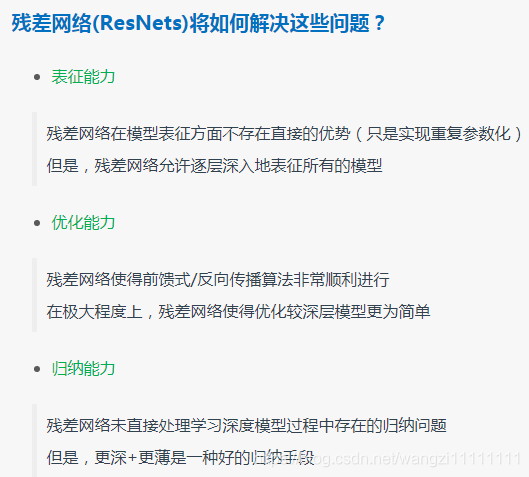

1.3 Vanishing gradients and network degradation

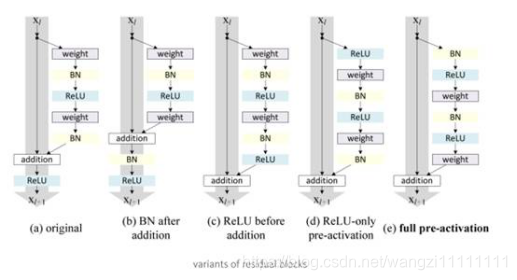

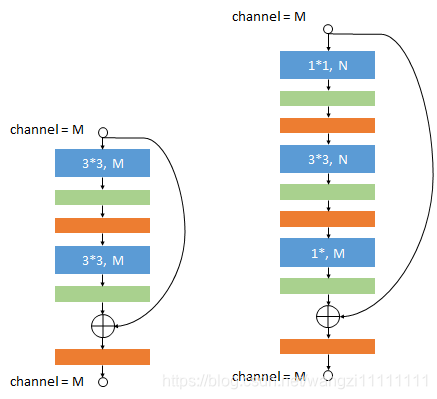

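1.4 Residual block variants

Since AlexNet, state-of-the-art CNN architectures have kept getting deeper. VGG and GoogLeNet have 19 and 22 layers respectively, whereas AlexNet has only 5 convolutional layers.

The basic idea of ResNet: introduce shortcut connections that skip one or more layers.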
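One way to see why a shortcut helps, sketched for a single block written as $y = x + F(x)$ (symbols introduced here for illustration, and ignoring the activation applied after the addition): by the chain rule, with gradients written as row vectors,

$$
\frac{\partial L}{\partial x} = \frac{\partial L}{\partial y}\left(I + \frac{\partial F}{\partial x}\right)
$$

The identity term $I$ passes the upstream gradient through unchanged, so the signal reaching earlier layers does not depend solely on $\partial F / \partial x$, which may be very small.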

1.5 ResNet model variants

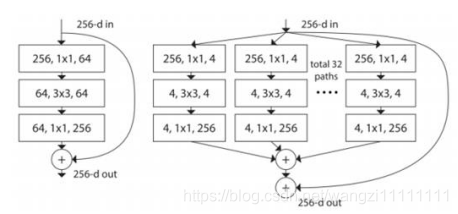

(1)ResNeXt

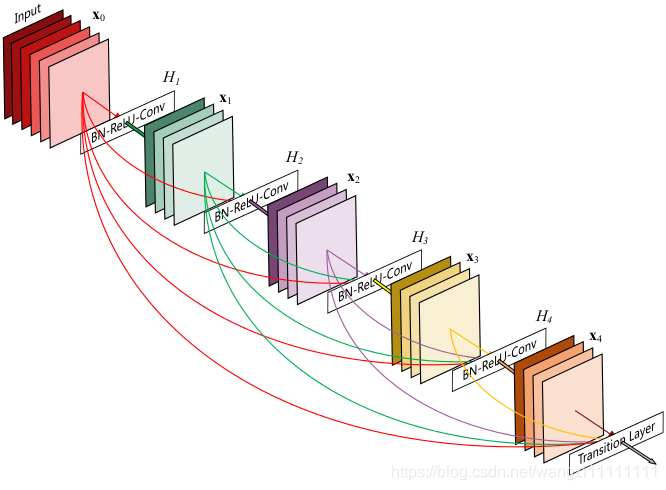

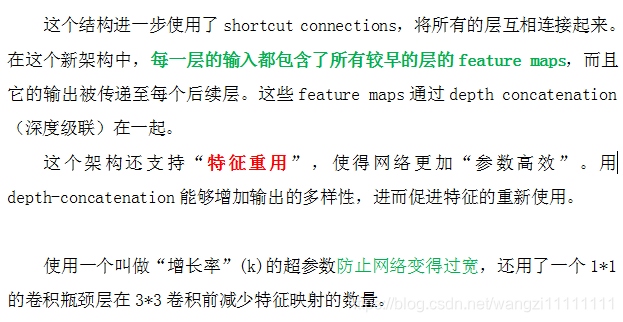

(2)DenseNet

1.6 Residual Network: additional notes

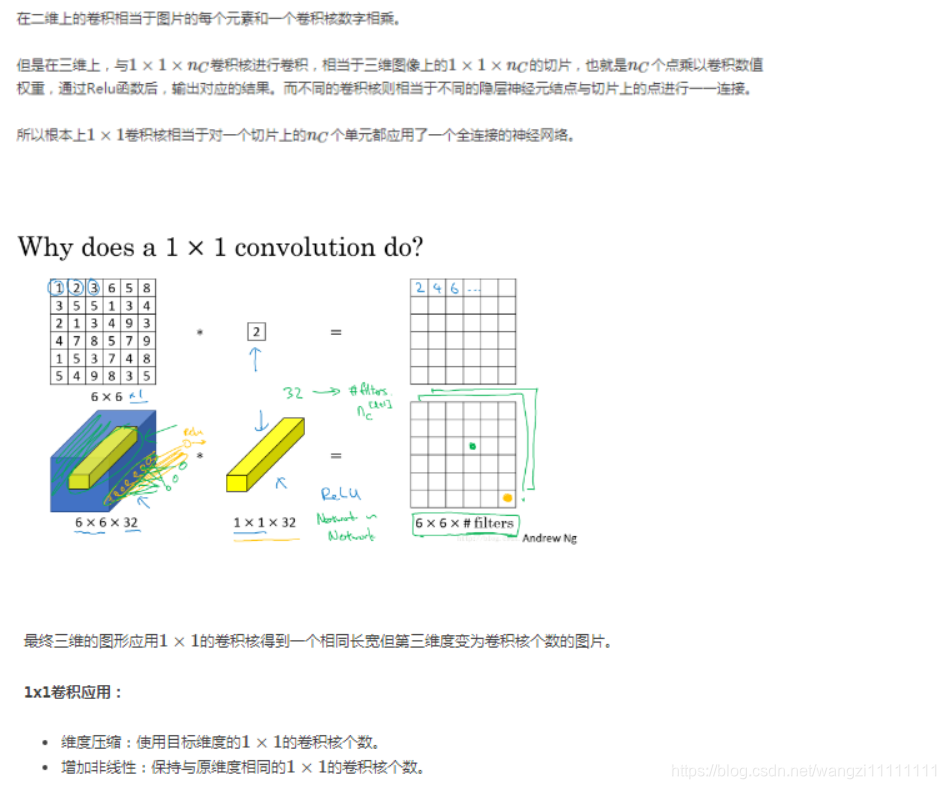

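1.7 1×1 convolution kernels (supplement)

A 1×1 convolution kernel is equivalent to applying a fully connected network to the n_c units (channels) of one slice, that is, at each spatial position of the feature map.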
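To make this concrete, here is a minimal pure-Python sketch. The function names `dense` and `conv1x1` and the toy numbers are illustrative assumptions, not part of the original post. It applies the same small fully connected layer to the n_c channel values at every spatial position, which is what a 1×1 convolution computes.

```python
def dense(vec, weights, bias):
    """Fully connected layer: weights has shape n_c x n_out, bias has length n_out."""
    return [
        sum(vec[c] * weights[c][k] for c in range(len(vec))) + bias[k]
        for k in range(len(bias))
    ]


def conv1x1(feature_map, weights, bias):
    """1x1 convolution over an H x W x n_c nested list: one dense layer per pixel."""
    return [[dense(pixel, weights, bias) for pixel in row] for row in feature_map]


# Toy 2 x 2 feature map with n_c = 3 channels, mapped to 2 output channels.
feature_map = [[[1, 2, 3], [0, 1, 0]],
               [[2, 0, 1], [1, 1, 1]]]
weights = [[1, 0], [0, 1], [1, 1]]   # n_c x n_out
bias = [0, 1]

output = conv1x1(feature_map, weights, bias)
print(output)
```

The spatial size (2 × 2) is unchanged; only the channel count goes from 3 to 2, which is why 1×1 convolutions are used in bottleneck blocks to shrink and restore the channel dimension.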

2. Introduction to residual networks (Kaiming He)

3. ResNet-50 (Ng)

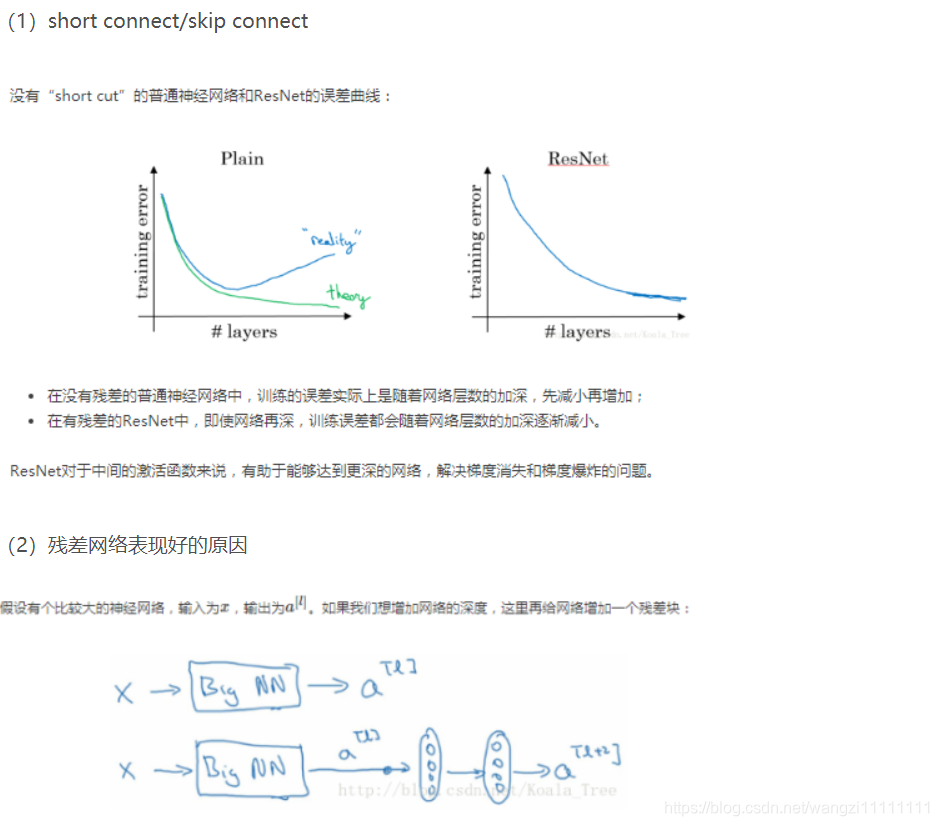

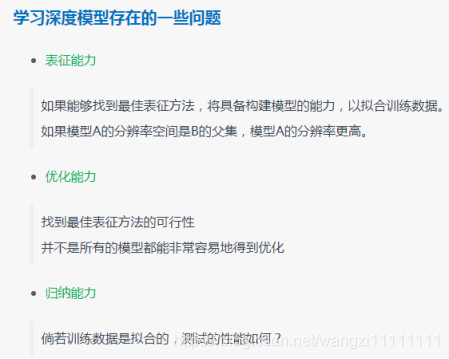

3.1 The problem with very deep neural networks

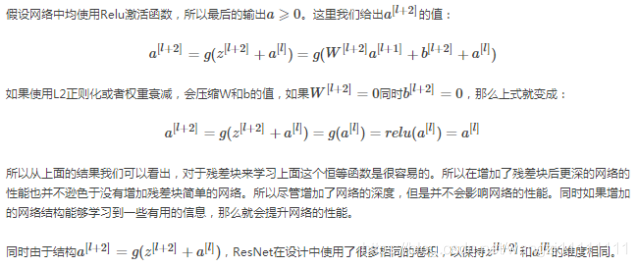

3.2 Building a residual network

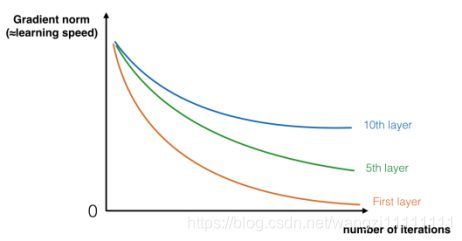

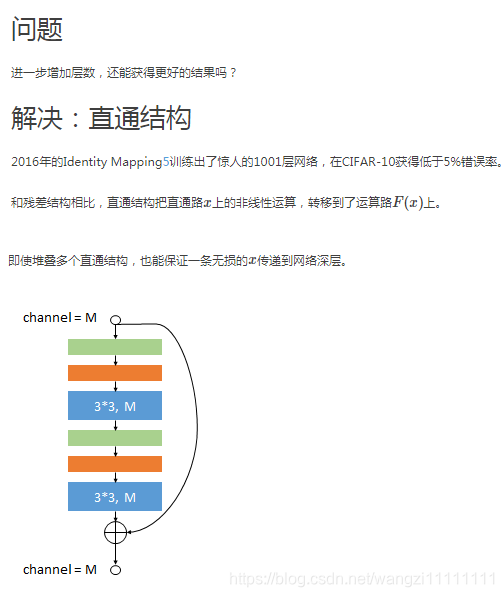

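A residual block with a shortcut connection can easily learn the identity function. Stacking further ResNet blocks on top therefore does not hurt performance on the training set.

(1)identity block (standard block)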
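The identity claim can be checked with a small pure-Python sketch. It uses dense layers instead of convolutions, and all names and numbers are assumptions made for illustration. When the weights and biases of the block are all zero, the shortcut version returns its (non-negative) input unchanged, while the same block without a shortcut returns zeros.

```python
def relu(v):
    return [max(0.0, x) for x in v]


def linear(v, weights, bias):
    # weights[j][i] is the weight from input i to output j
    return [sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weights, bias)]


def residual_block(a, w1, b1, w2, b2, use_shortcut=True):
    """Two dense layers; with use_shortcut the input is added before the last ReLU."""
    hidden = relu(linear(a, w1, b1))
    z = linear(hidden, w2, b2)
    if use_shortcut:
        z = [zi + ai for zi, ai in zip(z, a)]
    return relu(z)


a = [0.5, 2.0, 0.0]                      # non-negative, like the output of an earlier ReLU
zero_w = [[0.0] * 3 for _ in range(3)]   # all-zero weights
zero_b = [0.0] * 3                       # all-zero biases

with_shortcut = residual_block(a, zero_w, zero_b, zero_w, zero_b)
without_shortcut = residual_block(a, zero_w, zero_b, zero_w, zero_b, use_shortcut=False)
print(with_shortcut, without_shortcut)
```

Driving weights toward zero is easy for an optimizer (weight decay does it by default), so an extra residual block can fall back to the identity mapping when it has nothing useful to add.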

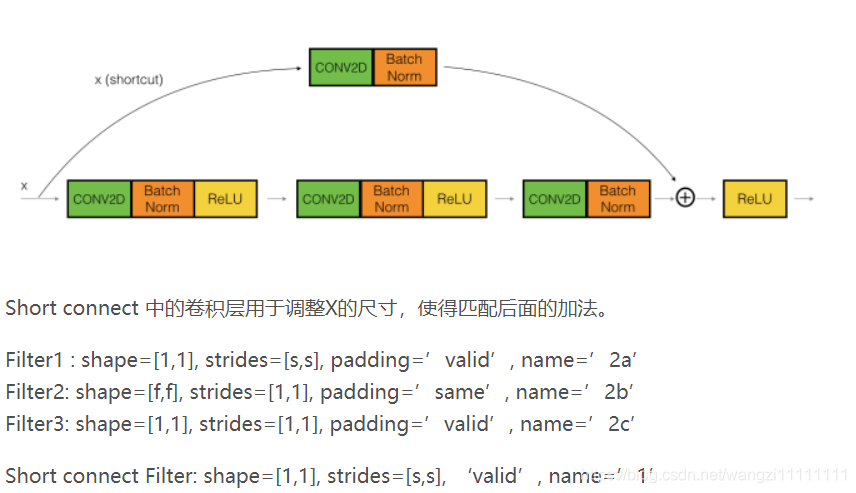

(2)convolutional block

Used when the input and output dimensions do not match.

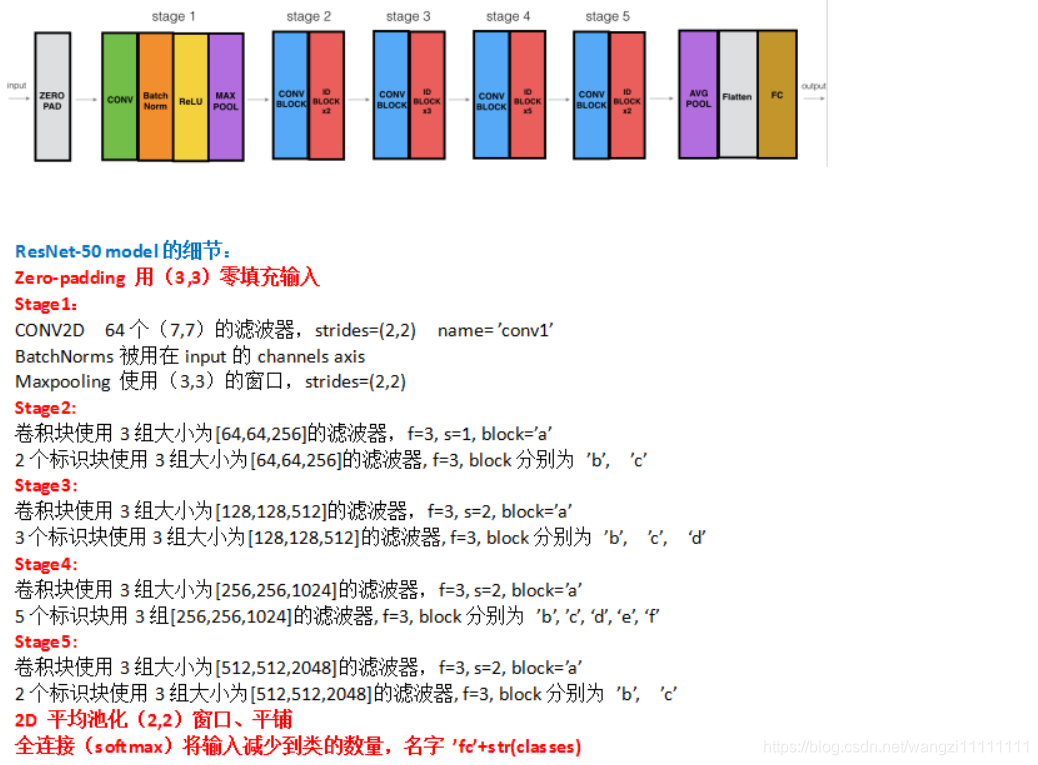

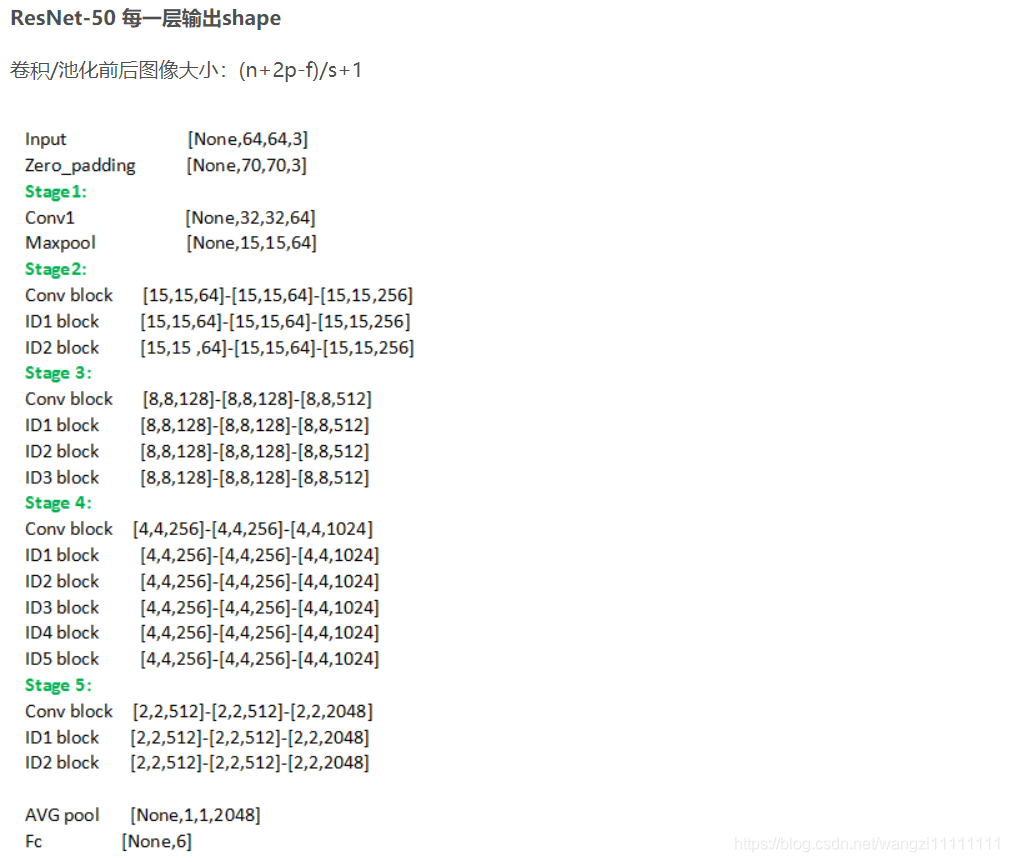
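The shape mismatch can be worked through with the standard convolution output-size formula, floor((n + 2p - f) / s) + 1. The sketch below is illustrative only: the helper names are hypothetical, and it assumes an odd kernel size f for the 'same'-padded layer. It shows that a 1×1 convolution with stride s on the shortcut produces the same shape as the main path, so the two can be added.

```python
def conv_output_size(n, f, s, pad):
    """Spatial output size of a convolution: floor((n + 2*pad - f) / s) + 1."""
    return (n + 2 * pad - f) // s + 1


def same_pad(f):
    """Padding that keeps the size unchanged for stride 1 (assumes odd f)."""
    return (f - 1) // 2


def conv_block_shapes(n, f, filters, s):
    """Return (main_path_shape, shortcut_shape) for an n x n input."""
    _, _, F3 = filters
    n1 = conv_output_size(n, 1, s, 0)             # 1x1 conv, stride s, 'valid'
    n2 = conv_output_size(n1, f, 1, same_pad(f))  # f x f conv, stride 1, 'same'
    n3 = conv_output_size(n2, 1, 1, 0)            # 1x1 conv, stride 1, 'valid'
    n_sc = conv_output_size(n, 1, s, 0)           # shortcut: 1x1 conv, stride s
    return (n3, n3, F3), (n_sc, n_sc, F3)


# A 15 x 15 x 256 input entering a block with filters [128, 128, 512] and s = 2.
main_shape, shortcut_shape = conv_block_shapes(15, 3, [128, 128, 512], 2)
print(main_shape, shortcut_shape)
```

Without the convolution on the shortcut, the skipped input would still be 15 × 15 × 256 and could not be added to the 8 × 8 × 512 main-path output.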

3.3 First ResNet model (50 layers)

3.4 Code implementation

```python
# The original listing omits its imports; the ones below assume TensorFlow's bundled Keras.
from tensorflow.keras.initializers import glorot_uniform
from tensorflow.keras.layers import (
    Activation, Add, AveragePooling2D, BatchNormalization, Conv2D,
    Dense, Flatten, Input, MaxPooling2D, ZeroPadding2D,
)
from tensorflow.keras.models import Model


def identity_block(X, f, filters, stage, block):
    """
    X -- input tensor, shape=(m, n_H_prev, n_W_prev, n_C_prev)
    f -- kernel size of the middle conv layer on the main path
    filters -- [F1, F2, F3], filter counts of the three conv layers on the main path
    stage -- used for layer names: the stage (a number: 1/2/3/4/5...)
    block -- used for layer names: the residual block within the stage (a letter: a/b/c/d/e/f...)
    Return X -- output tensor of the identity block, shape=(m, n_H, n_W, n_C)
    """
    # define name basis
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'

    # get filter number
    F1, F2, F3 = filters

    # save the input value
    X_shortcut = X

    # first layer of the main path: conv-bn-relu
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    # second layer of the main path: conv-bn-relu
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same',
               name=conv_name_base + '2b', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # third layer of the main path: conv-bn
    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2c', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # add shortcut and relu
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)
    return X


def convolutional_block(X, f, filters, stage, block, s=2):
    """
    Same arguments as identity_block, plus:
    s -- stride of the first conv layer on the main path and of the shortcut
         conv layer; s=2 reduces the spatial size of the feature map
    """
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'
    F1, F2, F3 = filters
    X_shortcut = X

    # 1st layer
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(s, s), padding='valid',
               name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    # 2nd layer
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same',
               name=conv_name_base + '2b', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # 3rd layer
    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2c', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # shortcut path: 1x1 conv so its output shape matches the main path
    X_shortcut = Conv2D(filters=F3, kernel_size=(1, 1), strides=(s, s), padding='valid',
                        name=conv_name_base + '1', kernel_initializer=glorot_uniform(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis=3, name=bn_name_base + '1')(X_shortcut)

    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)
    return X
```

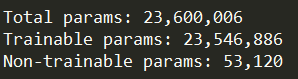

```python
def ResNet50(input_shape=(64, 64, 3), classes=6):
    """
    input - zero padding
    stage1: conv - BatchNorm - relu - maxpool
    stage2: conv block - identity block *2
    stage3: conv block - identity block *3
    stage4: conv block - identity block *5
    stage5: conv block - identity block *2
    avgpool - flatten - fully connect

    input_shape -- shape of the input image
    classes -- number of classes
    Return: a Model() instance in Keras
    """
    # define an input tensor with input_shape
    X_input = Input(input_shape)

    # zero padding
    X = ZeroPadding2D((3, 3))(X_input)

    # stage 1
    X = Conv2D(filters=64, kernel_size=(7, 7), strides=(2, 2), padding='valid',
               name='conv1', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name='bn_conv1')(X)
    X = Activation('relu')(X)  # missing in the original listing, although its docstring lists it
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # stage 2
    X = convolutional_block(X, f=3, filters=[64, 64, 256], stage=2, block='a', s=1)
    X = identity_block(X, f=3, filters=[64, 64, 256], stage=2, block='b')
    X = identity_block(X, f=3, filters=[64, 64, 256], stage=2, block='c')

    # stage 3
    X = convolutional_block(X, f=3, filters=[128, 128, 512], stage=3, block='a', s=2)
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='b')
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='c')
    X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block='d')

    # stage 4
    X = convolutional_block(X, f=3, filters=[256, 256, 1024], stage=4, block='a', s=2)
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='b')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='c')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='d')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='e')
    X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block='f')

    # stage 5
    X = convolutional_block(X, f=3, filters=[512, 512, 2048], stage=5, block='a', s=2)
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='b')
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='c')

    # fully connect
    X = AveragePooling2D((2, 2), name='avg_pool')(X)
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', name='fc' + str(classes),
              kernel_initializer=glorot_uniform(seed=0))(X)

    # a Model() instance in Keras
    model = Model(inputs=X_input, outputs=X, name='ResNet50')
    return model
```
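To see why a 2×2 average pool is enough at the end, the feature-map sizes can be traced by hand. The sketch below is a hypothetical helper, not part of the original code. It assumes the default 64×64×3 input and Keras's default 'valid' padding for both pooling layers, with the average pool's stride defaulting to its pool size.

```python
def out_size(n, f, s, pad=0):
    """Output size of a conv or pooling layer: floor((n + 2*pad - f) / s) + 1."""
    return (n + 2 * pad - f) // s + 1


def resnet50_shape_trace(n=64):
    """Return a list of (layer name, output shape) for an n x n x 3 input."""
    trace = []
    n = n + 2 * 3                    # ZeroPadding2D((3, 3)) adds 3 pixels per side
    n = out_size(n, f=7, s=2)        # 7x7 conv, stride 2
    trace.append(("conv1", (n, n, 64)))
    n = out_size(n, f=3, s=2)        # 3x3 max pool, stride 2
    trace.append(("maxpool", (n, n, 64)))
    # Per stage: (stride of the convolutional block, output channels F3).
    stages = [(1, 256), (2, 512), (2, 1024), (2, 2048)]
    for stage, (s, channels) in enumerate(stages, start=2):
        n = out_size(n, f=1, s=s)    # only the strided 1x1 conv changes the spatial size
        trace.append((f"stage{stage}", (n, n, channels)))
    n = out_size(n, f=2, s=2)        # 2x2 average pool
    trace.append(("avg_pool", (n, n, 2048)))
    trace.append(("flatten", (n * n * 2048,)))
    return trace


for name, shape in resnet50_shape_trace():
    print(name, shape)
```

With a different input size the stage 5 output is no longer 2×2, so the pool size (or a global average pool) would need to be chosen accordingly.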

4. ResNet with 100-1000 layers

4.1 152 layers

Code

```python
def ResNet101(input_shape=(64, 64, 3), classes=6):
    """
    input - zero padding
    stage1: conv - BatchNorm - relu - maxpool
    stage2: conv block - identity block *2
    stage3: conv block - identity block *3
    stage4: conv block - identity block *22
    stage5: conv block - identity block *2
    avgpool - flatten - fully connect

    input_shape -- shape of the input image
    classes -- number of classes
    Return: a Model() instance in Keras
    """
    # Note: the filter counts below ([4,4,16] ... [32,32,128]) are much narrower than
    # in the ResNet50 listing; the standard ResNet-101 uses the same widths as
    # ResNet-50 ([64,64,256] ... [512,512,2048]).

    # define an input tensor with input_shape
    X_input = Input(input_shape)

    # zero padding
    X = ZeroPadding2D((3, 3))(X_input)

    # stage 1
    X = Conv2D(filters=64, kernel_size=(7, 7), strides=(2, 2), padding='valid',
               name='conv1', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name='bn_conv1')(X)
    X = Activation('relu')(X)  # missing in the original listing, although its docstring lists it
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # stage 2
    X = convolutional_block(X, f=3, filters=[4, 4, 16], stage=2, block='a', s=1)
    X = identity_block(X, f=3, filters=[4, 4, 16], stage=2, block='b')
    X = identity_block(X, f=3, filters=[4, 4, 16], stage=2, block='c')

    # stage 3
    X = convolutional_block(X, f=3, filters=[8, 8, 32], stage=3, block='a', s=2)
    X = identity_block(X, f=3, filters=[8, 8, 32], stage=3, block='b')
    X = identity_block(X, f=3, filters=[8, 8, 32], stage=3, block='c')
    X = identity_block(X, f=3, filters=[8, 8, 32], stage=3, block='d')

    # stage 4: identity blocks go from 5 (50 layers) to 22 (101 layers)
    X = convolutional_block(X, f=3, filters=[16, 16, 64], stage=4, block='a', s=2)
    for block in 'bcdefghijklmnopqrstuvw':  # 22 identity blocks, same names as the unrolled original
        X = identity_block(X, f=3, filters=[16, 16, 64], stage=4, block=block)

    # stage 5
    X = convolutional_block(X, f=3, filters=[32, 32, 128], stage=5, block='a', s=2)
    X = identity_block(X, f=3, filters=[32, 32, 128], stage=5, block='b')
    X = identity_block(X, f=3, filters=[32, 32, 128], stage=5, block='c')

    # fully connect
    X = AveragePooling2D((2, 2), name='avg_pool')(X)
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', name='fc' + str(classes),
              kernel_initializer=glorot_uniform(seed=0))(X)

    # a Model() instance in Keras
    model = Model(inputs=X_input, outputs=X, name='ResNet101')
    return model
```

```python
def ResNet152(input_shape=(64, 64, 3), classes=6):
    """
    input - zero padding
    stage1: conv - BatchNorm - relu - maxpool
    stage2: conv block - identity block *2
    stage3: conv block - identity block *7
    stage4: conv block - identity block *35
    stage5: conv block - identity block *2
    avgpool - flatten - fully connect

    input_shape -- shape of the input image
    classes -- number of classes
    Return: a Model() instance in Keras
    """
    # define an input tensor with input_shape
    X_input = Input(input_shape)

    # zero padding
    X = ZeroPadding2D((3, 3))(X_input)

    # stage 1
    X = Conv2D(filters=64, kernel_size=(7, 7), strides=(2, 2), padding='valid',
               name='conv1', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name='bn_conv1')(X)
    X = Activation('relu')(X)  # missing in the original listing, although its docstring lists it
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # stage 2
    X = convolutional_block(X, f=3, filters=[64, 64, 256], stage=2, block='a', s=1)
    X = identity_block(X, f=3, filters=[64, 64, 256], stage=2, block='b')
    X = identity_block(X, f=3, filters=[64, 64, 256], stage=2, block='c')

    # stage 3: identity blocks go from 3 (101 layers) to 7 (152 layers)
    X = convolutional_block(X, f=3, filters=[128, 128, 512], stage=3, block='a', s=2)
    for block in 'bcdefgh':  # 7 identity blocks
        X = identity_block(X, f=3, filters=[128, 128, 512], stage=3, block=block)

    # stage 4: identity blocks go from 22 (101 layers) to 35 (152 layers)
    X = convolutional_block(X, f=3, filters=[256, 256, 1024], stage=4, block='a', s=2)
    # 'b'..'z' (25 names) plus 'z1'..'z10' (10 names), as in the unrolled original
    stage4_blocks = list('bcdefghijklmnopqrstuvwxyz') + ['z' + str(i) for i in range(1, 11)]
    for block in stage4_blocks:
        X = identity_block(X, f=3, filters=[256, 256, 1024], stage=4, block=block)

    # stage 5
    X = convolutional_block(X, f=3, filters=[512, 512, 2048], stage=5, block='a', s=2)
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='b')
    X = identity_block(X, f=3, filters=[512, 512, 2048], stage=5, block='c')

    # fully connect
    X = AveragePooling2D((2, 2), name='avg_pool')(X)
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', name='fc' + str(classes),
              kernel_initializer=glorot_uniform(seed=0))(X)

    # a Model() instance in Keras
    model = Model(inputs=X_input, outputs=X, name='ResNet152')
    return model
```
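The names 50, 101 and 152 follow from the block counts in the docstrings. As a rough check (a hypothetical helper using the usual counting convention, which is an assumption here): count the first 7×7 convolution, the three convolutions in each residual block, and the final fully connected layer, leaving out the projection convolutions on the shortcuts.

```python
def resnet_depth(identity_blocks_per_stage, convs_per_block=3):
    """Weighted-layer count: first conv + convs in all residual blocks + final dense layer."""
    # Each stage is one convolutional block followed by its identity blocks.
    blocks = sum(1 + n for n in identity_blocks_per_stage)
    return 1 + convs_per_block * blocks + 1


# Identity-block counts for stages 2-5, taken from the three docstrings.
depths = {
    "ResNet50": resnet_depth([2, 3, 5, 2]),
    "ResNet101": resnet_depth([2, 3, 22, 2]),
    "ResNet152": resnet_depth([2, 7, 35, 2]),
}
print(depths)
```

The three models differ only in how many identity blocks are stacked in stages 3 and 4, so the per-stage counts are the only thing that needs to change between them.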
