Known Operator Learning: Part 4

FAU Lecture Notes on Deep Learning

These are the lecture notes for FAU’s YouTube Lecture “Deep Learning”. This is a full transcript of the lecture video and matching slides. We hope you enjoy this as much as the videos. Of course, this transcript was created largely automatically with deep learning techniques, and only minor manual modifications were performed. Try it yourself! If you spot mistakes, please let us know!

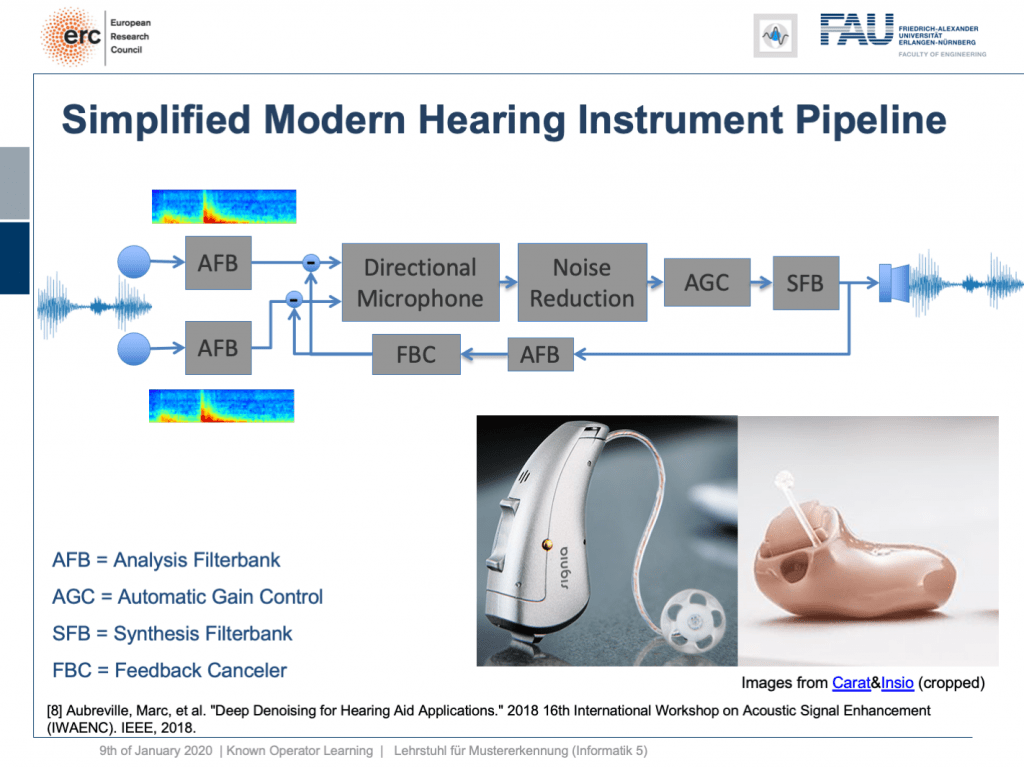

Welcome back to deep learning! This is it. This is the final lecture. So today, I want to show you a couple more applications of this known operator paradigm and also some ideas about where I believe future research could go. So, let’s see what I have here for you. Well, one thing that I would like to demonstrate is a simplified modern hearing aid pipeline. This is a collaboration with a company that produces hearing aids, and they typically have a signal processing pipeline with two microphones that collect the speech signals. The signals are run through an analysis filter bank, which is essentially a short-time Fourier transform. The result is then run through a directional microphone stage in order to focus on things that are in front of you. Then, you use noise reduction in order to get better intelligibility for the person who is wearing the hearing aid. This is followed by an automatic gain control, and after the gain control you synthesize the frequency representation back into a speech signal that is played back on a loudspeaker within the hearing aid. There’s also a recurrent connection because you want to suppress feedback loops. You can find this kind of pipeline in modern hearing aids from various manufacturers. Here, you see some examples. The key problem in all of this processing is the noise reduction. This is the difficult part. All the other things, we know how to address with traditional signal processing. But the noise reduction is a huge problem.

So, what can we do? Well, we can map this entire hearing aid pipeline onto a deep network, more precisely onto a deep recurrent network, and all of those steps can be expressed in terms of differentiable operations.

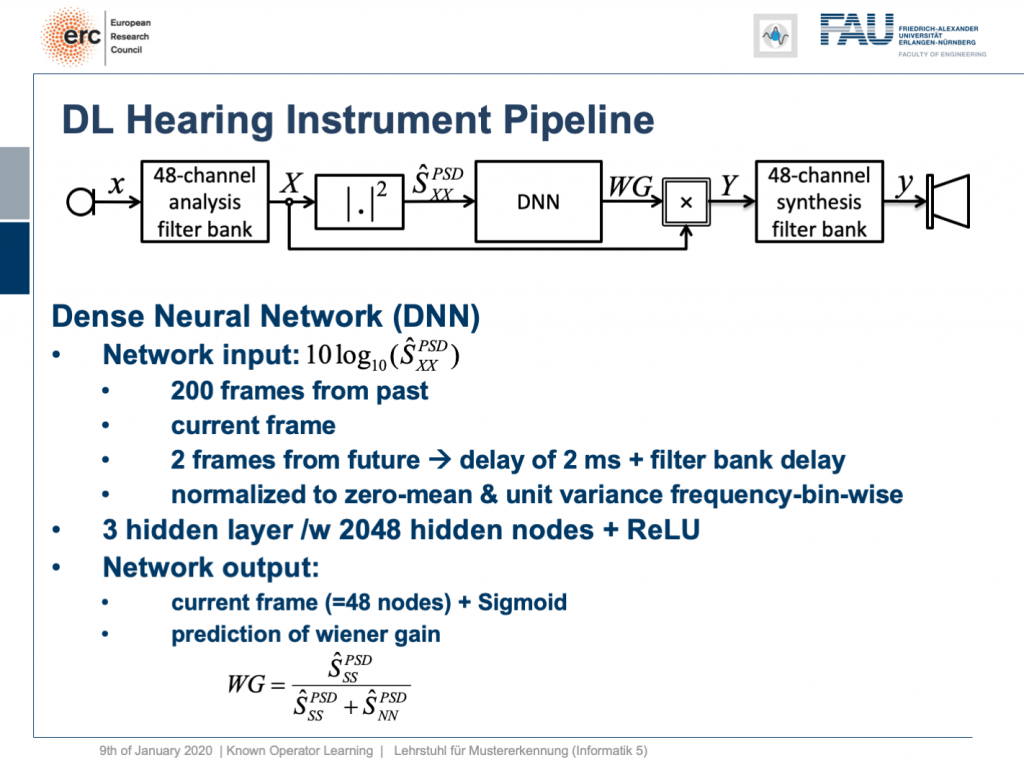

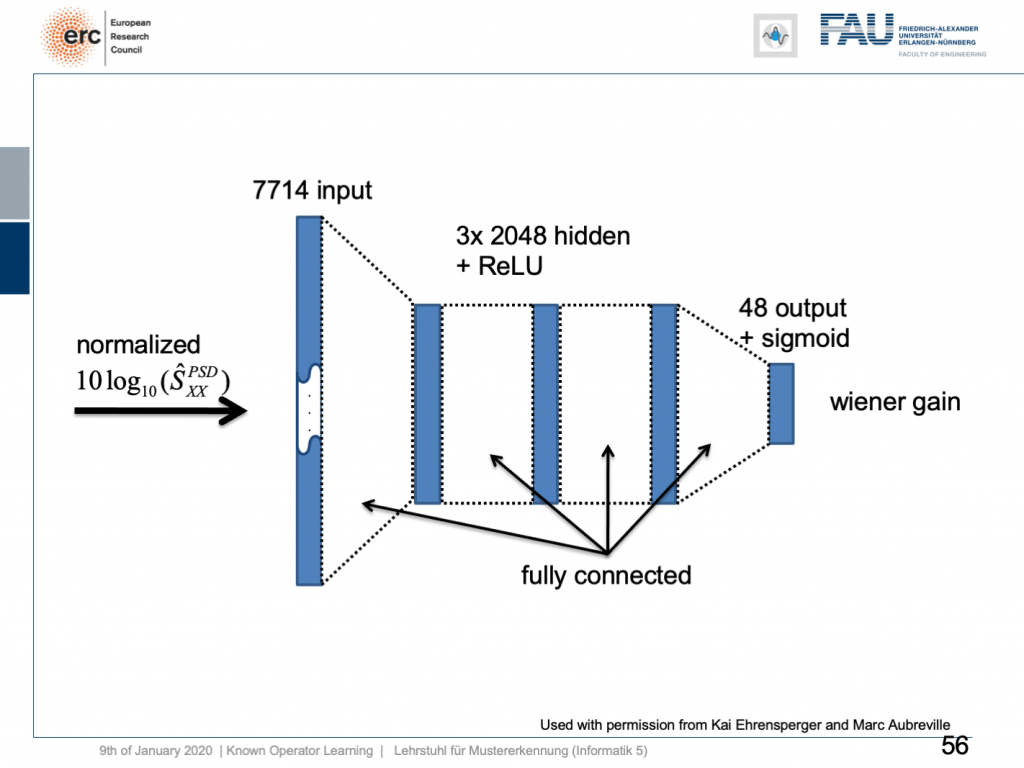

If we do so, we set up the following outline. Actually, our network here is not so deep because we only have three hidden layers, but with 2024 hidden nodes and ReLUs. This is then used to predict the coefficients of a Wiener filter gain in order to suppress channels that have particular noises. So, this is the setup. We have an input of 7,714 nodes from our normalized spectrum. Then, this is run through three hidden layers. They are fully connected with ReLUs, and in the end, we have an output of 48 channels produced by a sigmoid, which gives us our Wiener gain.
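
The topology described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the layer sizes in the demo are scaled down so it runs instantly (the lecture quotes 7,714 inputs, 2024 hidden nodes, and 48 outputs), the same width is assumed for all three hidden layers, the weights are random rather than trained, and all function names are hypothetical.

```python
import math
import random

def init_layer(n_in, n_out, rng):
    """Random weights and zero biases for one fully connected layer."""
    scale = math.sqrt(2.0 / n_in)  # He-style initialisation (an assumption)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def dense(x, layer):
    """Affine map: one weighted sum plus bias per output node."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]

def relu(x):
    return [max(0.0, v) for v in x]

def sigmoid(x):
    return [1.0 / (1.0 + math.exp(-v)) for v in x]

def build_gain_net(n_in, n_hidden, n_out, rng):
    """Three hidden layers of equal width followed by the output layer."""
    sizes = [n_in, n_hidden, n_hidden, n_hidden, n_out]
    return [init_layer(a, b, rng) for a, b in zip(sizes[:-1], sizes[1:])]

def predict_gains(net, spectrum):
    """ReLU on the hidden layers, sigmoid on the output so each gain lies in (0, 1)."""
    h = spectrum
    for layer in net[:-1]:
        h = relu(dense(h, layer))
    return sigmoid(dense(h, net[-1]))

rng = random.Random(0)
# Scaled-down demo sizes; the lecture's sizes would be 7714, 2024, 48.
net = build_gain_net(n_in=64, n_hidden=32, n_out=48, rng=rng)
spectrum = [rng.random() for _ in range(64)]   # stand-in for a normalized spectrum
gains = predict_gains(net, spectrum)

# A gain close to 0 suppresses a channel, a gain close to 1 keeps it.
channel_magnitudes = [1.0] * 48                # stand-in for the 48 channel signals
denoised = [g * m for g, m in zip(gains, channel_magnitudes)]
```

The sigmoid on the output is what makes the 48 values usable as gains: each one can only attenuate its channel, never amplify it.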

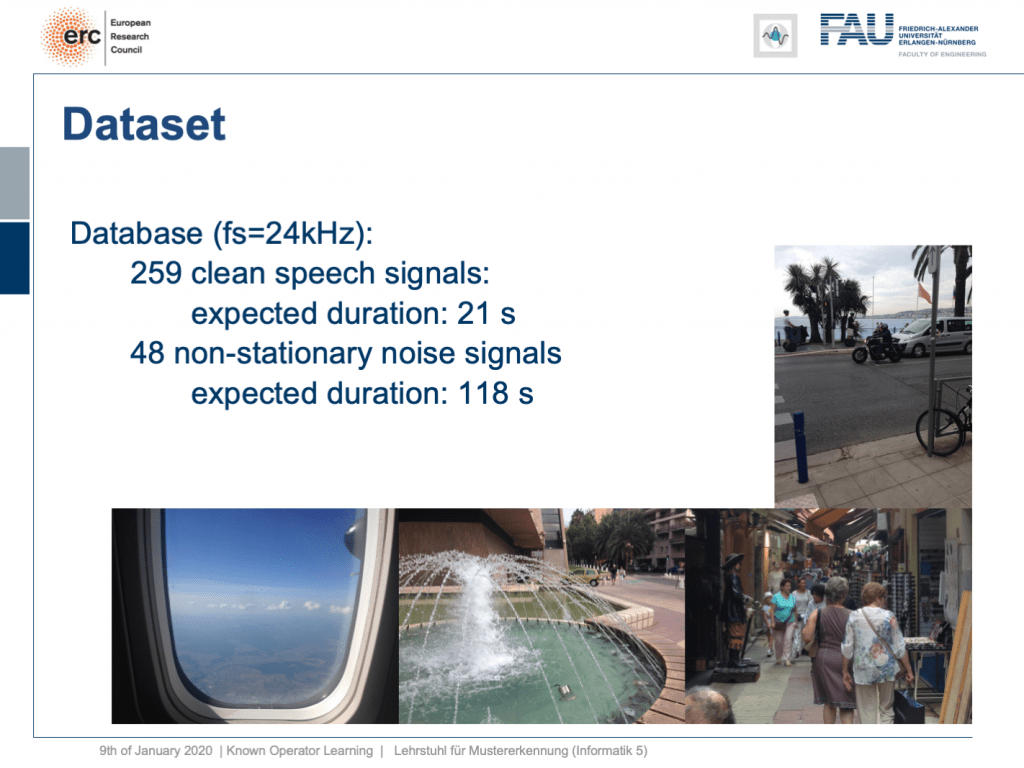

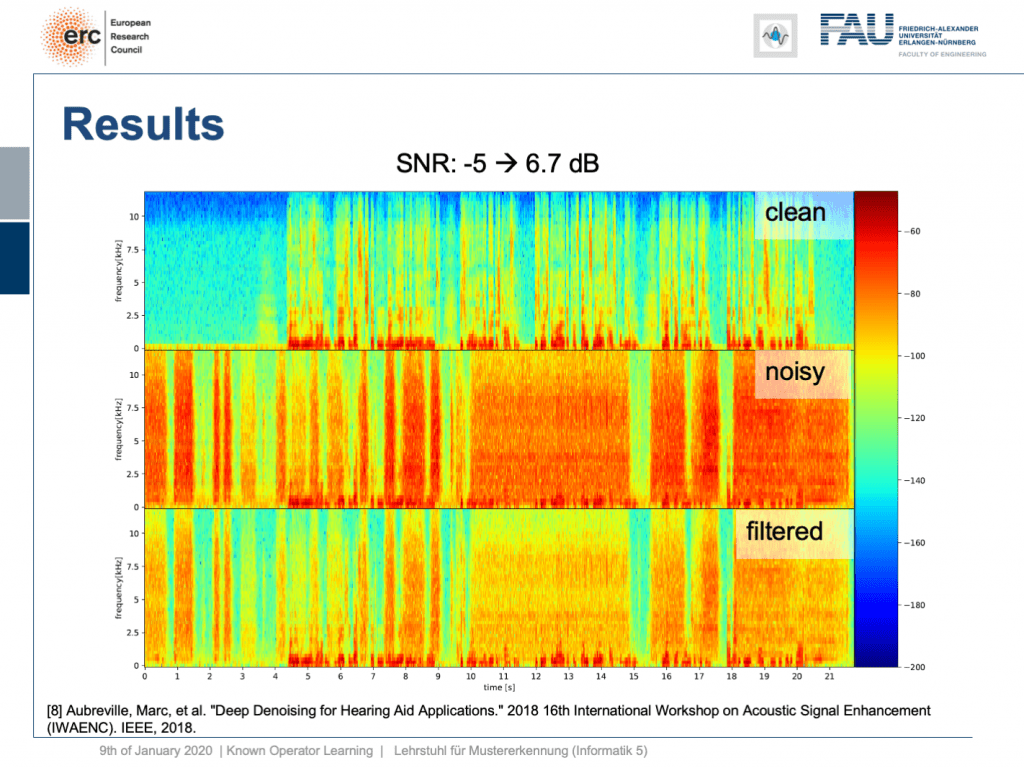

We evaluated this on some data set and here we had 259 clean speech signals. We then essentially had 48 non-stationary noise signals and we mixed them. So, you could argue what we’re essentially training here is a kind of recurrent autoencoder. Actually, a denoising autoencoder because as input we take the clean speech signal plus the noise and on the output, we want to produce the clean speech signal. Now, this is the example.
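
A denoising training pair of this kind could be built as sketched below. The transcript only says that clean speech and noise were mixed; mixing at a chosen signal-to-noise ratio, the function names, and the synthetic stand-in signals are assumptions made for illustration.

```python
import math
import random

def power(signal):
    """Mean power of a sampled signal."""
    return sum(s * s for s in signal) / len(signal)

def mix_at_snr(clean, noise, snr_db):
    """Scale the noise so the clean-to-noise power ratio equals snr_db, then add it."""
    target_noise_power = power(clean) / (10.0 ** (snr_db / 10.0))
    scale = math.sqrt(target_noise_power / power(noise))
    return [c + scale * n for c, n in zip(clean, noise)]

rng = random.Random(0)
# Synthetic stand-ins: a sine instead of speech, white noise instead of a recording.
clean = [math.sin(2.0 * math.pi * 5.0 * t / 200.0) for t in range(200)]
noise = [rng.gauss(0.0, 1.0) for _ in range(200)]

noisy = mix_at_snr(clean, noise, snr_db=5.0)
training_pair = (noisy, clean)  # (network input, training target)
```

The network only ever sees `noisy` as input and is penalized for deviating from `clean`, which is what makes it a denoising autoencoder.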

Let’s try a non-stationary noise pattern, and this is an electric drill. Also, note that the network has never heard an electric drill before. This typically kills your hearing aid, so let’s listen to the output. You can hear that the non-stationary noise is also very well suppressed. Wow. So, that’s pretty cool. Of course, there are many more applications of this.

Let’s look into one more idea. Can we derive networks? So here, let’s say you have a scenario where you collect data in a format that you don’t like, but you know the formal equation between the data and the projection.

So, the example that I’m showing here is a cone-beam acquisition. This is simply a typical X-ray geometry. So, you take an X-ray and this is typically conducted in cone-beam geometry. Now, for the cone-beam geometry, we can describe it entirely using this linear operator as we’ve already seen in the previous video. So, we can express the relation between the object $x$, our geometry $A_{CB}$, and our projection $p_{CB}$. Now, the cone-beam acquisition is not so great because you have magnification in there. If you have something close to the source, it will be magnified more than an object closer to the detector. So, this is not so great for diagnosis. In orthopedics, they would prefer parallel projections because if you have something, it will be orthogonally projected and it’s not magnified. This would be really great for diagnosis. You would have metric projections and you can simply measure in the projection and it would have the same size as inside the body. So, this would be really nice for diagnosis, but typically we can’t measure it with the systems that we have. In order to create this, you would have to do a full CT scan from all sides, reconstruct the object, and then project it again. Typically in orthopedics, people don’t like slice volumes because they are far too complicated to read. Projection images are much nicer to read. Well, what can we do? We know that the factor that connects the two equations here is $x$. So we can simply solve this equation here and produce the solution with respect to $x$. Once we have $x$, which is the matrix inverse of $A_{CB}$ times $p_{CB}$, we would simply apply it to obtain our projection image. But we are not interested in the reconstruction. We are interested in this projection image here. So, let’s plug it into our equation and then we can see that by applying this series of matrices we can convert our cone-beam projections into a parallel-beam projection $p_{PB}$. There’s no real reconstruction required. Only a kind of intermediate reconstruction is required. Of course, you don’t just acquire a single projection here. You may want to acquire a couple of those projections. Let’s say three or four projections, but not thousands as you would in a CT scan. Now, if you look at this set of equations, we know all of the operations. So, this is pretty cool. But we have this inverse here, and note that this is again a kind of reconstruction problem, an inverse of a large matrix that is sparse to a large extent. So, we still have a problem estimating this guy here. This is very expensive to do, but we are in the world of deep learning and we can just postulate things. So, let’s postulate that this inverse is simply a convolution. Then we can replace it by a Fourier transform, a diagonal matrix $K$, and an inverse Fourier transform. Suddenly, I’m only estimating the parameters of a diagonal matrix, which makes the problem somewhat easier. We can solve it in this domain, and again we can use our trick that we have essentially defined a known operator net topology here. We can simply use it with our neural network methods. We use the backpropagation algorithm in order to optimize this guy here. We just use the other layers as fixed layers. By the way, this could also be realized for nonlinear formulas. So, remember, as soon as we’re able to compute a subgradient, we can plug it into our network. So you can also do very sophisticated things, like including a median filter, for example.
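
Written out, the spoken derivation above might look as follows. The transcript only names $x$, $A_{CB}$, $p_{CB}$, $p_{PB}$, and $K$; the symbol $A_{PB}$ for the parallel-beam operator and $F$ for the Fourier transform are notation introduced here. The transcript speaks of "the matrix inverse"; writing it as a right pseudo-inverse is an assumption, made because a projection operator is in general not square.

```latex
\begin{align}
  p_{CB} &= A_{CB}\, x, \qquad p_{PB} = A_{PB}\, x \\
  x &= A_{CB}^{\top} \left( A_{CB} A_{CB}^{\top} \right)^{-1} p_{CB} \\
  p_{PB} &= A_{PB}\, A_{CB}^{\top} \left( A_{CB} A_{CB}^{\top} \right)^{-1} p_{CB} \\
  \left( A_{CB} A_{CB}^{\top} \right)^{-1} &\approx F^{H} K F
  \quad\Longrightarrow\quad
  p_{PB} \approx A_{PB}\, A_{CB}^{\top}\, F^{H} K F\, p_{CB}
\end{align}
```

In this form $A_{PB}$, $A_{CB}^{\top}$, $F$, and $F^{H}$ are fixed, known layers, and only the entries of the diagonal matrix $K$ remain to be learned.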

Let’s look at an example here. We do the rebinning of MR projections in this case. We will do an acquisition in k-space and these are typically just parallel projections. Now, we’re interested in generating an overlay for X-rays, and for X-rays we need the cone-beam geometry. So, we take a couple of our projections and then rebin them to match the cone-beam geometry exactly. The cool thing here is that we would be able to unite the contrasts from MR and X-ray in a single image. This is not straightforward. If you initialize with just the Ram-Lak filter, what you get is the following thing here. In this plot, you can see the difference between the prediction and the ground truth in green, the ground truth or label is shown in blue, and our prediction is shown in orange. We trained only on geometric primitives here. So, we train with a superposition of cylinders and some Gaussian noise, and so on. There is never anything that even looks faintly like a human in the training data set, but we take this and immediately apply it to an anthropomorphic phantom. This is to show you the generality of the method. We are estimating very few coefficients here. This gives us very nice generalization properties onto things that have never been seen in the training data set. So, let’s see what happens over the iterations. You can see the filter deforms and we are approaching, of course, the correct label image here. The other thing that you see is that this image on the right got dramatically better. If I go ahead with a couple more iterations, you can see we can really get a crisp and sharp image. Obviously, we can look not just into a single filter, but also into individual filters for the different parallel projections.
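
The idea of initializing a diagonal frequency-domain filter with Ram-Lak and then letting training deform it can be tried on a one-dimensional toy problem. Everything below is a sketch under assumptions: the signals are random, the target filter `K_target` (an apodised ramp) is hypothetical and merely stands in for whatever the real labels imply, the fixed geometry layers are left out, and the gradient is written by hand instead of using backpropagation.

```python
import cmath
import math
import random

def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform, adequate for a toy example."""
    n = len(x)
    sign = 1.0 if inverse else -1.0
    out = []
    for k in range(n):
        acc = sum(x[t] * cmath.exp(sign * 2j * math.pi * k * t / n) for t in range(n))
        out.append(acc / n if inverse else acc)
    return out

def ram_lak(n):
    """Ramp |f| sampled on the DFT frequency grid (symmetric, so outputs stay real)."""
    return [min(k, n - k) / n for k in range(n)]

def loss_and_grad(K, spectra):
    """Squared error between filtered inputs and labels, averaged over signals,
    and its gradient with respect to the diagonal entries of K.
    By Parseval's theorem the error can be evaluated directly on the spectra."""
    n = len(K)
    loss, grad = 0.0, [0.0] * n
    for P, T in spectra:
        for k in range(n):
            resid = K[k] * P[k] - T[k]
            loss += abs(resid) ** 2 / n
            grad[k] += 2.0 / n * (P[k].conjugate() * resid).real
    m = len(spectra)
    return loss / m, [g / m for g in grad]

rng = random.Random(0)
n = 16
# Hypothetical target: a ramp damped by a cosine window.
K_target = [r * (0.5 + 0.5 * math.cos(2.0 * math.pi * r)) for r in ram_lak(n)]

spectra = []  # pairs of (input spectrum, label spectrum)
for _ in range(8):
    P = dft([rng.gauss(0.0, 1.0) for _ in range(n)])
    spectra.append((P, [K_target[k] * P[k] for k in range(n)]))

K = ram_lak(n)  # initialize with Ram-Lak
initial_loss, _ = loss_and_grad(K, spectra)
for _ in range(300):  # plain gradient descent on the n filter coefficients
    _, grad = loss_and_grad(K, spectra)
    K = [k - 0.1 * g for k, g in zip(K, grad)]
final_loss, _ = loss_and_grad(K, spectra)

# Applying the learned filter: Fourier transform, diagonal scaling, inverse transform.
P0 = spectra[0][0]
filtered = [v.real for v in dft([K[k] * P0[k] for k in range(n)], inverse=True)]
```

Only `n` coefficients are estimated here, which mirrors the point made in the lecture: with so few free parameters, training on simple data can still generalize.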

We can now also train view-dependent filters. So, this is what you see here. Now, we have a filter for every different view that is acquired. We can still show the difference between the predicted image and the label image and again directly applied to our anthropomorphic phantom. You see also in this case, we get a very good convergence. We train filters and those filters can be united in order to produce very good images of our phantom.

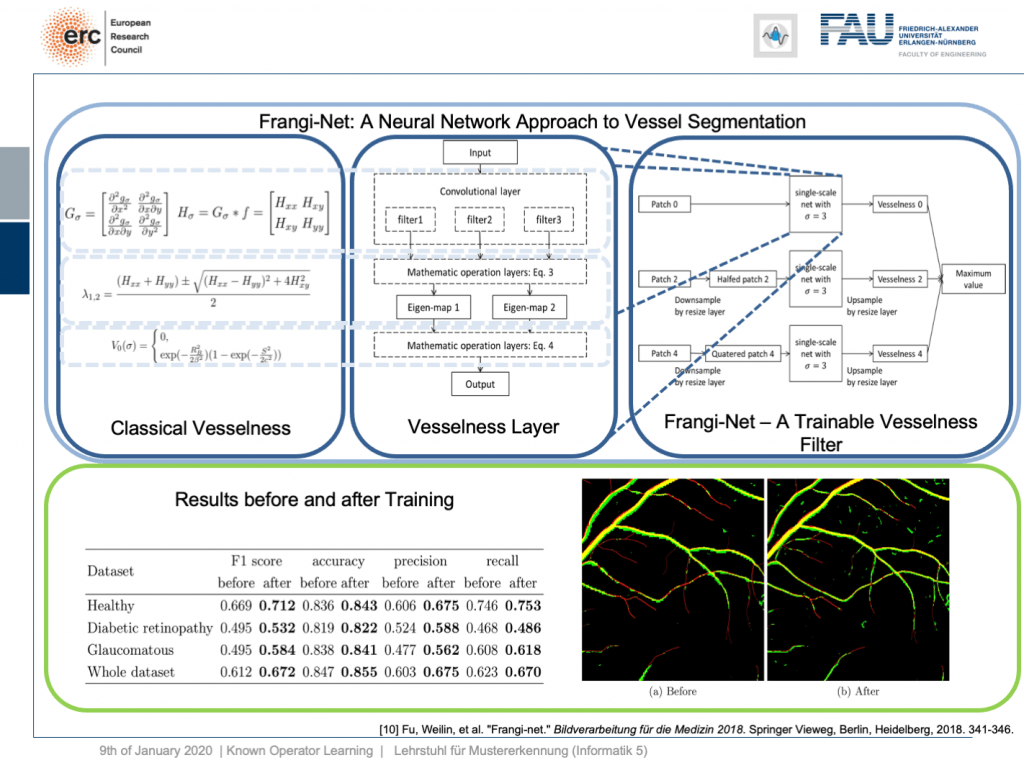

Very well, there are also other things that we can use as a kind of prior knowledge. Here is a work where we essentially took a heuristic method, the so-called vesselness filter that has been proposed by Frangi. You can show that the processing that it does is essentially convolutions. There’s an eigenvalue computation. But if you look at the eigenvalue computation, you can see that this central equation here can also be expressed as a layer, and this way we can map the entire computation of the Frangi filter into a specialized kind of layer. This can then be trained in a multiscale approach and gives you a trainable version of the Frangi filter. Now, if you do so, you can produce vessel segmentations, and they are essentially inspired by the Frangi filter, but because they are trainable they produce much better results.
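
To see why the eigenvalue step fits into a layer, here is a minimal per-pixel sketch of the 2D Frangi vesselness for bright vessels, using the closed-form eigenvalues of a symmetric 2x2 Hessian. The Hessian entries would normally come from convolutions with Gaussian second-derivative kernels, which are omitted here. The default values of `beta` and `c` are arbitrary, and which quantities the trainable version actually learns is not stated in the transcript.

```python
import math

def hessian_eigenvalues(hxx, hxy, hyy):
    """Closed-form eigenvalues of a symmetric 2x2 Hessian, sorted so |l1| <= |l2|."""
    mean = 0.5 * (hxx + hyy)
    radius = math.sqrt((0.5 * (hxx - hyy)) ** 2 + hxy ** 2)
    return tuple(sorted((mean - radius, mean + radius), key=abs))

def vesselness(hxx, hxy, hyy, beta=0.5, c=0.5):
    """2D Frangi vesselness for bright tubular structures at one pixel."""
    l1, l2 = hessian_eigenvalues(hxx, hxy, hyy)
    if l2 >= 0.0:  # a bright vessel needs a strongly negative second eigenvalue
        return 0.0
    blobness = l1 / l2                          # near 0 for tubes, near 1 for blobs
    structure = math.sqrt(l1 * l1 + l2 * l2)    # small in flat background regions
    return (math.exp(-blobness ** 2 / (2.0 * beta ** 2))
            * (1.0 - math.exp(-structure ** 2 / (2.0 * c ** 2))))

tube = vesselness(-1.0, 0.0, 0.0)   # curvature in one direction only
blob = vesselness(-1.0, 0.0, -1.0)  # equal curvature in both directions
```

Every operation in this function (square root, exponential, division) is differentiable almost everywhere, which is what allows the filter to sit inside a network and be trained.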

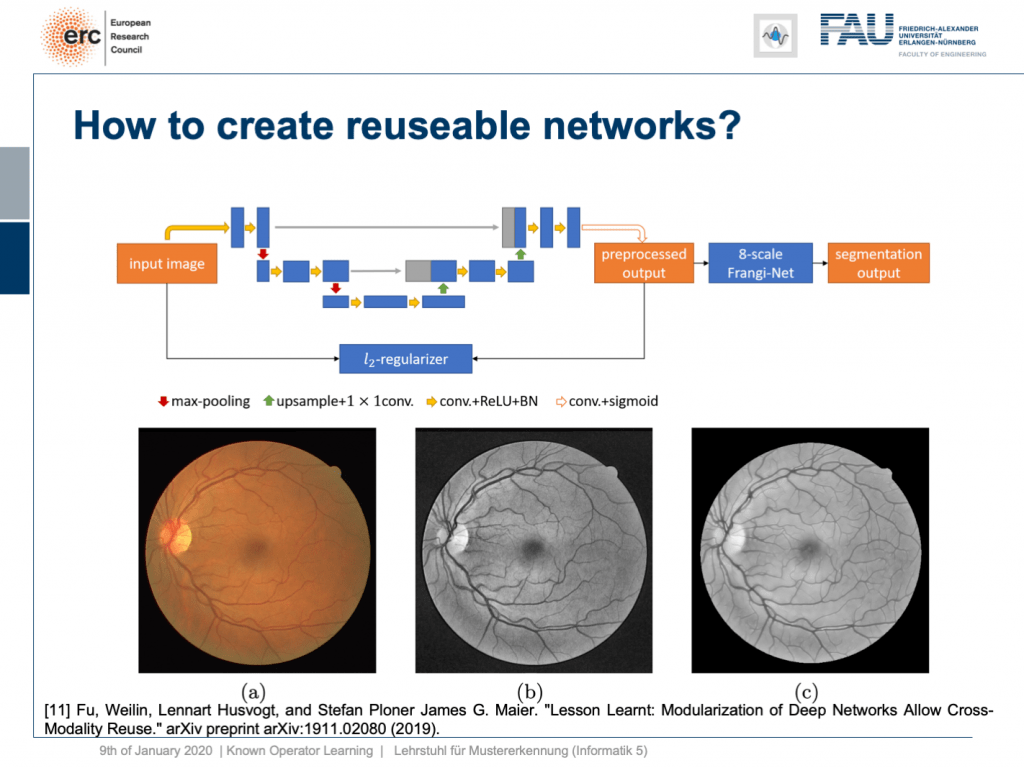

This is kind of interesting, but you very quickly realize that one reason why the Frangi filter fails is inadequate pre-processing. So, we can also combine this with a kind of pre-processing network. Here, the idea is that you take, let’s say, a U-net or a guided filter network. By the way, the guided filter and also the joint bilateral filter can be mapped into neural network layers. You can include them here and you design a special loss. This special loss is not just optimizing the segmentation output, but you combine it with some kind of autoencoder loss here. So in this layer, you want to have a pre-processed image that is still similar to the input, but with properties such that the vessel segmentation using an 8-scale Frangi filter is much better. So, we can put this into our network and train it. As a result, we get vessel detection, and this vessel detection is on par with a U-net. Now, the U-net is essentially a black box method, but here we can say “Okay, we have a kind of pre-processing net.” By the way, using a guided filter, it works really well. So, it doesn’t have to be a U-net. This is kind of a neural network debugging approach. You can show that we can now replace parts of our U-net module by module. In the last version, we don’t have U-nets at all anymore, but we have a guided filter network here and the Frangi filter. This has essentially the same performance as the U-net. So, this way we are able to modularize our networks. Why would you want to create modules? Well, the reason is that modules are reusable. So here, you see the output on ophthalmic imaging data. This is a typical fundus image. So it’s an RGB image of the eye background. It shows the blind spot where the vessels all penetrate the retina. The fovea is where you have essentially the best resolution on your retina. Now, typically if you want to analyze those images, you would just take the green color channel because it’s the channel with the highest contrast. The result of our pre-processing network can be shown here. So, we get significant noise reduction, but at the same time, we also get this emphasis on vessels. So, it kind of improves how the vessels are displayed, and fine vessels are also preserved.
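
A combined loss of the kind described above could be sketched like this. The transcript does not say which loss terms were used; binary cross-entropy for the segmentation, mean squared error for the autoencoder term, the weighting factor `weight`, and all function names are assumptions for illustration. Images are represented as flat lists of pixel values.

```python
import math

def mse(a, b):
    """Mean squared error between two flattened images."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def binary_cross_entropy(pred, label, eps=1e-7):
    """Pixel-wise binary cross-entropy; predictions are clipped for numerical safety."""
    total = 0.0
    for p, y in zip(pred, label):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1.0 - y) * math.log(1.0 - p)
    return total / len(pred)

def combined_loss(preprocessed, original, seg_pred, seg_label, weight=0.5):
    """Segmentation loss plus a term that keeps the pre-processed image near the input."""
    return (binary_cross_entropy(seg_pred, seg_label)
            + weight * mse(preprocessed, original))

original = [0.2, 0.8, 0.5, 0.1]       # input image
preprocessed = [0.1, 0.9, 0.5, 0.0]   # output of the pre-processing module
seg_pred = [0.1, 0.9, 0.6, 0.2]       # vesselness after the segmentation module
seg_label = [0.0, 1.0, 1.0, 0.0]      # ground-truth vessel mask
loss = combined_loss(preprocessed, original, seg_pred, seg_label)
```

The second term is what keeps the intermediate result interpretable: without it, the pre-processing module would be free to produce anything that helps the segmentation, whether or not it still looks like an image.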

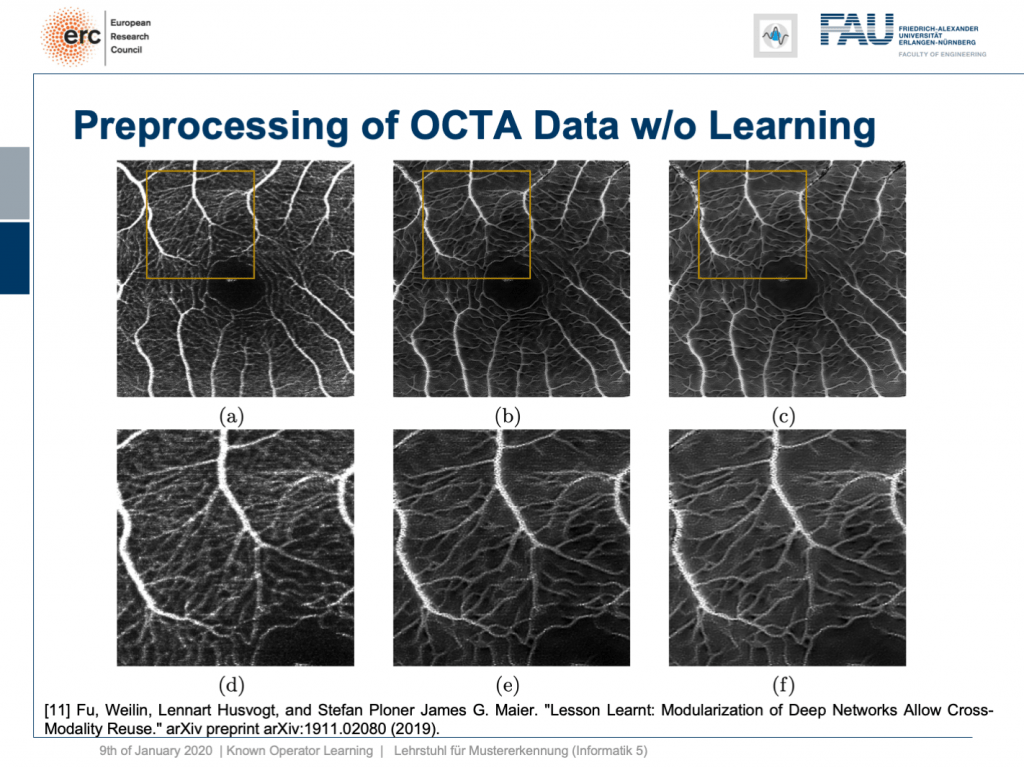

Okay, this is nice, but it only works on fundus data, right? No, our modularization shows that if we take this kind of modeling, we are able to transfer the filter to a completely different modality. This is now optical coherence tomography angiography (OCTA), a specialist modality used to extract contrast-free vessel images of the eye background. You can now demonstrate that our pre-processing filter can be applied to these data without any additional need for fine-tuning, learning, or whatnot. You take this filter and apply it to the en-face images that, of course, show similar anatomy. But you don’t need any training on OCTA data at all. This is the OCTA input image on the left. This is the output of our filter, in the center, and this is a 50% blend of the two, on the right. Here, we have the magnified areas and you can see very nicely that what appears to be noise is actually reformed into vessels in the output of our filter. Now, these are qualitative results. By the way, by now we also have quantitative results, and we are actually quite happy that our pre-processing network is really able to produce the vessels at the right locations. So, this is a very interesting result, and it shows us that we can modularize networks and make them reusable without having to retrain them. So, we can now probably generate blocks that can be reassembled into new networks without additional adjustment and fine-tuning. This is actually pretty cool.

Well, this essentially leads us back to our classical pattern recognition pipeline. You remember, we looked at that in the very beginning. We have the sensor, the pre-processing, the features, and the classification. The classical role of neural networks was just classifying, and you had all this feature engineering on the path here. We said that it’s much better to do deep learning because then we do everything end-to-end and we can optimize everything along the way. Now, if we look at this graph, then we can also think about whether we actually need something like neural network design patterns. One design pattern is of course end-to-end learning, but you may also want to include these autoencoder pre-processing losses in order to get the maximum out of your signals. On the one hand, you want to make sure that you have an interpretable module here that still remains in the image domain. On the other hand, you want to have good features, and another thing that we learned about in this class is multi-task learning. So, multi-task learning associates the same latent space with different problems with different classification results. This way, by implementing a multi-task loss, we make sure that we get very general features that will be applicable to a wide range of different tasks. So, essentially we can see that by appropriate construction of our loss functions, we’re actually back to our classical pattern recognition pipeline. It’s not the same pattern recognition pipeline that we had in the classical sense because everything is end-to-end and differentiable. So, you could argue that what we’re moving toward right now is that CNNs, ResNets, global pooling, and even differentiable rendering are kinds of known operations that are embedded into those networks. We then essentially get modules that can be recombined, and we probably end up with differentiable algorithms. This is the path that we’re on: differentiable, adjustable algorithms that can be fine-tuned using only a little bit of data.

I wanted to show you this concept because I think known operator learning is pretty cool. It also means that you don’t have to throw away all of the classical theory that you already learned about: Fourier transforms and all the clever ways in which you can process a signal. They are still very useful and they can be embedded into your networks, not just by using regularization and losses. We’ve already seen, when we talked about the bias-variance tradeoff, that this is essentially one way you can reduce variance and bias at the same time: you incorporate prior knowledge about the problem. So, this is pretty cool. Then, you can create algorithms, learn the weights, and reduce the number of parameters. Now, we have a nice theory that also shows us that what we are doing here is sound, and virtually all of the state-of-the-art methods can be integrated. There are very few operations where you cannot find a subgradient approximation. If you don’t find a subgradient approximation, there are probably also other ways around it, such that you can still work with it. This makes methods very efficient and interpretable, and you can also work with modules. So, that’s pretty cool, isn’t it?
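
The median filter mentioned earlier in this lecture is a handy example of an operation that has no classical derivative everywhere but does have a usable subgradient. The sketch below handles one odd-length window; the function name is hypothetical and the restriction to odd lengths is a simplification.

```python
def median_with_subgradient(window):
    """Return the median of an odd-length window and one valid subgradient.

    The output equals exactly one input element, so the subgradient is 1 at
    that element's position and 0 everywhere else.
    """
    if len(window) % 2 == 0:
        raise ValueError("this sketch only handles odd-length windows")
    order = sorted(range(len(window)), key=lambda i: window[i])
    mid = order[len(window) // 2]   # index of the median element
    grad = [0.0] * len(window)
    grad[mid] = 1.0
    return window[mid], grad

value, grad = median_with_subgradient([3.0, 1.0, 2.0])
# value is 2.0 and grad is [0.0, 0.0, 1.0]
```

During backpropagation the incoming gradient is simply routed to the element that was selected, in the same way as in max pooling.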

Well, this is our last video. So, I also want to thank you for this exciting semester. This is the first time that I am teaching this class entirely in a video format. From what I have heard so far, the feedback was generally very positive. So, thank you very much for providing feedback along the way. This is also very crucial, and you can see that we improved the lecture on various occasions in terms of hardware and also in what to include, and so on. Thank you very much for this. I had a lot of fun with this and I think there are a lot of things I will keep on doing in the future. So, I think these video lectures are a pretty cool way of teaching, in particular if you’re teaching a large class. In the non-corona case, this class would have an audience of 300 people, and I think, if we use things like these recordings, we can also get a very personal way of communicating. I can also use the time that I don’t spend in the lecture hall for setting up things like question and answer sessions. So, this is pretty cool. The other thing that’s cool is that we can even do lecture notes. Many of you have been complaining that the class doesn’t have lecture notes, and I said “Look, we keep this class up-to-date. We include the newest and coolest topics. It’s very hard to produce lecture notes.” But actually, deep learning helps us to produce lecture notes because we have video recordings. We can use speech recognition on the audio track and produce lecture notes. So you see that I already started doing this, and if you go back to the old recordings, you can see that I already put in links to the full transcript. They’re published as blog posts and you can also access them. By the way, like the videos, the blog posts and everything that you see here are licensed under Creative Commons BY 4.0, which means you are free to reuse any part of this and to redistribute and share it. So generally, I think this field of machine learning, and in particular deep learning methods, is moving at a rapid pace right now. We are still going ahead. So, I don’t see these things and developments stopping very soon, and there’s still very much excitement in the field. I’m also very excited that I can show the newest things to you in lectures like this one. So, I think there are still exciting new breakthroughs to come, and this means that we will also adjust this lecture in the future and produce new lecture videos in order to be able to incorporate the latest and greatest methods.

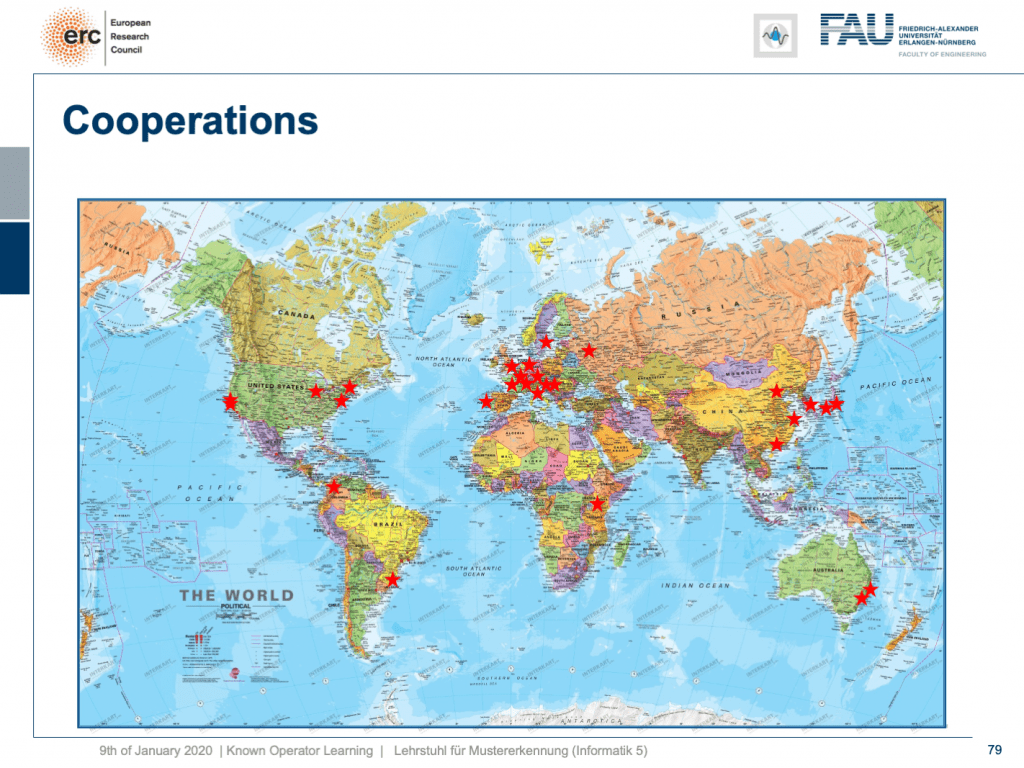

By the way, the stuff that I’ve been showing you in this lecture is of course not just by our group. We incorporated many, many different results by other groups worldwide, and of course the results that we produced in Erlangen we did not produce alone; we are working in a large network of international partners. I think this is the way science needs to be conducted, now and in the future. I have some additional references. Okay. So, that’s it for this semester. Thank you very much for listening to all of these videos. I hope you had quite some fun with them. Well, let’s see, I’m pretty sure I’ll teach a class again next semester. So, if you liked this one, you may want to join one of our other classes in the future. Thank you very much and goodbye!

If you liked this post, you can find more essays here, more educational material on Machine Learning here, or have a look at our Deep Learning Lecture. I would also appreciate a follow on YouTube, Twitter, Facebook, or LinkedIn in case you want to be informed about more essays, videos, and research in the future. This article is released under the Creative Commons 4.0 Attribution License and can be reprinted and modified if referenced. If you are interested in generating transcripts from video lectures, try AutoBlog.

Thanks

Many thanks to Weilin Fu, Florin Ghesu, Yixing Huang, Christopher Syben, Marc Aubreville, and Tobias Würfl for their support in creating these slides.

Source: https://towardsdatascience.com/known-operator-learning-part-4-823e7a96cf5b
