In this lesson, students practice four main tasks:

1. Deploy the InternLM2-Chat-1.8B model for intelligent dialogue

1.1 Install the dependencies:

pip install huggingface-hub==0.17.3

pip install transformers==4.34

pip install psutil==5.9.8

pip install accelerate==0.24.1

pip install streamlit==1.32.2

pip install matplotlib==3.8.3

pip install modelscope==1.9.5

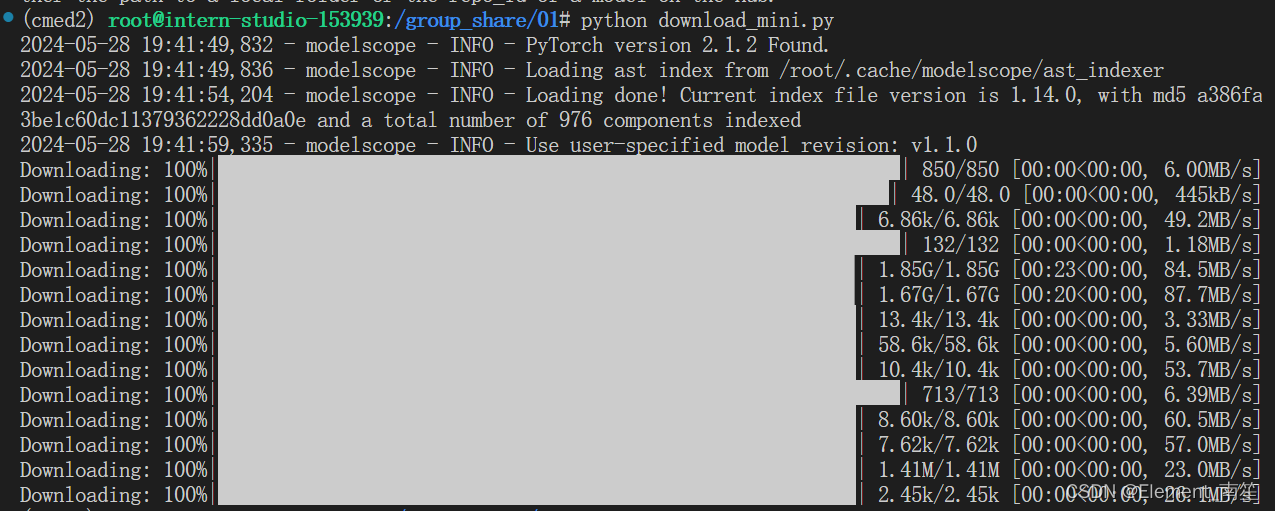

pip install sentencepiece==0.1.99

1.2 Download the InternLM2-Chat-1.8B model

```python
import os
from modelscope.hub.snapshot_download import snapshot_download

# Create the directory for saving the model (no error if it already exists)
os.makedirs("/root/models", exist_ok=True)

# save_dir is the local directory the model is saved to
save_dir = "/root/models"
snapshot_download("Shanghai_AI_Laboratory/internlm2-chat-1_8b", cache_dir=save_dir, revision='v1.1.0')
```
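Judging by `model_name_or_path` in step 1.3, `snapshot_download` is expected to place the files under `<cache_dir>/<organization>/<model name>`. The sketch below checks that the download landed where step 1.3 looks for it. It assumes that layout, and it also assumes a Hugging Face-style model folder contains `config.json`. Both helper names are hypothetical.

```python
import os

def local_model_dir(cache_dir: str, model_id: str) -> str:
    """Return where the model is assumed to live: <cache_dir>/<org>/<name>."""
    org, name = model_id.split("/", 1)
    return os.path.join(cache_dir, org, name)

def looks_downloaded(model_dir: str) -> bool:
    """Heuristic: a Hugging Face-style model folder normally contains config.json."""
    return os.path.isfile(os.path.join(model_dir, "config.json"))

if __name__ == "__main__":
    path = local_model_dir("/root/models", "Shanghai_AI_Laboratory/internlm2-chat-1_8b")
    print(path, "looks ready" if looks_downloaded(path) else "has no config.json")
```

Run it after the download finishes. A missing `config.json` usually means the download was interrupted or saved somewhere else.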


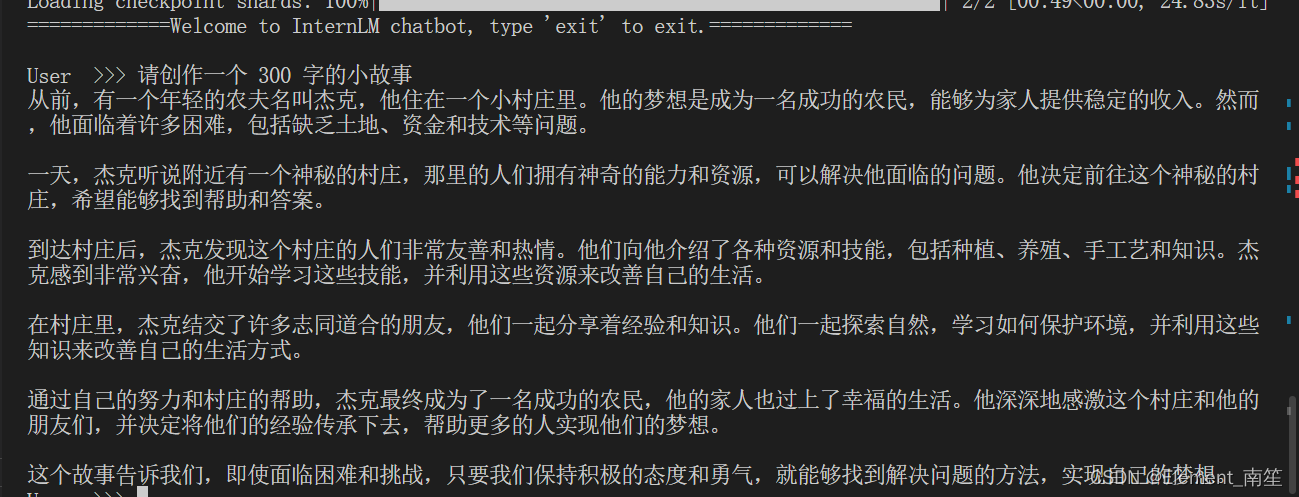

1.3 Run cli_demo

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

# Local path of the model downloaded in step 1.2
model_name_or_path = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"

# trust_remote_code=True is needed because InternLM2 ships its own modeling code
tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True, device_map='cuda:0')
model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='cuda:0')
model = model.eval()  # inference mode

system_prompt = """You are an AI assistant whose name is InternLM (書生·浦語).
- InternLM (書生·浦語) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能實驗室). It is designed to be helpful, honest, and harmless.
- InternLM (書生·浦語) can understand and communicate fluently in the language chosen by the user such as English and 中文.
"""

# The system prompt is passed as the first (prompt, response) pair of the history
messages = [(system_prompt, '')]

print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

while True:
    input_text = input("\nUser >>> ")
    # Removes every space, including spaces between English words
    input_text = input_text.replace(' ', '')
    if input_text == "exit":
        break

    length = 0
    # stream_chat yields the response generated so far; print only the new part
    for response, _ in model.stream_chat(tokenizer, input_text, messages):
        if response is not None:
            print(response[length:], flush=True, end="")
            length = len(response)
```

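In the demo above, each item that `model.stream_chat` yields appears to be the full response generated so far. That is why the loop prints `response[length:]` and then updates `length`. The sketch below isolates that slicing logic so it can be tried without a GPU. `fake_stream_chat` is a hypothetical stand-in, not part of InternLM.

```python
from typing import Iterable, Iterator, Optional, Tuple

def stream_deltas(stream: Iterable[Tuple[Optional[str], object]]) -> Iterator[str]:
    """Turn a stream of cumulative responses into the incremental pieces to print."""
    length = 0  # number of characters already emitted
    for response, _ in stream:
        if response is not None:
            yield response[length:]
            length = len(response)

def fake_stream_chat():
    """Hypothetical stand-in for model.stream_chat: yields (text so far, history)."""
    for text in ["Hel", None, "Hello", "Hello, wor", "Hello, world"]:
        yield text, None

if __name__ == "__main__":
    for piece in stream_deltas(fake_stream_chat()):
        print(piece, flush=True, end="")
    print()
```

Printing each piece with `end=""` reassembles the full answer in the terminal while it is still being generated.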
2. Deploy 八戒-Chat-1.8B, one of the outstanding projects from the camp

- 八戒-Chat-1.8B: ModelScope (魔搭社區)

- 聊天-嬛嬛-1.8B: OpenXLab (浦源) Model Center

- Mini-Horo-巧耳: OpenXLab (浦源) Model Center

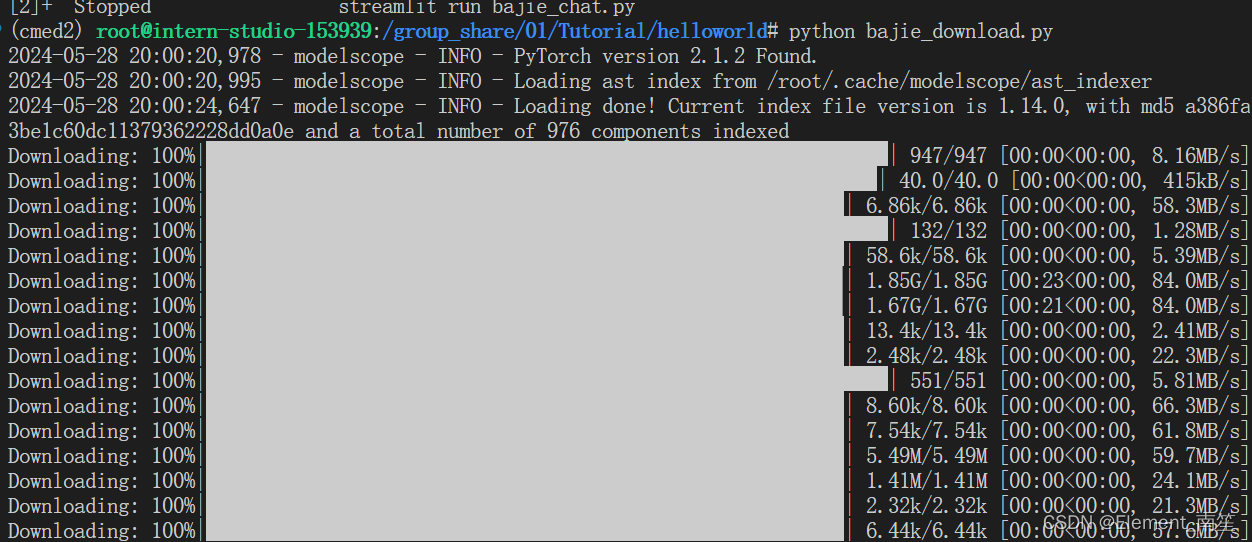

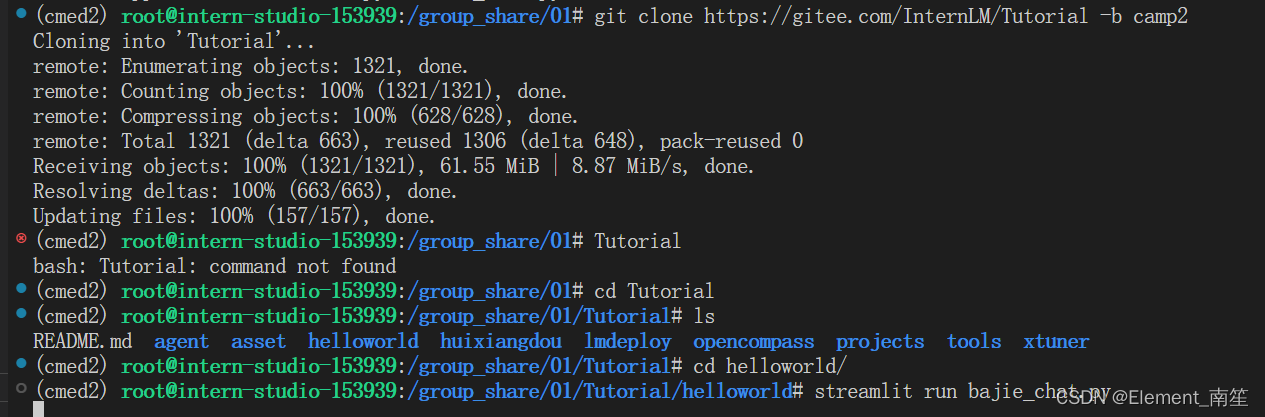

Clone the Tutorial repository (camp2 branch):

git clone https://gitee.com/InternLM/Tutorial -b camp2

Run the model download script:

python /root/Tutorial/helloworld/bajie_download.py

After the download finishes, start the app with:

streamlit run /root/Tutorial/helloworld/bajie_chat.py --server.address 127.0.0.1 --server.port 6006
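Because of `--server.address 127.0.0.1`, the app listens only on the development machine's loopback interface. From your own computer you would typically reach it through SSH port forwarding. Before opening the page, you can confirm that something is actually listening on port 6006. The sketch below does this; `wait_for_port` is a hypothetical helper built only on the standard library.

```python
import socket
import time

def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll until a TCP server accepts connections on host:port, or give up after timeout seconds."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means the server is up; close it right away
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            time.sleep(interval)  # not listening yet (or refused); retry
    return False
```

For example, `wait_for_port("127.0.0.1", 6006, timeout=60)` returns `True` once the Streamlit server accepts connections. It returns `False` if the server has not started within a minute.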


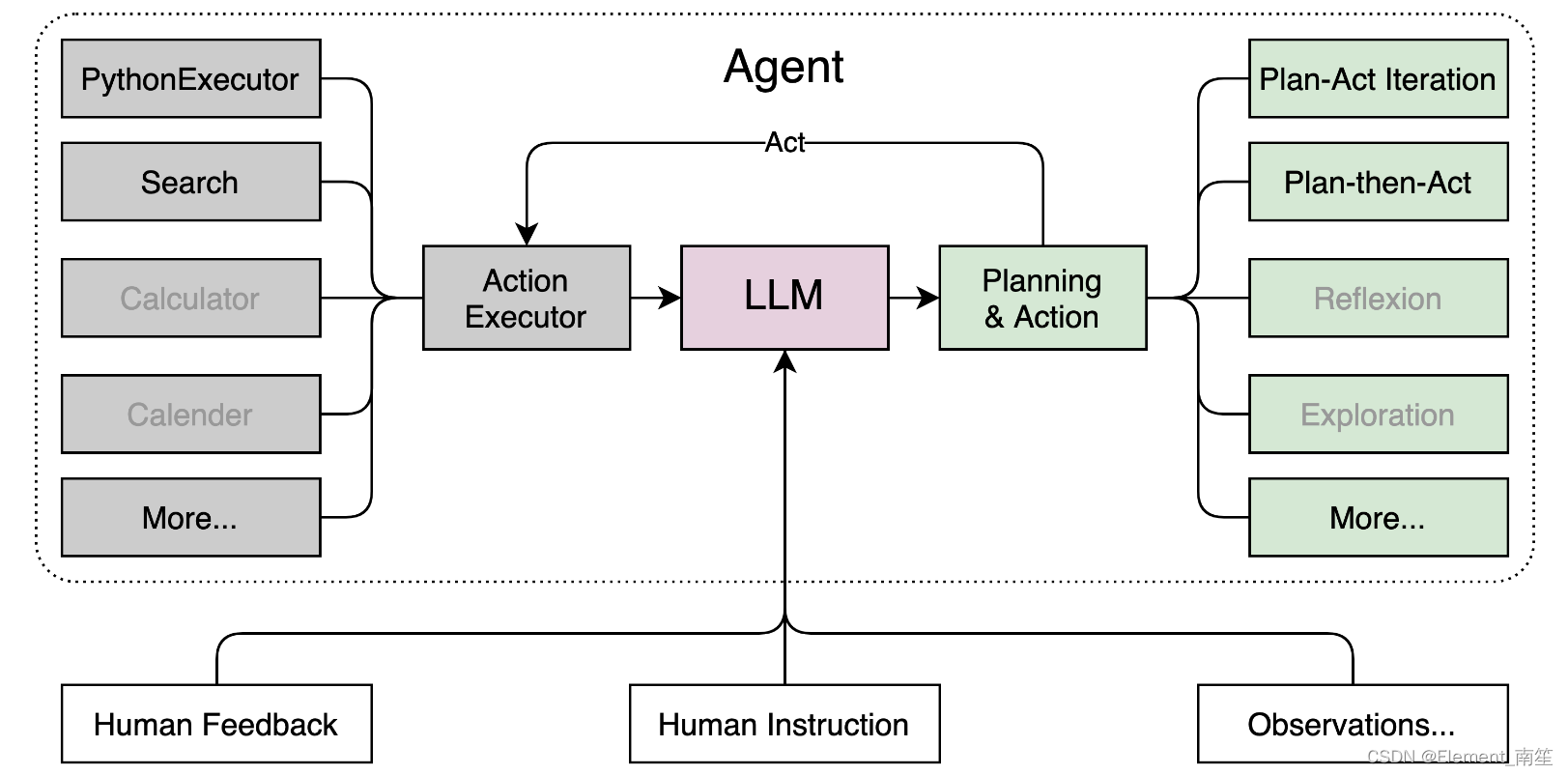

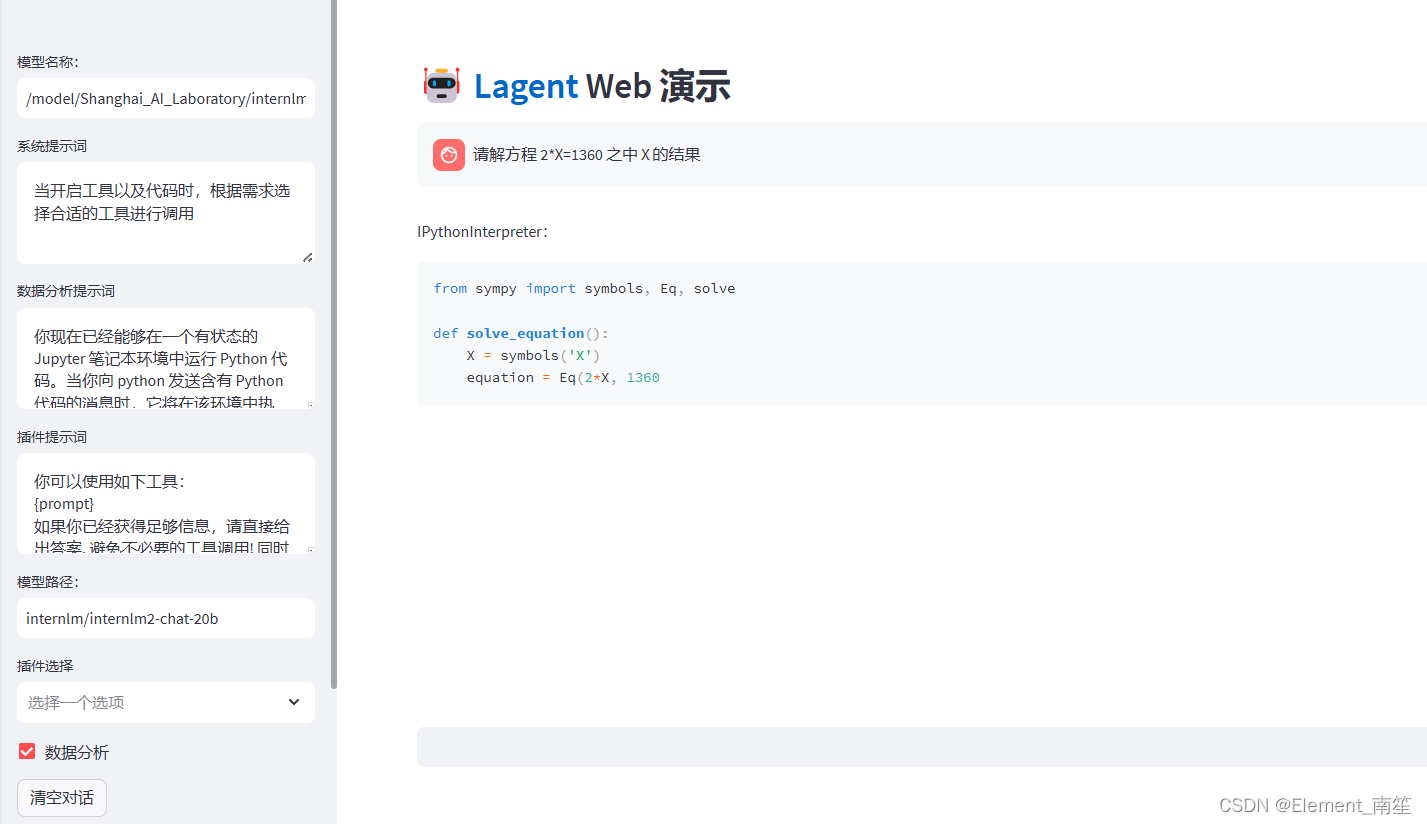

3. Run the Lagent agent demo with InternLM2-Chat-7B

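Lagent's features are summarized as follows:

- Streaming output: a stream_chat interface provides streaming output, so you can run an impressive streaming demo locally.

- Unified interfaces and a fully upgraded design for better extensibility, including:

  - Model: OpenAI API, Transformers and the inference acceleration framework LMDeploy are all supported, so switching between models is effortless;

  - Action: simple inheritance and decoration are enough to build your own toolset, which works with both InternLM and GPT;

  - Agent: uses the same input interface as Model, so turning a model into an agent takes a single step, making it easy to explore and implement different agents;

- Fully upgraded documentation, with complete API reference coverage.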
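The Action bullet says that simple inheritance and decoration are enough to build a custom tool. The sketch below illustrates that general pattern in plain Python. `BaseTool`, `register`, `TOOLS` and `dispatch` are hypothetical names and are **not** Lagent's actual API; see the Lagent documentation for the real base classes.

```python
from typing import Dict

class BaseTool:
    """Hypothetical base class: every tool has a name and a run() method."""
    name: str = "base"

    def run(self, query: str) -> str:
        raise NotImplementedError

# Registry that an agent could consult by tool name
TOOLS: Dict[str, BaseTool] = {}

def register(cls):
    """Hypothetical class decorator: store one instance of the tool in TOOLS."""
    TOOLS[cls.name] = cls()
    return cls

@register
class Calculator(BaseTool):
    name = "calculator"

    def run(self, query: str) -> str:
        # Toy logic: only handles integer additions such as "2+3"
        a, b = query.split("+")
        return str(int(a) + int(b))

def dispatch(tool_name: str, query: str) -> str:
    """Roughly what an agent loop does after the model picks a tool by name."""
    return TOOLS[tool_name].run(query)
```

Adding a tool then means writing one subclass and decorating it. Nothing else in the agent loop has to change.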

Download and install the Lagent source code:

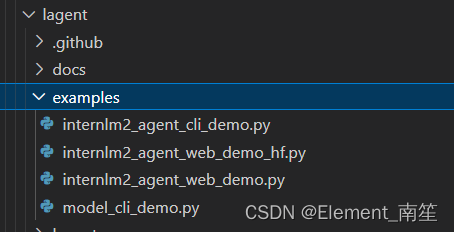

```bash
cd /root/demo
git clone https://gitee.com/internlm/lagent.git
# GitHub mirror: git clone https://github.com/internlm/lagent.git
cd /root/demo/lagent
git checkout 581d9fb8987a5d9b72bb9ebd37a95efd47d479ac
pip install -e .  # install from source
```

In the terminal, create a symbolic link for quick access to the model:

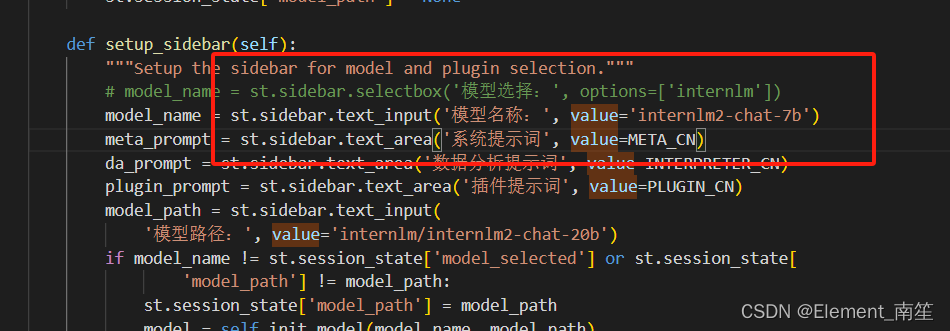

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-7b /root/models/internlm2-chat-7b

Open internlm2_agent_web_demo_hf.py under /root/demo/lagent/examples and modify the code at the corresponding position (around line 71):

Change the model path so that it points to the symbolic link /root/models/internlm2-chat-7b.

Launch the web front end:

streamlit run /root/demo/lagent/examples/internlm2_agent_web_demo_hf.py --server.address 127.0.0.1 --server.port 6006


4. Deploy the 浦語·靈筆2 (InternLM-XComposer2) model

Install the additional environment packages (use a 50% A100 instance for development):

pip install timm==0.4.12 sentencepiece==0.1.99 markdown2==2.4.10 xlsxwriter==3.1.2 gradio==4.13.0 modelscope==1.9.5
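The demos were written against these exact versions. The minimal sketch below compares what is installed with the pins from the command above, using the standard `importlib.metadata` module (Python 3.8+). `check_pins` and `PINNED` are hypothetical names.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the pip command above
PINNED = {
    "timm": "0.4.12",
    "sentencepiece": "0.1.99",
    "markdown2": "2.4.10",
    "xlsxwriter": "3.1.2",
    "gradio": "4.13.0",
    "modelscope": "1.9.5",
}

def check_pins(pins: dict) -> dict:
    """Return {package: installed_version_or_None} for every package that does not match its pin."""
    problems = {}
    for pkg, wanted in pins.items():
        try:
            installed = version(pkg)
        except PackageNotFoundError:
            installed = None  # not installed at all
        if installed != wanted:
            problems[pkg] = installed
    return problems

if __name__ == "__main__":
    print(check_pins(PINNED) or "all pinned packages match")
```

An empty result means every pin matches. A `None` value means the package is not installed.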

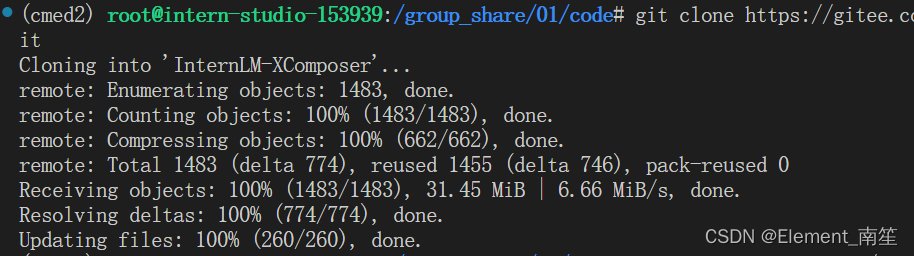

Download the code from the InternLM-XComposer repository:

```bash
cd /root/demo
git clone https://gitee.com/internlm/InternLM-XComposer.git
# GitHub mirror: git clone https://github.com/internlm/InternLM-XComposer.git
cd /root/demo/InternLM-XComposer
git checkout f31220eddca2cf6246ee2ddf8e375a40457ff626
```

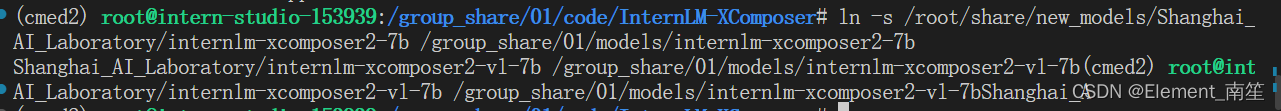

In the terminal, create symbolic links for quick access:

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-7b /group_share/01/models/internlm-xcomposer2-7b

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-vl-7b /group_share/01/models/internlm-xcomposer2-vl-7b
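Running `ln -s` a second time is a common pitfall. When the link path already exists and points to a directory, GNU `ln` creates the new link *inside* that directory instead of failing. The sketch below is an idempotent alternative built on `os.symlink`. The `ensure_symlink` helper is hypothetical, and the `LINKS` mapping simply repeats the two commands above.

```python
import os

def ensure_symlink(target: str, link: str) -> str:
    """Create link -> target like `ln -s`, but do nothing if it already points at target."""
    if os.path.islink(link):
        if os.readlink(link) == target:
            return "exists"
        raise FileExistsError(f"{link} already points to {os.readlink(link)}")
    if os.path.exists(link):
        raise FileExistsError(f"{link} exists and is not a symlink")
    os.makedirs(os.path.dirname(link), exist_ok=True)  # make sure the parent directory exists
    os.symlink(target, link)
    return "created"

# target -> link, as in the two ln -s commands above
LINKS = {
    "/root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-7b":
        "/group_share/01/models/internlm-xcomposer2-7b",
    "/root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-vl-7b":
        "/group_share/01/models/internlm-xcomposer2-vl-7b",
}
```

To apply both links, run `for target, link in LINKS.items(): print(link, ensure_symlink(target, link))`.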

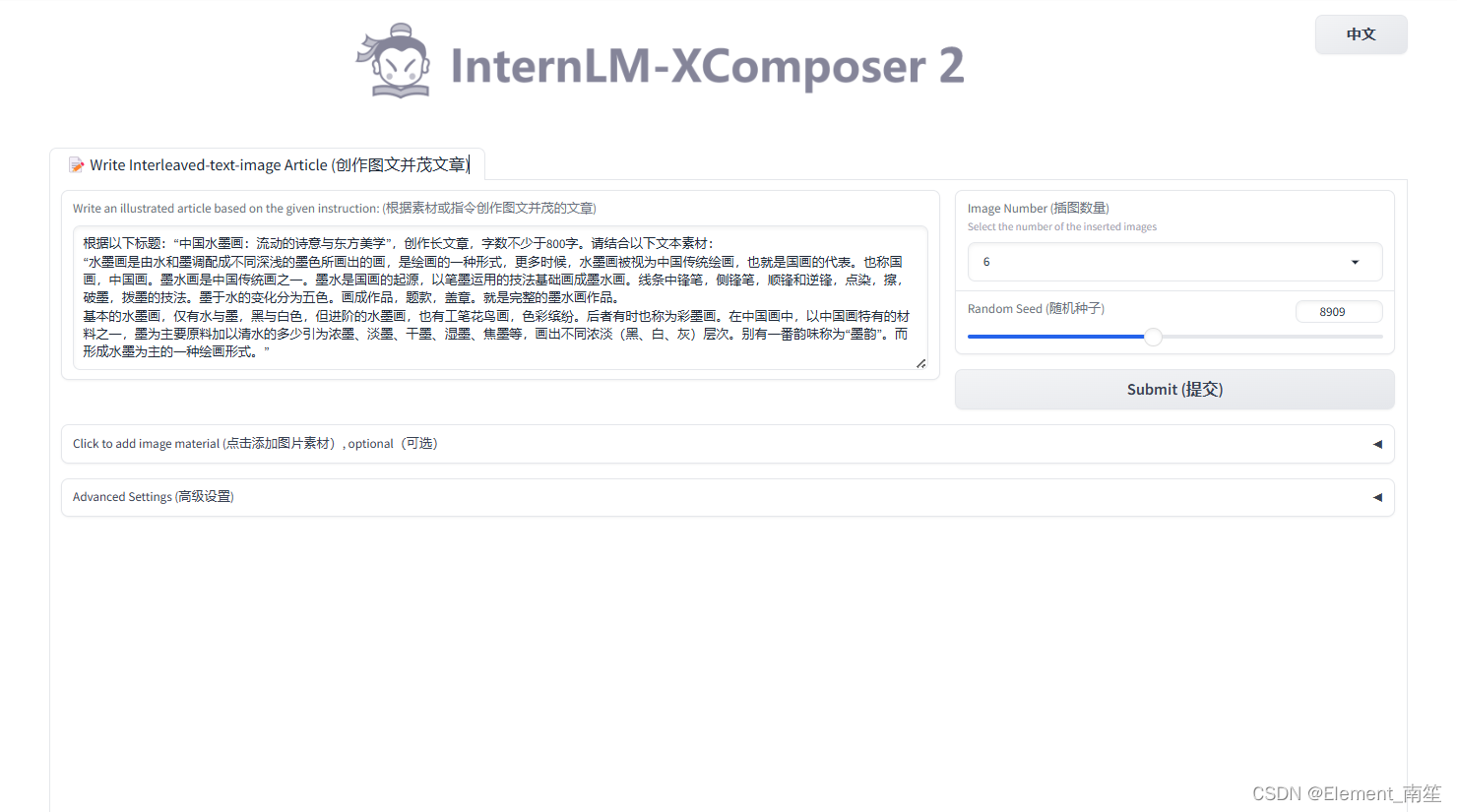

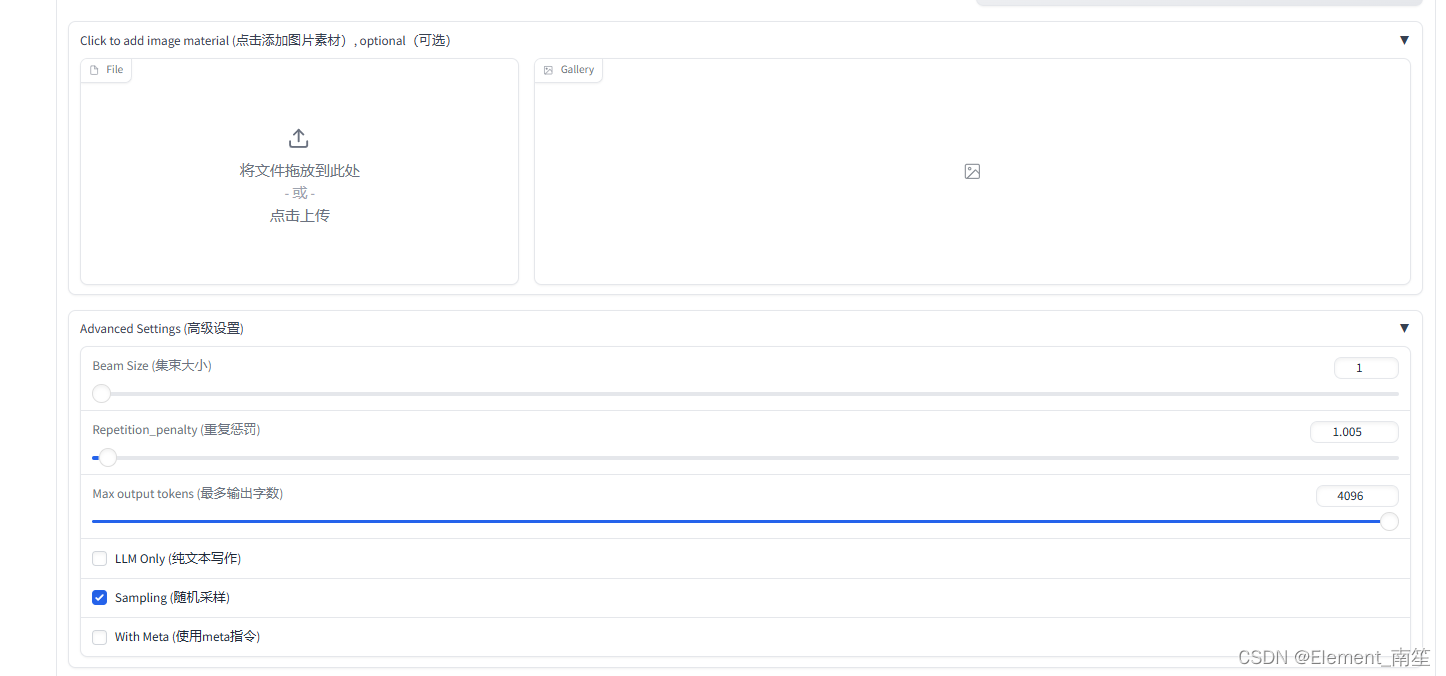

Hands-on: text-image composition (this section is only available after 50% A100 access is enabled)

```bash
cd /root/demo/InternLM-XComposer
python /root/demo/InternLM-XComposer/examples/gradio_demo_composition.py \
    --code_path /group_share/01/models/internlm-xcomposer2-7b \
    --private \
    --num_gpus 1 \
    --port 6006
```


Hands-on: image understanding (this section is only available after 50% A100 access is enabled)

Following the method in Appendix 6.4, close the current terminal and open a new one. Then enter the commands below to launch InternLM-XComposer2-vl:

```bash
conda activate demo
cd /root/demo/InternLM-XComposer
python /root/demo/InternLM-XComposer/examples/gradio_demo_chat.py \
    --code_path /group_share/01/models/internlm-xcomposer2-vl-7b \
    --private \
    --num_gpus 1 \
    --port 6006
```
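Both demos take the same four flags. A backslash-continued shell command also breaks as soon as a blank line or a trailing space slips in after a `\`. As an alternative, a hypothetical helper can build the argument list in Python and pass it to `subprocess`. It assumes both scripts live in the repository's `examples/` directory, and it reproduces only the flags shown above.

```python
import shlex
from typing import List

def xcomposer_demo_cmd(script: str, code_path: str, port: int = 6006,
                       num_gpus: int = 1, private: bool = True) -> List[str]:
    """Hypothetical helper: build the argv for one of the InternLM-XComposer gradio demos."""
    cmd = ["python", f"/root/demo/InternLM-XComposer/examples/{script}",
           "--code_path", code_path]
    if private:
        cmd.append("--private")
    cmd += ["--num_gpus", str(num_gpus), "--port", str(port)]
    return cmd

if __name__ == "__main__":
    # Print the equivalent shell command for the image-understanding demo
    print(shlex.join(xcomposer_demo_cmd(
        "gradio_demo_chat.py", "/group_share/01/models/internlm-xcomposer2-vl-7b")))
```

The list can be handed directly to `subprocess.run(cmd, check=True)` (after `import subprocess`). Because each argument is a separate list element, no shell quoting or line continuation is involved.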
