1. Filter

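In multimedia processing, a filter is a software tool that modifies the content of the input before it is encoded into the output file, for example flipping, rotating, or scaling a video.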

Syntax: [input_link_label1]… filter_name=parameters [output_link_label1]…

1. Video filters: -vf

For example, rotate input.mp4 by 90 degrees clockwise:

ffplay -i input.mp4 -vf transpose=1
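To make transpose=1 concrete, the sketch below applies the same pixel mapping to a single 8-bit grayscale plane stored row by row. It is an illustration, not FFmpeg's implementation: `rotate90_clockwise` is a hypothetical helper, and the real filter also handles multi-plane formats such as yuv420p.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: rotate a w x h 8-bit grayscale plane 90 degrees
 * clockwise, the mapping transpose=1 applies to each frame. dst must hold
 * w * h bytes and is laid out as an h-wide, w-tall image. */
static void rotate90_clockwise(const unsigned char *src, int w, int h,
                               unsigned char *dst)
{
    assert(src != NULL && dst != NULL && w > 0 && h > 0);
    for (int y = 0; y < h; y++)
        for (int x = 0; x < w; x++)
            /* source pixel (x, y) moves to row x, column h - 1 - y */
            dst[(size_t)x * h + (size_t)(h - 1 - y)] = src[(size_t)y * w + x];
}
```

For a 3×2 input `{1,2,3, 4,5,6}` the result is the 2×3 image `{4,1, 5,2, 6,3}`: width and height swap, which is why a rotated video also has swapped dimensions.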

For example, flip input.mp4 horizontally (mirror it left to right):

ffplay -i input.mp4 -vf hflip
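What hflip does to the pixels can be sketched on one 8-bit grayscale plane: every row is reversed. `hflip_gray` is a hypothetical name, and this is not FFmpeg's code, which works on all planes of the frame's pixel format.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: mirror a w x h 8-bit grayscale plane left to right,
 * like hflip. In every row, pixel x moves to column w - 1 - x. */
static void hflip_gray(const unsigned char *src, int w, int h,
                       unsigned char *dst)
{
    assert(src != NULL && dst != NULL && w > 0 && h > 0);
    for (int y = 0; y < h; y++)
        for (int x = 0; x < w; x++)
            dst[(size_t)y * w + (size_t)(w - 1 - x)] = src[(size_t)y * w + x];
}
```

A 3×2 input `{1,2,3, 4,5,6}` becomes `{3,2,1, 6,5,4}`; unlike transpose, the dimensions are unchanged.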

2. Audio filters: -af

Play the audio slowly, at 50% of the original speed:

ffplay input.mp3 -af atempo=0.5
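atempo changes the playback speed without changing the pitch, so the output duration is the input duration divided by the tempo factor. The arithmetic as a tiny C helper (the name `atempo_output_seconds` is hypothetical):

```c
#include <assert.h>

/* Hypothetical helper: atempo keeps the pitch but changes the speed, so
 * the output lasts input_seconds / tempo. tempo < 1 slows playback down,
 * tempo > 1 speeds it up. */
static double atempo_output_seconds(double input_seconds, double tempo)
{
    assert(tempo > 0.0);
    return input_seconds / tempo;
}
```

With atempo=0.5 a 10-second clip therefore plays for 20 seconds.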

Filterchain

Filterchain = a comma-separated sequence of filters

Syntax: “filter1,filter2,filter3,…filterN-2,filterN-1,filterN”
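Since a filterchain is just filter descriptions joined by commas, it can be assembled in code, for example before handing it to `avfilter_graph_parse_ptr()` as the program later in this article does. A minimal sketch; `join_filters` is a hypothetical helper and it does not escape special characters inside filter arguments.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: join n filter descriptions with sep ("," builds a
 * filterchain, ";" builds a filtergraph from filterchains). Returns 0 on
 * success, -1 if the result does not fit in out (size bytes). */
static int join_filters(const char *const *parts, int n, const char *sep,
                        char *out, size_t size)
{
    assert(parts != NULL && sep != NULL && out != NULL && size > 0);
    size_t used = 0;
    out[0] = '\0';
    for (int i = 0; i < n; i++) {
        int written = snprintf(out + used, size - used, "%s%s",
                               i ? sep : "", parts[i]);
        if (written < 0 || (size_t)written >= size - used)
            return -1; /* output would be truncated */
        used += (size_t)written;
    }
    return 0;
}
```

Joining `{"transpose=1", "hflip"}` with `","` yields `transpose=1,hflip`, the chain used in the next command; passing `";"` instead joins whole filterchains into a filtergraph.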

Rotate 90 degrees clockwise, then flip horizontally:

ffplay -i input.mp4 -vf transpose=1,hflip

Filtergraph

Step 1: pad the source video to twice its width.

ffmpeg -i jidu.mp4 -t 10 -vf pad=2*iw output.mp4
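pad=2*iw makes the output twice as wide; with the default x=0 and y=0 the picture stays in the left half, and the new area is filled with the pad color (black by default). On one grayscale plane this looks like the sketch below, where `pad_double_width` is a hypothetical helper and the fill value is passed in explicitly instead of being derived from a color name.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: emulate pad=2*iw (x=0, y=0) on one w x h grayscale
 * plane. The picture stays in the left half; the right half is set to
 * `fill`. dst must hold 2 * w * h bytes. */
static void pad_double_width(const unsigned char *src, int w, int h,
                             unsigned char fill, unsigned char *dst)
{
    assert(src != NULL && dst != NULL && w > 0 && h > 0);
    size_t out_w = 2 * (size_t)w;
    for (int y = 0; y < h; y++)
        for (size_t x = 0; x < out_w; x++)
            dst[(size_t)y * out_w + x] =
                x < (size_t)w ? src[(size_t)y * w + x] : fill;
}
```

A 2×2 input `{1,2, 3,4}` padded with fill 0 becomes the 4×2 image `{1,2,0,0, 3,4,0,0}`.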

Step 2: flip the source video horizontally.

ffmpeg -i jidu.mp4 -t 10 -vf hflip output2.mp4

Step 3: overlay the flipped video onto the right half of output.mp4.

ffmpeg -i output.mp4 -i output2.mp4 -filter_complex overlay=w compare.mp4
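The overlay filter draws its second input on top of the first. In overlay=w, `w` is the width of the overlaid input, so the flipped copy starts exactly where the original picture ends, covering the padded half. A sketch on grayscale planes, assuming an opaque overlay (the real filter can also blend using alpha); `overlay_gray` is a hypothetical name.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: copy a sw x sh grayscale plane onto a dw x dh one
 * with its top-left corner at (x0, y0), clipping whatever falls outside.
 * Opaque copy only: no alpha blending. */
static void overlay_gray(unsigned char *dst, int dw, int dh,
                         const unsigned char *src, int sw, int sh,
                         int x0, int y0)
{
    assert(dst != NULL && src != NULL && dw > 0 && dh > 0 && sw > 0 && sh > 0);
    for (int y = 0; y < sh; y++) {
        int dy = y0 + y;
        if (dy < 0 || dy >= dh)
            continue; /* row is outside the main picture */
        for (int x = 0; x < sw; x++) {
            int dx = x0 + x;
            if (dx >= 0 && dx < dw)
                dst[(size_t)dy * dw + dx] = src[(size_t)y * sw + x];
        }
    }
}
```

Overlaying a 2-pixel-wide image at x = 2 (its own width) onto a 4-pixel-wide padded frame fills exactly the right half.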

Rather cumbersome, isn't it?

A filtergraph with link labels does the same job in a single command.

Basic syntax

Filtergraph = a semicolon-separated set of filterchains

“filterchain1;filterchain2;…filterchainN-1;filterchainN”

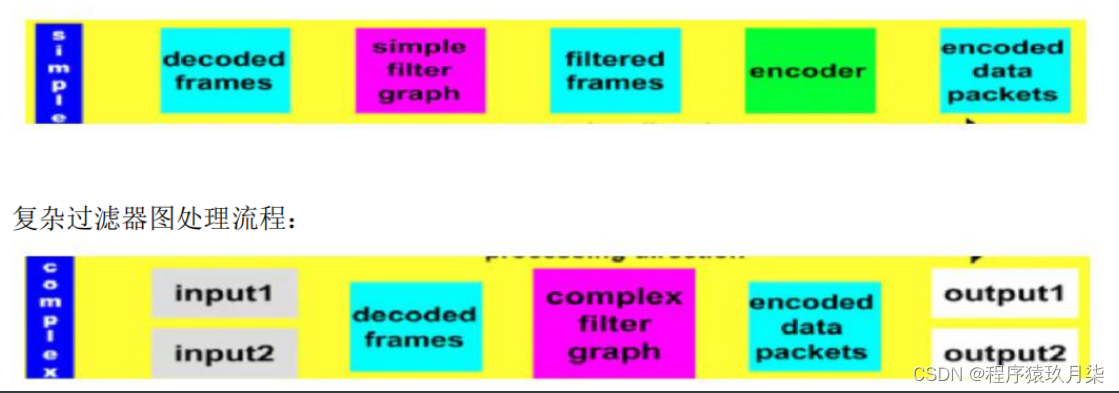

Types of filtergraph

1. Simple: one input, one output

2. Complex: many inputs to one output, or many to many

Filtergraph processing flow:

The three-step process above can be done with one filtergraph like this:

ffplay -f lavfi -i testsrc -vf "split[a][b];[a]pad=2*iw[1];[b]hflip[2];[1][2]overlay=w"

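F1: the split filter makes two copies of the input and labels them [a] and [b].

F2: [a] is the input of the pad filter, which doubles the width and outputs to [1].

F3: [b] is the input of the hflip filter, which flips the video horizontally and outputs to [2].

F4: the overlay filter places [2] on top of [1] at x = w, i.e. right next to the original picture.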
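Taken together, F1 to F4 turn each frame into the original on the left and its mirror image on the right. The sketch below computes that end result directly on one grayscale plane; it fuses the four filters into one loop rather than modelling separate filter instances, and `mirror_side_by_side` is a hypothetical name.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: end result of
 *   split[a][b];[a]pad=2*iw[1];[b]hflip[2];[1][2]overlay=w
 * on one w x h grayscale plane, in a single pass. The left half of dst is
 * the input ([1]), the right half is its mirror ([2] placed at x = w).
 * dst must hold 2 * w * h bytes. */
static void mirror_side_by_side(const unsigned char *src, int w, int h,
                                unsigned char *dst)
{
    assert(src != NULL && dst != NULL && w > 0 && h > 0);
    size_t out_w = 2 * (size_t)w;
    for (int y = 0; y < h; y++) {
        const unsigned char *row = src + (size_t)y * w;
        unsigned char *out = dst + (size_t)y * out_w;
        for (int x = 0; x < w; x++) {
            out[x] = row[x];               /* [1]: padded original */
            out[w + (w - 1 - x)] = row[x]; /* [2]: flipped copy at x = w */
        }
    }
}
```

A one-row input `{1,2,3}` becomes `{1,2,3,3,2,1}`, the same symmetric layout the ffplay command shows.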

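The main structs involved in filtering (the AV* ones belong to libavfilter, the others to the ffmpeg command-line tool itself) are: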

- FilterGraph, AVFilterGraph
- InputFilter, InputStream, OutputFilter, OutputStream
- AVFilter, AVFilterContext
- AVFilterLink
- AVFilterPad

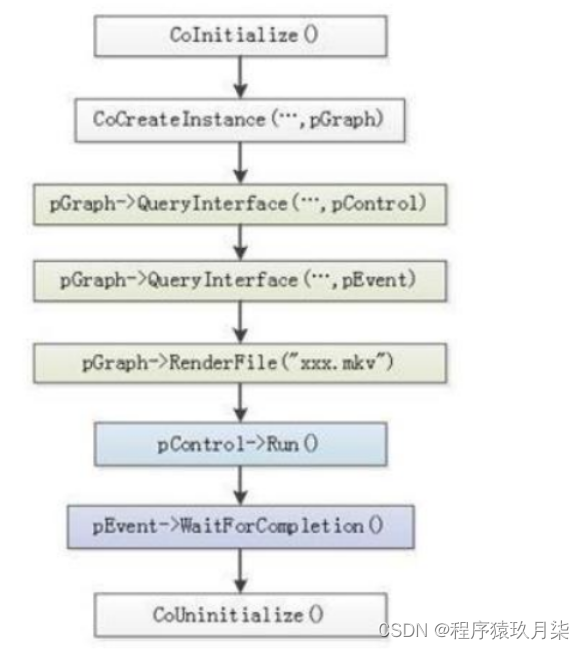

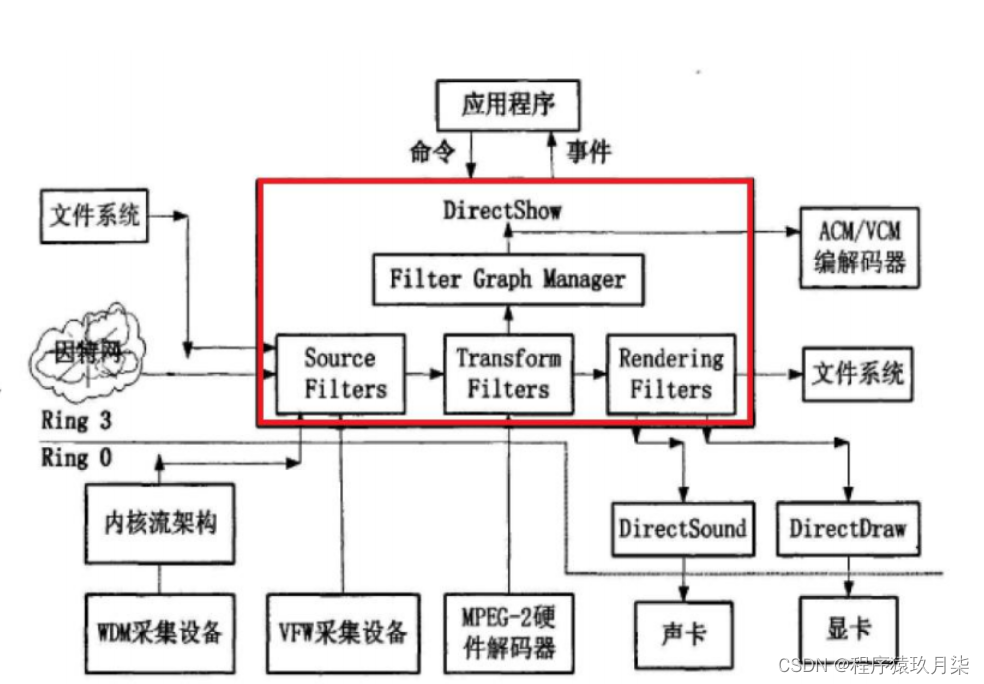

2. DirectShow

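DirectShow (DShow for short) is a streaming media framework for the Windows platform that provides high-quality capture and playback of multimedia streams. It supports a wide variety of media file formats, including ASF, MPEG, AVI, MP3, and WAV, and can capture multimedia streams through WDM drivers or legacy VFW (Video for Windows) drivers.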

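DirectShow greatly simplifies media playback, format conversion, and capture. At the same time, it exposes the underlying stream-control architecture for custom solutions, so users can write their own DirectShow components to support new file formats or other custom needs.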

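DirectShow is based on the **Component Object Model (COM)**, so writing a DirectShow application requires knowing how to write COM client code. Most applications do not need to implement their own COM objects, because DirectShow supplies most of the components they need; but if you extend DirectShow with your own components, you must implement them as COM objects.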

Typical applications built with DirectShow include DVD players, video editing programs, AVI-to-ASF converters, MP3 players, and digital video capture applications.

The DirectShow architecture is shown in the figure below:

    /**
     * @file
     * API example for decoding and filtering
     * @example filtering_video.c
     */

    #include <stdio.h>
    #include <stdlib.h>

    #include <libavcodec/avcodec.h>
    #include <libavformat/avformat.h>
    #include <libavfilter/buffersink.h>
    #include <libavfilter/buffersrc.h>
    #include <libavutil/opt.h>

    const char *filter_descr = "scale=640:480,hflip";
    /* other way:
       scale=78:24 [scl]; [scl] transpose=cclock // assumes "[in]" and "[out]" to be input output pads respectively
     */

    static AVFormatContext *fmt_ctx;
    static AVCodecContext *dec_ctx;
    AVFilterContext *buffersink_ctx;
    AVFilterContext *buffersrc_ctx;
    AVFilterGraph *filter_graph;
    static int video_stream_index = -1;

    static int open_input_file(const char *filename)
    {
        int ret;
        const AVCodec *dec; /* const matches av_find_best_stream() in FFmpeg 5.0+; use AVCodec * on 4.x */

        if ((ret = avformat_open_input(&fmt_ctx, filename, NULL, NULL)) < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot open input file\n");
            return ret;
        }

        if ((ret = avformat_find_stream_info(fmt_ctx, NULL)) < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot find stream information\n");
            return ret;
        }

        /* select the video stream */
        ret = av_find_best_stream(fmt_ctx, AVMEDIA_TYPE_VIDEO, -1, -1, &dec, 0);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot find a video stream in the input file\n");
            return ret;
        }
        video_stream_index = ret;

        /* create decoding context */
        dec_ctx = avcodec_alloc_context3(dec);
        if (!dec_ctx)
            return AVERROR(ENOMEM);
        if ((ret = avcodec_parameters_to_context(dec_ctx, fmt_ctx->streams[video_stream_index]->codecpar)) < 0)
            return ret;

        /* init the video decoder */
        if ((ret = avcodec_open2(dec_ctx, dec, NULL)) < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot open video decoder\n");
            return ret;
        }

        return 0;
    }

    static int init_filters(const char *filters_descr)
    {
        char args[512];
        int ret = 0;
        const AVFilter *buffersrc  = avfilter_get_by_name("buffer");
        const AVFilter *buffersink = avfilter_get_by_name("buffersink");
        AVFilterInOut *outputs = avfilter_inout_alloc();
        AVFilterInOut *inputs  = avfilter_inout_alloc();
        AVRational time_base = fmt_ctx->streams[video_stream_index]->time_base;
        /* write_frame() assumes yuv420p, so the sink is restricted to that format */
        enum AVPixelFormat pix_fmts[] = { AV_PIX_FMT_YUV420P, AV_PIX_FMT_NONE };

        filter_graph = avfilter_graph_alloc();
        if (!outputs || !inputs || !filter_graph) {
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* buffer video source: the decoded frames from the decoder will be inserted here. */
        snprintf(args, sizeof(args),
                 "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                 dec_ctx->width, dec_ctx->height, dec_ctx->pix_fmt,
                 time_base.num, time_base.den,
                 dec_ctx->sample_aspect_ratio.num, dec_ctx->sample_aspect_ratio.den);

        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",
                                           args, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer source\n");
            goto end;
        }

        /* buffer video sink: to terminate the filter chain. */
        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",
                                           NULL, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer sink\n");
            goto end;
        }

        ret = av_opt_set_int_list(buffersink_ctx, "pix_fmts", pix_fmts,
                                  AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output pixel format\n");
            goto end;
        }

        /*
         * Set the endpoints for the filter graph. The filter_graph will
         * be linked to the graph described by filters_descr.
         */

        /*
         * The buffer source output must be connected to the input pad of
         * the first filter described by filters_descr; since the first
         * filter input label is not specified, it is set to "in" by
         * default.
         */
        outputs->name       = av_strdup("in");
        outputs->filter_ctx = buffersrc_ctx;
        outputs->pad_idx    = 0;
        outputs->next       = NULL;

        /*
         * The buffer sink input must be connected to the output pad of
         * the last filter described by filters_descr; since the last
         * filter output label is not specified, it is set to "out" by
         * default.
         */
        inputs->name       = av_strdup("out");
        inputs->filter_ctx = buffersink_ctx;
        inputs->pad_idx    = 0;
        inputs->next       = NULL;

        if ((ret = avfilter_graph_parse_ptr(filter_graph, filters_descr,
                                            &inputs, &outputs, NULL)) < 0)
            goto end;

        if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0)
            goto end;

    end:
        avfilter_inout_free(&inputs);
        avfilter_inout_free(&outputs);

        return ret;
    }

    /// Write a frame to the file as raw yuv420p
    FILE *g__file_fd;

    static void write_frame(const AVFrame *frame)
    {
        static int printf_flag = 0;
        int y;

        if (!printf_flag) {
            printf_flag = 1;
            printf("frame width=%d, frame height=%d\n", frame->width, frame->height);
            if (frame->format == AV_PIX_FMT_YUV420P)
                printf("format is yuv420p\n");
            else
                printf("format is %d\n", frame->format);
        }

        /* Copy row by row: linesize[] may exceed the visible width because of
         * padding. Assumes even width and height (yuv420p chroma is w/2 x h/2). */
        for (y = 0; y < frame->height; y++)
            fwrite(frame->data[0] + y * frame->linesize[0], 1, frame->width, g__file_fd);
        for (y = 0; y < frame->height / 2; y++)
            fwrite(frame->data[1] + y * frame->linesize[1], 1, frame->width / 2, g__file_fd);
        for (y = 0; y < frame->height / 2; y++)
            fwrite(frame->data[2] + y * frame->linesize[2], 1, frame->width / 2, g__file_fd);
    }

    int main(int argc, char **argv)
    {
        int ret;
        AVPacket packet;
        AVFrame *frame;
        AVFrame *filt_frame;

        if (argc != 2) {
            fprintf(stderr, "Usage: %s file\n", argv[0]);
            exit(1);
        }

        /* "wb": the output is raw binary data */
        g__file_fd = fopen("yuv888.yuv", "wb");
        if (!g__file_fd) {
            perror("Could not open yuv888.yuv");
            exit(1);
        }

        frame = av_frame_alloc();
        filt_frame = av_frame_alloc();
        if (!frame || !filt_frame) {
            perror("Could not allocate frame");
            exit(1);
        }

        if ((ret = open_input_file(argv[1])) < 0)
            goto end;
        if ((ret = init_filters(filter_descr)) < 0)
            goto end;

        /* read all packets */
        while (1) {
            if ((ret = av_read_frame(fmt_ctx, &packet)) < 0)
                break;

            if (packet.stream_index == video_stream_index) {
                ret = avcodec_send_packet(dec_ctx, &packet);
                if (ret < 0) {
                    av_log(NULL, AV_LOG_ERROR, "Error while sending a packet to the decoder\n");
                    break;
                }

                while (ret >= 0) {
                    ret = avcodec_receive_frame(dec_ctx, frame);
                    if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF) {
                        break;
                    } else if (ret < 0) {
                        av_log(NULL, AV_LOG_ERROR, "Error while receiving a frame from the decoder\n");
                        goto end;
                    }

                    frame->pts = frame->best_effort_timestamp;

                    /* push the decoded frame into the filtergraph */
                    if (av_buffersrc_add_frame_flags(buffersrc_ctx, frame, AV_BUFFERSRC_FLAG_KEEP_REF) < 0) {
                        av_log(NULL, AV_LOG_ERROR, "Error while feeding the filtergraph\n");
                        break;
                    }

                    /* pull filtered frames from the filtergraph; a separate variable
                     * keeps ret >= 0 so the decoder loop keeps draining frames */
                    while (1) {
                        int fret = av_buffersink_get_frame(buffersink_ctx, filt_frame);
                        if (fret == AVERROR(EAGAIN) || fret == AVERROR_EOF)
                            break;
                        if (fret < 0) {
                            ret = fret;
                            goto end;
                        }
                        write_frame(filt_frame);
                        av_frame_unref(filt_frame);
                    }
                    av_frame_unref(frame);
                }
            }
            av_packet_unref(&packet);
        }

    end:
        avfilter_graph_free(&filter_graph);
        avcodec_free_context(&dec_ctx);
        avformat_close_input(&fmt_ctx);
        av_frame_free(&frame);
        av_frame_free(&filt_frame);
        fclose(g__file_fd);

        if (ret < 0 && ret != AVERROR_EOF) {
            fprintf(stderr, "Error occurred: %s\n", av_err2str(ret));
            exit(1);
        }

        exit(0);
    }
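write_frame stores every frame as raw yuv420p: a full-resolution Y plane followed by U and V planes at half width and half height. The helper below (hypothetical name, assuming even dimensions as write_frame does) gives the expected size of one frame in yuv888.yuv.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: bytes per raw yuv420p frame. The Y plane is w x h,
 * U and V are (w/2) x (h/2) each, i.e. 1.5 bytes per pixel. */
static int64_t yuv420p_frame_size(int w, int h)
{
    assert(w > 0 && h > 0 && w % 2 == 0 && h % 2 == 0);
    return (int64_t)w * h + 2 * (int64_t)(w / 2) * (h / 2);
}
```

With filter_descr = "scale=640:480,hflip", each frame appended to yuv888.yuv should take 460800 bytes, so the file size divided by 460800 gives the number of frames written. Assuming the frames are indeed yuv420p, the result can be checked with `ffplay -f rawvideo -pixel_format yuv420p -video_size 640x480 yuv888.yuv`.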
