目錄

一、第三方庫導入

二、數據集準備

三、使用轉置卷積的生成器

四、使用卷積的判別器

五、生成器生成圖像

六、主程序

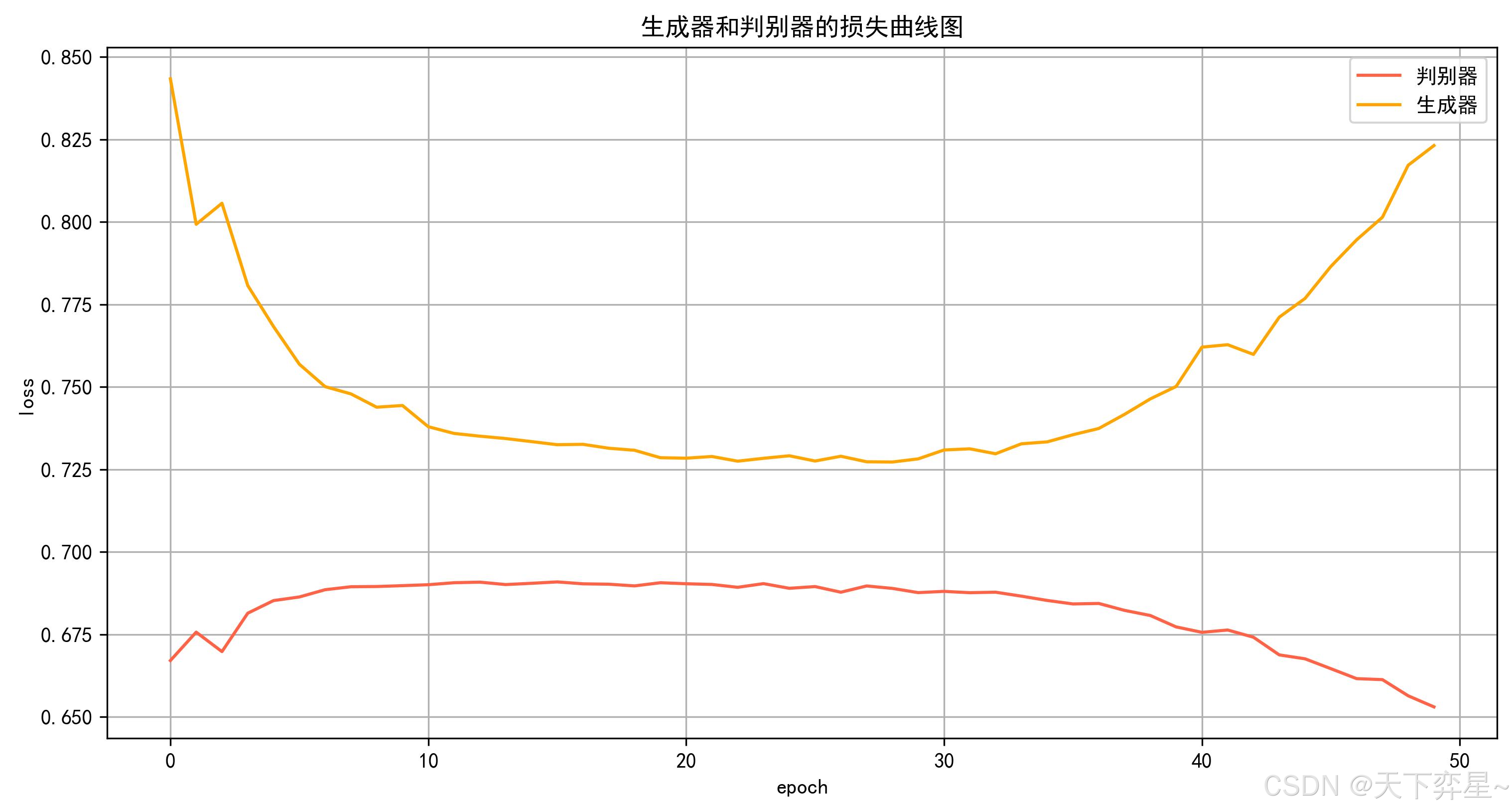

七、運行結果

7.1 生成器和判別器的損失函數圖像

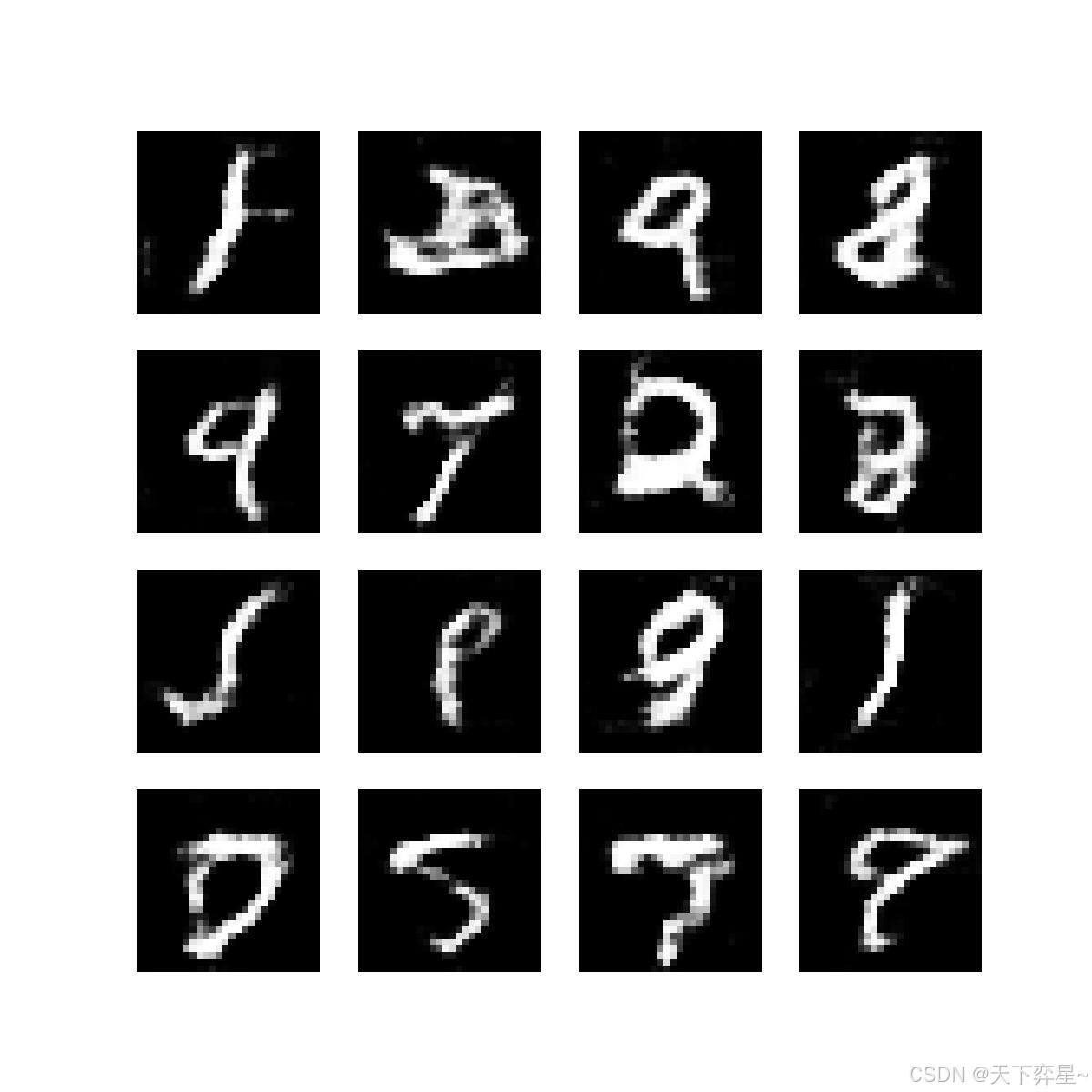

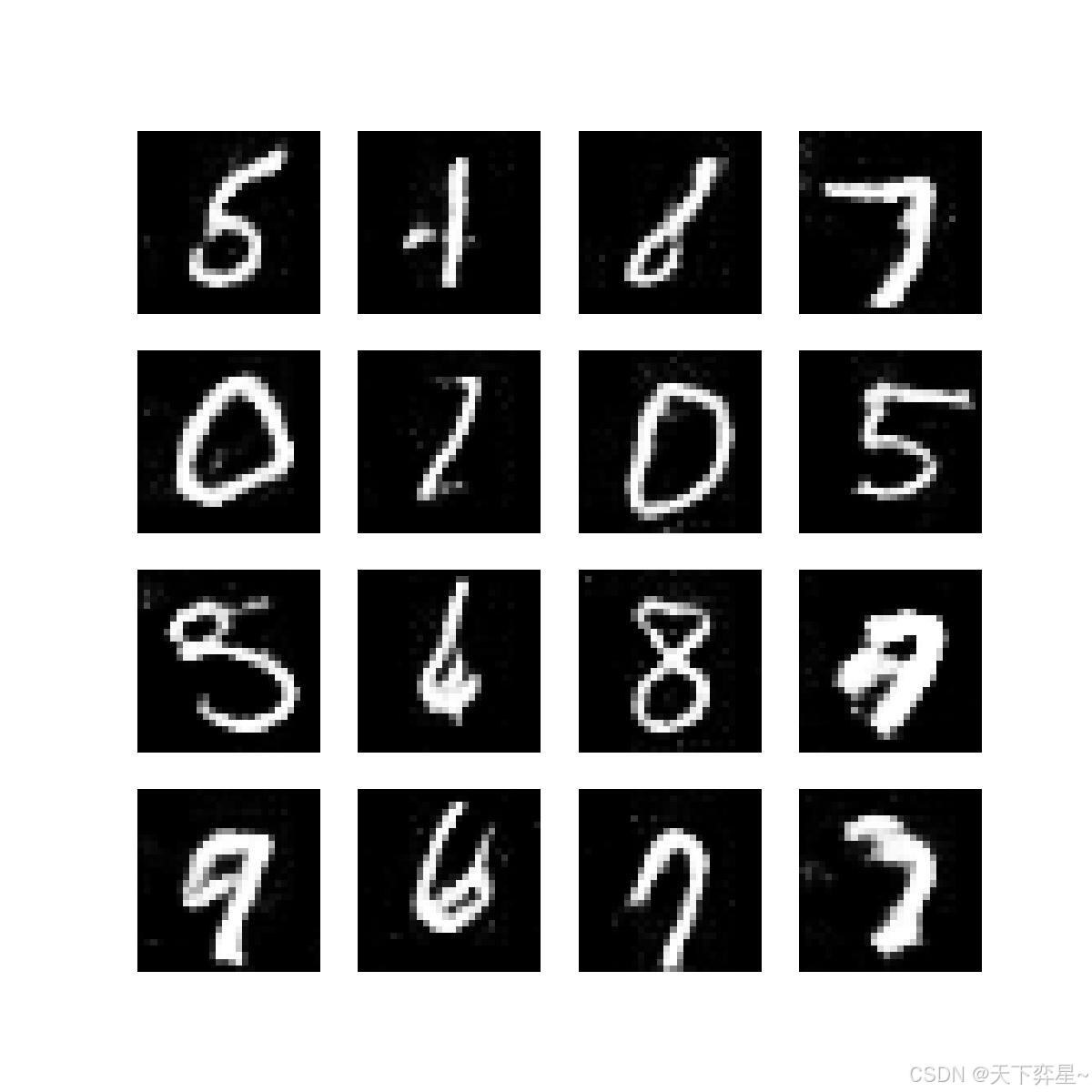

7.2 訓練過程中生成器生成的圖像

八、完整的pytorch代碼

由于之前寫gans的代碼時,我的生成器和判別器不是使用的全連接網絡就是卷積,但是無論這兩種方法怎么組合,最后生成器生成的圖像效果都很不好。因此最后我選擇了生成器使用轉置卷積,而判別器使用卷積,最后得到的生成圖像確實效果比之前好很多了。

一、第三方庫導入

import torch

import torch.nn as nn

import numpy as np

import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei'] # 設置中文字體

plt.rcParams['axes.unicode_minus'] = False # 正常顯示負號

from torchvision import transforms

import os

from PIL import Image

from torch.utils.data import Dataset, DataLoader二、數據集準備

# 手寫數字數據集

class MINISTDataset(Dataset):def __init__(self, files, root_dir, transform=None):self.files = filesself.root_dir = root_dirself.transform = transformself.labels = []for f in files:parts = f.split("_")p = parts[2].split(".")[0]self.labels.append(int(p))def __len__(self):return len(self.files)def __getitem__(self, idx):img_path = os.path.join(self.root_dir, self.files[idx])img = Image.open(img_path).convert("L")if self.transform:img = self.transform(img)label = self.labels[idx]return img, label三、使用轉置卷積的生成器

class Generator(nn.Module):def __init__(self, latent_dim=100):super().__init__()self.main = nn.Sequential(# 輸入: latent_dim維噪聲 -> 輸出: 7x7x256nn.ConvTranspose2d(latent_dim, 256, kernel_size=7, stride=1, padding=0, bias=False),nn.BatchNorm2d(256),nn.ReLU(True),# 上采樣: 7x7 -> 14x14nn.ConvTranspose2d(256, 128, kernel_size=4, stride=2, padding=1, bias=False),nn.BatchNorm2d(128),nn.ReLU(True),# 上采樣: 14x14 -> 28x28nn.ConvTranspose2d(128, 64, kernel_size=4, stride=2, padding=1, bias=False),nn.BatchNorm2d(64),nn.ReLU(True),# 輸出層: 28x28x1nn.ConvTranspose2d(64, 1, kernel_size=3, stride=1, padding=1, bias=False),nn.Tanh())def forward(self, x):# 將噪聲重塑為 (batch_size, latent_dim, 1, 1)x = x.view(x.size(0), -1, 1, 1)return self.main(x)

四、使用卷積的判別器

class Discriminator(nn.Module):def __init__(self):super().__init__()self.main = nn.Sequential(# 輸入: 1x28x28nn.Conv2d(1, 32, kernel_size=4, stride=2, padding=1), # 輸出: 32x14x14nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Conv2d(32, 64, kernel_size=4, stride=2, padding=1), # 輸出: 64x7x7nn.BatchNorm2d(64),nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1), # 輸出: 128x7x7nn.BatchNorm2d(128),nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Flatten(),nn.Linear(128 * 7 * 7, 1),nn.Sigmoid())def forward(self, x):return self.main(x)五、生成器生成圖像

# 展示生成器生成的圖像

def gen_img_plot(test_input, save_path):gen_imgs = gen(test_input).detach().cpu()gen_imgs = gen_imgs.view(-1, 28, 28)plt.figure(figsize=(4, 4))for i in range(16):plt.subplot(4, 4, i + 1)plt.imshow(gen_imgs[i], cmap="gray")plt.axis("off")plt.savefig(save_path, dpi=300)plt.close()六、主程序

if __name__ == "__main__":# 對數據做歸一化處理transforms = transforms.Compose([transforms.Resize((28, 28)),transforms.ToTensor(),transforms.Normalize((0.5,), (0.5,))])# 路徑base_dir = 'C:\\Users\\Administrator\\PycharmProjects\\CNN'train_dir = os.path.join(base_dir, "minist_train")# 獲取文件夾里圖像的名稱train_files = [f for f in os.listdir(train_dir) if f.endswith('.jpg')]# 創建數據集和數據加載器train_dataset = MINISTDataset(train_files, train_dir, transform=transforms)train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)# 參數epochs = 50lr = 0.0002# 初始化模型的優化器和損失函數gen = Generator()dis = Discriminator()d_optim = torch.optim.Adam(dis.parameters(), lr=lr, betas=(0.5, 0.999)) # 判別器的優化器g_optim = torch.optim.Adam(gen.parameters(), lr=lr, betas=(0.5, 0.999)) # 生成器的優化器loss_fn = torch.nn.BCELoss() # 二分類交叉熵損失函數# 記錄lossD_loss = []G_loss = []# 訓練for epoch in range(epochs):d_epoch_loss = 0g_epoch_loss = 0count = len(train_loader) # 返回批次數for step, (img, _) in enumerate(train_loader):# 每個批次的大小size = img.size(0)random_noise = torch.randn(size, 100)# 判別器訓練d_optim.zero_grad()real_output = dis(img)d_real_loss = loss_fn(real_output, torch.ones_like(real_output))# d_real_loss.backward()gen_img = gen(random_noise)gen_img = gen_img.view(size, 1, 28, 28)fake_output = dis(gen_img.detach())d_fake_loss = loss_fn(fake_output, torch.zeros_like(fake_output))# d_fake_loss.backward()d_loss = (d_real_loss + d_fake_loss) / 2d_loss.backward()d_optim.step()# 生成器的訓練g_optim.zero_grad()fake_output = dis(gen_img)g_loss = loss_fn(fake_output, torch.ones_like(fake_output))g_loss.backward()g_optim.step()# 計算在一個epoch里面所有的g_loss和d_losswith torch.no_grad():d_epoch_loss += d_lossg_epoch_loss += g_loss# 計算平均損失值with torch.no_grad():d_epoch_loss = d_epoch_loss / countg_epoch_loss = g_epoch_loss / countD_loss.append(d_epoch_loss.item())G_loss.append(g_epoch_loss.item())print("Epoch:", epoch, " D loss:", d_epoch_loss.item(), " G Loss:", g_epoch_loss.item())# 每隔2個epoch繪制生成器生成的圖像if (epoch + 1) % 2 == 0:test_input = torch.randn(16, 100)name = f"gen_img_{epoch}.jpg"save_path = os.path.join('C:\\Users\\Administrator\\PycharmProjects\\CNN\\gen_img_11', name)gen_img_plot(test_input, save_path)# 繪制損失曲線圖plt.figure(figsize=(12, 6))plt.plot(D_loss, label="判別器", color="tomato")plt.plot(G_loss, label="生成器", color="orange")plt.xlabel("epoch")plt.ylabel("loss")plt.title("生成器和判別器的損失曲線圖")plt.legend()plt.grid()plt.savefig("C:\\Users\\Administrator\\PycharmProjects\\CNN\\gen_dis_loss_11.jpg", dpi=300, bbox_inches="tight")plt.close()七、運行結果

7.1 生成器和判別器的損失函數圖像

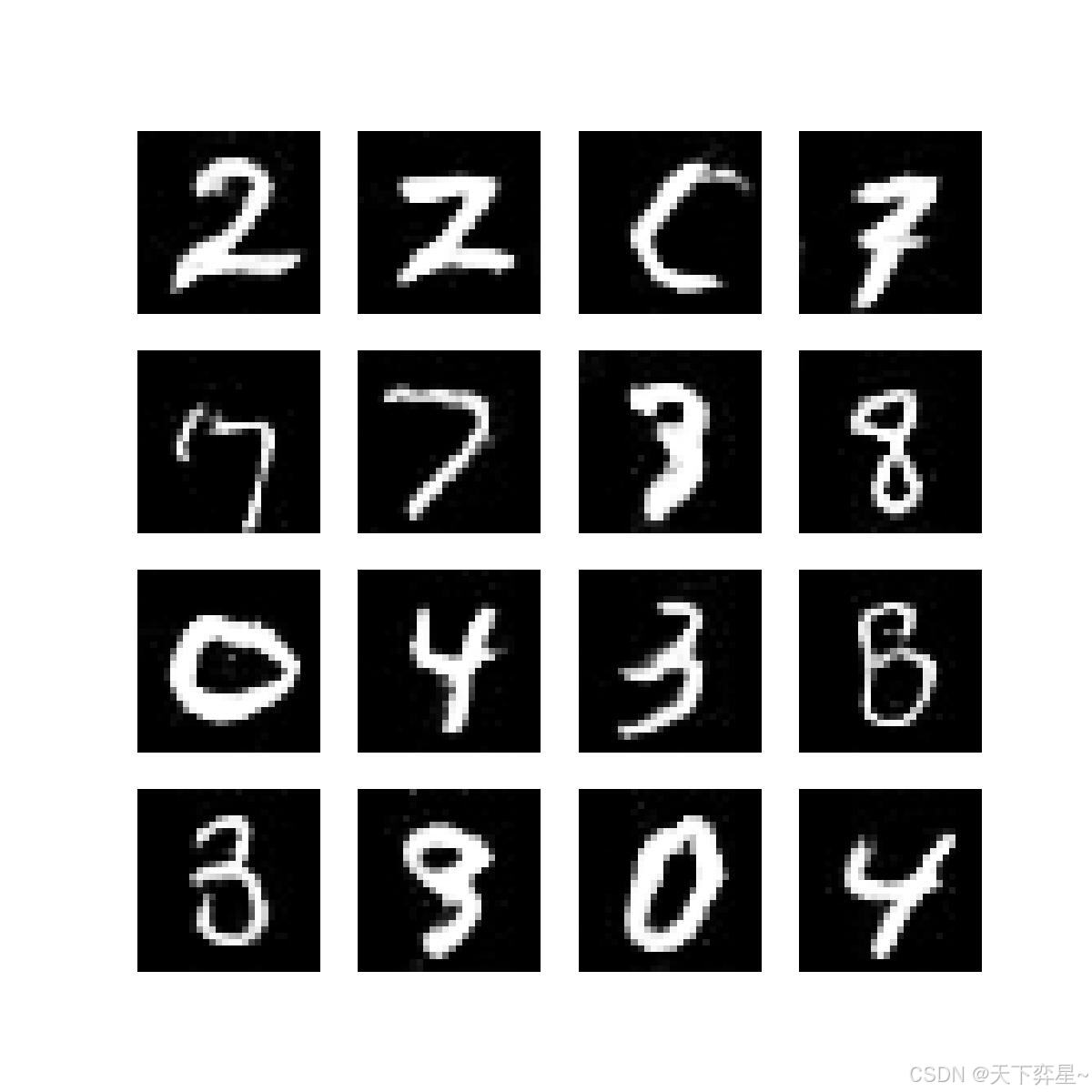

7.2 訓練過程中生成器生成的圖像

這里只展示一部分

gen_img_1.jpg

gen_img_25.jpg

gen_img_49.jpg

八、完整的pytorch代碼

import torch

import torch.nn as nn

import numpy as np

import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei'] # 設置中文字體

plt.rcParams['axes.unicode_minus'] = False # 正常顯示負號

from torchvision import transforms

import os

from PIL import Image

from torch.utils.data import Dataset, DataLoader# 手寫數字數據集

class MINISTDataset(Dataset):def __init__(self, files, root_dir, transform=None):self.files = filesself.root_dir = root_dirself.transform = transformself.labels = []for f in files:parts = f.split("_")p = parts[2].split(".")[0]self.labels.append(int(p))def __len__(self):return len(self.files)def __getitem__(self, idx):img_path = os.path.join(self.root_dir, self.files[idx])img = Image.open(img_path).convert("L")if self.transform:img = self.transform(img)label = self.labels[idx]return img, label# 改進的生成器(使用轉置卷積)

class Generator(nn.Module):def __init__(self, latent_dim=100):super().__init__()self.main = nn.Sequential(# 輸入: latent_dim維噪聲 -> 輸出: 7x7x256nn.ConvTranspose2d(latent_dim, 256, kernel_size=7, stride=1, padding=0, bias=False),nn.BatchNorm2d(256),nn.ReLU(True),# 上采樣: 7x7 -> 14x14nn.ConvTranspose2d(256, 128, kernel_size=4, stride=2, padding=1, bias=False),nn.BatchNorm2d(128),nn.ReLU(True),# 上采樣: 14x14 -> 28x28nn.ConvTranspose2d(128, 64, kernel_size=4, stride=2, padding=1, bias=False),nn.BatchNorm2d(64),nn.ReLU(True),# 輸出層: 28x28x1nn.ConvTranspose2d(64, 1, kernel_size=3, stride=1, padding=1, bias=False),nn.Tanh())def forward(self, x):# 將噪聲重塑為 (batch_size, latent_dim, 1, 1)x = x.view(x.size(0), -1, 1, 1)return self.main(x)# 改進的判別器(使用深度卷積網絡)

class Discriminator(nn.Module):def __init__(self):super().__init__()self.main = nn.Sequential(# 輸入: 1x28x28nn.Conv2d(1, 32, kernel_size=4, stride=2, padding=1), # 輸出: 32x14x14nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Conv2d(32, 64, kernel_size=4, stride=2, padding=1), # 輸出: 64x7x7nn.BatchNorm2d(64),nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1), # 輸出: 128x7x7nn.BatchNorm2d(128),nn.LeakyReLU(0.2, inplace=True),nn.Dropout2d(0.3),nn.Flatten(),nn.Linear(128 * 7 * 7, 1),nn.Sigmoid())def forward(self, x):return self.main(x)# 展示生成器生成的圖像

def gen_img_plot(test_input, save_path):gen_imgs = gen(test_input).detach().cpu()gen_imgs = gen_imgs.view(-1, 28, 28)plt.figure(figsize=(4, 4))for i in range(16):plt.subplot(4, 4, i + 1)plt.imshow(gen_imgs[i], cmap="gray")plt.axis("off")plt.savefig(save_path, dpi=300)plt.close()if __name__ == "__main__":# 對數據做歸一化處理transforms = transforms.Compose([transforms.Resize((28, 28)),transforms.ToTensor(),transforms.Normalize((0.5,), (0.5,))])# 路徑base_dir = 'C:\\Users\\Administrator\\PycharmProjects\\CNN'train_dir = os.path.join(base_dir, "minist_train")# 獲取文件夾里圖像的名稱train_files = [f for f in os.listdir(train_dir) if f.endswith('.jpg')]# 創建數據集和數據加載器train_dataset = MINISTDataset(train_files, train_dir, transform=transforms)train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)# 參數epochs = 50lr = 0.0002# 初始化模型的優化器和損失函數gen = Generator()dis = Discriminator()d_optim = torch.optim.Adam(dis.parameters(), lr=lr, betas=(0.5, 0.999)) # 判別器的優化器g_optim = torch.optim.Adam(gen.parameters(), lr=lr, betas=(0.5, 0.999)) # 生成器的優化器loss_fn = torch.nn.BCELoss() # 二分類交叉熵損失函數# 記錄lossD_loss = []G_loss = []# 訓練for epoch in range(epochs):d_epoch_loss = 0g_epoch_loss = 0count = len(train_loader) # 返回批次數for step, (img, _) in enumerate(train_loader):# 每個批次的大小size = img.size(0)random_noise = torch.randn(size, 100)# 判別器訓練d_optim.zero_grad()real_output = dis(img)d_real_loss = loss_fn(real_output, torch.ones_like(real_output))# d_real_loss.backward()gen_img = gen(random_noise)gen_img = gen_img.view(size, 1, 28, 28)fake_output = dis(gen_img.detach())d_fake_loss = loss_fn(fake_output, torch.zeros_like(fake_output))# d_fake_loss.backward()d_loss = (d_real_loss + d_fake_loss) / 2d_loss.backward()d_optim.step()# 生成器的訓練g_optim.zero_grad()fake_output = dis(gen_img)g_loss = loss_fn(fake_output, torch.ones_like(fake_output))g_loss.backward()g_optim.step()# 計算在一個epoch里面所有的g_loss和d_losswith torch.no_grad():d_epoch_loss += d_lossg_epoch_loss += g_loss# 計算平均損失值with torch.no_grad():d_epoch_loss = d_epoch_loss / countg_epoch_loss = g_epoch_loss / countD_loss.append(d_epoch_loss.item())G_loss.append(g_epoch_loss.item())print("Epoch:", epoch, " D loss:", d_epoch_loss.item(), " G Loss:", g_epoch_loss.item())# 每隔2個epoch繪制生成器生成的圖像if (epoch + 1) % 2 == 0:test_input = torch.randn(16, 100)name = f"gen_img_{epoch}.jpg"save_path = os.path.join('C:\\Users\\Administrator\\PycharmProjects\\CNN\\gen_img_11', name)gen_img_plot(test_input, save_path)# 繪制損失曲線圖plt.figure(figsize=(12, 6))plt.plot(D_loss, label="判別器", color="tomato")plt.plot(G_loss, label="生成器", color="orange")plt.xlabel("epoch")plt.ylabel("loss")plt.title("生成器和判別器的損失曲線圖")plt.legend()plt.grid()plt.savefig("C:\\Users\\Administrator\\PycharmProjects\\CNN\\gen_dis_loss_11.jpg", dpi=300, bbox_inches="tight")plt.close()

)

)

)