作業:day43的時候我們安排大家對自己找的數據集用簡單cnn訓練,現在可以嘗試下借助這幾天的知識來實現精度的進一步提高

還是繼續用上次的街頭食物分類數據集,既然已經統一圖片尺寸到了140x140,所以這次選用輕量化模型?MobileNetV3 ,問就是這個尺寸的圖片可以直接進入這個模型訓練,而且輕量化訓練參數還更少,多是一件美事啊(

前面預處理和數據加載還是和之前一樣的

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

import torchvision.models as models

from torch.utils.data import Dataset, DataLoader, random_split

from PIL import Image

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import os# 設置中文字體支持

plt.rcParams["font.family"] = ["SimHei"]

plt.rcParams['axes.unicode_minus'] = False # 解決負號顯示問題# 檢查GPU是否可用

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(f"使用設備: {device}")# 1. 數據預處理

# 圖像尺寸統一成140x140

class PhotoResizer:def __init__(self, target_size=140, fill_color=114): # target_size: 目標正方形尺寸,fill_color: 填充使用的灰度值self.target_size = target_sizeself.fill_color = fill_color# 預定義轉換方法self.to_tensor = transforms.ToTensor()self.normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406],std=[0.229, 0.224, 0.225])def __call__(self, img):"""智能處理流程:1. 對小圖像進行填充,對大圖像進行智能裁剪2. 保持長寬比的情況下進行保護性處理"""w, h = img.sizeif w == h == self.target_size: # 情況1:已經是目標尺寸pass # 無需處理elif min(w, h) < self.target_size: # 情況2:至少有一個維度小于目標尺寸(需要填充)img = self.padding_resize(img)else: # 情況3:兩個維度都大于目標尺寸(智能裁剪)img = self.crop_resize(img)# 最終統一轉換return self.normalize(self.to_tensor(img))def padding_resize(self, img): # 等比縮放后居中填充不足部分w, h = img.sizescale = self.target_size / min(w, h)new_w, new_h = int(w * scale), int(h * scale)img = img.resize((new_w, new_h), Image.BILINEAR)# 等比縮放 + 居中填充# 計算需要填充的像素數(4個值:左、上、右、下)pad_left = (self.target_size - new_w) // 2pad_top = (self.target_size - new_h) // 2pad_right = self.target_size - new_w - pad_leftpad_bottom = self.target_size - new_h - pad_topreturn transforms.functional.pad(img, [pad_left, pad_top, pad_right, pad_bottom], self.fill_color)def crop_resize(self, img): # 等比縮放后中心裁剪w, h = img.sizeratio = w / h# 計算新尺寸(保護長邊)if ratio < 0.9: # 豎圖new_size = (self.target_size, int(h * self.target_size / w))elif ratio > 1.1: # 橫圖new_size = (int(w * self.target_size / h), self.target_size)else: # 近似正方形new_size = (self.target_size, self.target_size)img = img.resize(new_size, Image.BILINEAR)return transforms.functional.center_crop(img, self.target_size)# 訓練集測試集預處理

train_transform = transforms.Compose([PhotoResizer(target_size=140), # 自動處理所有情況transforms.RandomHorizontalFlip(), # 隨機水平翻轉圖像(概率0.5)transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1), # 隨機顏色抖動:亮度、對比度、飽和度和色調隨機變化transforms.RandomRotation(15), # 隨機旋轉圖像(最大角度15度)

])test_transform = transforms.Compose([PhotoResizer(target_size=140)

])# 2. 創建dataset和dataloader實例

class StreetFoodDataset(Dataset):def __init__(self, root_dir, transform=None):self.root_dir = root_dirself.transform = transformself.image_paths = []self.labels = []self.class_to_idx = {}# 遍歷目錄獲取類別映射classes = sorted(entry.name for entry in os.scandir(root_dir) if entry.is_dir())self.class_to_idx = {cls_name: i for i, cls_name in enumerate(classes)}# 收集圖像路徑和標簽for class_name in classes:class_dir = os.path.join(root_dir, class_name)for img_name in os.listdir(class_dir):if img_name.lower().endswith(('.png', '.jpg', '.jpeg')):self.image_paths.append(os.path.join(class_dir, img_name))self.labels.append(self.class_to_idx[class_name])def __len__(self):return len(self.image_paths)def __getitem__(self, idx):img_path = self.image_paths[idx]image = Image.open(img_path).convert('RGB')label = self.labels[idx]if self.transform:image = self.transform(image)return image, label# 數據集路徑(Kaggle路徑示例)

dataset_path = '/kaggle/input/popular-street-foods/popular_street_foods/dataset'# 創建數據集實例

# 先創建基礎數據集

full_dataset = StreetFoodDataset(root_dir=dataset_path)# 分割數據集

train_size = int(0.8 * len(full_dataset))

test_size = len(full_dataset) - train_size

train_dataset, test_dataset = random_split(full_dataset, [train_size, test_size])

train_dataset.dataset.transform = train_transform

test_dataset.dataset.transform = test_transform# 創建數據加載器

train_loader = DataLoader(train_dataset,batch_size=32,shuffle=True,num_workers=2,pin_memory=True

)test_loader = DataLoader(test_dataset,batch_size=32,shuffle=False,num_workers=2,pin_memory=True

)CBAM模塊定義

# 3. CBAM模塊定義

# 定義通道注意力

class ChannelAttention(nn.Module):def __init__(self, in_channels, ratio=16):"""通道注意力機制初始化參數:in_channels: 輸入特征圖的通道數ratio: 降維比例,用于減少參數量"""super().__init__()# 全局平均池化,將每個通道的特征圖壓縮為1x1,保留通道間的平均值信息self.avg_pool = nn.AdaptiveAvgPool2d(1)# 全局最大池化,將每個通道的特征圖壓縮為1x1,保留通道間的最顯著特征self.max_pool = nn.AdaptiveMaxPool2d(1)# 共享全連接層,用于學習通道間的關系# 先降維(除以ratio),再通過ReLU激活,最后升維回原始通道數self.ratio = max(4, in_channels // ratio) # 確保至少降維到4self.fc = nn.Sequential(nn.Linear(in_channels, self.ratio, bias=False), # 降維層nn.ReLU(), # 非線性激活函數nn.Linear(self.ratio, in_channels, bias=False) # 升維層)# Sigmoid函數將輸出映射到0-1之間,作為各通道的權重self.sigmoid = nn.Sigmoid()def forward(self, x):"""前向傳播函數參數:x: 輸入特征圖,形狀為 [batch_size, channels, height, width]返回:調整后的特征圖,通道權重已應用"""# 獲取輸入特征圖的維度信息,這是一種元組的解包寫法b, c, h, w = x.shape# 對平均池化結果進行處理:展平后通過全連接網絡avg_out = self.fc(self.avg_pool(x).view(b, c))# 對最大池化結果進行處理:展平后通過全連接網絡max_out = self.fc(self.max_pool(x).view(b, c))# 將平均池化和最大池化的結果相加并通過sigmoid函數得到通道權重attention = self.sigmoid(avg_out + max_out).view(b, c, 1, 1)# 將注意力權重與原始特征相乘,增強重要通道,抑制不重要通道return x * attention #這個運算是pytorch的廣播機制# 空間注意力模塊

class SpatialAttention(nn.Module):def __init__(self, kernel_size=7):super().__init__()self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size//2, bias=False)self.sigmoid = nn.Sigmoid()def forward(self, x):# 通道維度池化avg_out = torch.mean(x, dim=1, keepdim=True) # 平均池化:(B,1,H,W)max_out, _ = torch.max(x, dim=1, keepdim=True) # 最大池化:(B,1,H,W)pool_out = torch.cat([avg_out, max_out], dim=1) # 拼接:(B,2,H,W)attention = self.conv(pool_out) # 卷積提取空間特征return x * self.sigmoid(attention) # 特征與空間權重相乘# CBAM模塊

class CBAM(nn.Module):def __init__(self, in_channels, ratio=16, kernel_size=7):super().__init__()self.channel_attn = ChannelAttention(in_channels, ratio)self.spatial_attn = SpatialAttention(kernel_size) def forward(self, x):x = self.channel_attn(x)x = self.spatial_attn(x)return x在模型修改這一部分,原先構想的是在每一個殘差塊后面都加入CBAM模塊,但是 MobileNetV3 有十五個殘差塊啊,當時沒有想到這樣操作鐵定會造成過擬合,訓練集準確旅奇高但測試集準確率簡直一坨

最后是考慮在第3、6、9、12、15個殘差塊后面插入CBAM模塊,情況確實改善了很多

# 4. 插入CBAM的MobileNetV3-large模型定義修改

from torchvision.models.mobilenetv3 import InvertedResidual

class MobileNetV3_CBAM(nn.Module):def __init__(self, num_classes=20, pretrained=True, cbam_ratio=None, cbam_kernel=7):super().__init__()# 加載預訓練模型self.backbone = models.mobilenet_v3_large(pretrained=True)# 首層輸入不用改,這個網絡本就匹配140x140# 殘差塊太多了,在3、6、9、12、15個殘差塊后面插入CBAM模塊self.cbam_layers = nn.ModuleDict()self.cbam_positions = [3, 6, 9, 12, 15]cbam_count = 0for idx, layer in enumerate(self.backbone.features):if isinstance(layer, InvertedResidual):if cbam_count in self.cbam_positions:# 獲取當前層的實際輸出通道數out_channels = layer.block[-1].out_channels # 取最后一個卷積的輸出通道self.cbam_layers[f"cbam_{idx}"] = CBAM(out_channels)cbam_count += 1# 修改分類頭self.backbone.classifier = nn.Sequential(nn.Linear(960, 1280), # MobileNetV3-Large的倒數第二層維度nn.Hardswish(),nn.Dropout(0.2),nn.Linear(1280, num_classes))def forward(self, x):# 提取特征cbam_count = 0for idx, layer in enumerate(self.backbone.features):x = layer(x)if isinstance(layer, InvertedResidual):if cbam_count in self.cbam_positions:x = self.cbam_layers[f"cbam_{idx}"](x)cbam_count += 1# 全局池化和分類x = torch.mean(x, dim=[2, 3]) # 替代AdaptiveAvgPool2dx = self.backbone.classifier(x)return x訓練部分依舊采用之前的分段微調和學習率調整

import time# ======================================================================

# 5. 結合了分階段策略和詳細打印的訓練函數

# ======================================================================

def set_trainable_layers(model, trainable_parts):print(f"\n---> 解凍以下部分并設為可訓練: {trainable_parts}")for name, param in model.named_parameters():param.requires_grad = Falsefor part in trainable_parts:if part in name:param.requires_grad = Truebreakdef train_staged_finetuning(model, criterion, train_loader, test_loader, device, epochs):optimizer = None# 初始化歷史記錄列表,與你的要求一致all_iter_losses, iter_indices = [], []train_acc_history, test_acc_history = [], []train_loss_history, test_loss_history = [], []for epoch in range(1, epochs + 1):epoch_start_time = time.time()# --- 動態調整學習率和凍結層 ---if epoch == 1:print("\n" + "="*50 + "\n **階段 1:訓練注意力模塊和分類頭**\n" + "="*50)set_trainable_layers(model, ["cbam_layers", "backbone.classifier"])optimizer = optim.Adam(filter(lambda p: p.requires_grad, model.parameters()), lr=1e-4, weight_decay=1e-4)elif epoch == 6:print("\n" + "="*50 + "\n **階段 2:解凍高層特征提取層**\n" + "="*50)set_trainable_layers(model, ["cbam_layers", "backbone.classifier", "backbone.features.16", "backbone.features.17"])optimizer = optim.Adam(filter(lambda p: p.requires_grad, model.parameters()), lr=5e-5, weight_decay=1e-4)elif epoch == 21:print("\n" + "="*50 + "\n **階段 3:解凍所有層,進行全局微調**\n" + "="*50)for param in model.parameters(): param.requires_grad = Trueoptimizer = optim.Adam(model.parameters(), lr=1e-5, weight_decay=1e-4)# --- 訓練循環 ---model.train()running_loss, correct, total = 0.0, 0, 0for batch_idx, (data, target) in enumerate(train_loader):data, target = data.to(device), target.to(device)optimizer.zero_grad()output = model(data)loss = criterion(output, target)loss.backward()optimizer.step()# 記錄每個iteration的損失iter_loss = loss.item()all_iter_losses.append(iter_loss)iter_indices.append((epoch - 1) * len(train_loader) + batch_idx + 1)running_loss += iter_loss_, predicted = output.max(1)total += target.size(0)correct += predicted.eq(target).sum().item()# 按你的要求,每100個batch打印一次if (batch_idx + 1) % 100 == 0:print(f'Epoch: {epoch}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} 'f'| 單Batch損失: {iter_loss:.4f} | 累計平均損失: {running_loss/(batch_idx+1):.4f}')epoch_train_loss = running_loss / len(train_loader)epoch_train_acc = 100. * correct / totaltrain_loss_history.append(epoch_train_loss)train_acc_history.append(epoch_train_acc)# --- 測試循環 ---model.eval()test_loss, correct_test, total_test = 0, 0, 0with torch.no_grad():for data, target in test_loader:data, target = data.to(device), target.to(device)output = model(data)test_loss += criterion(output, target).item()_, predicted = output.max(1)total_test += target.size(0)correct_test += predicted.eq(target).sum().item()epoch_test_loss = test_loss / len(test_loader)epoch_test_acc = 100. * correct_test / total_testtest_loss_history.append(epoch_test_loss)test_acc_history.append(epoch_test_acc)# 打印每個epoch的最終結果print(f'Epoch {epoch}/{epochs} 完成 | 耗時: {time.time() - epoch_start_time:.2f}s | 訓練準確率: {epoch_train_acc:.2f}% | 測試準確率: {epoch_test_acc:.2f}%')# 訓練結束后調用繪圖函數print("\n訓練完成! 開始繪制結果圖表...")plot_iter_losses(all_iter_losses, iter_indices)plot_epoch_metrics(train_acc_history, test_acc_history, train_loss_history, test_loss_history)# 返回最終的測試準確率return epoch_test_acc# ======================================================================

# 6. 繪圖函數定義

# ======================================================================

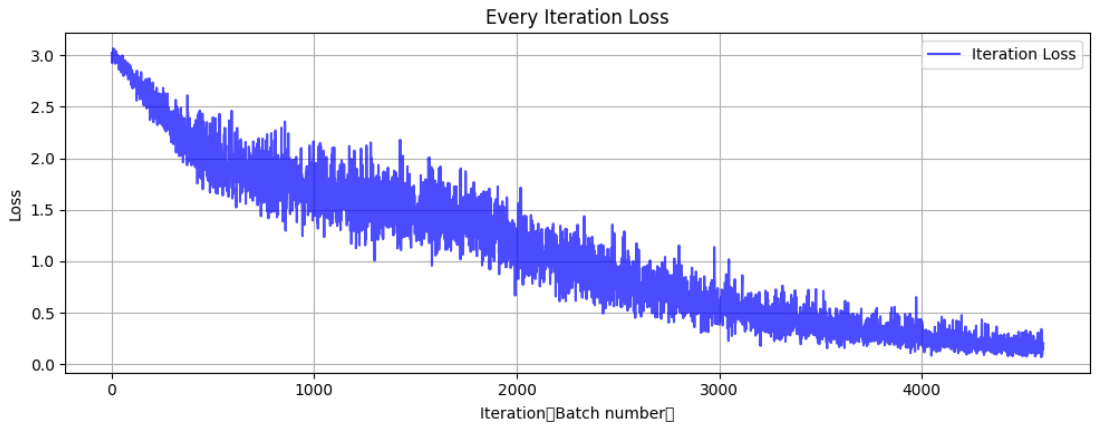

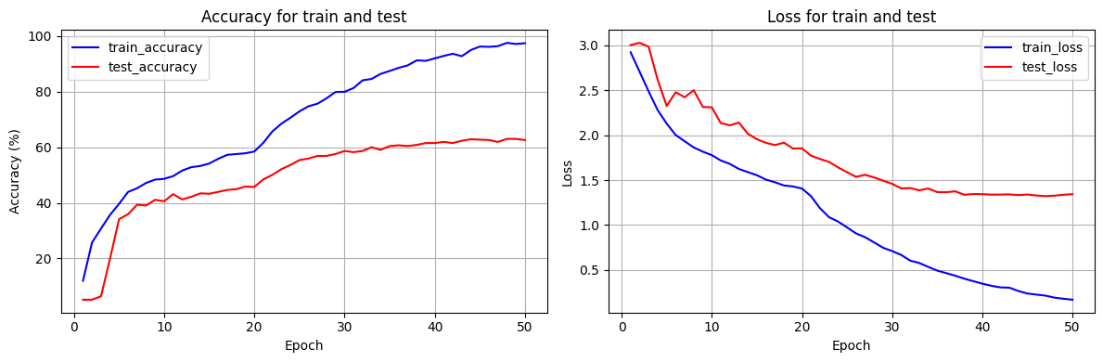

def plot_iter_losses(losses, indices):plt.figure(figsize=(10, 4))plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')plt.xlabel('Iteration(Batch number)')plt.ylabel('Loss')plt.title('Every Iteration Loss')plt.legend()plt.grid(True)plt.tight_layout()plt.show()def plot_epoch_metrics(train_acc, test_acc, train_loss, test_loss):epochs = range(1, len(train_acc) + 1)plt.figure(figsize=(12, 4))plt.subplot(1, 2, 1)plt.plot(epochs, train_acc, 'b-', label='train_accuracy')plt.plot(epochs, test_acc, 'r-', label='test_accuracy')plt.xlabel('Epoch')plt.ylabel('Accuracy (%)')plt.title('Accuracy for train and test')plt.legend(); plt.grid(True)plt.subplot(1, 2, 2)plt.plot(epochs, train_loss, 'b-', label='train_loss')plt.plot(epochs, test_loss, 'r-', label='test_loss')plt.xlabel('Epoch')plt.ylabel('Loss')plt.title('Loss for train and test')plt.legend(); plt.grid(True)plt.tight_layout()plt.show()# ======================================================================

# 7. 執行訓練

# ======================================================================

model = MobileNetV3_CBAM().to(device)

criterion = nn.CrossEntropyLoss()

epochs = 50print("開始使用帶分階段微調策略的MobileNetV3+CBAM模型進行訓練...")

final_accuracy = train_staged_finetuning(model, criterion, train_loader, test_loader, device, epochs)

print(f"訓練完成!最終測試準確率: {final_accuracy:.2f}%")結果也還將就吧

開始使用帶分階段微調策略的MobileNetV3+CBAM模型進行訓練...==================================================**階段 1:訓練注意力模塊和分類頭**

==================================================---> 解凍以下部分并設為可訓練: ['cbam_layers', 'backbone.classifier']

Epoch 1/50 完成 | 耗時: 7.65s | 訓練準確率: 11.98% | 測試準確率: 5.17%

Epoch 2/50 完成 | 耗時: 4.51s | 訓練準確率: 25.72% | 測試準確率: 5.17%

Epoch 3/50 完成 | 耗時: 4.55s | 訓練準確率: 30.76% | 測試準確率: 6.39%

Epoch 4/50 完成 | 耗時: 4.53s | 訓練準確率: 35.69% | 測試準確率: 20.00%

Epoch 5/50 完成 | 耗時: 4.56s | 訓練準確率: 39.64% | 測試準確率: 34.15%==================================================**階段 2:解凍高層特征提取層**

==================================================---> 解凍以下部分并設為可訓練: ['cbam_layers', 'backbone.classifier', 'backbone.features.16', 'backbone.features.17']

Epoch 6/50 完成 | 耗時: 4.53s | 訓練準確率: 43.93% | 測試準確率: 35.92%

Epoch 7/50 完成 | 耗時: 4.49s | 訓練準確率: 45.25% | 測試準確率: 39.32%

Epoch 8/50 完成 | 耗時: 4.57s | 訓練準確率: 47.16% | 測試準確率: 39.05%

Epoch 9/50 完成 | 耗時: 4.48s | 訓練準確率: 48.35% | 測試準確率: 41.09%

Epoch 10/50 完成 | 耗時: 4.59s | 訓練準確率: 48.62% | 測試準確率: 40.54%

Epoch 11/50 完成 | 耗時: 4.61s | 訓練準確率: 49.61% | 測試準確率: 43.13%

Epoch 12/50 完成 | 耗時: 4.55s | 訓練準確率: 51.62% | 測試準確率: 41.22%

Epoch 13/50 完成 | 耗時: 4.59s | 訓練準確率: 52.81% | 測試準確率: 42.18%

Epoch 14/50 完成 | 耗時: 4.70s | 訓練準確率: 53.25% | 測試準確率: 43.40%

Epoch 15/50 完成 | 耗時: 5.03s | 訓練準確率: 54.13% | 測試準確率: 43.27%

Epoch 16/50 完成 | 耗時: 4.64s | 訓練準確率: 55.84% | 測試準確率: 43.95%

Epoch 17/50 完成 | 耗時: 4.68s | 訓練準確率: 57.26% | 測試準確率: 44.63%

Epoch 18/50 完成 | 耗時: 4.61s | 訓練準確率: 57.54% | 測試準確率: 44.90%

Epoch 19/50 完成 | 耗時: 4.41s | 訓練準確率: 57.81% | 測試準確率: 45.85%

Epoch 20/50 完成 | 耗時: 4.50s | 訓練準確率: 58.46% | 測試準確率: 45.71%==================================================**階段 3:解凍所有層,進行全局微調**

==================================================

Epoch 21/50 完成 | 耗時: 5.95s | 訓練準確率: 61.62% | 測試準確率: 48.44%

Epoch 22/50 完成 | 耗時: 6.09s | 訓練準確率: 65.67% | 測試準確率: 50.07%

Epoch 23/50 完成 | 耗時: 5.87s | 訓練準確率: 68.46% | 測試準確率: 52.11%

Epoch 24/50 完成 | 耗時: 5.92s | 訓練準確率: 70.60% | 測試準確率: 53.61%

Epoch 25/50 完成 | 耗時: 5.95s | 訓練準確率: 72.88% | 測試準確率: 55.37%

Epoch 26/50 完成 | 耗時: 6.02s | 訓練準確率: 74.72% | 測試準確率: 55.92%

Epoch 27/50 完成 | 耗時: 5.94s | 訓練準確率: 75.64% | 測試準確率: 56.87%

Epoch 28/50 完成 | 耗時: 5.96s | 訓練準確率: 77.58% | 測試準確率: 56.87%

Epoch 29/50 完成 | 耗時: 5.81s | 訓練準確率: 79.86% | 測試準確率: 57.55%

Epoch 30/50 完成 | 耗時: 5.89s | 訓練準確率: 79.93% | 測試準確率: 58.64%

Epoch 31/50 完成 | 耗時: 5.87s | 訓練準確率: 81.29% | 測試準確率: 58.23%

Epoch 32/50 完成 | 耗時: 6.00s | 訓練準確率: 84.04% | 測試準確率: 58.64%

Epoch 33/50 完成 | 耗時: 5.88s | 訓練準確率: 84.55% | 測試準確率: 60.00%

Epoch 34/50 完成 | 耗時: 5.98s | 訓練準確率: 86.36% | 測試準確率: 59.05%

Epoch 35/50 完成 | 耗時: 5.88s | 訓練準確率: 87.41% | 測試準確率: 60.41%

Epoch 36/50 完成 | 耗時: 5.93s | 訓練準確率: 88.53% | 測試準確率: 60.68%

Epoch 37/50 完成 | 耗時: 6.10s | 訓練準確率: 89.42% | 測試準確率: 60.41%

Epoch 38/50 完成 | 耗時: 5.95s | 訓練準確率: 91.22% | 測試準確率: 60.82%

Epoch 39/50 完成 | 耗時: 5.87s | 訓練準確率: 91.05% | 測試準確率: 61.50%

Epoch 40/50 完成 | 耗時: 6.01s | 訓練準確率: 91.94% | 測試準確率: 61.50%

Epoch 41/50 完成 | 耗時: 6.04s | 訓練準確率: 92.82% | 測試準確率: 61.90%

Epoch 42/50 完成 | 耗時: 6.04s | 訓練準確率: 93.60% | 測試準確率: 61.50%

Epoch 43/50 完成 | 耗時: 6.07s | 訓練準確率: 92.72% | 測試準確率: 62.31%

Epoch 44/50 完成 | 耗時: 6.02s | 訓練準確率: 94.93% | 測試準確率: 62.86%

Epoch 45/50 完成 | 耗時: 5.89s | 訓練準確率: 96.19% | 測試準確率: 62.72%

Epoch 46/50 完成 | 耗時: 6.03s | 訓練準確率: 96.09% | 測試準確率: 62.59%

Epoch 47/50 完成 | 耗時: 5.98s | 訓練準確率: 96.33% | 測試準確率: 61.90%

Epoch 48/50 完成 | 耗時: 6.08s | 訓練準確率: 97.52% | 測試準確率: 62.99%

Epoch 49/50 完成 | 耗時: 5.94s | 訓練準確率: 97.11% | 測試準確率: 62.99%

Epoch 50/50 完成 | 耗時: 5.94s | 訓練準確率: 97.38% | 測試準確率: 62.59%訓練完成! 開始繪制結果圖表...

?

?

也不算太高,但至少比之前用自定義CNN網絡訓練來的效果好(突然想到之前效果不好可能也是網絡構造復雜過擬合了,當時沒有可視化細看,遺憾)

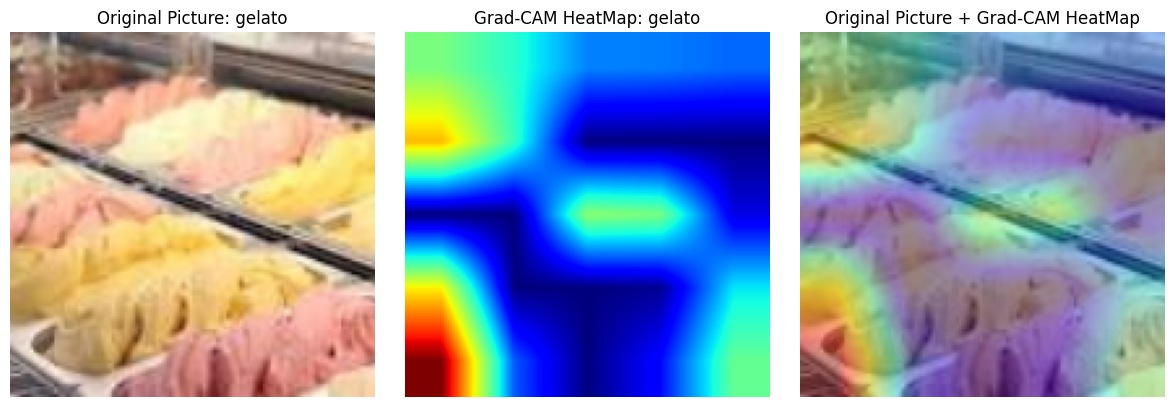

和之前一樣,用Grad-CAM可視化,改了點細節

import torch.nn.functional as F

model.eval()# Grad-CAM實現

class GradCAM:def __init__(self, model, target_layer):self.model = modelself.target_layer = target_layerself.gradients = Noneself.activations = None# 支持直接傳入層對象或層名稱(字符串)if isinstance(target_layer, str):self.target_layer = self._find_layer_by_name(target_layer)else:self.target_layer = target_layer # 假設已經是層對象 # 注冊鉤子,用于獲取目標層的前向傳播輸出和反向傳播梯度self.register_hooks()def _find_layer_by_name(self, layer_name):"""根據名稱查找層對象"""for name, layer in self.model.named_modules():if name == layer_name:return layerraise ValueError(f"Target layer '{layer_name}' not found in model.") def register_hooks(self):# 前向鉤子函數,在目標層前向傳播后被調用,保存目標層的輸出(激活值)def forward_hook(module, input, output):self.activations = output.detach()# 反向鉤子函數,在目標層反向傳播后被調用,保存目標層的梯度def backward_hook(module, grad_input, grad_output):self.gradients = grad_output[0].detach()# 在目標層注冊前向鉤子和反向鉤子self.target_layer.register_forward_hook(forward_hook)self.target_layer.register_backward_hook(backward_hook)def generate_cam(self, input_image, target_class=None):# 前向傳播,得到模型輸出model_output = self.model(input_image)if target_class is None:# 如果未指定目標類別,則取模型預測概率最大的類別作為目標類別target_class = torch.argmax(model_output, dim=1).item()# 清除模型梯度,避免之前的梯度影響self.model.zero_grad()# 反向傳播,構造one-hot向量,使得目標類別對應的梯度為1,其余為0,然后進行反向傳播計算梯度one_hot = torch.zeros_like(model_output)one_hot[0, target_class] = 1model_output.backward(gradient=one_hot)# 獲取之前保存的目標層的梯度和激活值gradients = self.gradientsactivations = self.activations# 對梯度進行全局平均池化,得到每個通道的權重,用于衡量每個通道的重要性weights = torch.mean(gradients, dim=(2, 3), keepdim=True)# 加權激活映射,將權重與激活值相乘并求和,得到類激活映射的初步結果cam = torch.sum(weights * activations, dim=1, keepdim=True)# ReLU激活,只保留對目標類別有正貢獻的區域,去除負貢獻的影響cam = F.relu(cam)# 調整大小并歸一化,將類激活映射調整為與輸入圖像相同的尺寸(140x140),并歸一化到[0, 1]范圍cam = F.interpolate(cam, size=(140, 140), mode='bilinear', align_corners=False)cam = cam - cam.min()cam = cam / cam.max() if cam.max() > 0 else camreturn cam.cpu().squeeze().numpy(), target_class# 選擇一個隨機圖像

idx = np.random.randint(len(test_dataset))

image, label = test_dataset[idx]

classes = sorted(os.listdir('/kaggle/input/popular-street-foods/popular_street_foods/dataset'))

print(f"選擇的圖像類別: {classes[label]}")# 轉換圖像以便可視化

def tensor_to_np(tensor):img = tensor.cpu().numpy().transpose(1, 2, 0)mean = np.array([0.485, 0.456, 0.406])std = np.array([0.229, 0.224, 0.225])img = std * img + meanimg = np.clip(img, 0, 1)return img# 添加批次維度并移動到設備

input_tensor = image.unsqueeze(0).to(device)# 初始化Grad-CAM(選擇最后一個卷積層)

target_layer_name = "cbam_layers.cbam_13"

grad_cam = GradCAM(model, target_layer_name)# 生成熱力圖

heatmap, pred_class = grad_cam.generate_cam(input_tensor)# 可視化

plt.figure(figsize=(12, 4))# 原始圖像

plt.subplot(1, 3, 1)

plt.imshow(tensor_to_np(image))

plt.title(f"Original Picture: {classes[label]}")

plt.axis('off')# 熱力圖

plt.subplot(1, 3, 2)

plt.imshow(heatmap, cmap='jet')

plt.title(f"Grad-CAM HeatMap: {classes[pred_class]}")

plt.axis('off')# 疊加的圖像

plt.subplot(1, 3, 3)

img = tensor_to_np(image)

heatmap_resized = np.uint8(255 * heatmap)

heatmap_colored = plt.cm.jet(heatmap_resized)[:, :, :3]

superimposed_img = heatmap_colored * 0.4 + img * 0.6

plt.imshow(superimposed_img)

plt.title("Original Picture + Grad-CAM HeatMap")

plt.axis('off')plt.tight_layout()

plt.show()

?講實話整個結構改進下來,CBAM和通道數匹配才是最惱火的,最先是直接設定CBAM輸入通道,一直報錯都快放棄了,最后無腦遍歷😈

@浙大疏錦行?

)

)

排序)

)