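Background

1. The software system (the transfer/dump system) needs to be migrated to the production environment: onto a domestic (Chinese-developed) operating system and a domestic resource pool. The HBase storage stays unchanged.

2. The transfer system in the old environment already has performance problems when writing to HBase, and writes from some provinces fail (a 20% failure rate).

3. All 31 provinces nationwide write large volumes of data.

4. The migration to the domestic environment had not succeeded, and the author had never worked on this project before (it is a six-year-old project).

5. Migrating to the new environment involves many tasks, such as opening host ports and network ports.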

Environment

1. Oracle JDK8u202 (the last Oracle JDK 8 release before Oracle's licensing change). Download: Oracle JDK8u202

2. HBase 1.1.2.2.5, from Apache HBase Downloads (the project is many years old, so the version is old; this version has been archived: Index of /dist/hbase)

3. Hadoop 2.7.1.2.5, from Apache Hadoop (this version has been archived: Central Repository: org/apache/hadoop/hadoop-common/2.7.1)

4. Kerberos 1.10.3-10, from MIT Kerberos Consortium (this version has been archived: Historic MIT Kerberos Releases)

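HBase test code

Without further ado, here is the test code the author hand-wrote on the pod. It covers only the login step (once login succeeds, the remaining problems can be solved):

Main class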

// The original project declares "package com.asiainfo.crm.ai;". It is omitted here because
// the commands below compile and run the class from the default package.
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.security.UserProvider;
import org.apache.hadoop.hbase.shaded.com.google.common.base.Preconditions;
import org.apache.hadoop.hbase.util.Strings;
import org.apache.hadoop.net.DNS;

/**
 * export LIB_HOME=/app/tomcat/webapps/ROOT/WEB-INF/lib/
 * javac -d ../classes -cp $LIB_HOME\*:. Transfer2Application.java
 * java -cp $LIB_HOME\*:. Transfer2Application
 */
public class Transfer2Application
{
    public static void main(String[] args)
    {
        try {
            System.out.println("################## hello world! 1 ");
            // Initialize the HBase information
            initHbase();
        } catch (Exception e) {
            e.printStackTrace();
        }
    }

    /**
     * Initializes the HBase connection information at startup, including Kerberos authentication.
     *
     * @throws Exception
     */
    private static void initHbase() throws Exception
    {
        System.out.println("################## hello world! initHbase ");
        System.getProperties().setProperty("hadoop.home.dir", "/app/tomcat/temp/hbase");
        System.setProperty("java.security.krb5.conf", "/app/tomcat/krb5.conf");
        Configuration conf = HBaseConfiguration.create();
        conf.set("hbase.keytab.file", "/app/tomcat/gz**.app.keytab");
        conf.set("hbase.kerberos.principal", "gz***/gz**-***.dcs.com@DCS.COM");
        UserProvider userProvider = UserProvider.instantiate(conf);
        // login the server principal (if using secure Hadoop)
        if (userProvider.isHadoopSecurityEnabled() && userProvider.isHBaseSecurityEnabled())
        {
            String machineName = Strings.domainNamePointerToHostName(
                    DNS.getDefaultHost(conf.get("hbase.rest.dns.interface", "default"),
                            conf.get("hbase.rest.dns.nameserver", "default")));
            String keytabFilename = conf.get("hbase.keytab.file");
            Preconditions.checkArgument(keytabFilename != null && !keytabFilename.isEmpty(),
                    "hbase.keytab.file" + " should be set if security is enabled");
            String principalConfig = conf.get("hbase.kerberos.principal");
            Preconditions.checkArgument(principalConfig != null && !principalConfig.isEmpty(),
                    "hbase.kerberos.principal" + " should be set if security is enabled");
            // UserProvider.login takes the configuration keys, not their values
            userProvider.login("hbase.keytab.file", "hbase.kerberos.principal", machineName);
            System.out.println("########## login success! #######");
        }
        else
        {
            System.out.println("Login preconditions not met!");
            System.out.println("userProvider.isHadoopSecurityEnabled() :" + userProvider.isHadoopSecurityEnabled());
            System.out.println("userProvider.isHBaseSecurityEnabled():" + userProvider.isHBaseSecurityEnabled());
        }
    }
}
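Both Q1 and Q2 in the FAQ further down come from files or settings that the login step cannot find. As a rough sketch (not part of the original project), a small check like the following could be run on the pod before the test class to confirm that the krb5.conf and keytab files are present and readable. The class name `LoginPreflight` and its method are hypothetical, and the paths are passed on the command line because the keytab name is masked in this article.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper for illustration; it uses only the JDK and runs on Java 8. */
final class LoginPreflight {

    /** Returns one message per path that is unset, missing, or unreadable; an empty list means all paths look usable. */
    static List<String> findProblems(String... paths) {
        List<String> problems = new ArrayList<>();
        for (String p : paths) {
            if (p == null || p.trim().isEmpty()) {
                problems.add("path is not set");
                continue;
            }
            Path file = Paths.get(p);
            if (!Files.isRegularFile(file)) {
                problems.add(p + ": not a regular file");
            } else if (!Files.isReadable(file)) {
                problems.add(p + ": not readable by the current user");
            }
        }
        return problems;
    }

    public static void main(String[] args) {
        // Example: java LoginPreflight /app/tomcat/krb5.conf <path-to-keytab>
        List<String> problems = findProblems(args);
        if (problems.isEmpty()) {
            System.out.println("All " + args.length + " file(s) look usable.");
        } else {
            for (String problem : problems) {
                System.out.println("PROBLEM: " + problem);
            }
        }
    }
}
```

This only checks the file system. It does not validate the keytab contents or contact the KDC, so a clean result narrows the search without proving that login will succeed.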

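Key commands for hand-building the code on the pod

# Path of the lib directory on the application pod, used to set the Java classpath

export LIB_HOME=/app/tomcat/webapps/ROOT/WEB-INF/lib/

# Enter the src directory and create the main class source file

cd src

# Compile the main class, adding every jar in the lib directory to the classpath

javac -d ../classes -cp $LIB_HOME\*:. Transfer2Application.java

# Enter the classes directory

cd ../classes

# Run the compiled class

java -cp $LIB_HOME\*:. Transfer2Application

Log of a successful run (shell-based group mapping)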

################## hello world! 1

################## hello world! initHbase

14:23:01.194 [main] DEBUG org.apache.hadoop.util.Shell - setsid exited with exit code 0

14:23:01.207 [main] DEBUG org.apache.hadoop.security.Groups - Creating new Groups object

14:23:01.234 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Trying to load the custom-built native-hadoop library...

14:23:01.235 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: no hadoop in java.library.path

14:23:01.235 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

14:23:01.235 [main] WARN org.apache.hadoop.util.NativeCodeLoader - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

14:23:01.235 [main] DEBUG org.apache.hadoop.util.PerformanceAdvisory - Falling back to shell based

14:23:01.235 [main] DEBUG org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback - Group mapping impl=org.apache.hadoop.security.ShellBasedUnixGroupsMapping

14:23:01.285 [main] DEBUG org.apache.hadoop.security.Groups - Group mapping impl=org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback; cacheTimeout=300000; warningDeltaMs=5000

14:23:01.338 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginSuccess with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of successful kerberos logins and latency (milliseconds)])

14:23:01.345 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginFailure with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of failed kerberos logins and latency (milliseconds)])

14:23:01.345 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.getGroups with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[GetGroups])

14:23:01.346 [main] DEBUG org.apache.hadoop.metrics2.impl.MetricsSystemImpl - UgiMetrics, User and group related metrics

14:23:01.556 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - hadoop login

14:23:01.557 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - hadoop login commit

14:23:01.558 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - using kerberos user:gz***/gz***-ctdfs01.dcs.com@DCS.COM

14:23:01.558 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - Using user: "gz***/gz***-ctdfs01.dcs.com@DCS.COM" with name gz***/gz***-ctdfs01.dcs.com@DCS.COM

14:23:01.558 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - User entry: "gz***/gz***-ctdfs01.dcs.com@DCS.COM"

14:23:01.558 [main] INFO org.apache.hadoop.security.UserGroupInformation - Login successful for user gz***/gz***-ctdfs01.dcs.com@DCS.COM using keytab file /app/tomcat/gz***.app.keytab

########## login success! #######

Log of a successful run (native library loaded)

sh-4.2# java -cp $LIB_HOME\*:. Transfer2Application

################## hello world! 1

################## hello world! initHbase

17:46:05.469 [main] DEBUG org.apache.hadoop.util.Shell - setsid exited with exit code 0

17:46:05.481 [main] DEBUG org.apache.hadoop.security.Groups - Creating new Groups object

17:46:05.504 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Trying to load the custom-built native-hadoop library...

17:46:05.504 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Loaded the native-hadoop library

17:46:05.504 [main] DEBUG org.apache.hadoop.security.JniBasedUnixGroupsMapping - Using JniBasedUnixGroupsMapping for Group resolution

17:46:05.505 [main] DEBUG org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback - Group mapping impl=org.apache.hadoop.security.JniBasedUnixGroupsMapping

17:46:05.553 [main] DEBUG org.apache.hadoop.security.Groups - Group mapping impl=org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback; cacheTimeout=300000; warningDeltaMs=5000

17:46:05.604 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginSuccess with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of successful kerberos logins and latency (milliseconds)])

17:46:05.611 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginFailure with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of failed kerberos logins and latency (milliseconds)])

17:46:05.611 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.getGroups with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[GetGroups])

17:46:05.612 [main] DEBUG org.apache.hadoop.metrics2.impl.MetricsSystemImpl - UgiMetrics, User and group related metrics

17:46:05.818 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - hadoop login

17:46:05.819 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - hadoop login commit

17:46:05.820 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - using kerberos user:gzcrm/gzcrm-ctdfs01.dcs.com@DCS.COM

17:46:05.820 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - Using user: "gzcrm/gzcrm-ctdfs01.dcs.com@DCS.COM" with name gzcrm/gzcrm-ctdfs01.dcs.com@DCS.COM

17:46:05.820 [main] DEBUG org.apache.hadoop.security.UserGroupInformation - User entry: "gzcrm/gzcrm-ctdfs01.dcs.com@DCS.COM"

17:46:05.821 [main] INFO org.apache.hadoop.security.UserGroupInformation - Login successful for user gzcrm/gzcrm-ctdfs01.dcs.com@DCS.COM using keytab file /app/tomcat/gzcrm.app.keytab

########## login success! #######

Note:

Accessing HBase with the native library loaded gives better performance.

Frequently asked questions (FAQ):

Q1: At runtime, the error "Kerberos krb5 configuration not found" is reported

18:39:46.030 [main] DEBUG org.apache.hadoop.util.Shell - setsid exited with exit code 0

18:39:46.041 [main] DEBUG org.apache.hadoop.security.Groups - Creating new Groups object

18:39:46.058 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Trying to load the custom-built native-hadoop library...

18:39:46.059 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: no hadoop in java.library.path

18:39:46.059 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

18:39:46.059 [main] WARN org.apache.hadoop.util.NativeCodeLoader - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

18:39:46.059 [main] DEBUG org.apache.hadoop.util.PerformanceAdvisory - Falling back to shell based

18:39:46.059 [main] DEBUG org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback - Group mapping impl=org.apache.hadoop.security.ShellBasedUnixGroupsMapping

18:39:46.113 [main] DEBUG org.apache.hadoop.security.Groups - Group mapping impl=org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback; cacheTimeout=300000; warningDeltaMs=5000

18:39:46.166 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginSuccess with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of successful kerberos logins and latency (milliseconds)])

18:39:46.172 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginFailure with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of failed kerberos logins and latency (milliseconds)])

18:39:46.172 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.getGroups with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[GetGroups])

18:39:46.173 [main] DEBUG org.apache.hadoop.metrics2.impl.MetricsSystemImpl - UgiMetrics, User and group related metrics

18:39:46.219 [main] DEBUG org.apache.hadoop.security.authentication.util.KerberosName - Kerberos krb5 configuration not found, setting default realm to empty

java.lang.IllegalArgumentException: Can't get Kerberos realm
	at org.apache.hadoop.security.HadoopKerberosName.setConfiguration(HadoopKerberosName.java:65)
	at org.apache.hadoop.security.UserGroupInformation.initialize(UserGroupInformation.java:277)
	at org.apache.hadoop.security.UserGroupInformation.ensureInitialized(UserGroupInformation.java:262)
	at org.apache.hadoop.security.UserGroupInformation.isAuthenticationMethodEnabled(UserGroupInformation.java:339)
	at org.apache.hadoop.security.UserGroupInformation.isSecurityEnabled(UserGroupInformation.java:333)
	at org.apache.hadoop.hbase.security.User$SecureHadoopUser.isSecurityEnabled(User.java:428)
	at org.apache.hadoop.hbase.security.User.isSecurityEnabled(User.java:268)
	at org.apache.hadoop.hbase.security.UserProvider.isHadoopSecurityEnabled(UserProvider.java:159)
	at Transfer2Application.initHbase(Transfer2Application.java:44)
	at Transfer2Application.main(Transfer2Application.java:22)
Caused by: java.lang.reflect.InvocationTargetException
	at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
	at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
	at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
	at java.lang.reflect.Method.invoke(Method.java:498)
	at org.apache.hadoop.security.authentication.util.KerberosUtil.getDefaultRealm(KerberosUtil.java:84)
	at org.apache.hadoop.security.HadoopKerberosName.setConfiguration(HadoopKerberosName.java:63)
	... 9 more
Caused by: KrbException: Cannot locate default realm
	at sun.security.krb5.Config.getDefaultRealm(Config.java:1029)
	... 15 more
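The article gives no answer for Q1. Judging from the innermost exception (KrbException: Cannot locate default realm), a likely cause is that the JVM did not find a krb5.conf, or found one without a default_realm entry. The test class points the JVM at /app/tomcat/krb5.conf through the java.security.krb5.conf system property. The sketch below is an assumption-laden diagnostic, not the author's fix: the class name `Krb5DefaultRealm` is hypothetical, and the parser handles only the simple `key = value` form inside `[libdefaults]`, ignoring include directives and nested braces.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.List;

/** Hypothetical diagnostic for illustration; it uses only the JDK and runs on Java 8. */
final class Krb5DefaultRealm {

    /** Returns the default_realm value found in the [libdefaults] section, or null if there is none. */
    static String find(List<String> lines) {
        boolean inLibdefaults = false;
        for (String raw : lines) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";")) {
                continue; // skip blank lines and comments
            }
            if (line.startsWith("[")) {
                inLibdefaults = line.equalsIgnoreCase("[libdefaults]");
                continue;
            }
            if (inLibdefaults) {
                int eq = line.indexOf('=');
                if (eq > 0 && line.substring(0, eq).trim().equals("default_realm")) {
                    String value = line.substring(eq + 1).trim();
                    return value.isEmpty() ? null : value;
                }
            }
        }
        return null;
    }

    public static void main(String[] args) throws IOException {
        // Use the first argument if given, otherwise the same system property the test class sets.
        String path = args.length > 0
                ? args[0]
                : System.getProperty("java.security.krb5.conf", "/etc/krb5.conf");
        String realm = find(Files.readAllLines(Paths.get(path)));
        System.out.println(path + ": default_realm = " + (realm == null ? "<not set>" : realm));
    }
}
```

Running it as `java Krb5DefaultRealm /app/tomcat/krb5.conf` on the pod shows whether the file exists (an exception is thrown if it does not) and whether it names a realm such as DCS.COM, the realm used by the principal in this article.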

Q2: At runtime, the error "hbase.keytab.file should be set if security is enabled" is reported

18:41:21.911 [main] DEBUG org.apache.hadoop.util.Shell - setsid exited with exit code 0

18:41:21.922 [main] DEBUG org.apache.hadoop.security.Groups - Creating new Groups object

18:41:21.945 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Trying to load the custom-built native-hadoop library...

18:41:21.945 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: no hadoop in java.library.path

18:41:21.945 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

18:41:21.946 [main] WARN org.apache.hadoop.util.NativeCodeLoader - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

18:41:21.946 [main] DEBUG org.apache.hadoop.util.PerformanceAdvisory - Falling back to shell based

18:41:21.946 [main] DEBUG org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback - Group mapping impl=org.apache.hadoop.security.ShellBasedUnixGroupsMapping

18:41:21.995 [main] DEBUG org.apache.hadoop.security.Groups - Group mapping impl=org.apache.hadoop.security.JniBasedUnixGroupsMappingWithFallback; cacheTimeout=300000; warningDeltaMs=5000

18:41:22.046 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginSuccess with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of successful kerberos logins and latency (milliseconds)])

18:41:22.052 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.loginFailure with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[Rate of failed kerberos logins and latency (milliseconds)])

18:41:22.053 [main] DEBUG org.apache.hadoop.metrics2.lib.MutableMetricsFactory - field org.apache.hadoop.metrics2.lib.MutableRate org.apache.hadoop.security.UserGroupInformation$UgiMetrics.getGroups with annotation @org.apache.hadoop.metrics2.annotation.Metric(always=false, about=, sampleName=Ops, type=DEFAULT, valueName=Time, value=[GetGroups])

18:41:22.053 [main] DEBUG org.apache.hadoop.metrics2.impl.MetricsSystemImpl - UgiMetrics, User and group related metrics

java.lang.IllegalArgumentException: hbase.keytab.file should be set if security is enabled
	at org.apache.hadoop.hbase.shaded.com.google.common.base.Preconditions.checkArgument(Preconditions.java:92)
	at Transfer2Application.initHbase(Transfer2Application.java:53)
	at Transfer2Application.main(Transfer2Application.java:22)

A: hbase.keytab.file was not set correctly. Set it explicitly:

conf.set("hbase.keytab.file", "/app/tomcat/gzcrm.app.keytab");

Q3: At runtime, the error "Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: no hadoop in java.library.path" is reported

18:39:46.041 [main] DEBUG org.apache.hadoop.security.Groups - Creating new Groups object

18:39:46.058 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Trying to load the custom-built native-hadoop library...

18:39:46.059 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: no hadoop in java.library.path

18:39:46.059 [main] DEBUG org.apache.hadoop.util.NativeCodeLoader - java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

18:39:46.059 [main] WARN org.apache.hadoop.util.NativeCodeLoader - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

18:39:46.059 [main] DEBUG org.apache.hadoop.util.PerformanceAdvisory - Falling back to shell based

A: The Hadoop native library was not loaded. Download it from the official archive and copy it into a directory on the library path. Official download: https://archive.apache.org/dist/hadoop/common/hadoop-2.7.1/hadoop-2.7.1.tar.gz

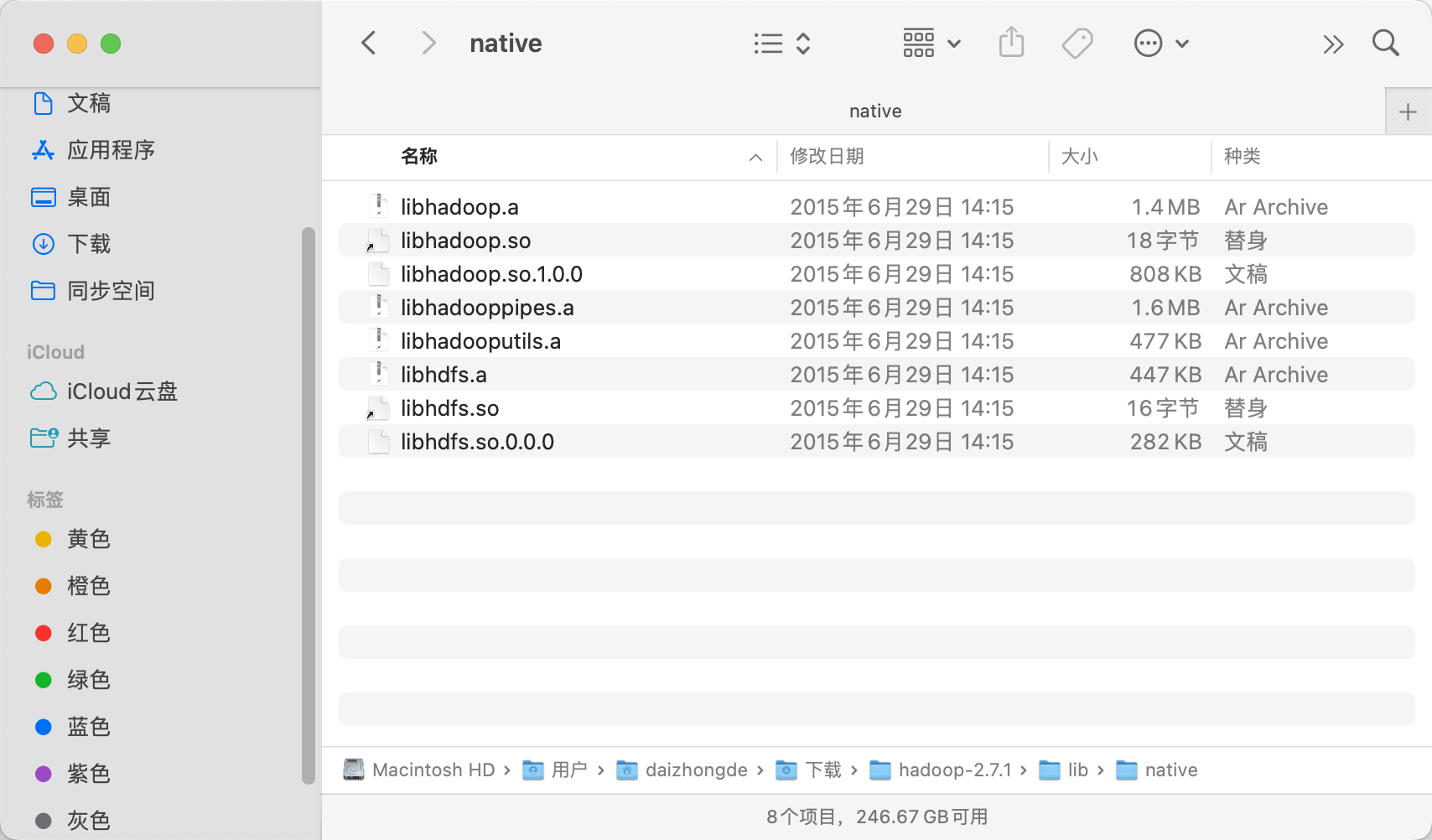

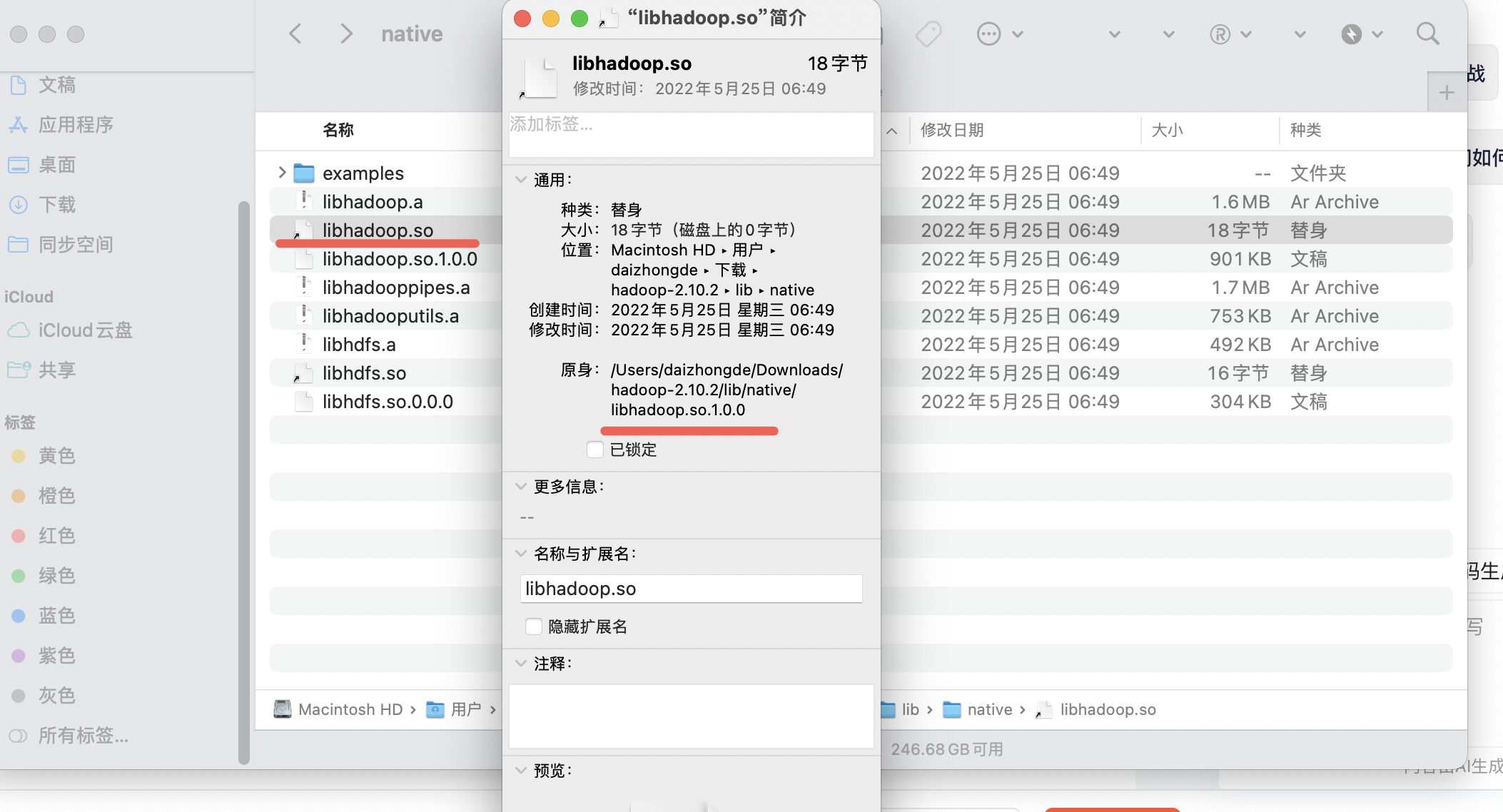

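Native library path inside the archive: hadoop-2.7.1/lib/native

Copy every file in this directory to /usr/lib, which is one of the java.library.path directories listed in the log.

Note:

The files copied into lib do not, by default, include a directly usable shared library, so nothing gets loaded. The reason is that libhadoop.so and libhdfs.so are symbolic links in the archive, and the links are lost during the copy. Recreate the links, make a second copy under the unversioned name, or rename the files: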

sh-4.2# ls -lh /usr/lib|grep hadoop

-rwxrwxrwx 1 root root 1.4M Aug 24 14:22 libhadoop.a

-rwxrwxrwx 1 root root 1.6M Aug 24 14:22 libhadooppipes.a

-rwxrwxrwx 1 root root 790K Aug 24 14:22 libhadoop.so.1.0.0

-rwxrwxrwx 1 root root 466K Aug 24 14:22 libhadooputils.a

sh-4.2#

sh-4.2#

sh-4.2# cd /usr/lib

sh-4.2# cp libhadoop.so.1.0.0 libhadoop.so

sh-4.2# cp libhdfs.so.0.0.0 libhdfs.so

sh-4.2#

sh-4.2# ls -lh /usr/lib|grep hadoop

-rwxrwxrwx 1 root root 1.4M Aug 24 14:22 libhadoop.a

-rwxrwxrwx 1 root root 1.6M Aug 24 14:22 libhadooppipes.a

-rwxr-xr-x 1 root root 790K Aug 24 17:44 libhadoop.so

-rwxrwxrwx 1 root root 790K Aug 24 14:22 libhadoop.so.1.0.0

-rwxrwxrwx 1 root root 466K Aug 24 14:22 libhadooputils.a

sh-4.2#

sh-4.2#

sh-4.2# ls -lh /usr/lib|grep hdfs

-rwxrwxrwx 1 root root 437K Aug 24 14:22 libhdfs.a

-rwxr-xr-x 1 root root 276K Aug 24 17:45 libhdfs.so

-rwxrwxrwx 1 root root 276K Aug 24 14:22 libhdfs.so.0.0.0

Local view:

hadoop-2.7.1

hadoop-2.10.2
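The rename step matters because the JVM resolves a native library by an exact file name: `System.loadLibrary("hadoop")` looks for `libhadoop.so` on Linux in each java.library.path directory, so a file that exists only as `libhadoop.so.1.0.0` is never picked up. A minimal sketch for checking this from the pod follows. The class name `NativeLibLocator` is hypothetical, and the check only looks for files; it does not attempt to load them.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical diagnostic for illustration; it uses only the JDK and runs on Java 8. */
final class NativeLibLocator {

    /** Returns every file under searchPath whose name is exactly the platform's library file name for libName. */
    static List<File> locate(String libName, String searchPath) {
        String fileName = System.mapLibraryName(libName); // "libhadoop.so" on Linux
        List<File> hits = new ArrayList<>();
        for (String dir : searchPath.split(File.pathSeparator)) {
            if (dir.isEmpty()) {
                continue;
            }
            File candidate = new File(dir, fileName);
            if (candidate.isFile()) {
                hits.add(candidate);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        String searchPath = System.getProperty("java.library.path", "");
        System.out.println("java.library.path=" + searchPath);
        for (String lib : new String[] {"hadoop", "hdfs"}) {
            List<File> hits = locate(lib, searchPath);
            System.out.println(System.mapLibraryName(lib) + " -> " + (hits.isEmpty() ? "not found" : hits));
        }
    }
}
```

If both libraries are reported as found, the DEBUG line "Loaded the native-hadoop library" from the successful log should appear on the next run of the test class, provided the library matches the platform architecture.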

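Q4: At runtime, various network connectivity errors are reported, including being unable to reach the Kerberos service

A: Open the network ports and the host ports on the HBase server side. The list is below (your actual port numbers may differ, but each of these services has a port):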

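| Port | Client must be able to reach it | Description |
| --- | --- | --- |
| 8090 | | Resource management web page port |
| 50470 | | Node HTTPS access port |
| 8485 | √ | Shared directory port |
| 8020 | √ | RPC port |
| 50070 | √ | Node HTTP access port (must be opened) |
| 2181 | √ | ZooKeeper service port (must be opened) |
| 8088 | √ | RM (ResourceManager) HTTP port |
| 16000 | √ | Master service port |
| 3888 | √ | ZooKeeper port (leader election) |
| 16010 | √ | Master info port |
| 13562 | √ | MapReduce shuffle service port |
| 2049 | | NFS file system access port |
| 16100 | | Multicast port |
| 8080 | √ | REST service port |
| 16020 | √ | RS (RegionServer) service port |
| 21 | | FTP port |
| 4242 | | NFS mount port |
| 88 | √ | KDC communication port |
| 80 | √ | Kerberos service port |
| 749 | √ | An optional port, mainly used for the Kerberos TCP service |
| 800 | | Used for communication between Kerberos KDCs, especially in environments that span multiple KDCs |
| 801 | | Used for communication between Kerberos KDCs, especially in environments that span multiple KDCs |
| 464 | √ | kpasswd service port, used to change Kerberos user passwords |
| 750 | | Some implementations may use this port for additional kpasswd functions |
| 636 | | If Kerberos is configured to use LDAP over SSL (LDAPS), the LDAPS service usually runs on this port. It is not a native part of Kerberos, but it is often used to manage Kerberos user accounts and policies |
| 9090 | | Thrift interface port, which lets non-Java clients access HBase |
| 60020 | | RegionServer worker node port, which handles client read and write requests |
| 2888 | | Used for communication between machines in the cluster; the Leader listens on this port |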
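To confirm from the pod that the required ports in the table are actually reachable, a TCP connect test is usually enough. The following is a minimal sketch under stated assumptions: the class name `PortCheck` and the 3-second timeout are hypothetical choices, the host and ports are supplied on the command line, and only TCP is tested (Kerberos on port 88 may also use UDP, which this does not cover).

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical connectivity probe for illustration; it uses only the JDK and runs on Java 8. */
final class PortCheck {

    /** Returns true if a TCP connection to host:port is established within timeoutMillis. */
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts, and unresolvable host names.
            return false;
        }
    }

    public static void main(String[] args) {
        if (args.length < 2) {
            System.out.println("usage: java PortCheck <host> <port> [<port> ...]");
            return;
        }
        String host = args[0];
        for (int i = 1; i < args.length; i++) {
            int port = Integer.parseInt(args[i]);
            String state = isReachable(host, port, 3000) ? "open" : "unreachable";
            System.out.println(host + ":" + port + " " + state);
        }
    }
}
```

For example, `java PortCheck <kdc-host> 88 749 464` probes the Kerberos ports from the table, and `java PortCheck <hbase-host> 2181 16000 16020` probes ZooKeeper, the HBase master, and a RegionServer; the host names are placeholders to replace with your own.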

Appendix 1: Script for batch-verifying network port connectivity

Linux shell: batch-verifying host and port connectivity (CSDN blog)
