Standard Patterns for Training and Testing

I. Standard pattern for grayscale images, using the MNIST dataset as an example

import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import datasets, transforms  # used to load the MNIST dataset
from torch.utils.data import DataLoader  # used to create the data loaders
import matplotlib.pyplot as plt
import numpy as np

# Font setup (only needed if plot labels contain Chinese text)
plt.rcParams["font.family"] = ["SimHei"]
plt.rcParams['axes.unicode_minus'] = False  # fix minus-sign rendering

# 1. Data preprocessing
transform = transforms.Compose([
    transforms.ToTensor(),  # convert to a tensor and scale to [0, 1]
    transforms.Normalize((0.1307,), (0.3081,))  # mean and std of the MNIST dataset
])

# 2. Load the MNIST dataset (downloaded automatically if it is missing)

train_dataset = datasets.MNIST(
    root='./data',
    train=True,
    download=True,
    transform=transform
)
test_dataset = datasets.MNIST(
    root='./data',
    train=False,
    transform=transform
)

# 3. Create the data loaders for batched loading
# DataLoader wraps the training and test sets.
# batch_size is the number of samples loaded per step; shuffle controls whether the sample order is randomized.
batch_size = 64  # 64 samples per batch
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)  # shuffle during training
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)  # keep the order during testing

# 4. Define the perceptron model, loss function, and optimizer

class MLP(nn.Module):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()  # flatten each 28x28 image into a 784-dim vector for the linear layers
        self.layer1 = nn.Linear(784, 128)  # first layer: 784 inputs, 128 neurons
        self.relu = nn.ReLU()  # activation function
        self.layer2 = nn.Linear(128, 10)  # second layer: 128 inputs, 10 outputs (one per digit class)

    def forward(self, x):
        x = self.flatten(x)  # flatten the image
        x = self.layer1(x)  # first linear transform
        x = self.relu(x)  # apply ReLU
        x = self.layer2(x)  # second linear transform, outputs logits
        return x

# Check whether a GPU is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Initialize the model
model = MLP()
model = model.to(device)  # move the model to the GPU if available

criterion = nn.CrossEntropyLoss()  # cross-entropy loss, suited to multi-class classification
optimizer = optim.Adam(model.parameters(), lr=0.001)  # Adam optimizer

# 5. Train the model (recording the loss of every iteration)

def train(model, train_loader, test_loader, criterion, optimizer, device, epochs):
    # Record the loss of every iteration
    all_iter_losses = []  # loss of every batch
    iter_indices = []  # iteration index (starting at 1)

    for epoch in range(epochs):
        model.train()  # training mode; set every epoch because test() switches the model to eval mode
        running_loss = 0.0
        correct = 0
        total = 0

        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)  # move to the GPU if available

            optimizer.zero_grad()  # clear the gradients
            output = model(data)  # forward pass
            loss = criterion(output, target)  # compute the loss
            loss.backward()  # backward pass
            optimizer.step()  # update the parameters

            # Record this iteration's loss (the raw single-batch loss, not a running average)
            iter_loss = loss.item()
            all_iter_losses.append(iter_loss)
            iter_indices.append(epoch * len(train_loader) + batch_idx + 1)  # 1-based iteration index

            # Accumulate loss and accuracy for the epoch-level statistics
            running_loss += iter_loss
            _, predicted = output.max(1)
            total += target.size(0)
            correct += predicted.eq(target).sum().item()

            # Print training information every 100 batches
            if (batch_idx + 1) % 100 == 0:
                print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} '
                      f'| Batch loss: {iter_loss:.4f} | Running avg loss: {running_loss/(batch_idx+1):.4f}')

        # Epoch-level statistics: evaluate on the test set and print the results
        epoch_train_loss = running_loss / len(train_loader)
        epoch_train_acc = 100. * correct / total
        epoch_test_loss, epoch_test_acc = test(model, test_loader, criterion, device)

        print(f'Epoch {epoch+1}/{epochs} done | Train acc: {epoch_train_acc:.2f}% | Test acc: {epoch_test_acc:.2f}%')

    # Plot the loss curve over all iterations
    plot_iter_losses(all_iter_losses, iter_indices)
    # Optional epoch-level curves (plot_metrics is not defined in this script):
    # plot_metrics(train_losses, test_losses, train_accuracies, test_accuracies, epochs)

    return epoch_test_acc  # final test accuracy

# 6. Test the model

def test(model, test_loader, criterion, device):
    model.eval()  # evaluation mode
    test_loss = 0
    correct = 0
    total = 0

    with torch.no_grad():  # no gradients needed, saves memory and compute
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += criterion(output, target).item()

            _, predicted = output.max(1)
            total += target.size(0)
            correct += predicted.eq(target).sum().item()

    avg_loss = test_loss / len(test_loader)
    accuracy = 100. * correct / total
    return avg_loss, accuracy  # loss and accuracy

# 7. Plot the per-iteration loss curve

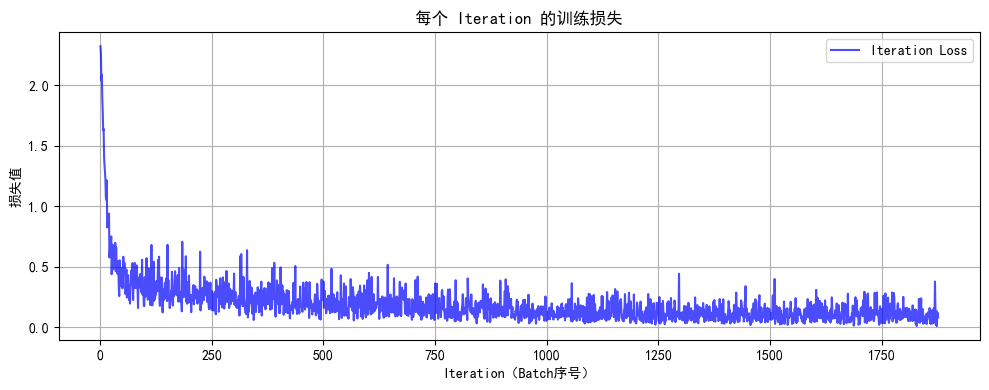

def plot_iter_losses(losses, indices):
    plt.figure(figsize=(10, 4))
    plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')
    plt.xlabel('Iteration (batch index)')
    plt.ylabel('Loss')
    plt.title('Training loss per iteration')
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()

# 8. Run training and testing (epochs=2 to verify that everything works)
epochs = 2
print("Starting training...")
final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, device, epochs)
print(f"Training finished! Final test accuracy: {final_accuracy:.2f}%")

# 9. Show predictions on randomly selected test images

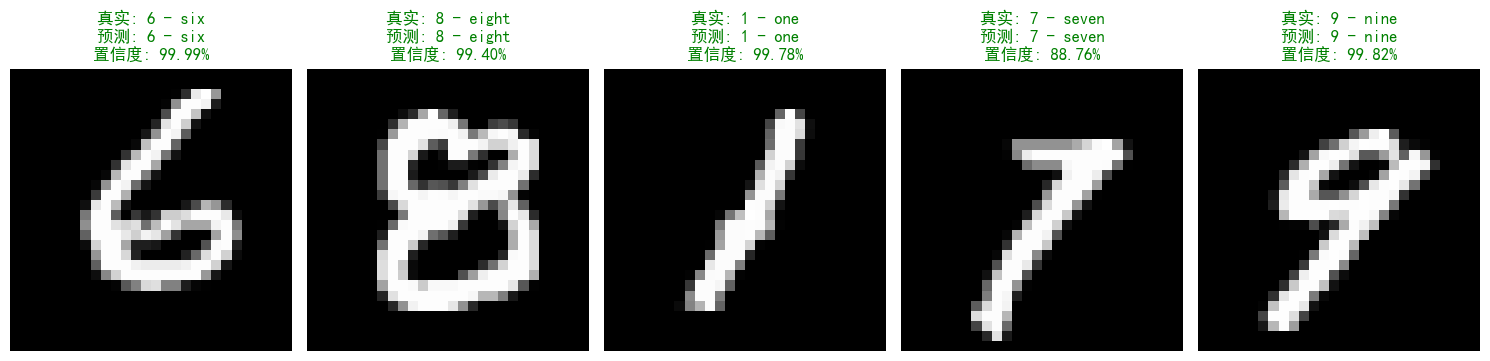

def show_random_predictions(model, test_loader, device, num_images=5):
    model.eval()
    dataset = test_loader.dataset  # use the dataset behind the loader instead of a global variable

    # Pick random indices
    indices = np.random.choice(len(dataset), num_images, replace=False)

    plt.figure(figsize=(3 * num_images, 4))
    for i, idx in enumerate(indices):
        # Get the image and its true label
        image, label = dataset[idx]
        image = image.unsqueeze(0).to(device)

        # Predict and compute the probability
        with torch.no_grad():
            output = model(image)
            probs = torch.nn.functional.softmax(output, dim=1)
            predicted = output.argmax(dim=1).item()
            confidence = probs[0, predicted].item()

        # Convert for display: [1, 1, 28, 28] -> [28, 28] (MNIST is single-channel)
        image = image.squeeze().cpu().numpy()
        image = image * 0.3081 + 0.1307  # undo the normalization: x * std + mean

        # Draw the result
        plt.subplot(1, num_images, i + 1)
        plt.imshow(image, cmap='gray')  # explicitly use a grayscale colormap

        # Title text and color (green if correct, red if wrong)
        title_color = 'green' if predicted == label else 'red'
        title_text = (f'True: {dataset.classes[label]}\n'
                      f'Predicted: {dataset.classes[predicted]}\n'
                      f'Confidence: {confidence:.2%}')
        plt.title(title_text, color=title_color)
        plt.axis('off')

    plt.tight_layout()
    plt.show()

# Call after training has finished
print("\nRandom test image predictions:")
show_random_predictions(model, test_loader, device)

Starting training...

Epoch: 1/2 | Batch: 100/938 | Batch loss: 0.4156 | Running avg loss: 0.6298
Epoch: 1/2 | Batch: 200/938 | Batch loss: 0.2680 | Running avg loss: 0.4774
Epoch: 1/2 | Batch: 300/938 | Batch loss: 0.4445 | Running avg loss: 0.4096
Epoch: 1/2 | Batch: 400/938 | Batch loss: 0.1984 | Running avg loss: 0.3676
Epoch: 1/2 | Batch: 500/938 | Batch loss: 0.3787 | Running avg loss: 0.3352
Epoch: 1/2 | Batch: 600/938 | Batch loss: 0.2049 | Running avg loss: 0.3130
Epoch: 1/2 | Batch: 700/938 | Batch loss: 0.2164 | Running avg loss: 0.2962
Epoch: 1/2 | Batch: 800/938 | Batch loss: 0.1432 | Running avg loss: 0.2797
Epoch: 1/2 | Batch: 900/938 | Batch loss: 0.0901 | Running avg loss: 0.2655
Epoch 1/2 done | Train acc: 92.45% | Test acc: 96.09%
Epoch: 2/2 | Batch: 100/938 | Batch loss: 0.1137 | Running avg loss: 0.1193
Epoch: 2/2 | Batch: 200/938 | Batch loss: 0.2177 | Running avg loss: 0.1250
Epoch: 2/2 | Batch: 300/938 | Batch loss: 0.1939 | Running avg loss: 0.1253
Epoch: 2/2 | Batch: 400/938 | Batch loss: 0.1066 | Running avg loss: 0.1227
Epoch: 2/2 | Batch: 500/938 | Batch loss: 0.0964 | Running avg loss: 0.1199
Epoch: 2/2 | Batch: 600/938 | Batch loss: 0.1582 | Running avg loss: 0.1185
Epoch: 2/2 | Batch: 700/938 | Batch loss: 0.2484 | Running avg loss: 0.1173
Epoch: 2/2 | Batch: 800/938 | Batch loss: 0.1279 | Running avg loss: 0.1166
Epoch: 2/2 | Batch: 900/938 | Batch loss: 0.2397 | Running avg loss: 0.1163
Epoch 2/2 done | Train acc: 96.51% | Test acc: 96.64%
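The `938` in the log lines above is the number of batches per epoch, i.e. `len(train_loader)`. With `drop_last` left at its default of `False`, a `DataLoader` yields ceil(N / batch_size) batches, and the last one may be smaller. The arithmetic can be checked in plain Python; the helper name `batches_per_epoch` is made up for this sketch, and 60,000 is the size of the MNIST training set.

```python
import math


def batches_per_epoch(num_samples: int, batch_size: int, drop_last: bool = False) -> int:
    """Number of batches a DataLoader-style iterator yields in one epoch."""
    if drop_last:
        return num_samples // batch_size  # an incomplete final batch is discarded
    return math.ceil(num_samples / batch_size)  # an incomplete final batch is kept


mnist_train_size = 60_000
batch_size = 64

n_batches = batches_per_epoch(mnist_train_size, batch_size)
last_batch_size = mnist_train_size - (n_batches - 1) * batch_size

print(n_batches)        # 938
print(last_batch_size)  # 32
```

Because the final batch holds only 32 samples, accuracy is accumulated from `target.size(0)` rather than from a fixed `batch_size`.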

Random test image predictions:
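To display a normalized image, the normalization has to be inverted first. `transforms.Normalize(mean, std)` computes `(x - mean) / std`, so the inverse is `x * std + mean`: multiply by the standard deviation, then add the mean. The sketch below checks this on a random tensor standing in for an MNIST image (no dataset download is involved), and also shows that swapping the two constants does not recover the original pixels.

```python
import torch

mean, std = 0.1307, 0.3081  # MNIST statistics used in the transform

# Stand-in for a ToTensor() output: one 1x28x28 image with values in [0, 1]
torch.manual_seed(0)
image = torch.rand(1, 28, 28)

normalized = (image - mean) / std   # what transforms.Normalize computes
restored = normalized * std + mean  # correct inverse

# Swapping mean and std gives a different image
swapped = normalized * mean + std

print(torch.allclose(restored, image, atol=1e-6))  # True
print(torch.allclose(swapped, image, atol=1e-6))   # False
```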

II. Standard pattern for color images, using the CIFAR-10 dataset as an example
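For a sense of scale: the MLP in this section flattens each 3x32x32 image into 3072 values and uses layers 3072 → 512 → 256 → 10, whereas the MNIST MLP uses 784 → 128 → 10. A fully connected layer with `n_in` inputs and `n_out` outputs has `n_in * n_out` weights plus `n_out` biases, so the parameter counts can be worked out by hand. The helper `mlp_params` is a hypothetical name used only for this sketch.

```python
def mlp_params(layer_sizes):
    """Total number of weights and biases in a fully connected network."""
    total = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += n_in * n_out + n_out  # weight matrix + bias vector
    return total


mnist_mlp = mlp_params([28 * 28, 128, 10])
cifar_mlp = mlp_params([3 * 32 * 32, 512, 256, 10])

print(mnist_mlp)  # 101770
print(cifar_mlp)  # 1707274
```

For a model built only from `nn.Linear` layers plus parameter-free modules such as `nn.Flatten`, `nn.ReLU`, and `nn.Dropout`, `sum(p.numel() for p in model.parameters())` should report the same totals.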

import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import datasets, transforms
from torch.utils.data import DataLoader
import matplotlib.pyplot as plt
import numpy as np

# Font setup (only needed if plot labels contain Chinese text)
plt.rcParams["font.family"] = ["SimHei"]
plt.rcParams['axes.unicode_minus'] = False  # fix minus-sign rendering

# 1. Data preprocessing
transform = transforms.Compose([
    transforms.ToTensor(),  # convert to a tensor with values in [0, 1]
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))  # per-channel normalization, maps [0, 1] to [-1, 1]
])

# 2. Load the CIFAR-10 dataset

train_dataset = datasets.CIFAR10(
    root='./data',
    train=True,
    download=True,
    transform=transform
)
test_dataset = datasets.CIFAR10(
    root='./data',
    train=False,
    transform=transform
)

# 3. Create the data loaders
batch_size = 64
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

# 4. Define the MLP model (adapted to the CIFAR-10 input size)

class MLP(nn.Module):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()  # flatten each 3x32x32 image into a 3072-dim vector
        self.layer1 = nn.Linear(3072, 512)  # first layer: 3072 inputs, 512 neurons
        self.relu1 = nn.ReLU()
        self.dropout1 = nn.Dropout(0.2)  # dropout to reduce overfitting
        self.layer2 = nn.Linear(512, 256)  # second layer: 512 inputs, 256 neurons
        self.relu2 = nn.ReLU()
        self.dropout2 = nn.Dropout(0.2)
        self.layer3 = nn.Linear(256, 10)  # output layer: 10 classes

    def forward(self, x):
        # Step 1: flatten the input image into a vector
        x = self.flatten(x)  # [batch_size, 3, 32, 32] -> [batch_size, 3072]

        # First fully connected layer + activation + dropout
        x = self.layer1(x)  # [batch_size, 3072] -> [batch_size, 512]
        x = self.relu1(x)  # apply ReLU
        x = self.dropout1(x)  # randomly drops part of the activations during training

        # Second fully connected layer + activation + dropout
        x = self.layer2(x)  # [batch_size, 512] -> [batch_size, 256]
        x = self.relu2(x)  # apply ReLU
        x = self.dropout2(x)  # randomly drops part of the activations during training

        # Third (output) fully connected layer
        x = self.layer3(x)  # [batch_size, 256] -> [batch_size, 10]

        return x  # logits, no softmax applied

# Check whether a GPU is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Initialize the model
model = MLP()
model = model.to(device)  # move the model to the GPU if available

criterion = nn.CrossEntropyLoss()  # cross-entropy loss
optimizer = optim.Adam(model.parameters(), lr=0.001)  # Adam optimizer

# 5. Train the model (recording the loss of every iteration)

def train(model, train_loader, test_loader, criterion, optimizer, device, epochs):
    # Record the loss of every iteration
    all_iter_losses = []  # loss of every batch
    iter_indices = []  # iteration index

    for epoch in range(epochs):
        # Training mode must be set every epoch: the evaluation phase below calls
        # model.eval(), which would otherwise leave dropout disabled from epoch 2 on.
        model.train()
        running_loss = 0.0
        correct = 0
        total = 0

        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)  # move to the GPU if available

            optimizer.zero_grad()  # clear the gradients
            output = model(data)  # forward pass
            loss = criterion(output, target)  # compute the loss
            loss.backward()  # backward pass
            optimizer.step()  # update the parameters

            # Record this iteration's loss
            iter_loss = loss.item()
            all_iter_losses.append(iter_loss)
            iter_indices.append(epoch * len(train_loader) + batch_idx + 1)

            # Accumulate loss and accuracy
            running_loss += iter_loss
            _, predicted = output.max(1)
            total += target.size(0)
            correct += predicted.eq(target).sum().item()

            # Print training information every 100 batches
            if (batch_idx + 1) % 100 == 0:
                print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} '
                      f'| Batch loss: {iter_loss:.4f} | Running avg loss: {running_loss/(batch_idx+1):.4f}')

        # Average training loss and accuracy for this epoch
        epoch_train_loss = running_loss / len(train_loader)
        epoch_train_acc = 100. * correct / total

        # Evaluation phase
        model.eval()  # evaluation mode
        test_loss = 0
        correct_test = 0
        total_test = 0

        with torch.no_grad():
            for data, target in test_loader:
                data, target = data.to(device), target.to(device)
                output = model(data)
                test_loss += criterion(output, target).item()
                _, predicted = output.max(1)
                total_test += target.size(0)
                correct_test += predicted.eq(target).sum().item()

        epoch_test_loss = test_loss / len(test_loader)
        epoch_test_acc = 100. * correct_test / total_test

        print(f'Epoch {epoch+1}/{epochs} done | Train acc: {epoch_train_acc:.2f}% | Test acc: {epoch_test_acc:.2f}%')

    # Plot the loss curve over all iterations
    plot_iter_losses(all_iter_losses, iter_indices)

    return epoch_test_acc  # final test accuracy

# 6. Plot the per-iteration loss curve

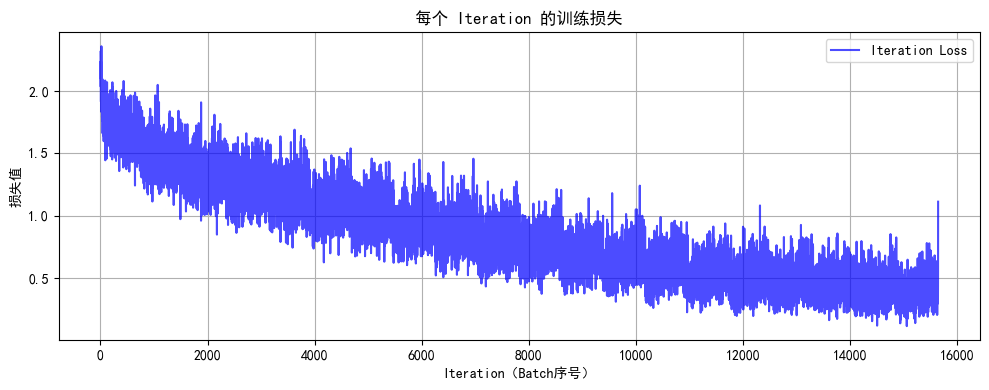

def plot_iter_losses(losses, indices):
    plt.figure(figsize=(10, 4))
    plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')
    plt.xlabel('Iteration (batch index)')
    plt.ylabel('Loss')
    plt.title('Training loss per iteration')
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()

# 7. Run training and testing
epochs = 20  # more epochs for better results
print("Starting training...")
final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, device, epochs)
print(f"Training finished! Final test accuracy: {final_accuracy:.2f}%")

# 8. Show predictions on randomly selected test images

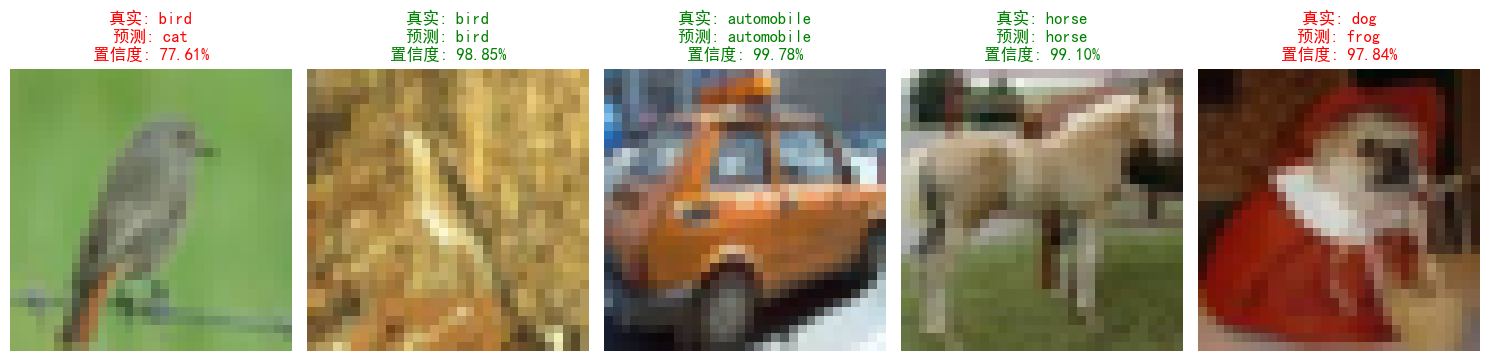

def show_random_predictions(model, test_loader, device, num_images=5):
    model.eval()
    dataset = test_loader.dataset  # use the dataset behind the loader instead of a global variable

    # Pick random indices
    indices = np.random.choice(len(dataset), num_images, replace=False)

    plt.figure(figsize=(3 * num_images, 4))
    for i, idx in enumerate(indices):
        # Get the image and its true label
        image, label = dataset[idx]
        image = image.unsqueeze(0).to(device)

        # Predict and compute the probability
        with torch.no_grad():
            output = model(image)
            probs = torch.nn.functional.softmax(output, dim=1)
            predicted = output.argmax(dim=1).item()
            confidence = probs[0, predicted].item()

        # Convert for display (CIFAR-10 specific)
        image = image.squeeze(0).cpu().numpy()
        image = np.transpose(image, (1, 2, 0))  # (C, H, W) -> (H, W, C)
        image = image * 0.5 + 0.5  # undo the normalization: x * std + mean

        # Draw the result (no cmap='gray' needed for color images)
        plt.subplot(1, num_images, i + 1)
        plt.imshow(image)

        # Title text and color (green if correct, red if wrong)
        title_color = 'green' if predicted == label else 'red'
        title_text = (f'True: {dataset.classes[label]}\n'
                      f'Predicted: {dataset.classes[predicted]}\n'
                      f'Confidence: {confidence:.2%}')
        plt.title(title_text, color=title_color)
        plt.axis('off')

    plt.tight_layout()
    plt.show()

# Call after training has finished
print("\nRandom test image predictions:")
show_random_predictions(model, test_loader, device)

Starting training...

Epoch: 1/20 | Batch: 100/782 | Batch loss: 1.8490 | Running avg loss: 1.9064
Epoch: 1/20 | Batch: 200/782 | Batch loss: 1.9917 | Running avg loss: 1.8319
Epoch: 1/20 | Batch: 300/782 | Batch loss: 1.6255 | Running avg loss: 1.7918
Epoch: 1/20 | Batch: 400/782 | Batch loss: 1.4693 | Running avg loss: 1.7604
Epoch: 1/20 | Batch: 500/782 | Batch loss: 1.8785 | Running avg loss: 1.7441
Epoch: 1/20 | Batch: 600/782 | Batch loss: 1.5239 | Running avg loss: 1.7261
Epoch: 1/20 | Batch: 700/782 | Batch loss: 1.5979 | Running avg loss: 1.7132
Epoch 1/20 done | Train acc: 39.59% | Test acc: 45.31%
Epoch: 2/20 | Batch: 100/782 | Batch loss: 1.3397 | Running avg loss: 1.4856
Epoch: 2/20 | Batch: 200/782 | Batch loss: 1.4475 | Running avg loss: 1.4658
Epoch: 2/20 | Batch: 300/782 | Batch loss: 1.3311 | Running avg loss: 1.4698
Epoch: 2/20 | Batch: 400/782 | Batch loss: 1.5560 | Running avg loss: 1.4726
Epoch: 2/20 | Batch: 500/782 | Batch loss: 1.3987 | Running avg loss: 1.4686
Epoch: 2/20 | Batch: 600/782 | Batch loss: 1.4540 | Running avg loss: 1.4664
Epoch: 2/20 | Batch: 700/782 | Batch loss: 1.3138 | Running avg loss: 1.4620
Epoch 2/20 done | Train acc: 48.36% | Test acc: 50.02%
Epoch: 3/20 | Batch: 100/782 | Batch loss: 1.3247 | Running avg loss: 1.3399
Epoch: 3/20 | Batch: 200/782 | Batch loss: 1.5797 | Running avg loss: 1.3359
Epoch: 3/20 | Batch: 300/782 | Batch loss: 1.7371 | Running avg loss: 1.3417
Epoch: 3/20 | Batch: 400/782 | Batch loss: 1.2458 | Running avg loss: 1.3385
Epoch: 3/20 | Batch: 500/782 | Batch loss: 1.3252 | Running avg loss: 1.3355
Epoch: 3/20 | Batch: 600/782 | Batch loss: 1.2957 | Running avg loss: 1.3373
Epoch: 3/20 | Batch: 700/782 | Batch loss: 1.3832 | Running avg loss: 1.3398
...
Epoch: 20/20 | Batch: 500/782 | Batch loss: 0.6388 | Running avg loss: 0.3730
Epoch: 20/20 | Batch: 600/782 | Batch loss: 0.3712 | Running avg loss: 0.3795
Epoch: 20/20 | Batch: 700/782 | Batch loss: 0.3770 | Running avg loss: 0.3884
Epoch 20/20 done | Train acc: 86.10% | Test acc: 53.29%
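The final log line shows a large gap between training accuracy (86.10%) and test accuracy (53.29%), which points to overfitting. Because this MLP contains `nn.Dropout`, the mode the model is in also matters: dropout is active only after `model.train()` and becomes a no-op after `model.eval()`. Since evaluation switches the model to eval mode, `model.train()` needs to be called again at the start of every epoch. A small sketch on a toy tensor (not the CIFAR-10 model) shows the difference between the two modes:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
dropout = nn.Dropout(p=0.2)
x = torch.ones(1000)

dropout.train()       # training mode: each element is zeroed with probability p
y_train = dropout(x)  # surviving elements are scaled by 1 / (1 - p)

dropout.eval()        # evaluation mode: dropout passes the input through unchanged
y_eval = dropout(x)

num_zeroed = int((y_train == 0).sum())
print(num_zeroed)                # about 200 of 1000, depends on the seed
print(y_train.max().item())      # approximately 1.25
print(torch.equal(y_eval, x))    # True
```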

Random test image predictions:
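The model returns raw logits. `nn.CrossEntropyLoss` applies log-softmax internally, so softmax is only needed when a probability is wanted for display, such as the confidence shown in the image titles. A brief sketch with made-up logits for one image and 10 classes:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hypothetical logits for a single image over 10 classes
logits = torch.tensor([[2.0, 0.5, -1.0, 0.0, 3.0, 0.1, -0.5, 1.0, 0.2, -2.0]])
target = torch.tensor([4])

# CrossEntropyLoss on logits equals NLL loss on the log-softmax of the logits
ce = nn.CrossEntropyLoss()(logits, target)
nll = F.nll_loss(F.log_softmax(logits, dim=1), target)

# Softmax turns logits into probabilities; the argmax is the same either way
probs = F.softmax(logits, dim=1)
predicted = logits.argmax(dim=1).item()
confidence = probs[0, predicted].item()

print(torch.allclose(ce, nll))                   # True
print(predicted)                                 # 4
print(probs.argmax(dim=1).item() == predicted)   # True
```

Applying softmax inside `forward` and then passing the result to `nn.CrossEntropyLoss` would apply softmax twice, which is why the models here return logits.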
