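Table of Contents

- Preface

- 1. Data Preparation

- 1.1 Dataset Overview

- 1.2 Dataset File Structure

- 2. Project Walkthrough

- 2.1 Splitting Data and Labels

- 2.2 Data Preprocessing

- 2.3 Building the Model

- 2.4 Training

- 2.5 Visualizing the Results

- 3. Predicting a Single Image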

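Preface

SqueezeNet is a lightweight, efficient CNN: it has about 50 times fewer parameters than AlexNet while reaching comparable accuracy. As the name "squeeze" suggests, it reduces the parameter count by compressing the network. Improvements to an architecture usually aim at either higher accuracy or fewer parameters; SqueezeNet targets the second goal, cutting the number of parameters while keeping accuracy.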
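To make the parameter saving concrete, here is a rough count for one Fire module with the same sizes as `Fire(128, 16, 64, 64)` from the model code in section 2.3, compared with a plain 3x3 convolution over the same 128 input and output channels. The helper names `conv_params` and `fire_params` are made up for this sketch, and the plain convolution is only a reference point, not something taken from AlexNet.

```python
def conv_params(in_ch, out_ch, kernel):
    """Weights plus biases of a square 2-D convolution."""
    return in_ch * out_ch * kernel * kernel + out_ch


def fire_params(in_ch, squeeze, expand1x1, expand3x3):
    """Parameters of a Fire module: 1x1 squeeze, then parallel 1x1 and 3x3 expands."""
    return (conv_params(in_ch, squeeze, 1)
            + conv_params(squeeze, expand1x1, 1)
            + conv_params(squeeze, expand3x3, 3))


fire = fire_params(128, 16, 64, 64)   # same sizes as Fire(128, 16, 64, 64)
plain = conv_params(128, 128, 3)      # plain 3x3 conv, 128 -> 128 channels
print(fire, plain, round(plain / fire, 1))  # 12432 147584 11.9
```

The saving comes from the squeeze layer: the expensive 3x3 filters only see 16 channels instead of 128.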

My environment:

- Base environment: Python 3.7

- IDE: PyCharm

- Deep learning framework: PyTorch

- Dataset and code: link (extraction code: 2357)

1. Data Preparation

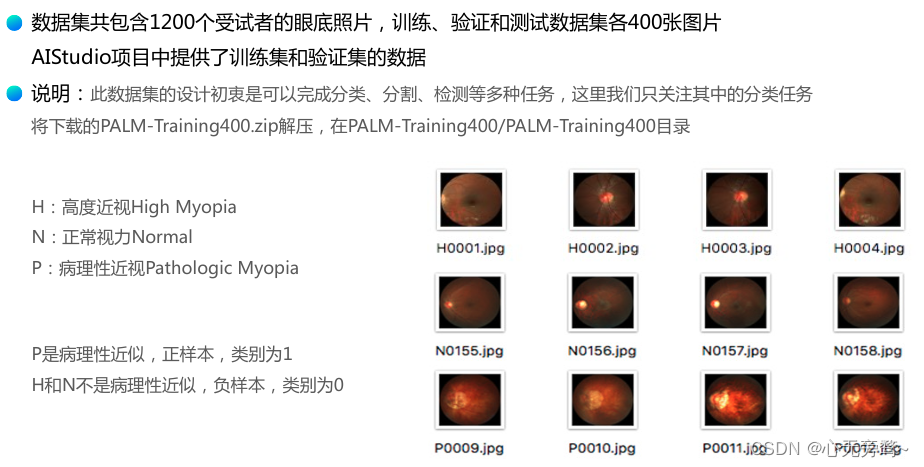

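This example uses iChallenge-PM, an eye disease recognition dataset.

1.1 Dataset Overview

iChallenge-PM is a medical dataset on pathologic myopia (PM), provided for the iChallenge competition jointly organized by Baidu Brain and the Zhongshan Ophthalmic Center of Sun Yat-sen University. It contains fundus (retinal) images from 1,200 subjects, with 400 images each in the training, validation, and test sets.

- training.zip: the training images and labels

- validation.zip: the validation images

- valid_gt.zip: the validation labels

The dataset was downloaded from the AI Studio platform; the details are as follows:

1.2 Dataset File Structure

The dataset consists of three archives: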

- training.zip

├── PALM-Training400
│ ├── PALM-Training400.zip
│ │ ├── H0002.jpg
│ │ └── ...
│ ├── PALM-Training400-Annotation-D&F.zip
│ │ └── ...
│ └── PALM-Training400-Annotation-Lession.zip
└── ...

- valid_gt.zip: the ground-truth annotations; PM_Label_and_Fovea_Location.xlsx inside it is the label file

├── PALM-Validation-GT
│ ├── Lession_Masks
│ │ └── ...
│ ├── Disc_Masks
│ │ └── ...
│ └── PM_Label_and_Fovea_Location.xlsx

- validation.zip: the images used for testing

├── PALM-Validation
│ ├── V0001.jpg
│ ├── V0002.jpg
│ └── ...

2. Project Walkthrough

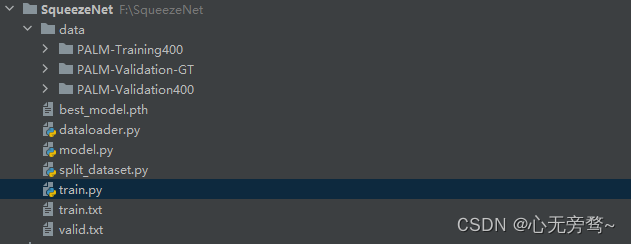

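The project structure is as follows:

2.1 Splitting Data and Labels

The dataset's layout is somewhat awkward, so I preprocess it myself: the training and validation sets are each written to a txt file in which every line holds an image path and its label.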

import os

import pandas as pd

# Label the training set from the first letter of each file name:
# N (normal) and H (high myopia) -> 0, P (pathologic myopia) -> 1
train_dataset = r"F:\SqueezeNet\data\PALM-Training400\PALM-Training400"
train_list = []
label_list = []

train_filenames = os.listdir(train_dataset)
for name in train_filenames:
    filepath = os.path.join(train_dataset, name)
    train_list.append(filepath)
    if name[0] == 'N' or name[0] == 'H':
        label = 0
        label_list.append(label)
    elif name[0] == 'P':
        label = 1
        label_list.append(label)
    else:
        # raise needs an exception instance, not a plain string
        raise ValueError('Error dataset!')

with open('F:/SqueezeNet/train.txt', 'w', encoding='UTF-8') as f:
    for train_img, label in zip(train_list, label_list):
        f.write(str(train_img) + ' ' + str(label))
        f.write('\n')

# Label the validation set from the annotation spreadsheet
valid_dataset = r"F:\SqueezeNet\data\PALM-Validation400"
valid_label = r"F:\SqueezeNet\data\PALM-Validation-GT\PM_Label_and_Fovea_Location.xlsx"
data = pd.read_excel(valid_label)
valid_data = data[['imgName', 'Label']].values.tolist()

with open('F:/SqueezeNet/valid.txt', 'w', encoding='UTF-8') as f:
    for valid_img in valid_data:
        f.write(str(valid_dataset) + '/' + valid_img[0] + ' ' + str(valid_img[1]))
        f.write('\n')
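The naming rule and the line format used above can be isolated into two small helpers, which makes them easy to check without touching the dataset. This is only a sketch: `label_from_filename` and `parse_label_line` are hypothetical names, and `data/P0001.jpg` is a made-up path used for illustration.

```python
def label_from_filename(name):
    """Map a PALM training file name to a binary label (0 or 1)."""
    prefix = name[0]
    if prefix in ('N', 'H'):      # normal or high myopia: not pathologic
        return 0
    if prefix == 'P':             # pathologic myopia
        return 1
    raise ValueError(f"Unexpected file name: {name!r}")


def parse_label_line(line):
    """Split one 'path label' line as written to train.txt or valid.txt."""
    # Split on the last space only, so a path containing spaces stays intact
    path, label = line.strip().rsplit(' ', 1)
    return path, int(label)


print(label_from_filename("H0002.jpg"))          # 0
print(parse_label_line("data/P0001.jpg 1\n"))    # ('data/P0001.jpg', 1)
```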

2.2 Data Preprocessing

The preprocessing used here mainly consists of resizing, random flips, and normalization.

import os.path

from PIL import Image
from torch.utils.data import DataLoader, Dataset
from torchvision.transforms import transforms

# Normalize each channel from [0, 1] to [-1, 1]
transform_BZ = transforms.Normalize(
    mean=[0.5, 0.5, 0.5],
    std=[0.5, 0.5, 0.5]
)


class LoadData(Dataset):
    def __init__(self, txt_path, train_flag=True):
        self.imgs_info = self.get_images(txt_path)
        self.train_flag = train_flag

        self.train_tf = transforms.Compose([
            transforms.Resize(224),             # shorter side -> 224 (input is already padded to 224x224)
            transforms.RandomHorizontalFlip(),  # random left-right flip
            transforms.RandomVerticalFlip(),    # random up-down flip
            transforms.ToTensor(),              # PIL Image or numpy.ndarray -> tensor scaled to [0, 1]
            transform_BZ                        # normalize to [-1, 1]
        ])
        self.val_tf = transforms.Compose([
            transforms.Resize(224),             # shorter side -> 224 (input is already padded to 224x224)
            transforms.ToTensor(),              # PIL Image or numpy.ndarray -> tensor scaled to [0, 1]
            transform_BZ                        # normalize to [-1, 1]
        ])

    def get_images(self, txt_path):
        with open(txt_path, 'r', encoding='utf-8') as f:
            imgs_info = f.readlines()
            # Split on the last space so paths containing spaces still parse
            imgs_info = list(map(lambda x: x.strip().rsplit(' ', 1), imgs_info))
        return imgs_info

    def padding_black(self, img):
        # Scale the longer side to 224 and centre the image on a black square
        w, h = img.size
        scale = 224. / max(w, h)
        img_fg = img.resize([int(x) for x in [w * scale, h * scale]])
        size_fg = img_fg.size
        size_bg = 224
        img_bg = Image.new("RGB", (size_bg, size_bg))
        img_bg.paste(img_fg, ((size_bg - size_fg[0]) // 2,
                              (size_bg - size_fg[1]) // 2))
        img = img_bg
        return img

    def __getitem__(self, index):
        img_path, label = self.imgs_info[index]
        img_path = os.path.join('', img_path)
        img = Image.open(img_path)
        img = img.convert("RGB")
        img = self.padding_black(img)
        if self.train_flag:
            img = self.train_tf(img)
        else:
            img = self.val_tf(img)
        label = int(label)
        return img, label

    def __len__(self):
        return len(self.imgs_info)
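Two details of the class above can be checked by hand: where `padding_black` places the resized image on the 224x224 canvas, and what range the pixels end up in after normalization. The sketch below reproduces both with plain arithmetic. The function names are hypothetical, and the geometry uses integer division, which is an assumption of this sketch: the class above uses a float scale with `int()`, so its result may differ by a pixel in some cases.

```python
def letterbox_geometry(width, height, target=224):
    """Return (new_size, paste_offset) for centring an image on a square canvas."""
    longest = max(width, height)
    # Integer arithmetic: the longer side becomes exactly `target`
    new_w = width * target // longest
    new_h = height * target // longest
    offset = ((target - new_w) // 2, (target - new_h) // 2)
    return (new_w, new_h), offset


def normalize(value, mean=0.5, std=0.5):
    """Per-channel normalization applied after pixels are scaled to [0, 1]."""
    return (value - mean) / std


print(letterbox_geometry(400, 200))   # ((224, 112), (0, 56))
print(letterbox_geometry(100, 200))   # ((112, 224), (56, 0))
print([normalize(v) for v in (0.0, 0.5, 1.0)])  # [-1.0, 0.0, 1.0]
```

A wide image is padded above and below, a tall one on the left and right, so the aspect ratio of the fundus photo is preserved.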

2.3 Building the Model
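Before reading the model code, it may help to trace how the spatial size shrinks in the `1_0` variant for a 224x224 input. The sketch below applies the usual output-size formulas to the first 7x7, stride-2 convolution and the three 3x3, stride-2 max pools with `ceil_mode=True`; the Fire modules are left out because their 1x1 and padded 3x3 convolutions keep the size unchanged. The helper names are made up for this illustration.

```python
import math


def conv_out(size, kernel, stride=1, padding=0):
    """Output width of a convolution (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out_ceil(size, kernel, stride):
    """Output width of an unpadded max pool with ceil_mode=True."""
    out = math.ceil((size - kernel) / stride) + 1
    # The last pooling window must still start inside the input
    if (out - 1) * stride >= size:
        out -= 1
    return out


size = conv_out(224, kernel=7, stride=2)   # first 7x7 convolution
trace = [size]
for _ in range(3):                         # the three 3x3, stride-2 max pools
    size = pool_out_ceil(size, kernel=3, stride=2)
    trace.append(size)
print(trace)  # [109, 54, 27, 13]
```

The final 1x1 convolution therefore sees a 13x13 map with 512 channels, which the adaptive average pool reduces to one score per class.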

import torch
import torch.nn as nn
import torch.nn.init as init


class Fire(nn.Module):
    def __init__(self, inplanes, squeeze_planes,
                 expand1x1_planes, expand3x3_planes):
        super(Fire, self).__init__()
        self.inplanes = inplanes
        self.squeeze = nn.Conv2d(inplanes, squeeze_planes, kernel_size=1)
        self.squeeze_activation = nn.ReLU(inplace=True)
        self.expand1x1 = nn.Conv2d(squeeze_planes, expand1x1_planes,
                                   kernel_size=1)
        self.expand1x1_activation = nn.ReLU(inplace=True)
        self.expand3x3 = nn.Conv2d(squeeze_planes, expand3x3_planes,
                                   kernel_size=3, padding=1)
        self.expand3x3_activation = nn.ReLU(inplace=True)

    def forward(self, x):
        x = self.squeeze_activation(self.squeeze(x))
        return torch.cat([
            self.expand1x1_activation(self.expand1x1(x)),
            self.expand3x3_activation(self.expand3x3(x))
        ], 1)


class SqueezeNet(nn.Module):
    def __init__(self, version='1_0', num_classes=1000):
        super(SqueezeNet, self).__init__()
        self.num_classes = num_classes
        if version == '1_0':
            self.features = nn.Sequential(
                nn.Conv2d(3, 96, kernel_size=7, stride=2),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(96, 16, 64, 64),
                Fire(128, 16, 64, 64),
                Fire(128, 32, 128, 128),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(256, 32, 128, 128),
                Fire(256, 48, 192, 192),
                Fire(384, 48, 192, 192),
                Fire(384, 64, 256, 256),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(512, 64, 256, 256),
            )
        elif version == '1_1':
            self.features = nn.Sequential(
                nn.Conv2d(3, 64, kernel_size=3, stride=2),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(64, 16, 64, 64),
                Fire(128, 16, 64, 64),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(128, 32, 128, 128),
                Fire(256, 32, 128, 128),
                nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True),
                Fire(256, 48, 192, 192),
                Fire(384, 48, 192, 192),
                Fire(384, 64, 256, 256),
                Fire(512, 64, 256, 256),
            )
        else:
            # FIXME: Is this needed? SqueezeNet should only be called from the
            # FIXME: squeezenet1_x() functions
            # FIXME: This checking is not done for the other models
            raise ValueError("Unsupported SqueezeNet version {version}:"
                             "1_0 or 1_1 expected".format(version=version))

        # Final convolution is initialized differently from the rest
        final_conv = nn.Conv2d(512, self.num_classes, kernel_size=1)
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            final_conv,
            nn.ReLU(inplace=True),
            nn.AdaptiveAvgPool2d((1, 1))
        )

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                if m is final_conv:
                    init.normal_(m.weight, mean=0.0, std=0.01)
                else:
                    init.kaiming_uniform_(m.weight)
                if m.bias is not None:
                    init.constant_(m.bias, 0)

    def forward(self, x):
        x = self.features(x)
        x = self.classifier(x)
        return torch.flatten(x, 1)

2.4 Training

import copy

import torch
import torch.nn as nn
import torchsummary
from torch.utils.data import DataLoader, Dataset

from dataloader import LoadData
from model import SqueezeNet

device = "cuda:0" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = SqueezeNet(num_classes=2).to(device)
# print(model)
# print(torchsummary.summary(model, (3, 224, 224), 1))

# Load the training and validation sets
train_data = LoadData(r"F:\SqueezeNet\train.txt", True)
train_dl = torch.utils.data.DataLoader(train_data, batch_size=16, pin_memory=True,
                                       shuffle=True, num_workers=0)
# train_flag=False so that no random flips are applied during evaluation
test_data = LoadData(r"F:\SqueezeNet\valid.txt", False)
test_dl = torch.utils.data.DataLoader(test_data, batch_size=16, pin_memory=True,
                                      shuffle=False, num_workers=0)


# Training function
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches (size / batch_size, rounded up)
    print('num_batches:', num_batches)
    train_loss, train_acc = 0, 0    # running loss and number of correct predictions

    for X, y in dataloader:  # images and their labels
        X, y = X.to(device), y.to(device)

        # Forward pass
        pred = model(X)          # network output
        loss = loss_fn(pred, y)  # loss between the output and the ground-truth labels

        # Backward pass
        optimizer.zero_grad()  # reset gradients
        loss.backward()        # backpropagate
        optimizer.step()       # update the weights

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

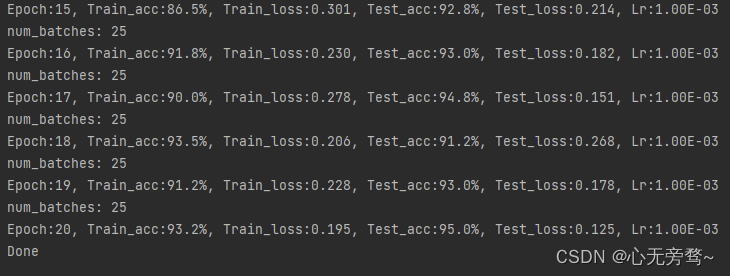

# Validation function
def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches (size / batch_size, rounded up)
    test_loss, test_acc = 0, 0

    # No gradients are needed outside training, which saves memory and compute
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss


# Start training
epochs = 20
train_loss = []
train_acc = []
test_loss = []
test_acc = []
best_acc = 0  # best accuracy so far, used to select the best model
best_model = copy.deepcopy(model)  # defined up front so saving never fails

loss_function = nn.CrossEntropyLoss()  # loss function
optimizer = torch.optim.Adam(model.parameters(), lr=0.001)  # Adam optimizer

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_function, optimizer)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_function)

    # Keep a copy of the best model in best_model
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, '
                'Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss,
                          epoch_test_acc * 100, epoch_test_loss, lr))

# Save the best model's weights to a file
PATH = './best_model.pth'  # file name for the saved parameters
torch.save(best_model.state_dict(), PATH)
print('Done')
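Note how the two metrics are aggregated in `train` and `test`: accuracy is the number of correct predictions divided by the dataset size, while loss is the sum of per-batch losses divided by the number of batches. A framework-free sketch of that bookkeeping, with made-up scores and a hypothetical helper name, might look like this:

```python
def epoch_metrics(batches):
    """Aggregate per-batch results into epoch accuracy and mean batch loss.

    Each batch is (logits, labels, loss): per-sample score lists,
    integer labels, and that batch's mean loss.
    """
    correct, total, loss_sum = 0, 0, 0.0
    for logits, labels, loss in batches:
        preds = [row.index(max(row)) for row in logits]   # argmax over classes
        correct += sum(int(p == y) for p, y in zip(preds, labels))
        total += len(labels)
        loss_sum += loss
    return correct / total, loss_sum / len(batches)


batches = [
    ([[2.0, 0.5], [0.1, 1.2]], [0, 1], 0.30),   # two samples, both correct
    ([[0.3, 0.9]], [0], 0.90),                  # smaller last batch, one mistake
]
acc, mean_loss = epoch_metrics(batches)
print(acc, mean_loss)
```

Because the loss is averaged over batches rather than samples, a smaller final batch weighs as much as a full one; with 400 images and a batch size of 16 every batch is full, so the difference does not arise here.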

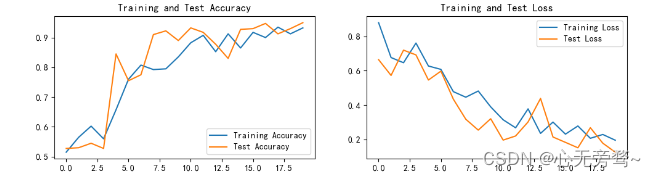

2.5 Visualizing the Results

import warnings

import matplotlib.pyplot as plt

# Uses epochs and the accuracy/loss lists recorded during training
warnings.filterwarnings("ignore")  # suppress warnings
plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False  # render minus signs correctly
plt.rcParams['figure.dpi'] = 100  # resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))
plt.subplot(1, 2, 1)

plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')
plt.show()

The results are visualized below:

You can adjust the learning rate and batch_size yourself; I did not tune the hyperparameters here.

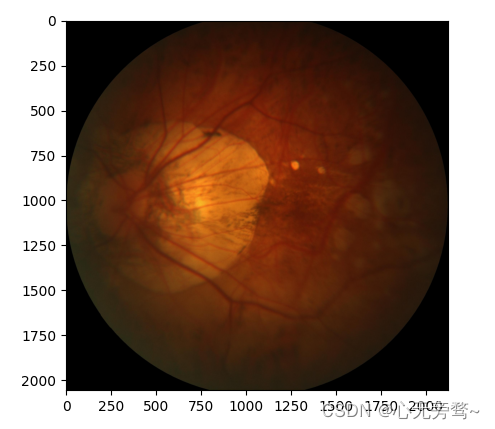
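If you do experiment with `batch_size`, keep in mind that it also changes how many optimizer updates happen per epoch. A small sketch, assuming the 400 training images and 20 epochs used in this article and a hypothetical helper name:

```python
import math


def steps_per_epoch(num_samples, batch_size):
    """Batches per epoch when the last, smaller batch is kept."""
    return math.ceil(num_samples / batch_size)


num_samples, epochs = 400, 20   # training-set size and epoch count from this article
for batch_size in (8, 16, 32):
    per_epoch = steps_per_epoch(num_samples, batch_size)
    print(batch_size, per_epoch, per_epoch * epochs)
# 8 50 1000
# 16 25 500
# 32 13 260
```

Doubling the batch size roughly halves the number of weight updates, so the learning rate or the number of epochs may need to change with it.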

3. Predicting a Single Image

import matplotlib.pyplot as plt
import torch
from PIL import Image
from torchvision.transforms import transforms

from model import SqueezeNet

data_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Resize((224, 224)),
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
])

# Raw string so the backslashes in the Windows path are not treated as escapes
img = Image.open(r"F:\SqueezeNet\data\PALM-Validation400\V0008.jpg")
plt.imshow(img)
img = data_transform(img)
img = torch.unsqueeze(img, dim=0)  # add the batch dimension

name = ['Non-pathologic myopia', 'Pathologic myopia']
model_weight_path = r"F:\SqueezeNet\best_model.pth"
model = SqueezeNet(num_classes=2)
# map_location lets weights saved on a GPU load on a CPU-only machine
model.load_state_dict(torch.load(model_weight_path, map_location='cpu'))
model.eval()

with torch.no_grad():
    output = torch.squeeze(model(img))
    predict = torch.softmax(output, dim=0)
    # Index of the most likely class
    predict_cla = torch.argmax(predict).item()

print('Index:', predict_cla)
print('Prediction: {}, confidence: {}'.format(name[predict_cla], predict[predict_cla].item()))
plt.show()

Index: 1

Prediction: Pathologic myopia, confidence: 0.9768268465995789
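The confidence printed above is simply the softmax probability of the winning class. As a plain-Python illustration of that last step (the two scores below are made up, not the model's actual outputs):

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]   # subtract the max to avoid overflow
    total = sum(exps)
    return [e / total for e in exps]


logits = [0.5, 4.2]                      # made-up scores for classes 0 and 1
probs = softmax(logits)
predicted = probs.index(max(probs))      # argmax -> class index
print(predicted, round(probs[predicted], 3))  # 1 0.976
```

With only two classes, the confidence depends solely on the gap between the two scores.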

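For a more detailed treatment, see the Paddle implementation: "Deep Learning Hands-On Basics: Eye Disease Recognition with a SqueezeNet-Based Convolutional Neural Network (CNN)"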
