參考

3.13 丟棄法

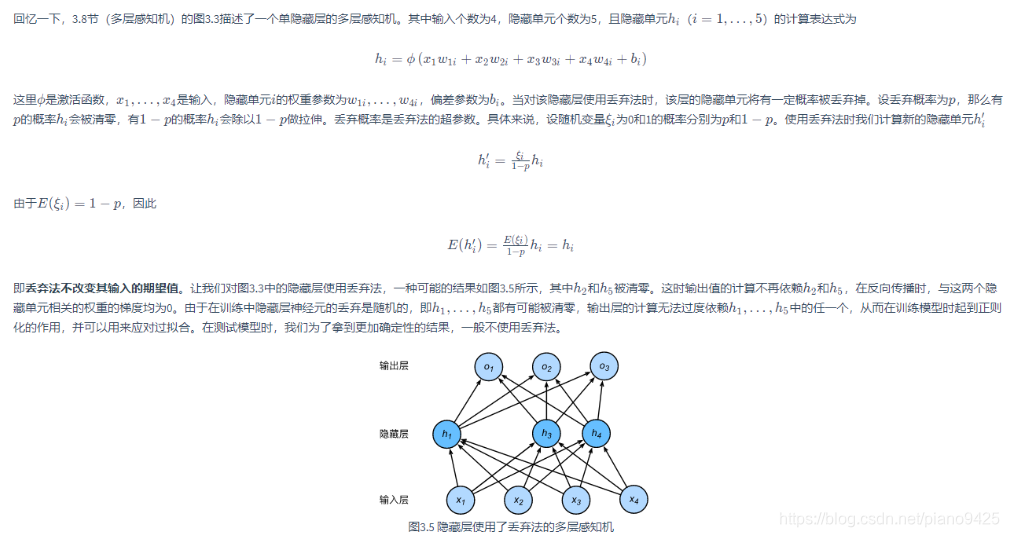

過擬合問題的另一種解決辦法是丟棄法。當對隱藏層使用丟棄法時,隱藏單元有一定概率被丟棄。

3.12.1 方法

3.13.2 從零開始實現

import torch

import torch.nn as nn

import numpy as np

import sys

sys.path.append("..")

import d2lzh_pytorch as d2ldef dropout(X, drop_prob):X = X.float()assert 0 <= drop_prob <= 1keep_prob = 1 - drop_prob# 這種情況下把全部元素都丟棄if keep_prob == 0:return torch.zeros_like(X)mask = (torch.rand(X.shape) < keep_prob).float()return mask * X / keep_prob

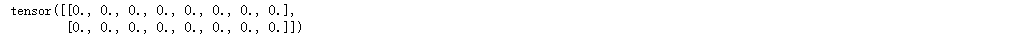

X = torch.arange(16).view(2, 8)

X

dropout(X, 0.5)

dropout(X, 1)

3.13.2.1 定義模型參數

num_inputs, num_outputs, num_hiddens1, num_hiddens2 = 784, 10, 256, 256W1 = torch.tensor(np.random.normal(0, 0.01, size=(num_inputs, num_hiddens1)), dtype=torch.float, requires_grad=True)

b1 = torch.zeros(num_hiddens1, requires_grad=True)

W2 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens1, num_hiddens2)), dtype=torch.float, requires_grad=True)

b2 = torch.zeros(num_hiddens2, requires_grad=True)

W3 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens2, num_outputs)), dtype=torch.float, requires_grad=True)

b3 = torch.zeros(num_outputs, requires_grad=True)params = [W1, b1, W2, b2, W3, b3]

3.13.2.2 定義模型

drop_prob1, drop_prob2 = 0.2, 0.5def net(X, is_training=True):X = X.view(-1, num_inputs)H1 = (torch.matmul(X, W1) + b1).relu()if is_training: # 只在訓練模型時使用丟棄法H1 = dropout(H1, drop_prob1) # 在第一層全連接后添加丟棄層H2 = (torch.matmul(H1, W2) + b2).relu()if is_training:H2 = dropout(H2, drop_prob2) # 在第二層全連接后添加丟棄層return torch.matmul(H2, W3) + b3# 本函數已保存在d2lzh_pytorch

def evaluate_accuracy(data_iter, net):acc_sum, n = 0.0, 0for X, y in data_iter:if isinstance(net, torch.nn.Module):net.eval() # 評估模式, 這會關閉dropoutacc_sum += (net(X).argmax(dim=1) == y).float().sum().item()net.train() # 改回訓練模式else: # 自定義的模型if('is_training' in net.__code__.co_varnames): # 如果有is_training這個參數# 將is_training設置成Falseacc_sum += (net(X, is_training=False).argmax(dim=1) == y).float().sum().item() else:acc_sum += (net(X).argmax(dim=1) == y).float().sum().item() n += y.shape[0]return acc_sum / n

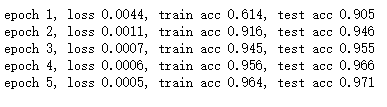

3.13.2.3 訓練和測試模型

num_epochs, lr, batch_size = 5, 100.0, 256

loss = torch.nn.CrossEntropyLoss()

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, params, lr)

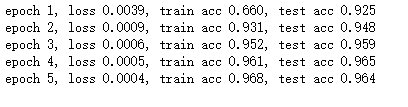

3.13.3 簡潔實現

net = nn.Sequential(d2l.FlattenLayer(),nn.Linear(num_inputs, num_hiddens1),nn.ReLU(),nn.Dropout(drop_prob1),nn.Linear(num_hiddens1, num_hiddens2),nn.ReLU(),nn.Dropout(drop_prob2),nn.Linear(num_hiddens2, 10)

)for param in net.parameters():nn.init.normal_(param, mean=0, std= 0.01)optimizer = torch.optim.SGD(net.parameters(), lr=0.5)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, None, None, optimizer)

![[pytorch、學習] - 4.1 模型構造](http://pic.xiahunao.cn/[pytorch、學習] - 4.1 模型構造)

)

![[pytorch、學習] - 4.2 模型參數的訪問、初始化和共享](http://pic.xiahunao.cn/[pytorch、學習] - 4.2 模型參數的訪問、初始化和共享)

)

和 CASE WHEN 的異同點)

![[pytorch、學習] - 4.4 自定義層](http://pic.xiahunao.cn/[pytorch、學習] - 4.4 自定義層)

)

![[pytorch、學習] - 4.5 讀取和存儲](http://pic.xiahunao.cn/[pytorch、學習] - 4.5 讀取和存儲)

)

![[pytorch、學習] - 4.6 GPU計算](http://pic.xiahunao.cn/[pytorch、學習] - 4.6 GPU計算)