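In recent years the Transformer has become a focal point of deep learning research, thanks to its outstanding performance in natural language processing (NLP). Models built on the self-attention mechanism in particular have driven rapid progress in NLP. As research advanced, the Transformer was carried over from NLP into computer vision, giving rise to the Vision Transformer (ViT). Without relying on conventional convolutional neural networks (CNNs), ViT still achieves excellent results on image classification. This article examines the structure and key features of ViT and uses code examples to show how to train, validate, and run inference with a ViT model in the MindSpore framework.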

ViT Model Structure

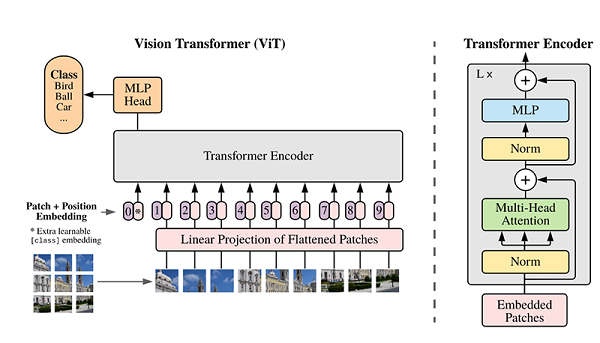

ViT模型的主體結構基于Transformer模型的編碼器(Encoder)部分,其整體結構如下圖所示:

Model Features

Why use Patch Embedding?

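In a standard Transformer, the input is a one-dimensional sequence of word vectors, whereas an image is a two-dimensional matrix of pixels. To turn an image into something a Transformer can process, we split it into small blocks (patches), flatten each patch into a one-dimensional vector, and project it linearly to a fixed embedding dimension. This process is called Patch Embedding. It converts an image into a sequence that plays the same role as a sequence of word vectors, so the Transformer can then process it unchanged.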
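To make the splitting step concrete, here is a minimal NumPy sketch (not MindSpore code; `patchify` is a hypothetical helper name) that cuts a 224×224 RGB image into non-overlapping 16×16 patches and flattens each one. It covers only the splitting; in ViT each flattened vector is afterwards projected linearly to the embedding dimension.

```python
import numpy as np


def patchify(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches.

    Returns an array of shape (num_patches, patch_size * patch_size * C),
    with patches ordered row by row (left to right, top to bottom).
    """
    h, w, c = image.shape
    assert h % patch_size == 0 and w % patch_size == 0, "H and W must be divisible by patch_size"
    gh, gw = h // patch_size, w // patch_size
    # (gh, P, gw, P, C) -> (gh, gw, P, P, C): group the pixels of each patch together
    patches = image.reshape(gh, patch_size, gw, patch_size, c).transpose(0, 2, 1, 3, 4)
    return patches.reshape(gh * gw, patch_size * patch_size * c)


image = np.random.rand(224, 224, 3).astype(np.float32)
tokens = patchify(image, patch_size=16)
print(tokens.shape)  # (196, 768)
```

A 224×224 image yields (224 / 16)² = 196 patches of 16 × 16 × 3 = 768 values each, so the image becomes a sequence of 196 tokens.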

Why use Position Embedding?

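Self-attention by itself ignores the order of its input sequence: shuffling the input tokens simply shuffles the outputs in the same way. For images, this means the spatial relationships between patches would be lost. To address this, we add a position embedding that attaches position information to each patch, so the model can tell where each patch came from and learn the spatial relationships between patches. This is essential for preserving the spatial structure of the image.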
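The claim that self-attention ignores order can be checked numerically. The sketch below is written in NumPy with random weights chosen purely for illustration; `self_attention` is a hypothetical single-head helper, and the fixed cyclic permutation is an arbitrary choice. It shows that permuting the input tokens only permutes the outputs, while adding a position embedding (random here, learned in ViT) breaks that symmetry.

```python
import numpy as np


def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention over an (n, d) token matrix."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)      # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)    # softmax over the keys
    return weights @ v


rng = np.random.default_rng(0)
n, d = 6, 8
x = rng.normal(size=(n, d))
wq, wk, wv = (rng.normal(size=(d, d)) for _ in range(3))
perm = np.array([1, 2, 3, 4, 5, 0])                   # a fixed reordering of the 6 tokens

# Without position information, shuffling the tokens only shuffles the outputs
out = self_attention(x, wq, wk, wv)
out_shuffled = self_attention(x[perm], wq, wk, wv)
print(np.allclose(out[perm], out_shuffled))  # True

# Adding a position embedding ties each slot to a position, so the symmetry is broken
pos = rng.normal(size=(n, d))
out_pos = self_attention(x + pos, wq, wk, wv)
out_pos_shuffled = self_attention(x[perm] + pos, wq, wk, wv)
print(np.allclose(out_pos[perm], out_pos_shuffled))  # False
```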

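- Patch Embedding: the input image is split into multiple patches; each two-dimensional patch is converted into a one-dimensional vector, and a class token and position embeddings are added to form the model input.

- Transformer Encoder: the Block structure of the model body is based on the Transformer encoder, whose main components are Multi-Head Attention and a Feed Forward network.

- Classification head (Head): after the stack of Transformer Encoder blocks, a fully connected layer performs the classification.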
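Putting the three components together, the following shape-only sketch traces a batch through the model. The sizes are assumptions taken from the ViT-B/16 configuration used in this article (224×224 input, 16×16 patches, 768-dimensional embeddings, 1000 classes) and the training batch size of 16; `vit_shapes` is a hypothetical helper that performs no actual computation.

```python
def vit_shapes(batch=16, image_size=224, channels=3, patch_size=16, embed_dim=768, num_classes=1000):
    """Trace tensor shapes through a ViT forward pass (shapes only, no computation)."""
    num_patches = (image_size // patch_size) ** 2
    return {
        "input image": (batch, channels, image_size, image_size),
        "patch embedding": (batch, num_patches, embed_dim),
        "+ class token": (batch, num_patches + 1, embed_dim),
        "+ position embedding": (batch, num_patches + 1, embed_dim),
        "transformer encoder": (batch, num_patches + 1, embed_dim),
        "class token output": (batch, embed_dim),
        "classification head": (batch, num_classes),
    }


for stage, shape in vit_shapes().items():
    print(f"{stage:22s} {shape}")
```

Only the class token's final representation reaches the head, which is why the sequence length drops out just before classification.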

Environment Setup and Data Loading

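Before starting the experiment, make sure Python and MindSpore are installed locally, along with the `download` package used by the code below.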

First, download the dataset for this example, which is a subset selected from ImageNet. The dataset directory is structured as follows:

./dataset/
├── ILSVRC2012_devkit_t12.tar.gz
├── train/
├── infer/
└── val/
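`ImageFolderDataset` treats each subfolder of `train/`, `val/`, or `infer/` as one class. As we understand its documentation, when no `class_indexing` is given the folder names are sorted alphabetically and numbered from 0. The pure-Python sketch below mimics that assumed behavior with a hypothetical `folder_label_mapping` helper and made-up, empty folders named after ImageNet WordNet IDs.

```python
import os
import tempfile


def folder_label_mapping(root: str) -> dict:
    """Map each class subfolder name to an integer label, in sorted name order.

    Assumed to mirror ImageFolderDataset's default labeling when class_indexing is None.
    """
    classes = sorted(entry.name for entry in os.scandir(root) if entry.is_dir())
    return {name: index for index, name in enumerate(classes)}


with tempfile.TemporaryDirectory() as root:
    # Folders are created in unsorted order on purpose
    for wnid in ["n01530575", "n01440764", "n01443537"]:
        os.makedirs(os.path.join(root, wnid))
    mapping = folder_label_mapping(root)

print(mapping)  # {'n01440764': 0, 'n01443537': 1, 'n01530575': 2}
```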

import os

from download import download
import mindspore as ms
from mindspore.dataset import ImageFolderDataset
import mindspore.dataset.vision as transforms

# Download and extract the dataset
dataset_url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/vit_imagenet_dataset.zip"
path = "./"
path = download(dataset_url, path, kind="zip", replace=True)

data_path = './dataset/'

# ImageNet per-channel mean/std, scaled to 0-255 because Normalize runs on decoded uint8 pixels
mean = [0.485 * 255, 0.456 * 255, 0.406 * 255]
std = [0.229 * 255, 0.224 * 255, 0.225 * 255]

dataset_train = ImageFolderDataset(os.path.join(data_path, "train"), shuffle=True)

trans_train = [
    transforms.RandomCropDecodeResize(size=224, scale=(0.08, 1.0), ratio=(0.75, 1.333)),  # decode, random crop, resize
    transforms.RandomHorizontalFlip(prob=0.5),
    transforms.Normalize(mean=mean, std=std),
    transforms.HWC2CHW()  # (H, W, C) -> (C, H, W)
]

dataset_train = dataset_train.map(operations=trans_train, input_columns=["image"])
dataset_train = dataset_train.batch(batch_size=16, drop_remainder=True)
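The mean and std values above are multiplied by 255 because `Normalize` is applied to decoded images whose pixels are still in the 0-255 range. The NumPy sketch below is an illustration of the arithmetic, not the MindSpore implementation; `normalize_hwc_to_chw` is a hypothetical name. It reproduces the `Normalize` and `HWC2CHW` steps for a single white pixel.

```python
import numpy as np

# Per-channel ImageNet statistics, scaled to the 0-255 range as in the training pipeline
mean = np.array([0.485, 0.456, 0.406]) * 255
std = np.array([0.229, 0.224, 0.225]) * 255


def normalize_hwc_to_chw(image_uint8: np.ndarray) -> np.ndarray:
    """Normalize a decoded (H, W, 3) uint8 image per channel, then move channels first."""
    normalized = (image_uint8.astype(np.float32) - mean) / std   # same arithmetic as Normalize
    return normalized.transpose(2, 0, 1)                          # same layout change as HWC2CHW


pixel = np.full((1, 1, 3), 255, dtype=np.uint8)   # one pure white pixel
out = normalize_hwc_to_chw(pixel)
print(out.shape)  # (3, 1, 1)
```

For a white pixel this gives about 2.2489, 2.4286, and 2.64 for the R, G, and B channels, i.e. (1 - mean) / std computed with the unscaled statistics.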

Transformer Fundamentals

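The Transformer was introduced in a 2017 paper ("Attention Is All You Need"), and its main structure consists of stacked encoder and decoder modules. Both the encoder and the decoder are built from Multi-Head Attention, Feed Forward networks, normalization layers, and residual connections.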

The Self-Attention Mechanism

Self-attention is the core of the Transformer. Its main steps are:

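- Input projection: each input vector is mapped to three vectors, a Query (Q), a Key (K), and a Value (V).

- Attention weights: the similarity between each Query and every Key is computed by a dot product, scaled by 1/√d (d being the per-head dimension), and normalized with Softmax.

- Weighted sum: the attention weights are used to compute a weighted sum of the Values, which is the final attention output.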
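The three steps can be written out in a few lines of NumPy. This is a single-head sketch with random, illustrative weights; `softmax` and `self_attention` are hypothetical helpers, not part of MindSpore. The scaling by 1/√d corresponds to `self.scale` in the MindSpore code that follows.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(x, w_q, w_k, w_v):
    # Step 1: map each input vector to a query, a key and a value
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Step 2: dot-product similarity of every query with every key, scaled by 1/sqrt(d),
    # then softmax so that each row of weights sums to 1
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]))
    # Step 3: weighted sum of the values
    return weights @ v, weights


rng = np.random.default_rng(42)
x = rng.normal(size=(4, 8))                 # 4 tokens with 8 features each
w_q, w_k, w_v = (rng.normal(size=(8, 8)) for _ in range(3))
out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (4, 8) (4, 4)
```

Each output row is a convex combination of the value vectors, weighted by how strongly that token's query matches each key.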

The following code implements multi-head self-attention:

import mindspore as ms
from mindspore import nn, ops


class Attention(nn.Cell):
    """Multi-head self-attention."""

    def __init__(self, dim: int, num_heads: int = 8, keep_prob: float = 1.0, attention_keep_prob: float = 1.0):
        super(Attention, self).__init__()
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = ms.Tensor(head_dim ** -0.5)  # 1 / sqrt(head_dim)
        self.qkv = nn.Dense(dim, dim * 3)         # projects to Q, K and V in one layer
        self.attn_drop = nn.Dropout(p=1.0 - attention_keep_prob)
        self.out = nn.Dense(dim, dim)
        self.out_drop = nn.Dropout(p=1.0 - keep_prob)
        self.attn_matmul_v = ops.BatchMatMul()
        self.q_matmul_k = ops.BatchMatMul(transpose_b=True)
        self.softmax = nn.Softmax(axis=-1)

    def construct(self, x):
        b, n, c = x.shape
        qkv = self.qkv(x)
        # (b, n, 3*c) -> (b, n, 3, heads, head_dim) -> (3, b, heads, n, head_dim)
        qkv = ops.reshape(qkv, (b, n, 3, self.num_heads, c // self.num_heads))
        qkv = ops.transpose(qkv, (2, 0, 3, 1, 4))
        q, k, v = ops.unstack(qkv, axis=0)
        # Scaled dot-product scores, softmax over the key axis
        attn = self.q_matmul_k(q, k)
        attn = ops.mul(attn, self.scale)
        attn = self.softmax(attn)
        attn = self.attn_drop(attn)
        # Weighted sum of the values, then merge the heads back to (b, n, c)
        out = self.attn_matmul_v(attn, v)
        out = ops.transpose(out, (0, 2, 1, 3))
        out = ops.reshape(out, (b, n, c))
        out = self.out(out)
        out = self.out_drop(out)
        return out
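The reshape/transpose sequence in `construct` is what splits the `dim` channels into `num_heads` independent heads. The NumPy sketch below uses small illustrative sizes and random values standing in for the output of `self.qkv`; it checks that this vectorized layout gives the same result as slicing each head's channels by hand and running attention per head. Dropout and the output projection are omitted.

```python
import numpy as np


def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


b, n, c, num_heads = 2, 5, 12, 3
head_dim = c // num_heads
rng = np.random.default_rng(0)
qkv = rng.normal(size=(b, n, 3 * c))   # stands in for the output of self.qkv(x)

# Vectorized path, mirroring Attention.construct: (b, n, 3, h, d) -> (3, b, h, n, d)
q, k, v = qkv.reshape(b, n, 3, num_heads, head_dim).transpose(2, 0, 3, 1, 4)
attn = softmax(q @ k.transpose(0, 1, 3, 2) * head_dim ** -0.5)
out = (attn @ v).transpose(0, 2, 1, 3).reshape(b, n, c)

# Reference path: take each head's slice of Q, K and V by hand and attend separately
ref = np.empty_like(out)
for h in range(num_heads):
    cols = slice(h * head_dim, (h + 1) * head_dim)
    qh = qkv[..., :c][..., cols]
    kh = qkv[..., c:2 * c][..., cols]
    vh = qkv[..., 2 * c:][..., cols]
    ref[..., cols] = softmax(qh @ kh.transpose(0, 2, 1) * head_dim ** -0.5) @ vh

print(np.allclose(out, ref))  # True
```

In other words, head h owns channels h * head_dim through (h + 1) * head_dim - 1 of each of Q, K, and V, and the final reshape concatenates the heads back in that order.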

Transformer Encoder

Why use Residual Connections and Normalization Layers?

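In deep neural networks, vanishing and exploding gradients become more severe as layers are added. A residual connection adds each sub-layer's input back to its output through a skip connection, which effectively mitigates these problems and lets information and gradients flow through the network unimpeded. Normalization layers (such as LayerNorm) additionally speed up training and improve the model's stability and generalization. Together, these techniques make it possible to train Transformer models effectively at much greater depth.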

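The Transformer Encoder consists of stacked Self-Attention and Feed Forward layers, with residual connections and normalization layers improving training and generalization. In the implementation below, every sub-layer is preceded by LayerNorm and wrapped in a residual connection, i.e. x + sublayer(LayerNorm(x)), the so-called pre-norm arrangement.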
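A minimal NumPy sketch of one pre-norm residual sub-layer, the pattern that `ResidualCell(nn.SequentialCell([norm, cell]))` builds in the encoder code. `layer_norm` and `pre_norm_residual` are hypothetical helpers, and `eps=1e-5` is an assumed value (MindSpore's `nn.LayerNorm` has its own default).

```python
import numpy as np


def layer_norm(x, gamma, beta, eps=1e-5):
    """Normalize each token over its feature dimension, then scale and shift."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return gamma * (x - mean) / np.sqrt(var + eps) + beta


def pre_norm_residual(x, sublayer, gamma, beta):
    """Compute x + sublayer(LayerNorm(x))."""
    return x + sublayer(layer_norm(x, gamma, beta))


d = 8
gamma, beta = np.ones(d), np.zeros(d)
x = np.random.default_rng(1).normal(loc=3.0, scale=5.0, size=(4, d))

# LayerNorm: every token ends up with (almost exactly) zero mean and unit variance
normed = layer_norm(x, gamma, beta)
print(np.allclose(normed.mean(axis=-1), 0.0, atol=1e-6))  # True
print(np.allclose(normed.std(axis=-1), 1.0, atol=1e-3))   # True

# If a sub-layer outputs zeros, the block reduces to the identity
print(np.allclose(pre_norm_residual(x, lambda h: np.zeros_like(h), gamma, beta), x))  # True
```

The last check shows why residual connections help: even when a sub-layer contributes nothing, the input (and, during backpropagation, the gradient) passes straight through the skip path.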

from typing import Optional

from mindspore import nn


class FeedForward(nn.Cell):
    """Two-layer MLP: Dense -> activation -> Dropout -> Dense -> Dropout."""

    def __init__(self, in_features: int, hidden_features: Optional[int] = None, out_features: Optional[int] = None, activation: nn.Cell = nn.GELU, keep_prob: float = 1.0):
        super(FeedForward, self).__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.dense1 = nn.Dense(in_features, hidden_features)
        self.activation = activation()
        self.dense2 = nn.Dense(hidden_features, out_features)
        self.dropout = nn.Dropout(p=1.0 - keep_prob)

    def construct(self, x):
        x = self.dense1(x)
        x = self.activation(x)
        x = self.dropout(x)
        x = self.dense2(x)
        x = self.dropout(x)
        return x


class ResidualCell(nn.Cell):
    """Wraps a cell with a skip connection: output = cell(x) + x."""

    def __init__(self, cell):
        super(ResidualCell, self).__init__()
        self.cell = cell

    def construct(self, x):
        return self.cell(x) + x


class TransformerEncoder(nn.Cell):
    """Stack of pre-norm blocks: x + Attention(LN(x)), then x + FeedForward(LN(x)).

    drop_path_keep_prob is accepted for interface compatibility but is not used here.
    """

    def __init__(self, dim: int, num_layers: int, num_heads: int, mlp_dim: int, keep_prob: float = 1., attention_keep_prob: float = 1.0, drop_path_keep_prob: float = 1.0, activation: nn.Cell = nn.GELU, norm: nn.Cell = nn.LayerNorm):
        super(TransformerEncoder, self).__init__()
        layers = []
        for _ in range(num_layers):
            normalization1 = norm((dim,))
            normalization2 = norm((dim,))
            attention = Attention(dim=dim, num_heads=num_heads, keep_prob=keep_prob, attention_keep_prob=attention_keep_prob)
            feedforward = FeedForward(in_features=dim, hidden_features=mlp_dim, activation=activation, keep_prob=keep_prob)
            layers.append(nn.SequentialCell([
                ResidualCell(nn.SequentialCell([normalization1, attention])),
                ResidualCell(nn.SequentialCell([normalization2, feedforward]))
            ]))
        self.layers = nn.SequentialCell(layers)

    def construct(self, x):
        return self.layers(x)

ViT Model Input

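ViT splits the input image into patches, converts each patch into a one-dimensional vector, and adds a class token and position embeddings to form the model input. The Patch Embedding implementation below uses a convolution whose kernel size and stride both equal the patch size, so splitting and linear projection happen in a single operation: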

class PatchEmbedding(nn.Cell):
    """Split an image into patches and project each patch to embed_dim."""
    MIN_NUM_PATCHES = 4

    def __init__(self, image_size: int = 224, patch_size: int = 16, embed_dim: int = 768, input_channels: int = 3):
        super(PatchEmbedding, self).__init__()
        self.image_size = image_size
        self.patch_size = patch_size
        self.num_patches = (image_size // patch_size) ** 2
        # kernel_size == stride == patch_size: each output position sees exactly one patch
        self.conv = nn.Conv2d(input_channels, embed_dim, kernel_size=patch_size, stride=patch_size, has_bias=True)

    def construct(self, x):
        x = self.conv(x)                       # (b, embed_dim, h, w)
        b, c, h, w = x.shape
        x = ops.reshape(x, (b, c, h * w))      # (b, embed_dim, num_patches)
        x = ops.transpose(x, (0, 2, 1))        # (b, num_patches, embed_dim)
        return x
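Why a convolution? When `kernel_size` and `stride` both equal the patch size and the image size is divisible by it, each output position of the convolution covers exactly one patch, so the convolution is the same as flattening every patch and applying one shared linear layer. The NumPy sketch below checks this on a small 32×32 image with 16×16 patches and a 10-dimensional embedding (all sizes chosen for illustration), computing the convolution naively without padding.

```python
import numpy as np

rng = np.random.default_rng(0)
c_in, size, p, embed_dim = 3, 32, 16, 10
image = rng.normal(size=(c_in, size, size))          # one CHW image
weight = rng.normal(size=(embed_dim, c_in, p, p))    # convolution kernel, laid out like Conv2d's weight
bias = rng.normal(size=embed_dim)

# Path 1: convolution with kernel_size = stride = p and no padding, computed naively
g = size // p
conv_out = np.empty((embed_dim, g, g))
for i in range(g):
    for j in range(g):
        window = image[:, i * p:(i + 1) * p, j * p:(j + 1) * p]
        conv_out[:, i, j] = np.tensordot(weight, window, axes=3) + bias
tokens_conv = conv_out.reshape(embed_dim, g * g).T   # like the reshape + transpose in construct

# Path 2: cut into patches, flatten each patch, then apply a single linear layer
patches = image.reshape(c_in, g, p, g, p).transpose(1, 3, 0, 2, 4).reshape(g * g, c_in * p * p)
tokens_linear = patches @ weight.reshape(embed_dim, -1).T + bias

print(tokens_conv.shape, np.allclose(tokens_conv, tokens_linear))  # (4, 10) True
```

Using a convolution simply lets the framework do the patch extraction and projection in one optimized kernel.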

Building the Complete ViT

The following code assembles a complete ViT model:

from typing import Optional

import mindspore as ms
from mindspore import nn, ops, Parameter
from mindspore.common.initializer import Normal
from mindspore.common.initializer import initializer


def init(init_type, shape, dtype, name, requires_grad):
    """Create a Parameter filled by the given initializer."""
    initial = initializer(init_type, shape, dtype).init_data()
    return Parameter(initial, name=name, requires_grad=requires_grad)


class ViT(nn.Cell):
    def __init__(self, image_size: int = 224, input_channels: int = 3, patch_size: int = 16, embed_dim: int = 768, num_layers: int = 12, num_heads: int = 12, mlp_dim: int = 3072, keep_prob: float = 1.0, attention_keep_prob: float = 1.0, drop_path_keep_prob: float = 1.0, activation: nn.Cell = nn.GELU, norm: Optional[nn.Cell] = nn.LayerNorm, pool: str = 'cls', num_classes: int = 1000) -> None:
        super(ViT, self).__init__()
        self.patch_embedding = PatchEmbedding(image_size=image_size, patch_size=patch_size, embed_dim=embed_dim, input_channels=input_channels)
        num_patches = self.patch_embedding.num_patches
        # Learnable class token and position embeddings (one extra position for the class token)
        self.cls_token = init(init_type=Normal(sigma=1.0), shape=(1, 1, embed_dim), dtype=ms.float32, name='cls', requires_grad=True)
        self.pos_embedding = init(init_type=Normal(sigma=1.0), shape=(1, num_patches + 1, embed_dim), dtype=ms.float32, name='pos_embedding', requires_grad=True)
        self.pool = pool
        self.pos_dropout = nn.Dropout(p=1.0 - keep_prob)
        self.norm = norm((embed_dim,))
        self.transformer = TransformerEncoder(dim=embed_dim, num_layers=num_layers, num_heads=num_heads, mlp_dim=mlp_dim, keep_prob=keep_prob, attention_keep_prob=attention_keep_prob, drop_path_keep_prob=drop_path_keep_prob, activation=activation, norm=norm)
        self.dropout = nn.Dropout(p=1.0 - keep_prob)
        self.dense = nn.Dense(embed_dim, num_classes)  # classification head

    def construct(self, x):
        x = self.patch_embedding(x)                                    # (b, num_patches, embed_dim)
        cls_tokens = ops.tile(self.cls_token.astype(x.dtype), (x.shape[0], 1, 1))
        x = ops.concat((cls_tokens, x), axis=1)                        # prepend the class token
        x += self.pos_embedding
        x = self.pos_dropout(x)
        x = self.transformer(x)
        x = self.norm(x)
        x = x[:, 0]                                                    # keep only the class token
        if self.training:
            x = self.dropout(x)
        x = self.dense(x)
        return x
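As a sanity check on the architecture, the trainable parameters of the default configuration can be counted by hand. The pure-Python sketch below (`vit_param_count` is a hypothetical helper) follows the layers defined above: a biased patch-projection convolution, the class token and position embeddings, and per encoder layer two LayerNorms, a biased QKV projection, an output projection and a two-layer MLP, followed by a final LayerNorm and the classification head.

```python
def vit_param_count(image_size=224, channels=3, patch_size=16, embed_dim=768,
                    num_layers=12, mlp_dim=3072, num_classes=1000):
    """Count trainable parameters of the ViT above (every Dense/Conv2d layer has a bias)."""
    num_patches = (image_size // patch_size) ** 2
    patch_embed = channels * patch_size * patch_size * embed_dim + embed_dim   # Conv2d weight + bias
    cls_token = embed_dim
    pos_embed = (num_patches + 1) * embed_dim
    layer_norm = 2 * embed_dim                                                 # gamma + beta
    attention = (embed_dim * 3 * embed_dim + 3 * embed_dim) + (embed_dim * embed_dim + embed_dim)
    feed_forward = (embed_dim * mlp_dim + mlp_dim) + (mlp_dim * embed_dim + embed_dim)
    per_layer = 2 * layer_norm + attention + feed_forward
    head = embed_dim * num_classes + num_classes
    return patch_embed + cls_token + pos_embed + num_layers * per_layer + layer_norm + head


print(f"{vit_param_count():,}")  # 86,567,656
```

The result, about 86.6 million parameters, is in line with the commonly quoted size of ViT-B/16; almost all of it sits in the 12 encoder layers (about 7.1 million each).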

Model Training and Inference

Model Training

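Before training, we need to define the loss function, the optimizer, and the callbacks. The code below loads pretrained ViT-B/16 weights and fine-tunes the model: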

import mindspore as ms
from mindspore import nn, ops, train
from mindspore.nn import LossBase
from mindspore.train import LossMonitor, TimeMonitor, CheckpointConfig, ModelCheckpoint

# Hyperparameters
epoch_size = 10
momentum = 0.9
num_classes = 1000
resize = 224
step_size = dataset_train.get_dataset_size()

# Build the model
network = ViT()

# Load the pretrained ViT-B/16 parameters
vit_url = "https://download.mindspore.cn/vision/classification/vit_b_16_224.ckpt"
path = "./ckpt/vit_b_16_224.ckpt"
vit_path = download(vit_url, path, replace=True)
param_dict = ms.load_checkpoint(vit_path)
ms.load_param_into_net(network, param_dict)

# Learning rate: cosine decay from 5e-5 down to 0
lr = nn.cosine_decay_lr(min_lr=float(0), max_lr=0.00005, total_step=epoch_size * step_size, step_per_epoch=step_size, decay_epoch=10)

# Optimizer (for nn.Adam the third positional argument is beta1, set here to 0.9)
network_opt = nn.Adam(network.trainable_params(), lr, momentum)


# Loss function: softmax cross-entropy with label smoothing
class CrossEntropySmooth(LossBase):
    def __init__(self, sparse=True, reduction='mean', smooth_factor=0., num_classes=1000):
        super(CrossEntropySmooth, self).__init__()
        self.onehot = ops.OneHot()
        self.sparse = sparse
        self.on_value = ms.Tensor(1.0 - smooth_factor, ms.float32)
        self.off_value = ms.Tensor(1.0 * smooth_factor / (num_classes - 1), ms.float32)
        self.ce = nn.SoftmaxCrossEntropyWithLogits(reduction=reduction)

    def construct(self, logit, label):
        if self.sparse:
            # Turn integer labels into smoothed one-hot targets
            label = self.onehot(label, ops.shape(logit)[1], self.on_value, self.off_value)
        loss = self.ce(logit, label)
        return loss


network_loss = CrossEntropySmooth(sparse=True, reduction="mean", smooth_factor=0.1, num_classes=num_classes)

# Save a checkpoint at the end of every epoch
ckpt_config = CheckpointConfig(save_checkpoint_steps=step_size, keep_checkpoint_max=100)
ckpt_callback = ModelCheckpoint(prefix='vit_b_16', directory='./ViT', config=ckpt_config)

# Initialize the model: O2 mixed precision on Ascend, full precision (O0) elsewhere
ascend_target = (ms.get_context("device_target") == "Ascend")
if ascend_target:
    model = train.Model(network, loss_fn=network_loss, optimizer=network_opt, metrics={"acc"}, amp_level="O2")
else:
    model = train.Model(network, loss_fn=network_loss, optimizer=network_opt, metrics={"acc"}, amp_level="O0")

# Train the model
model.train(epoch_size, dataset_train, callbacks=[ckpt_callback, LossMonitor(125), TimeMonitor(125)], dataset_sink_mode=False)
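`CrossEntropySmooth` replaces hard one-hot labels with smoothed targets: the true class gets `1 - smooth_factor`, and each of the other `num_classes - 1` classes gets `smooth_factor / (num_classes - 1)`. The NumPy sketch below reproduces that target construction and a plain softmax cross-entropy on a 5-class toy example; the sizes and logits are illustrative, and `smoothed_one_hot` and `softmax_cross_entropy` are hypothetical helpers.

```python
import numpy as np


def smoothed_one_hot(labels, num_classes, smooth_factor):
    """on_value for the true class, off_value spread evenly over the remaining classes."""
    on_value = 1.0 - smooth_factor
    off_value = smooth_factor / (num_classes - 1)
    targets = np.full((len(labels), num_classes), off_value)
    targets[np.arange(len(labels)), labels] = on_value
    return targets


def softmax_cross_entropy(logits, targets):
    """Batch mean of -sum(targets * log_softmax(logits))."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-(targets * log_probs).sum(axis=1).mean())


targets = smoothed_one_hot(np.array([2]), num_classes=5, smooth_factor=0.1)
print(targets[0].tolist())  # [0.025, 0.025, 0.9, 0.025, 0.025]

logits = np.array([[0.0, 0.0, 10.0, 0.0, 0.0]])   # a very confident, correct prediction
hard = smoothed_one_hot(np.array([2]), 5, 0.0)     # smooth_factor=0 gives ordinary one-hot targets
print(softmax_cross_entropy(logits, hard) < softmax_cross_entropy(logits, targets))  # True
```

The last line shows the intended effect: an extremely confident prediction is penalized more under smoothed targets than under hard ones, which discourages the model from becoming overconfident.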

Model Validation

Validation mainly uses the ImageFolderDataset, CrossEntropySmooth, and Model interfaces. The code for validating the ViT model is as follows:

dataset_val = ImageFolderDataset(os.path.join(data_path, "val"), shuffle=True)

trans_val = [
    transforms.Decode(),
    transforms.Resize(224 + 32),    # resize the shorter side to 256
    transforms.CenterCrop(224),
    transforms.Normalize(mean=mean, std=std),
    transforms.HWC2CHW()
]

dataset_val = dataset_val.map(operations=trans_val, input_columns=["image"])
dataset_val = dataset_val.batch(batch_size=16, drop_remainder=True)

# Build the model
network = ViT()

# Load the pretrained checkpoint downloaded earlier
param_dict = ms.load_checkpoint(vit_path)
ms.load_param_into_net(network, param_dict)

network_loss = CrossEntropySmooth(sparse=True, reduction="mean", smooth_factor=0.1, num_classes=num_classes)

# Evaluation metrics
eval_metrics = {'Top_1_Accuracy': train.Top1CategoricalAccuracy(),
                'Top_5_Accuracy': train.Top5CategoricalAccuracy()}

if ascend_target:
    model = train.Model(network, loss_fn=network_loss, optimizer=network_opt, metrics=eval_metrics, amp_level="O2")
else:
    model = train.Model(network, loss_fn=network_loss, optimizer=network_opt, metrics=eval_metrics, amp_level="O0")

# Evaluate the model
result = model.eval(dataset_val)
print(result)
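The two metrics reported by `model.eval` differ only in how many guesses are allowed. The NumPy sketch below illustrates the definitions on three made-up samples with six classes; `top_k_accuracy` is a hypothetical helper, and it makes no attempt to reproduce how MindSpore's metric classes break ties.

```python
import numpy as np


def top_k_accuracy(logits: np.ndarray, labels: np.ndarray, k: int) -> float:
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    top_k = np.argsort(logits, axis=1)[:, -k:]   # indices of the k largest scores per row
    return float(np.mean([label in row for label, row in zip(labels, top_k)]))


logits = np.array([
    [0.1, 0.7, 0.2, 0.0, 0.0, 0.0],     # true label 1 is the best guess
    [0.5, 0.1, 0.4, 0.0, 0.0, 0.0],     # true label 2 is only the runner-up
    [0.3, 0.2, 0.1, 0.25, 0.15, 0.0],   # true label 5 has the lowest score
])
labels = np.array([1, 2, 5])
top1 = top_k_accuracy(logits, labels, 1)
top5 = top_k_accuracy(logits, labels, 5)
print(top1, top5)
```

Here Top-1 accuracy is 1/3 (only the first sample's best guess is right) and Top-5 accuracy is 2/3 (the second sample's label is within the top five; the third sample's label is ranked last of six).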

Model Inference

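Before running inference, we first define the preprocessing applied to the images to be predicted. The inference code is as follows. Note that the loop relies on two helper functions, `index2label` (mapping a class index to an ImageNet class name) and `show_result` (drawing the prediction on the image and saving it), which are not defined in this article.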

import numpy as np

dataset_infer = ImageFolderDataset(os.path.join(data_path, "infer"), shuffle=True)

trans_infer = [
    transforms.Decode(),
    transforms.Resize([224, 224]),
    transforms.Normalize(mean=mean, std=std),
    transforms.HWC2CHW()
]

dataset_infer = dataset_infer.map(operations=trans_infer, input_columns=["image"], num_parallel_workers=1)
dataset_infer = dataset_infer.batch(1)

# Run inference on each image
for i, image in enumerate(dataset_infer.create_dict_iterator(output_numpy=True)):
    image = image["image"]
    image = ms.Tensor(image)
    prob = model.predict(image)                          # scores of shape (1, num_classes)
    label = int(np.argmax(prob.asnumpy(), axis=1)[0])    # index of the highest score
    mapping = index2label()                              # helper not defined here: index -> class name
    output = {label: mapping[label]}
    print(output)
    # show_result is a helper not defined here; it writes the prediction onto the image
    show_result(img="./dataset/infer/n01440764/ILSVRC2012_test_00000279.JPEG",
                result=output,
                out_file="./dataset/infer/ILSVRC2012_test_00000279.JPEG")
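Because the network ends in a `Dense` layer without a softmax, `model.predict` returns logits rather than probabilities; `argmax` picks the same class either way. If probabilities or several candidate classes are wanted, a sketch like the one below could be used. It is NumPy only, `decode_prediction` is a hypothetical helper, and the four-entry `mapping` (names chosen for illustration) merely stands in for the dictionary returned by `index2label`.

```python
import numpy as np


def decode_prediction(logits: np.ndarray, mapping: dict, top_k: int = 3):
    """Turn one row of logits into (index, class name, probability) tuples, best first."""
    shifted = logits - logits.max()
    probs = np.exp(shifted) / np.exp(shifted).sum()   # softmax: logits -> probabilities
    order = np.argsort(probs)[::-1][:top_k]           # highest probability first
    return [(int(i), mapping.get(int(i), "unknown"), float(probs[i])) for i in order]


# Hypothetical 4-class mapping standing in for index2label()
mapping = {0: "tench", 1: "goldfish", 2: "great white shark", 3: "tiger shark"}
logits = np.array([4.0, 1.0, 0.5, -2.0])
for index, name, prob in decode_prediction(logits, mapping):
    print(index, name, round(prob, 3))
```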
