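Feed-Forward Fully Connected Layer

What is the feed-forward fully connected layer:

In the Transformer, the feed-forward fully connected layer is a fully connected network consisting of two linear layers.

Purpose of the feed-forward fully connected layer:

The attention mechanism alone may not fit complex processes well enough, so two additional linear layers are added to strengthen the model's capacity.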
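As a compact summary of what this layer computes, the expression below is one way to write it. The symbols $W_1$, $b_1$, $W_2$, $b_2$ are notation introduced here for the weights and biases of the two linear layers; they do not appear in the original text. Dropout is placed between the two layers, matching the order of operations in the `forward` method of the class that follows.

$$
\mathrm{FFN}(x) = \mathrm{Dropout}\big(\max(0,\; x W_1 + b_1)\big)\, W_2 + b_2,
\qquad W_1 \in \mathbb{R}^{d_{\text{model}} \times d_{\text{ff}}},\quad
W_2 \in \mathbb{R}^{d_{\text{ff}} \times d_{\text{model}}}
$$

The same transformation is applied to every token position independently, so the output keeps the input shape (batch, tokens, $d_{\text{model}}$).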

code

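    # Feed-forward fully connected layer
    import torch.nn as nn
    import torch.nn.functional as F


    class PositionwiseFeedForward(nn.Module):
        def __init__(self, d_model, d_ff, dropout=0.1) -> None:
            """
            d_model : input dimension of the first linear layer
            d_ff    : dimension of the hidden layer
            dropout : dropout probability
            """
            super(PositionwiseFeedForward, self).__init__()
            self.line1 = nn.Linear(d_model, d_ff)
            self.line2 = nn.Linear(d_ff, d_model)
            # The argument is named `dropout` so that it matches the name used here
            self.dropout = nn.Dropout(dropout)

        def forward(self, x):
            return self.line2(self.dropout(F.relu(self.line1(x))))

Test: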

Output

    ff_result.shape = torch.Size([2, 4, 512])

    ff_result = tensor([[[-0.0589, -1.3885, -0.8852, ..., -0.4463, -0.9892,  2.7384],
             [ 0.2426, -1.1040, -1.1298, ..., -0.9296, -1.5262,  1.0632],
             [ 0.0318, -0.8362, -0.9389, ..., -1.6359, -1.8531, -0.1163],
             [ 1.1119, -1.2007, -1.5487, ..., -0.8869,  0.1711,  1.7431]],

            [[-0.2358, -0.9319,  0.8866, ..., -1.2987,  0.2001,  1.5415],
             [-0.1448, -0.7505, -0.3023, ..., -0.2585, -0.8902,  0.6206],
             [ 1.8106, -0.8460,  1.6487, ..., -1.1931,  0.0535,  0.8415],
             [ 0.2669, -0.3897,  1.1560, ...,  0.1138, -0.2795,  1.8780]]],
           grad_fn=<ViewBackward0>)

Normalization Layer

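Purpose of the normalization layer:

It is a standard layer that deep network models generally need. As the number of layers grows, the values produced after many layers of computation can become too large or too small, which may destabilize training and make the model converge very slowly. A normalization layer is therefore inserted after a certain number of layers to keep the feature values within a reasonable range.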
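To make the normalization concrete, here is a minimal sketch in plain Python (standard library only, no PyTorch) that normalizes a single feature vector. The function name `layer_norm` and the sample values are made up for illustration. The learnable scale and shift parameters are left out, and the sample standard deviation (dividing by n - 1) is used, which is also what `torch.std` computes by default.

```python
import statistics


def layer_norm(row, eps=1e-6):
    """Normalize one feature vector to zero mean and roughly unit standard deviation."""
    mean = statistics.fmean(row)
    # Sample standard deviation (divides by n - 1)
    std = statistics.stdev(row)
    # eps sits in the denominator to avoid division by zero
    return [(v - mean) / (std + eps) for v in row]


# Hypothetical feature values that have grown large after many layers
row = [100.0, 200.0, 300.0, 400.0]
normed = layer_norm(row)
print([round(v, 4) for v in normed])
```

Whatever the scale of the input, the normalized values are centered on zero with a spread close to one, which is the "reasonable range" the paragraph above refers to.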

code

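    import torch
    import torch.nn as nn


    class LayerNorm(nn.Module):
        def __init__(self, features, eps=1e-6) -> None:
            # features: word-embedding dimension
            # eps: a small number added to the denominator to avoid division by zero
            super(LayerNorm, self).__init__()
            # Learnable parameters of the normalization layer, updated during training
            self.w = nn.Parameter(torch.ones(features))
            self.b = nn.Parameter(torch.zeros(features))
            self.eps = eps

        def forward(self, x):
            mean = x.mean(-1, keepdim=True)
            stddev = x.std(-1, keepdim=True)
            # * is element-wise multiplication, not a dot product
            return self.w * (x - mean) / (stddev + self.eps) + self.b

Test: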

Output:

    ln_result.shape = torch.Size([2, 4, 512])

    ln_result = tensor([[[ 1.3255e+00,  7.7968e-02, -1.7036e+00, ..., -1.3097e-01,  4.9385e-01,  1.3975e-03],
             [-1.0717e-01, -1.8999e-01, -1.0603e+00, ...,  2.9285e-01,  1.0337e+00,  1.0597e+00],
             [ 1.0801e+00, -1.5308e+00, -1.6577e+00, ..., -1.0050e-01, -3.7577e-02,  4.1453e-01],
             [ 4.2174e-01, -1.1476e-01, -5.9897e-01, ..., -8.2557e-01,  1.2285e+00,  2.2961e-01]],

            [[-1.3024e-01, -6.9125e-01, -8.4373e-01, ..., -4.7106e-01,  2.3697e-01,  2.4667e+00],
             [-1.8319e-01, -5.0278e-01, -6.6853e-01, ..., -3.3992e-02, -4.8510e-02,  2.3002e+00],
             [-5.7036e-01, -1.4439e+00, -2.9533e-01, ..., -4.9297e-01,  9.9002e-01,  9.1294e-01],
             [ 2.8479e-02, -1.2107e+00, -4.9597e-01, ..., -6.0751e-01,  3.1257e-01,  1.7796e+00]]],
           grad_fn=<AddBackward0>)

Sublayer Connection Structure

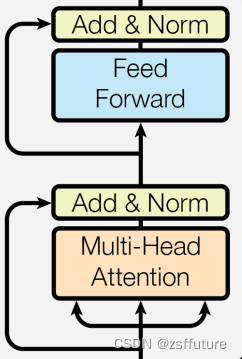

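What is the sublayer connection structure:

As the figure shows, the path through each sublayer and its normalization layer also uses a residual connection (skip connection). We therefore call this whole part a sublayer connection, meaning the sublayer together with its connection structure. Each encoder layer contains two sublayers, and these two sublayers plus their surrounding connections form two sublayer connection structures.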
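The residual idea can be sketched without PyTorch. The helper below is a simplified stand-in written for illustration: it works on plain lists and leaves out dropout so the result is deterministic. The names `sublayer_connection`, `identity_norm`, `zero_sublayer` and `double_sublayer` are hypothetical. It follows the same order as the class shown after this sketch: normalize first, apply the sublayer, then add the original input back.

```python
def sublayer_connection(x, sublayer, norm):
    """Residual connection: x + sublayer(norm(x)). Dropout is omitted in this sketch."""
    transformed = sublayer(norm(x))
    return [a + b for a, b in zip(x, transformed)]


# Hypothetical stand-ins, used only to show the data flow
def identity_norm(v):
    return list(v)


def zero_sublayer(v):
    return [0.0 for _ in v]


def double_sublayer(v):
    return [2.0 * a for a in v]


x = [1.0, -2.0, 3.0]
# A sublayer that contributes nothing leaves the input unchanged
print(sublayer_connection(x, zero_sublayer, identity_norm))
# Otherwise the output is the input plus the sublayer's contribution
print(sublayer_connection(x, double_sublayer, identity_norm))
```

Because the input is always added back, a sublayer only has to learn a correction to its input, which is what makes deep stacks of such blocks easier to train.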

code

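    # Sublayer connection structure (uses the LayerNorm class defined above)
    class SublayerConnection(nn.Module):
        def __init__(self, size, dropout=0.1) -> None:
            """
            size : word-embedding dimension
            """
            super(SublayerConnection, self).__init__()
            self.norm = LayerNorm(size)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, sublayer):
            # sublayer: a sublayer module or a function
            # "x +" is the skip (residual) connection
            return x + self.dropout(sublayer(self.norm(x)))

The test code is placed at the end.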

Output:

    sc_result.shape = torch.Size([2, 4, 512])

    sc_result = tensor([[[-1.8123e+00, -2.2030e+01,  3.1459e+00, ..., -1.3725e+01, -6.1578e+00,  2.2611e+01],
             [ 4.1956e+00,  1.9670e+01,  0.0000e+00, ...,  2.3616e+01,  3.8118e+00,  6.4224e+01],
             [-7.8954e+00,  8.5818e+00, -7.8634e+00, ...,  1.5810e+01,  3.5864e-01,  1.8220e+01],
             [-2.5320e+01, -2.8745e+01, -3.6269e+01, ..., -1.8110e+01, -1.7574e+01,  2.9502e+01]],

            [[-9.3402e+00,  1.0549e+01, -9.0477e+00, ...,  1.5789e+01,  2.6289e-01,  1.8317e+01],
             [-4.0251e+01,  1.5518e+01,  1.9928e+01, ..., -1.4024e+01, -3.4640e-02,  1.8811e-01],
             [-2.6166e+01,  2.1279e+01, -1.1375e+01, ..., -1.9781e+00, -6.4913e+00, -3.8984e+01],
             [ 2.1043e+01, -3.5800e+01,  6.4603e+01, ...,  2.2372e+01,  3.0018e+01, -3.0919e+01]]],
           grad_fn=<AddBackward0>)

Encoder Layer

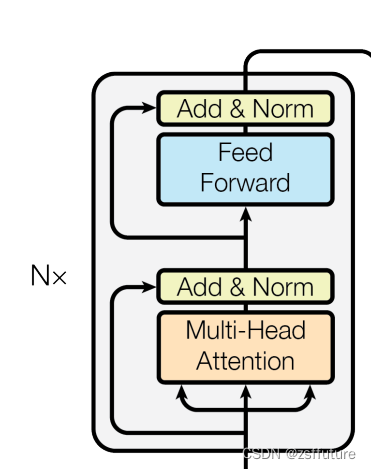

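Purpose of the encoder layer:

As the building block of the encoder, each encoder layer performs one round of feature extraction on its input, that is, one encoding step, as shown in the figure above.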

code

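    # Encoder layer (uses clones and SublayerConnection from the full listing at the end)
    class EncoderLayer(nn.Module):
        def __init__(self, size, self_attn, feed_forward, dropout) -> None:
            """
            size         : word-embedding dimension
            self_attn    : an instance of the multi-head self-attention sublayer
            feed_forward : the feed-forward fully connected network
            """
            super(EncoderLayer, self).__init__()
            self.self_attn = self_attn
            self.feed_forward = feed_forward
            # An encoder layer has two sublayer connections, so clone the structure twice
            self.sublayer = clones(SublayerConnection(size, dropout), 2)
            self.size = size

        def forward(self, x, mask):
            # Use self.self_attn, the instance stored on this layer, not a global name
            x = self.sublayer[0](x, lambda x: self.self_attn(x, x, x, mask))
            return self.sublayer[1](x, self.feed_forward)

The test code is placed at the end.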

Output:

    el_result.shape = torch.Size([2, 4, 512])

    el_result = tensor([[[-1.4834e+01, -4.3176e+00,  1.2379e+01, ..., -1.0715e+01, -6.8350e-01, -5.2663e+00],
             [-1.7895e+01, -5.9179e+01,  1.0283e+01, ...,  9.7986e+00,  2.2730e+01,  1.6832e+01],
             [ 5.0309e+00, -6.9362e+00, -2.6385e-01, ..., -1.2178e+01,  3.1495e+01, -1.9781e-02],
             [ 1.6883e+00,  3.9012e+01,  3.2095e-01, ..., -6.1469e-01,  3.8988e+01,  2.2591e+01]],

            [[ 4.8033e+00, -5.5316e+00,  1.4400e+01, ..., -1.1599e+01,  3.1904e+01, -1.4026e+01],
             [ 8.6239e+00,  1.3545e+01,  3.9492e+01, ..., -8.3500e+00,  2.6721e+01,  4.4794e+00],
             [-2.0212e+01,  1.6034e+01, -1.9680e+01, ..., -4.7649e+00, -1.1372e+01, -3.3566e+01],
             [ 1.0816e+01, -1.7987e+01,  2.0039e+01, ..., -4.7768e+00, -1.9426e+01,  2.7683e+01]]],
           grad_fn=<AddBackward0>)

Encoder

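Purpose of the encoder:

The encoder performs the specified feature extraction on the input, also called encoding. It is built by stacking N encoder layers.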
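The stacking can be illustrated with a small standard-library sketch. `ToyLayer` and `encode` are hypothetical stand-ins invented for this example; the real layers are the PyTorch modules in this article, and the final normalization step is left out here. The sketch shows two things: the input passes through the N layers in order, and the layers are deep copies, so each one holds its own parameters rather than sharing them.

```python
import copy


class ToyLayer:
    """Hypothetical stand-in for an encoder layer: adds its own offset to every value."""

    def __init__(self, offset):
        self.offset = offset

    def __call__(self, x):
        return [v + self.offset for v in x]


def clones(layer, n):
    """Make n independent deep copies of a layer."""
    return [copy.deepcopy(layer) for _ in range(n)]


def encode(x, layers):
    """Feed x through every layer in turn; each layer's output is the next layer's input."""
    for layer in layers:
        x = layer(x)
    return x


layers = clones(ToyLayer(1.0), 3)
# Changing one copy does not affect the others, because the copies are not shared
layers[0].offset = 10.0
print(encode([0.0, 1.0], layers))
```

If the same object were reused N times instead of being deep-copied, every "layer" would share one set of weights, which is not what the encoder described here intends.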

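code

    # Encoder (uses clones and LayerNorm from the full listing at the end)
    class Encoder(nn.Module):
        def __init__(self, layer, N) -> None:
            super(Encoder, self).__init__()
            self.layers = clones(layer, N)
            self.norm = LayerNorm(layer.size)

        def forward(self, x, mask):
            """
            The inputs of forward are the same as for an encoder layer:
            x is the output of the previous layer, mask is the mask tensor.
            """
            # Loop over the cloned encoder layers; each iteration produces a new x,
            # so after the loop x has passed through all N encoder layers.
            # Finally apply the normalization layer self.norm and return the result.
            for layer in self.layers:
                x = layer(x, mask)
            return self.norm(x)

The test code is below: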

Output:

    en_result.shape : torch.Size([2, 4, 512])

    en_result : tensor([[[-2.7477e-01, -1.1117e+00,  6.1682e-02, ...,  6.7421e-01, -6.2473e-02, -4.6477e-02],
             [-7.7232e-01, -7.6969e-01, -2.0160e-01, ...,  2.6131e+00, -1.9882e+00,  1.3715e+00],
             [-1.4178e+00,  2.6184e-01,  1.1888e-01, ..., -9.9172e-01,  1.3337e-01,  1.3132e+00],
             [-1.3268e+00, -1.1559e+00, -1.1774e+00, ..., -8.1548e-01, -2.8089e-02,  1.4730e-03]],

            [[-1.3472e+00,  4.4969e-01, -4.3498e-02, ..., -9.8910e-01,  7.4551e-02,  1.1824e+00],
             [-2.2395e-02,  3.1730e-01,  6.8652e-02, ...,  4.3939e-01,  2.8600e+00,  3.2169e-01],
             [-7.2252e-01, -7.6787e-01, -7.5412e-01, ...,  6.3915e-02,  1.2210e+00, -2.3871e+00],
             [ 1.6294e-02, -4.8995e-02, -2.2887e-02, ..., -7.7798e-01,  4.4148e+00,  1.7802e-01]]],
           grad_fn=<AddBackward0>)

Test Code

    from inputs import Embeddings, PositionalEncoding
    import numpy as np
    import torch
    import torch.nn.functional as F
    import torch.nn as nn
    import matplotlib.pyplot as plt
    import math
    import copy


    def subsequent_mask(size):
        """
        Build a mask tensor that hides subsequent positions. `size` is the size of
        the last two dimensions of the mask, which together form a square matrix.
        """
        attn_shape = (1, size, size)
        # Fill this shape with ones using np.ones and keep the strictly upper triangle
        subsequent_mask = np.triu(np.ones(attn_shape), k=1).astype('uint8')
        # Convert the numpy array to a torch tensor after computing 1 - mask.
        # This flips the triangular matrix: every 0 becomes 1 and every 1 becomes 0.
        return torch.from_numpy(1 - subsequent_mask)


    def attention(query, key, value, mask=None, dropout=None):
        """
        Attention mechanism. The inputs are query, key, value and mask.
        query, key and value are expected to have shape
        batch * number_token * embedding.
        """
        # Dimension of the word embedding
        d_k = query.size(-1)
        # Following the attention formula, multiply query by the transpose of key and
        # scale by 1 / sqrt(d_k) to get the scores. Why the transpose?
        # batch * number_token * embedding X batch * embedding * number_token
        #   = batch * number_token * number_token
        # The result is a square matrix.
        scores = torch.matmul(query, key.transpose(-2, -1)) / math.sqrt(d_k)
        # Apply the mask if one is given
        if mask is not None:
            scores = scores.masked_fill(mask == 0, -1e9)
        # Softmax over the last dimension of scores. Why the last dimension?
        # scores is a square matrix; the columns of each row hold the similarity
        # between the current token and every token, so softmax runs along the columns.
        p_attn = F.softmax(scores, dim=-1)  # the final attention tensor
        # Optionally apply dropout to randomly zero some entries
        if dropout is not None:
            p_attn = dropout(p_attn)
        # Finally multiply p_attn by value to get the attention representation of
        # query, and return the attention tensor as well. Resulting shape:
        # batch * number_token * number_token X batch * number_token * embedding
        #   = batch * number_token * embedding
        return torch.matmul(p_attn, value), p_attn


    def clones(module, N):
        """Clone a network layer N times; N is the number of copies."""
        return nn.ModuleList([copy.deepcopy(module) for _ in range(N)])


    class MultiHeadedAttention(nn.Module):
        def __init__(self, head, embedding_dim, dropout=0.1) -> None:
            """head: number of heads; embedding_dim: word-embedding dimension"""
            super(MultiHeadedAttention, self).__init__()
            # Multi-head attention splits the embedding vector, so embedding_dim
            # must be divisible by the number of heads
            assert embedding_dim % head == 0
            # Dimension d_k of the slice of the word vector given to each head
            self.d_k = embedding_dim // head
            # Number of heads
            self.head = head
            # The linear layers do not split the word vector and must keep the
            # embedding dimension, so their weights are square matrices
            self.linears = clones(nn.Linear(embedding_dim, embedding_dim), 4)
            # Attention tensor
            self.attn = None
            # Dropout
            self.dropout = nn.Dropout(p=dropout)

        def forward(self, query, key, value, mask=None):
            if mask is not None:
                # Add a dimension, because there are now multiple heads
                mask = mask.unsqueeze(0)
            batch_size = query.size(0)
            # The inputs first go through the linear layers: zip pairs each layer with
            # its input, then view and transpose reshape the output.
            # query, key and value each get their own linear layer, and each output is
            # split right after that layer. This split is where the heads come from.
            query, key, value = \
                [model(x).view(batch_size, -1, self.head, self.d_k).transpose(1, 2)
                 for model, x in zip(self.linears, (query, key, value))]
            # Pass the per-head tensors to the attention function
            x, self.attn = attention(query, key, value, mask, self.dropout)
            # The per-head result is a 4-D tensor and has to be reshaped back
            x = x.transpose(1, 2).contiguous().view(batch_size, -1, self.head * self.d_k)
            return self.linears[-1](x)


    # Feed-forward fully connected layer
    class PositionwiseFeedForward(nn.Module):
        def __init__(self, d_model, d_ff, dropout=0.1) -> None:
            """
            d_model : input dimension of the first linear layer
            d_ff    : dimension of the hidden layer
            dropout : dropout probability
            """
            super(PositionwiseFeedForward, self).__init__()
            self.line1 = nn.Linear(d_model, d_ff)
            self.line2 = nn.Linear(d_ff, d_model)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x):
            return self.line2(self.dropout(F.relu(self.line1(x))))


    # Normalization layer
    class LayerNorm(nn.Module):
        def __init__(self, features, eps=1e-6) -> None:
            # features: word-embedding dimension
            # eps: a small number added to the denominator to avoid division by zero
            super(LayerNorm, self).__init__()
            # Learnable parameters of the normalization layer, updated during training
            self.w = nn.Parameter(torch.ones(features))
            self.b = nn.Parameter(torch.zeros(features))
            self.eps = eps

        def forward(self, x):
            mean = x.mean(-1, keepdim=True)
            stddev = x.std(-1, keepdim=True)
            # * is element-wise multiplication, not a dot product
            return self.w * (x - mean) / (stddev + self.eps) + self.b


    # Sublayer connection structure
    class SublayerConnection(nn.Module):
        def __init__(self, size, dropout=0.1) -> None:
            """
            size : word-embedding dimension
            """
            super(SublayerConnection, self).__init__()
            self.norm = LayerNorm(size)
            self.dropout = nn.Dropout(dropout)

        def forward(self, x, sublayer):
            # sublayer: a sublayer module or a function
            # "x +" is the skip (residual) connection
            return x + self.dropout(sublayer(self.norm(x)))


    # Encoder layer
    class EncoderLayer(nn.Module):
        def __init__(self, size, self_attn, feed_forward, dropout) -> None:
            """
            size         : word-embedding dimension
            self_attn    : an instance of the multi-head self-attention sublayer
            feed_forward : the feed-forward fully connected network
            """
            super(EncoderLayer, self).__init__()
            self.self_attn = self_attn
            self.feed_forward = feed_forward
            # An encoder layer has two sublayer connections, so clone the structure twice
            self.sublayer = clones(SublayerConnection(size, dropout), 2)
            self.size = size

        def forward(self, x, mask):
            # Use self.self_attn, the instance stored on this layer, not a global name
            x = self.sublayer[0](x, lambda x: self.self_attn(x, x, x, mask))
            return self.sublayer[1](x, self.feed_forward)


    # Encoder
    class Encoder(nn.Module):
        def __init__(self, layer, N) -> None:
            super(Encoder, self).__init__()
            self.layers = clones(layer, N)
            self.norm = LayerNorm(layer.size)

        def forward(self, x, mask):
            """
            The inputs of forward are the same as for an encoder layer:
            x is the output of the previous layer, mask is the mask tensor.
            """
            # Loop over the cloned encoder layers; each iteration produces a new x,
            # so after the loop x has passed through all N encoder layers.
            # Finally apply the normalization layer self.norm and return the result.
            for layer in self.layers:
                x = layer(x, mask)
            return self.norm(x)


    if __name__ == "__main__":
        # size = 5
        # sm = subsequent_mask(size)
        # print("sm: \n", sm.data.numpy())
        # plt.figure(figsize=(5, 5))
        # plt.imshow(subsequent_mask(20)[0])
        # plt.waitforbuttonpress()

        # Word embedding
        dim = 512
        vocab = 1000
        emb = Embeddings(dim, vocab)
        x = torch.LongTensor([[100, 2, 321, 508], [321, 234, 456, 324]])
        embr = emb(x)
        print("embr.shape = ", embr.shape)

        # Positional encoding
        pe = PositionalEncoding(dim, 0.1)  # dimension is dim (512), dropout is 0.1
        pe_result = pe(embr)
        print("pe_result.shape = ", pe_result.shape)

        # Attention values
        # query = key = value = pe_result
        # attn, p_attn = attention(query, key, value)
        # print("attn.shape = ", attn.shape)
        # print("p_attn.shape = ", p_attn.shape)
        # print("attn: ", attn)
        # print("p_attn: ", p_attn)
        # # With mask
        # mask = torch.zeros(2, 4, 4)
        # attn, p_attn = attention(query, key, value, mask)
        # print("mask attn.shape = ", attn.shape)
        # print("mask p_attn.shape = ", p_attn.shape)
        # print("mask attn: ", attn)
        # print("mask p_attn: ", p_attn)

        # Multi-head attention test
        head = 8
        embedding_dim = 512
        dropout = 0.2
        query = key = value = pe_result
        # The mask is applied to the computed dot-product matrix, which is square
        mask = torch.zeros(8, 4, 4)
        mha = MultiHeadedAttention(head, embedding_dim, dropout)
        mha_result = mha(query, key, value, mask)
        print("mha_result.shape = ", mha_result.shape)
        print("mha_result: ", mha_result)

        # Feed-forward fully connected layer test
        x = mha_result
        d_model = 512
        d_ff = 128
        dropout = 0.2
        ff = PositionwiseFeedForward(d_model, d_ff, dropout)
        ff_result = ff(x)
        print("ff_result.shape = ", ff_result.shape)
        print("ff_result = ", ff_result)

        # Normalization layer test
        features = d_model = 512
        eps = 1e-6
        ln = LayerNorm(features, eps)
        ln_result = ln(ff_result)
        print("ln_result.shape = ", ln_result.shape)
        print("ln_result = ", ln_result)

        # Sublayer connection structure test
        size = 512
        dropout = 0.2
        head = 8
        d_model = 512
        x = pe_result
        mask = torch.zeros(8, 4, 4)
        self_attn = MultiHeadedAttention(head, d_model, dropout)
        sublayer = lambda x: self_attn(x, x, x, mask)  # the sublayer function
        sc = SublayerConnection(size, dropout)
        sc_result = sc(x, sublayer)
        print("sc_result.shape = ", sc_result.shape)
        print("sc_result = ", sc_result)

        # Encoder layer test
        size = 512
        dropout = 0.2
        head = 8
        d_model = 512
        d_ff = 64
        x = pe_result
        mask = torch.zeros(8, 4, 4)
        self_attn = MultiHeadedAttention(head, d_model, dropout)
        ff = PositionwiseFeedForward(d_model, d_ff, dropout)
        el = EncoderLayer(size, self_attn, ff, dropout)
        el_result = el(x, mask)
        print("el_result.shape = ", el_result.shape)
        print("el_result = ", el_result)

        # Encoder test
        size = 512
        dropout = 0.2
        head = 8
        d_model = 512
        d_ff = 64
        c = copy.deepcopy
        x = pe_result
        self_attn = MultiHeadedAttention(head, d_model, dropout)
        ff = PositionwiseFeedForward(d_model, d_ff, dropout)
        # Encoder layers do not share parameters, so deep copies are needed
        layer = EncoderLayer(size, c(self_attn), c(ff), dropout)
        N = 8
        mask = torch.zeros(8, 4, 4)
        en = Encoder(layer, N)
        en_result = en(x, mask)
        print("en_result.shape : ", en_result.shape)
        print("en_result : ", en_result)
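One detail of the test script is worth a closer look. The masks are created with `torch.zeros`, and `attention` fills every position where `mask == 0` with `-1e9` before the softmax. The plain-Python sketch below mirrors that step on a single row of scores to show the consequence; `masked_softmax` and the score values are hypothetical and written only for this illustration.

```python
import math


def masked_softmax(scores, mask, fill=-1e9):
    """Softmax over one row of scores; positions where mask == 0 are set to `fill` first."""
    filled = [fill if m == 0 else s for s, m in zip(scores, mask)]
    top = max(filled)  # subtract the maximum for numerical stability
    exps = [math.exp(v - top) for v in filled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 0.5, -1.0, 3.0]
# All-zero mask, as in the tests: every score is replaced, so the weights become uniform
print(masked_softmax(scores, [0, 0, 0, 0]))
# Mask with ones in the first two positions: only those positions receive weight
print(masked_softmax(scores, [1, 1, 0, 0]))
```

With an all-zero mask the attention weights carry no information about the scores, so the tests above mainly check shapes. A mask with ones where attention is allowed, such as the output of `subsequent_mask`, would presumably be used in real training.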
