1. Introduction

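Earlier articles in this series installed Kubernetes clusters with kubeadm, but many companies build their clusters from binaries instead. This article explains how to build a complete high-availability cluster from the binary packages. Compared with kubeadm, a binary installation is considerably more tedious: you have to create and sign the certificates yourself, and every component has to be configured and installed step by step.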

2. Environment preparation

2.1 Machine plan

| IP address | Hostname | Specs | OS | Role | Installed software |
|---|---|---|---|---|---|
| 172.10.1.11 | master1 | 2C4G | CentOS 7.6 | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| 172.10.1.12 | master2 | 2C4G | CentOS 7.6 | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| 172.10.1.13 | master3 | 2C4G | CentOS 7.6 | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| 172.10.1.14 | node1 | 2C4G | CentOS 7.6 | worker | kubelet, kube-proxy |
| 172.10.1.15 | node2 | 2C4G | CentOS 7.6 | worker | kubelet, kube-proxy |
| 172.10.1.16 | node3 | 2C4G | CentOS 7.6 | worker | kubelet, kube-proxy |
| 172.10.0.20 | / | / | / | Load balancer VIP | / |

Note: the VIP here is provided by a cloud vendor's SLB; you can also implement it with haproxy + keepalived.

2.2 Software versions

| Software | Version |
|---|---|
| kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kube-proxy | v1.20.2 |
| etcd | v3.4.13 |
| calico | v3.14 |
| coredns | 1.7.0 |

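3. Cluster setup

3.1 Basic machine configuration

Perform the following on all six machines.

3.1.1 Set the hostname

Set the hostnames to master1, master2, master3, node1, node2, and node3 respectively (for example with hostnamectl set-hostname master1 on the first machine).

3.1.2 Configure the hosts file

Append the following entries to /etc/hosts on each machine: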

cat >> /etc/hosts << EOF

172.10.1.11 master1

172.10.1.12 master2

172.10.1.13 master3

172.10.1.14 node1

172.10.1.15 node2

172.10.1.16 node3

EOF
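Appending with `cat >>` adds the same entries again each time the step is repeated. A minimal sketch of an idempotent alternative follows, assuming a helper named `add_host_entry` (a hypothetical name, not part of the original procedure) that appends an entry only when no line already ends with that hostname:

```shell
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless a line already ends with NAME.
# Hypothetical helper, shown for illustration only.
add_host_entry() {
    grep -qE "[[:space:]]$3\$" "$1" 2>/dev/null || echo "$2 $3" >> "$1"
}

# Example (run as root on each machine):
#   add_host_entry /etc/hosts 172.10.1.11 master1
#   add_host_entry /etc/hosts 172.10.1.14 node1
```

Running the helper twice with the same arguments leaves the file unchanged the second time.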

3.1.3 Disable the firewall and SELinux

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

3.1.4 Disable swap

swapoff -a

To disable swap permanently, edit /etc/fstab and comment out the swap line.
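The fstab edit can also be scripted. The sketch below assumes a hypothetical helper `comment_out_swap` that prints a copy of an fstab-style file with every active swap entry commented out; it writes to standard output so the result can be reviewed before it replaces /etc/fstab.

```shell
# comment_out_swap FILE
# Prints FILE with every non-comment line that has a "swap" field prefixed by "#".
# Hypothetical helper, shown for illustration only.
comment_out_swap() {
    sed -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# Example (run as root):
#   comment_out_swap /etc/fstab > /tmp/fstab.new
#   diff /etc/fstab /tmp/fstab.new      # review the change
#   cp /tmp/fstab.new /etc/fstab
```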

3.1.5 Time synchronization

yum install -y chrony

systemctl start chronyd

systemctl enable chronyd

chronyc sources

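3.1.6 Set kernel parameters

The net.bridge.* keys only exist once the br_netfilter module is loaded, so load it first.

modprobe br_netfilter

cat > /etc/sysctl.d/k8s.conf << EOF
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sysctl --system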

3.1.7 Load the IPVS modules

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

lsmod | grep ip_vs

lsmod | grep nf_conntrack_ipv4

yum install -y ipvsadm

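3.2 Set up the working directory

Every machine needs certificate files, component configuration files, and component service unit files. All of them are generated on master1 and then distributed to the other machines. Perform the following on master1.

[root@master1 ~]# mkdir -p /data/work

Note: this directory is where the configuration and certificate files are generated; all file-generation steps below are run from it.

[root@master1 ~]# ssh-keygen -t rsa -b 2048

Distribute the public key to the other five machines so that master1 can log in to them without a password.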
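The key distribution step can be done with `ssh-copy-id`. The following is a sketch only: the function name `distribute_key` and the `SSH_COPY_ID` override variable are assumptions introduced for illustration, and it assumes the default key path produced by the `ssh-keygen` command above and root logins on the targets.

```shell
# Command used to install the key; overridable so the loop can be dry-run.
SSH_COPY_ID=${SSH_COPY_ID:-ssh-copy-id}

# distribute_key HOST...
# Installs root's public key on every listed host (prompts once per host for the password).
# Hypothetical helper, shown for illustration only.
distribute_key() {
    for host in "$@"; do
        "$SSH_COPY_ID" -i "${HOME:-/root}/.ssh/id_rsa.pub" "root@$host" || return 1
    done
}

# Example (run on master1):
#   distribute_key master2 master3 node1 node2 node3
```

Setting `SSH_COPY_ID=echo` before calling the function prints the commands instead of running them.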

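3.3 Build the etcd cluster

3.3.1 Create the etcd working directories

[root@master1 ~]# mkdir -p /etc/etcd # directory for configuration files

[root@master1 ~]# mkdir -p /etc/etcd/ssl # directory for certificate files

3.3.2 Create the etcd certificates

Download the tools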

[root@master1 work]# cd /data/work/

[root@master1 work]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@master1 work]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@master1 work]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

Install the tools

[root@master1 work]# chmod +x cfssl*

[root@master1 work]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@master1 work]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@master1 work]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

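Create the CA signing request file

[root@master1 work]# vim ca-csr.json

{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ],
  "ca": {
    "expiry": "87600h"
  }
}

Notes:

CN: Common Name. kube-apiserver extracts this field from a certificate and uses it as the user name of the request; browsers use it to check whether a website is legitimate.

O: Organization. kube-apiserver extracts this field from a certificate and uses it as the group the requesting user belongs to.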

Create the CA certificate

[root@master1 work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

Create the CA signing policy

[root@master1 work]# vim ca-config.json

{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "87600h"
      }
    }
  }
}

Create the etcd certificate signing request file

[root@master1 work]# vim etcd-csr.json

{
  "CN": "etcd",
  "hosts": [
    "127.0.0.1",
    "172.10.1.11",
    "172.10.1.12",
    "172.10.1.13"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ]
}

Generate the certificate

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

[root@master1 work]# ls etcd*.pem

etcd-key.pem etcd.pem

3.3.3 Deploy the etcd cluster

Download the etcd package

[root@master1 work]# wget https://github.com/etcd-io/etcd/releases/download/v3.4.13/etcd-v3.4.13-linux-amd64.tar.gz

[root@master1 work]# tar -xf etcd-v3.4.13-linux-amd64.tar.gz

[root@master1 work]# cp -p etcd-v3.4.13-linux-amd64/etcd* /usr/local/bin/

[root@master1 work]# rsync -vaz etcd-v3.4.13-linux-amd64/etcd* master2:/usr/local/bin/

[root@master1 work]# rsync -vaz etcd-v3.4.13-linux-amd64/etcd* master3:/usr/local/bin/

Create the configuration file

[root@master1 work]# vim etcd.conf

#[Member]
ETCD_NAME="etcd1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://172.10.1.11:2380"
ETCD_LISTEN_CLIENT_URLS="https://172.10.1.11:2379,http://127.0.0.1:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.10.1.11:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://172.10.1.11:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://172.10.1.11:2380,etcd2=https://172.10.1.12:2380,etcd3=https://172.10.1.13:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

Notes:

ETCD_NAME: node name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of all cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster

Create the service unit file

Option 1: start with a configuration file

[root@master1 work]# vim etcd.service

[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=-/etc/etcd/etcd.conf
WorkingDirectory=/var/lib/etcd/
ExecStart=/usr/local/bin/etcd \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-client-cert-auth \
  --client-cert-auth
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

Option 2: start without a configuration file

[root@master1 work]# vim etcd.service

[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
WorkingDirectory=/var/lib/etcd/
ExecStart=/usr/local/bin/etcd \
  --name=etcd1 \
  --data-dir=/var/lib/etcd/default.etcd \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-client-cert-auth \
  --client-cert-auth \
  --listen-peer-urls=https://172.10.1.11:2380 \
  --listen-client-urls=https://172.10.1.11:2379,http://127.0.0.1:2379 \
  --advertise-client-urls=https://172.10.1.11:2379 \
  --initial-advertise-peer-urls=https://172.10.1.11:2380 \
  --initial-cluster=etcd1=https://172.10.1.11:2380,etcd2=https://172.10.1.12:2380,etcd3=https://172.10.1.13:2380 \
  --initial-cluster-token=etcd-cluster \
  --initial-cluster-state=new
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

Note: this article uses the first option.

Sync the files to each node

[root@master1 work]# cp ca*.pem /etc/etcd/ssl/

[root@master1 work]# cp etcd*.pem /etc/etcd/ssl/

[root@master1 work]# cp etcd.conf /etc/etcd/

[root@master1 work]# cp etcd.service /usr/lib/systemd/system/

[root@master1 work]# for i in master2 master3;do rsync -vaz etcd.conf $i:/etc/etcd/;done

[root@master1 work]# for i in master2 master3;do rsync -vaz etcd*.pem ca*.pem $i:/etc/etcd/ssl/;done

[root@master1 work]# for i in master2 master3;do rsync -vaz etcd.service $i:/usr/lib/systemd/system/;done

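Note: on master2 and master3, change the etcd name and the IP addresses in the configuration file to match the node (leave ETCD_INITIAL_CLUSTER unchanged), and create the directory /var/lib/etcd/default.etcd.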
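Editing the file by hand on each node is easy to get wrong, because the member list in ETCD_INITIAL_CLUSTER must stay identical on all three nodes. A minimal sketch of a per-node rendering step, assuming a hypothetical helper `render_etcd_conf` and assuming the template was written for 172.10.1.11 as shown earlier:

```shell
# render_etcd_conf TEMPLATE NAME IP
# Prints TEMPLATE with ETCD_NAME set to NAME and the master1 address replaced by IP
# on every line except ETCD_INITIAL_CLUSTER, which must list all members unchanged.
# Hypothetical helper, shown for illustration only.
render_etcd_conf() {
    sed -e "s/^ETCD_NAME=.*/ETCD_NAME=\"$2\"/" \
        -e "/^ETCD_INITIAL_CLUSTER=/!s/172\.10\.1\.11/$3/g" "$1"
}

# Example (run on master1 from /data/work):
#   render_etcd_conf etcd.conf etcd2 172.10.1.12 | ssh master2 'cat > /etc/etcd/etcd.conf'
#   render_etcd_conf etcd.conf etcd3 172.10.1.13 | ssh master3 'cat > /etc/etcd/etcd.conf'
```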

Start the etcd cluster

[root@master1 work]# mkdir -p /var/lib/etcd/default.etcd

[root@master1 work]# systemctl daemon-reload

[root@master1 work]# systemctl enable etcd.service

[root@master1 work]# systemctl start etcd.service

[root@master1 work]# systemctl status etcd

Note: the first start may hang for a while, because each node waits for the other members to come up.

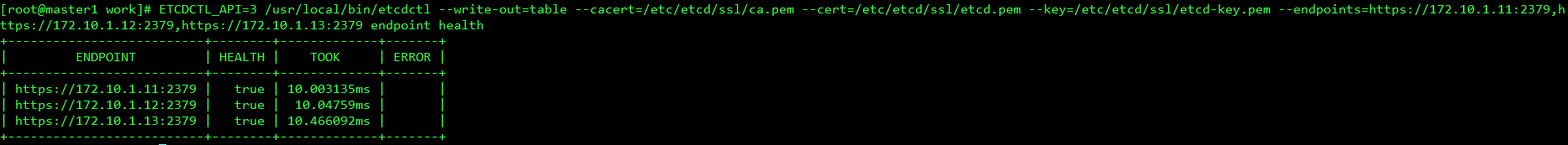

Check the cluster status

[root@master1 work]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://172.10.1.11:2379,https://172.10.1.12:2379,https://172.10.1.13:2379 endpoint health

3.4 Deploy the Kubernetes components

3.4.1 Download the package

[root@master1 work]# wget https://dl.k8s.io/v1.20.1/kubernetes-server-linux-amd64.tar.gz

[root@master1 work]# tar -xf kubernetes-server-linux-amd64.tar.gz

[root@master1 work]# cd kubernetes/server/bin/

[root@master1 bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

[root@master1 bin]# rsync -vaz kube-apiserver kube-controller-manager kube-scheduler kubectl master2:/usr/local/bin/

[root@master1 bin]# rsync -vaz kube-apiserver kube-controller-manager kube-scheduler kubectl master3:/usr/local/bin/

[root@master1 bin]# for i in node1 node2 node3;do rsync -vaz kubelet kube-proxy $i:/usr/local/bin/;done

[root@master1 bin]# cd /data/work/

3.4.2 Create the working directories

[root@master1 work]# mkdir -p /etc/kubernetes/ # directory for Kubernetes component configuration files

[root@master1 work]# mkdir -p /etc/kubernetes/ssl # directory for Kubernetes component certificates

[root@master1 work]# mkdir /var/log/kubernetes # directory for Kubernetes component logs

3.4.3 Deploy kube-apiserver

Create the certificate signing request file

[root@master1 work]# vim kube-apiserver-csr.json

{
  "CN": "kubernetes",
  "hosts": [
    "127.0.0.1",
    "172.10.1.11",
    "172.10.1.12",
    "172.10.1.13",
    "172.10.1.14",
    "172.10.1.15",
    "172.10.1.16",
    "172.10.0.20",
    "10.255.0.1",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ]
}

Notes:

If the hosts field is not empty, it must list every IP address or domain name that is allowed to use the certificate.

Because this certificate is used by the Kubernetes master cluster, it has to include the IPs of all master nodes and the load balancer VIP, as well as the first IP of the service network (the first address of the range given to kube-apiserver with --service-cluster-ip-range, which is 10.255.0.1 in this article).
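The first service IP can be derived from the service range rather than typed by hand. This is a minimal sketch with a hypothetical helper `first_service_ip`; it assumes the CIDR is written with its network base address and that the last octet of that address is below 255, which holds for the 10.255.0.0/16 range used here.

```shell
# first_service_ip CIDR
# Prints the first usable address of CIDR by adding 1 to the last octet of the base address.
# Hypothetical helper, shown for illustration only.
first_service_ip() {
    net=${1%/*}                       # strip the prefix length
    IFS=. read -r a b c d <<EOF
$net
EOF
    echo "$a.$b.$c.$((d + 1))"
}

# Example:
#   first_service_ip 10.255.0.0/16
```

For the range used in this article the helper prints 10.255.0.1, the address listed in the hosts field above.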

Generate the certificate and the token file

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

[root@master1 work]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

Create the configuration file

The --service-account-signing-key-file and --service-account-issuer flags are mandatory from v1.20 onward.

[root@master1 work]# vim kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \
  --anonymous-auth=false \
  --bind-address=172.10.1.11 \
  --secure-port=6443 \
  --advertise-address=172.10.1.11 \
  --insecure-port=0 \
  --authorization-mode=Node,RBAC \
  --runtime-config=api/all=true \
  --enable-bootstrap-token-auth \
  --service-cluster-ip-range=10.255.0.0/16 \
  --token-auth-file=/etc/kubernetes/token.csv \
  --service-node-port-range=30000-50000 \
  --tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --client-ca-file=/etc/kubernetes/ssl/ca.pem \
  --kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \
  --kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \
  --service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --service-account-issuer=https://kubernetes.default.svc.cluster.local \
  --etcd-cafile=/etc/etcd/ssl/ca.pem \
  --etcd-certfile=/etc/etcd/ssl/etcd.pem \
  --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
  --etcd-servers=https://172.10.1.11:2379,https://172.10.1.12:2379,https://172.10.1.13:2379 \
  --enable-swagger-ui=true \
  --allow-privileged=true \
  --apiserver-count=3 \
  --audit-log-maxage=30 \
  --audit-log-maxbackup=3 \
  --audit-log-maxsize=100 \
  --audit-log-path=/var/log/kube-apiserver-audit.log \
  --event-ttl=1h \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=4"

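Notes:

--logtostderr: log to standard error instead of files (set to false here so that logs go to --log-dir)

--v: log verbosity level

--log-dir: log directory

--etcd-servers: etcd cluster endpoints

--bind-address: listen address

--secure-port: HTTPS port

--advertise-address: address advertised to the rest of the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

--token-auth-file: bootstrap token file

--service-node-port-range: default port range allocated to NodePort Services

--kubelet-client-xxx: client certificate that apiserver uses to access kubelet

--tls-xxx-file: apiserver HTTPS certificate

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit log settings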

Create the service unit file

[root@master1 work]# vim kube-apiserver.service

[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=etcd.service
Wants=etcd.service

[Service]
EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf
ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS
Restart=on-failure
RestartSec=5
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

Sync the files to each node

[root@master1 work]# cp ca*.pem /etc/kubernetes/ssl/

[root@master1 work]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@master1 work]# cp token.csv /etc/kubernetes/

[root@master1 work]# cp kube-apiserver.conf /etc/kubernetes/

[root@master1 work]# cp kube-apiserver.service /usr/lib/systemd/system/

[root@master1 work]# rsync -vaz token.csv master2:/etc/kubernetes/

[root@master1 work]# rsync -vaz token.csv master3:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-apiserver*.pem master2:/etc/kubernetes/ssl/ # note: rsync only creates the last directory level; ssl is created automatically if missing, but its parent directory kubernetes must already exist

[root@master1 work]# rsync -vaz kube-apiserver*.pem master3:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz ca*.pem master2:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz ca*.pem master3:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz kube-apiserver.conf master2:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-apiserver.conf master3:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-apiserver.service master2:/usr/lib/systemd/system/

[root@master1 work]# rsync -vaz kube-apiserver.service master3:/usr/lib/systemd/system/

Note: on master2 and master3, change the bind and advertise IP addresses in the configuration file to the node's own IP.
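Only --bind-address and --advertise-address differ between masters; the etcd endpoint list must stay the same. A minimal sketch of rendering the per-master file, assuming a hypothetical helper `render_apiserver_conf`:

```shell
# render_apiserver_conf TEMPLATE IP
# Prints TEMPLATE with --bind-address and --advertise-address set to IP.
# Other addresses, such as the --etcd-servers list, are left untouched.
# Hypothetical helper, shown for illustration only.
render_apiserver_conf() {
    sed -E "s/--(bind|advertise)-address=[0-9.]+/--\1-address=$2/g" "$1"
}

# Example (run on master1 from /data/work):
#   render_apiserver_conf kube-apiserver.conf 172.10.1.12 | ssh master2 'cat > /etc/kubernetes/kube-apiserver.conf'
#   render_apiserver_conf kube-apiserver.conf 172.10.1.13 | ssh master3 'cat > /etc/kubernetes/kube-apiserver.conf'
```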

Start the service

[root@master1 work]# systemctl daemon-reload

[root@master1 work]# systemctl enable kube-apiserver

[root@master1 work]# systemctl start kube-apiserver

[root@master1 work]# systemctl status kube-apiserver

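Test

[root@master1 work]# curl --insecure https://172.10.1.11:6443/

Any response means the server started correctly. Because anonymous access is disabled, an authentication error is the expected reply.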

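3.4.4 Deploy kubectl

Create the certificate signing request file

[root@master1 work]# vim admin-csr.json

{
  "CN": "admin",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "system:masters",
      "OU": "system"
    }
  ]
}

Explanation:

kube-apiserver uses RBAC to authorize requests from clients such as kubelet, kube-proxy, and Pods.

kube-apiserver predefines a number of bindings used by RBAC. For example, the ClusterRoleBinding cluster-admin binds the Group system:masters to the ClusterRole cluster-admin, which grants permission to call every kube-apiserver API.

O sets the certificate's Group to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the group is the pre-authorized system:masters, the client is granted access to all APIs.

Notes:

This admin certificate is used later to generate the administrator's kubeconfig file. RBAC is the generally recommended way to control roles and permissions in Kubernetes; Kubernetes uses the certificate's CN field as the User and the O field as the Group.

"O": "system:masters" must be exactly system:masters; otherwise the later kubectl create clusterrolebinding command fails.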

Generate the certificate

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@master1 work]# cp admin*.pem /etc/kubernetes/ssl/

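Create the kubeconfig file

The kubeconfig file is kubectl's configuration file. It contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and the client's own certificate.

Set the cluster parameters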

[root@master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://172.10.0.20:6443 --kubeconfig=kube.config

Set the client credentials

[root@master1 work]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

Set the context

[root@master1 work]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

Set the default context

[root@master1 work]# kubectl config use-context kubernetes --kubeconfig=kube.config

[root@master1 work]# mkdir ~/.kube

[root@master1 work]# cp kube.config ~/.kube/config

Grant the kubernetes certificate user access to the kubelet API

[root@master1 work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

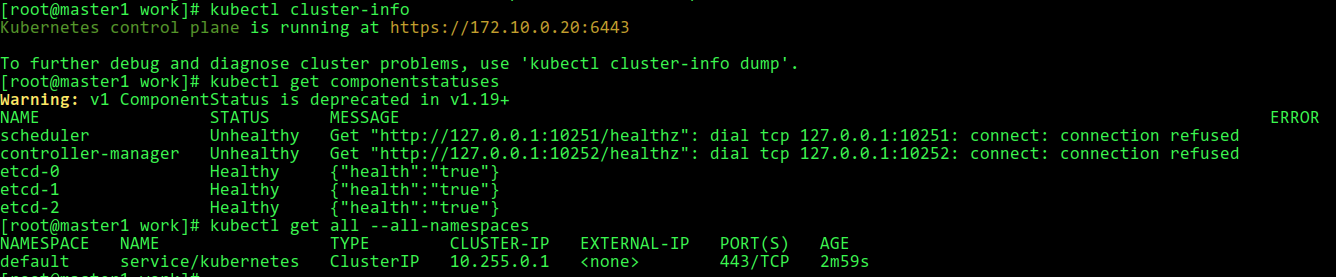

Check the status of the cluster components

After the steps above, kubectl can communicate with kube-apiserver.

[root@master1 work]# kubectl cluster-info

[root@master1 work]# kubectl get componentstatuses

[root@master1 work]# kubectl get all --all-namespaces

Sync the kubectl configuration file to the other nodes

[root@master1 work]# rsync -vaz /root/.kube/config master2:/root/.kube/

[root@master1 work]# rsync -vaz /root/.kube/config master3:/root/.kube/

Configure kubectl subcommand completion

[root@master1 work]# yum install -y bash-completion

[root@master1 work]# source /usr/share/bash-completion/bash_completion

[root@master1 work]# source <(kubectl completion bash)

[root@master1 work]# kubectl completion bash > ~/.kube/completion.bash.inc

[root@master1 work]# source '/root/.kube/completion.bash.inc'

[root@master1 work]# source $HOME/.bash_profile

3.4.5 Deploy kube-controller-manager

Create the certificate signing request file

[root@master1 work]# vim kube-controller-manager-csr.json

{
  "CN": "system:kube-controller-manager",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "hosts": [
    "127.0.0.1",
    "172.10.1.11",
    "172.10.1.12",
    "172.10.1.13"
  ],
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "system:kube-controller-manager",
      "OU": "system"
    }
  ]
}

Notes:

The hosts list contains the IPs of all kube-controller-manager nodes.

CN and O are both system:kube-controller-manager. The built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to work.

Generate the certificate

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

[root@master1 work]# ls kube-controller-manager*.pem

Create the kubeconfig for kube-controller-manager

Set the cluster parameters

[root@master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://172.10.0.20:6443 --kubeconfig=kube-controller-manager.kubeconfig

Set the client credentials

[root@master1 work]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

Set the context

[root@master1 work]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Set the default context

[root@master1 work]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Create the configuration file

[root@master1 work]# vim kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS="--port=0 \
  --secure-port=10252 \
  --bind-address=127.0.0.1 \
  --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \
  --service-cluster-ip-range=10.255.0.0/16 \
  --cluster-name=kubernetes \
  --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \
  --cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --allocate-node-cidrs=true \
  --cluster-cidr=10.0.0.0/16 \
  --experimental-cluster-signing-duration=87600h \
  --root-ca-file=/etc/kubernetes/ssl/ca.pem \
  --service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --leader-elect=true \
  --feature-gates=RotateKubeletServerCertificate=true \
  --controllers=*,bootstrapsigner,tokencleaner \
  --horizontal-pod-autoscaler-use-rest-clients=true \
  --horizontal-pod-autoscaler-sync-period=10s \
  --tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \
  --use-service-account-credentials=true \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2"

Create the service unit file

[root@master1 work]# vim kube-controller-manager.service

[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf
ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

Sync the files to each node

[root@master1 work]# cp kube-controller-manager*.pem /etc/kubernetes/ssl/

[root@master1 work]# cp kube-controller-manager.kubeconfig /etc/kubernetes/

[root@master1 work]# cp kube-controller-manager.conf /etc/kubernetes/

[root@master1 work]# cp kube-controller-manager.service /usr/lib/systemd/system/

[root@master1 work]# rsync -vaz kube-controller-manager*.pem master2:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz kube-controller-manager*.pem master3:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz kube-controller-manager.kubeconfig kube-controller-manager.conf master2:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-controller-manager.kubeconfig kube-controller-manager.conf master3:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-controller-manager.service master2:/usr/lib/systemd/system/

[root@master1 work]# rsync -vaz kube-controller-manager.service master3:/usr/lib/systemd/system/

Start the service

[root@master1 work]# systemctl daemon-reload

[root@master1 work]# systemctl enable kube-controller-manager

[root@master1 work]# systemctl start kube-controller-manager

[root@master1 work]# systemctl status kube-controller-manager

3.4.6 Deploy kube-scheduler

Create the certificate signing request file

[root@master1 work]# vim kube-scheduler-csr.json

{
  "CN": "system:kube-scheduler",
  "hosts": [
    "127.0.0.1",
    "172.10.1.11",
    "172.10.1.12",
    "172.10.1.13"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "system:kube-scheduler",
      "OU": "system"
    }
  ]
}

Notes:

The hosts list contains the IPs of all kube-scheduler nodes.

CN and O are both system:kube-scheduler. The built-in ClusterRoleBinding system:kube-scheduler grants kube-scheduler the permissions it needs to work.

Generate the certificate

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

[root@master1 work]# ls kube-scheduler*.pem

Create the kubeconfig for kube-scheduler

Set the cluster parameters

[root@master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://172.10.0.20:6443 --kubeconfig=kube-scheduler.kubeconfig

Set the client credentials

[root@master1 work]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

Set the context

[root@master1 work]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Set the default context

[root@master1 work]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Create the configuration file

[root@master1 work]# vim kube-scheduler.conf

KUBE_SCHEDULER_OPTS="--address=127.0.0.1 \
  --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \
  --leader-elect=true \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2"

Create the service unit file

[root@master1 work]# vim kube-scheduler.service

[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf
ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

Sync the files to each node

[root@master1 work]# cp kube-scheduler*.pem /etc/kubernetes/ssl/

[root@master1 work]# cp kube-scheduler.kubeconfig /etc/kubernetes/

[root@master1 work]# cp kube-scheduler.conf /etc/kubernetes/

[root@master1 work]# cp kube-scheduler.service /usr/lib/systemd/system/

[root@master1 work]# rsync -vaz kube-scheduler*.pem master2:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz kube-scheduler*.pem master3:/etc/kubernetes/ssl/

[root@master1 work]# rsync -vaz kube-scheduler.kubeconfig kube-scheduler.conf master2:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-scheduler.kubeconfig kube-scheduler.conf master3:/etc/kubernetes/

[root@master1 work]# rsync -vaz kube-scheduler.service master2:/usr/lib/systemd/system/

[root@master1 work]# rsync -vaz kube-scheduler.service master3:/usr/lib/systemd/system/

Start the service

[root@master1 work]# systemctl daemon-reload

[root@master1 work]# systemctl enable kube-scheduler

[root@master1 work]# systemctl start kube-scheduler

[root@master1 work]# systemctl status kube-scheduler

3.4.7 Deploy docker

Install on the three worker nodes.

Install docker

[root@node1 ~]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

[root@node1 ~]# yum install -y docker-ce

[root@node1 ~]# systemctl enable docker

[root@node1 ~]# systemctl start docker

[root@node1 ~]# docker --version

Configure the docker registry mirrors and cgroup driver

[root@node1 ~]# cat > /etc/docker/daemon.json << EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": [
    "https://1nj0zren.mirror.aliyuncs.com",
    "https://kfwkfulq.mirror.aliyuncs.com",
    "https://2lqq34jg.mirror.aliyuncs.com",
    "https://pee6w651.mirror.aliyuncs.com",
    "http://hub-mirror.c.163.com",
    "https://docker.mirrors.ustc.edu.cn",
    "http://f1361db2.m.daocloud.io",
    "https://registry.docker-cn.com"
  ]
}
EOF

[root@node1 ~]# systemctl restart docker

[root@node1 ~]# docker info | grep "Cgroup Driver"

Pull the required images

[root@node1 ~]# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

[root@node1 ~]# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2 k8s.gcr.io/pause:3.2

[root@node1 ~]# docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

[root@node1 ~]# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

[root@node1 ~]# docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0 k8s.gcr.io/coredns:1.7.0

[root@node1 ~]# docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.7.0

3.4.8 Deploy kubelet

Perform the following on master1.

Create kubelet-bootstrap.kubeconfig

[root@master1 work]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

Set the cluster parameters

[root@master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://172.10.0.20:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

Set the client credentials

[root@master1 work]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

Set the context

[root@master1 work]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

Set the default context

[root@master1 work]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

Create the role binding

[root@master1 work]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

Create the configuration file

The cgroupDriver value must match docker's cgroup driver, otherwise the nodes cannot join the cluster. Docker was configured with systemd in section 3.4.7, so systemd is used here; use cgroupfs only if docker uses cgroupfs.

[root@master1 work]# vim kubelet.json

{
  "kind": "KubeletConfiguration",
  "apiVersion": "kubelet.config.k8s.io/v1beta1",
  "authentication": {
    "x509": {
      "clientCAFile": "/etc/kubernetes/ssl/ca.pem"
    },
    "webhook": {
      "enabled": true,
      "cacheTTL": "2m0s"
    },
    "anonymous": {
      "enabled": false
    }
  },
  "authorization": {
    "mode": "Webhook",
    "webhook": {
      "cacheAuthorizedTTL": "5m0s",
      "cacheUnauthorizedTTL": "30s"
    }
  },
  "address": "172.10.1.14",
  "port": 10250,
  "readOnlyPort": 10255,
  "cgroupDriver": "systemd",
  "hairpinMode": "promiscuous-bridge",
  "serializeImagePulls": false,
  "featureGates": {
    "RotateKubeletClientCertificate": true,
    "RotateKubeletServerCertificate": true
  },
  "clusterDomain": "cluster.local.",
  "clusterDNS": ["10.255.0.2"]
}

Create the service unit file

[root@master1 work]# vim kubelet.service

[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
After=docker.service
Requires=docker.service

[Service]
WorkingDirectory=/var/lib/kubelet
ExecStart=/usr/local/bin/kubelet \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --cert-dir=/etc/kubernetes/ssl \
  --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
  --config=/etc/kubernetes/kubelet.json \
  --network-plugin=cni \
  --pod-infra-container-image=k8s.gcr.io/pause:3.2 \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

Notes:

--hostname-override: display name of the node, unique within the cluster (not set in the unit above, so the machine's hostname is used)

--network-plugin: enable CNI

--kubeconfig: path that does not exist yet; the file is generated automatically and later used to connect to apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from apiserver

--config: configuration parameter file

--cert-dir: directory where kubelet certificates are generated

--pod-infra-container-image: image of the container that manages the Pod network

Sync the files to each node

[root@master1 work]# cp kubelet-bootstrap.kubeconfig /etc/kubernetes/

[root@master1 work]# cp kubelet.json /etc/kubernetes/

[root@master1 work]# cp kubelet.service /usr/lib/systemd/system/

If kubelet is not installed on the master nodes, the steps above can be skipped.

[root@master1 work]# for i in node1 node2 node3;do rsync -vaz kubelet-bootstrap.kubeconfig kubelet.json $i:/etc/kubernetes/;done

[root@master1 work]# for i in node1 node2 node3;do rsync -vaz ca.pem $i:/etc/kubernetes/ssl/;done

[root@master1 work]# for i in node1 node2 node3;do rsync -vaz kubelet.service $i:/usr/lib/systemd/system/;done

Note: in kubelet.json, change address to the IP address of each node.

Start the service

Run the following on each worker node.

[root@node1 ~]# mkdir /var/lib/kubelet

[root@node1 ~]# mkdir /var/log/kubernetes

[root@node1 ~]# systemctl daemon-reload

[root@node1 ~]# systemctl enable kubelet

[root@node1 ~]# systemctl start kubelet

[root@node1 ~]# systemctl status kubelet

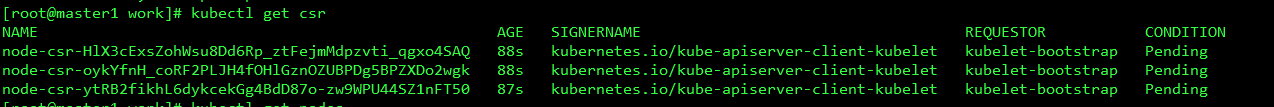

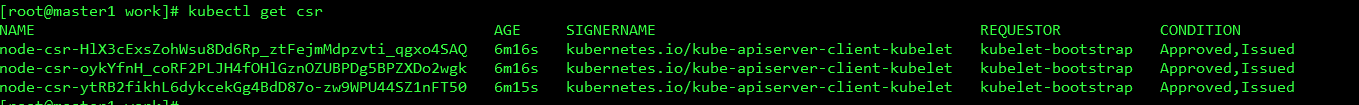

Once kubelet has started successfully, go to a master and approve the bootstrap requests. The following command shows that each of the three worker nodes has sent a CSR:

[root@master1 work]# kubectl get csr

[root@master1 work]# kubectl certificate approve node-csr-HlX3cExsZohWsu8Dd6Rp_ztFejmMdpzvti_qgxo4SAQ

[root@master1 work]# kubectl certificate approve node-csr-oykYfnH_coRF2PLJH4fOHlGznOZUBPDg5BPZXDo2wgk

[root@master1 work]# kubectl certificate approve node-csr-ytRB2fikhL6dykcekGg4BdD87o-zw9WPU44SZ1nFT50

[root@master1 work]# kubectl get csr

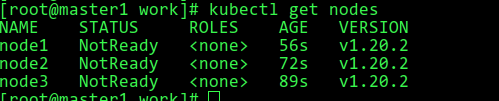

[root@master1 work]# kubectl get nodes
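The CSR names differ on every installation, so copying them by hand does not scale. The sketch below assumes a hypothetical filter `pending_csrs` that reads the output of `kubectl get csr --no-headers` and prints only the names whose last column is Pending. Approving everything that is pending skips manual review, so this is only reasonable on a freshly built cluster where every request is expected.

```shell
# pending_csrs
# Reads `kubectl get csr --no-headers` output on stdin and prints the names
# of requests whose CONDITION (last column) is exactly "Pending".
# Hypothetical helper, shown for illustration only.
pending_csrs() {
    awk '$NF == "Pending" { print $1 }'
}

# Example (run on master1; xargs -r skips the call when nothing is pending):
#   kubectl get csr --no-headers | pending_csrs | xargs -r kubectl certificate approve
```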

3.4.9 Deploy kube-proxy

Create the certificate signing request file

[root@master1 work]# vim kube-proxy-csr.json

{
  "CN": "system:kube-proxy",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Hubei",
      "L": "Wuhan",
      "O": "k8s",
      "OU": "system"
    }
  ]
}

Generate the certificate

[root@master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@master1 work]# ls kube-proxy*.pem

Create the kubeconfig file

[root@master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://172.10.0.20:6443 --kubeconfig=kube-proxy.kubeconfig

[root@master1 work]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

[root@master1 work]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

[root@master1 work]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Create the kube-proxy configuration file

[root@master1 work]# vim kube-proxy.yaml

apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 172.10.1.14
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 192.168.0.0/16 # This CIDR must match the network component's pod CIDR; otherwise deploying the network component will report errors
healthzBindAddress: 172.10.1.14:10256
kind: KubeProxyConfiguration
metricsBindAddress: 172.10.1.14:10249
mode: "ipvs"

Create the systemd unit file

[root@master1 work]# vim kube-proxy.service

[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --alsologtostderr=true \
  --logtostderr=false \
  --log-dir=/var/log/kubernetes \
  --v=2
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

Sync the files to each node

[root@master1 work]# cp kube-proxy*.pem /etc/kubernetes/ssl/

[root@master1 work]# cp kube-proxy.kubeconfig kube-proxy.yaml /etc/kubernetes/

[root@master1 work]# cp kube-proxy.service /usr/lib/systemd/system/

If kube-proxy is not installed on the master nodes, the three cp commands above can be skipped.

[root@master1 work]# for i in node1 node2 node3;do rsync -vaz kube-proxy.kubeconfig kube-proxy.yaml $i:/etc/kubernetes/;done

[root@master1 work]# for i in node1 node2 node3;do rsync -vaz kube-proxy.service $i:/usr/lib/systemd/system/;done

Note: in kube-proxy.yaml, change the addresses (bindAddress, healthzBindAddress, metricsBindAddress) to the actual IP of each node.
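Since all three address fields carry the same node IP, a per-node copy of the file can be produced by a single substitution. This is a minimal sketch, assuming the template still contains 172.10.1.14 (node1's IP) in every address field; `render_kube_proxy_config` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a copy of kube-proxy.yaml with the template IP
# (172.10.1.14 by default) replaced by the given node IP.
render_kube_proxy_config() {
  local template="$1" node_ip="$2" template_ip="${3:-172.10.1.14}"
  # Escape the dots so the template IP is matched literally by sed.
  local pattern="${template_ip//./\\.}"
  sed "s/${pattern}/${node_ip}/g" "$template"
}
```

For example, `render_kube_proxy_config kube-proxy.yaml 172.10.1.15 > kube-proxy-node2.yaml` writes a variant for node2, which can then be copied to that node as /etc/kubernetes/kube-proxy.yaml.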

Start the service

[root@node1 ~]# mkdir -p /var/lib/kube-proxy

[root@node1 ~]# systemctl daemon-reload

[root@node1 ~]# systemctl enable kube-proxy

[root@node1 ~]# systemctl restart kube-proxy

[root@node1 ~]# systemctl status kube-proxy
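A running service does not by itself prove that kube-proxy is using IPVS. kube-proxy reports its active mode at the `/proxyMode` path of its metrics address, so a quick check is possible with curl. The sketch below assumes metricsBindAddress is `<node IP>:10249` as configured above; the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Query kube-proxy's metrics endpoint for the active proxy mode.
# Assumes metricsBindAddress is <node_ip>:10249 as in kube-proxy.yaml.
kube_proxy_mode() {
  local node_ip="$1"
  curl -s --max-time 5 "http://${node_ip}:10249/proxyMode"
}

# Return success only when the node reports the expected mode (default: ipvs).
check_kube_proxy_mode() {
  local node_ip="$1" expected="${2:-ipvs}" actual
  actual="$(kube_proxy_mode "$node_ip")"
  [ "$actual" = "$expected" ]
}
```

For example, `check_kube_proxy_mode 172.10.1.14 && echo "node1 uses ipvs"`.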

3.4.10 Configure the network component

[root@master1 work]# wget https://docs.projectcalico.org/v3.14/manifests/calico.yaml

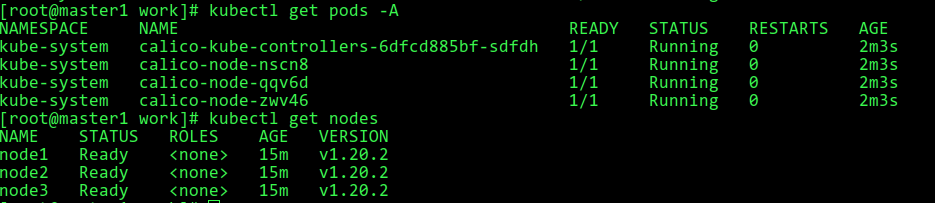
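Because clusterCIDR in kube-proxy.yaml has to match the Calico pool, it can be worth comparing the two values before applying the manifest. The sketch below assumes calico.yaml carries a `CALICO_IPV4POOL_CIDR` entry whose value sits on the following line (possibly commented out); the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Print the clusterCIDR value from a kube-proxy configuration file.
kube_proxy_cluster_cidr() {
  awk '$1 == "clusterCIDR:" { print $2; exit }' "$1"
}

# Print the value on the line after CALICO_IPV4POOL_CIDR in calico.yaml,
# whether or not the two lines are commented out.
calico_pool_cidr() {
  awk '/CALICO_IPV4POOL_CIDR/ {
         getline                   # move to the "value:" line
         gsub(/[# \t"]/, "")       # drop comment marks, blanks and quotes
         sub(/^value:/, "")
         print
         exit
       }' "$1"
}

# Succeed only when both files name the same CIDR.
cidrs_match() {
  [ "$(kube_proxy_cluster_cidr "$1")" = "$(calico_pool_cidr "$2")" ]
}
```

For example, `cidrs_match kube-proxy.yaml calico.yaml || echo "CIDR mismatch"` in the work directory.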

[root@master1 work]# kubectl apply -f calico.yaml

Check the nodes again at this point; they should all be in the Ready state.

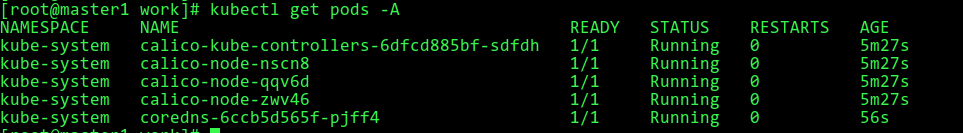

[root@master1 work]# kubectl get pods -A

[root@master1 work]# kubectl get nodes

3.4.11 Deploy CoreDNS

Download the CoreDNS YAML template: https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed

Edit the YAML file so that it contains the following values:

kubernetes cluster.local in-addr.arpa ip6.arpa

forward . /etc/resolv.conf

clusterIP: 10.255.0.2 (the clusterDNS value in the kubelet configuration file)
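The same edits can be applied with sed rather than by hand. The sketch below assumes the template uses the placeholders `CLUSTER_DNS_IP`, `CLUSTER_DOMAIN`, `REVERSE_CIDRS`, `UPSTREAMNAMESERVER` and `STUBDOMAINS`; check these names against the file you actually downloaded, since the upstream template may change. `render_coredns` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Fill the placeholders of coredns.yaml.sed with this cluster's values.
# Placeholder names are assumptions about the upstream template; verify before use.
render_coredns() {
  local template="$1"
  local dns_ip="${2:-10.255.0.2}"      # must equal clusterDNS in the kubelet config
  local domain="${3:-cluster.local}"
  sed -e "s/CLUSTER_DNS_IP/${dns_ip}/g" \
      -e "s/CLUSTER_DOMAIN/${domain}/g" \
      -e "s?REVERSE_CIDRS?in-addr.arpa ip6.arpa?g" \
      -e "s?UPSTREAMNAMESERVER?/etc/resolv.conf?g" \
      -e "s/STUBDOMAINS//g" \
      "$template"
}
```

For example, `render_coredns coredns.yaml.sed > coredns.yaml` would produce a manifest with the values listed above.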

[root@master1 work]# cat coredns.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
          lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        forward . /etc/resolv.conf {
          max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  # replicas: not specified here:
  # 1. Default is 1.
  # 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Exists"
      nodeSelector:
        kubernetes.io/os: linux
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: k8s-app
                  operator: In
                  values: ["kube-dns"]
              topologyKey: kubernetes.io/hostname
      containers:
      - name: coredns
        image: coredns/coredns:1.8.0
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
          readOnly: true
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
        readinessProbe:
          httpGet:
            path: /ready
            port: 8181
            scheme: HTTP
      dnsPolicy: Default
      volumes:
      - name: config-volume
        configMap:
          name: coredns
          items:
          - key: Corefile
            path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.255.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP
  - name: metrics
    port: 9153
    protocol: TCP

[root@master1 work]# kubectl apply -f coredns.yaml

3.5 Verification

3.5.1 Deploy nginx

[root@master1 ~]# vim nginx.yaml

---
apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx-controller
spec:
  replicas: 2
  selector:
    name: nginx
  template:
    metadata:
      labels:
        name: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.19.6
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-service-nodeport
spec:
  ports:
  - port: 80
    targetPort: 80
    nodePort: 30001
    protocol: TCP
  type: NodePort
  selector:
    name: nginx

[root@master1 ~]# kubectl apply -f nginx.yaml

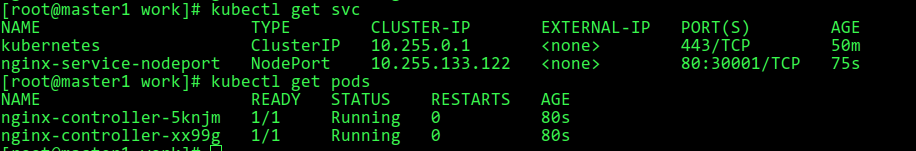

[root@master1 ~]# kubectl get svc

[root@master1 ~]# kubectl get pods

3.5.2 Verify

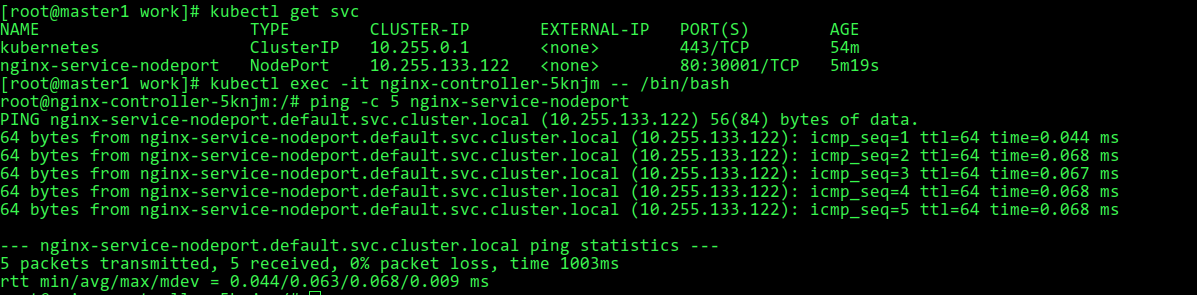

Verify the nginx service with ping

Access nginx
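Access through the NodePort can also be checked from the command line. The sketch below assumes the Service above is exposed on port 30001 of every worker node and that nginx answers `/` with HTTP 200; `check_nodeport` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Return success when the NodePort answers with HTTP 200.
# The port defaults to 30001, the nodePort set in nginx.yaml.
check_nodeport() {
  local node_ip="$1" port="${2:-30001}" code
  code="$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' "http://${node_ip}:${port}/")"
  [ "$code" = "200" ]
}
```

For example, `for ip in 172.10.1.14 172.10.1.15 172.10.1.16; do check_nodeport "$ip" && echo "$ip ok"; done` checks all three workers in turn.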