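Batch normalization is a widely used technique for speeding up the training of deep neural networks. Using the Fashion-MNIST dataset, this article shows how to implement batch normalization from scratch and compares the result with the concise implementation built on PyTorch's own API.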

1. Implementing batch normalization from scratch

1.1 The batch normalization function

    import torch
    from torch import nn
    from d2l import torch as d2l


    def batch_norm(X, gamma, beta, moving_mean, moving_var, eps, momentum):
        if not torch.is_grad_enabled():
            # Prediction mode (gradients disabled): normalize with the moving averages
            X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)
        else:
            assert len(X.shape) in (2, 4)
            if len(X.shape) == 2:
                # Fully connected layer: mean and variance per feature
                mean = X.mean(dim=0)
                var = ((X - mean) ** 2).mean(dim=0)
            else:
                # Convolutional layer: mean and variance per channel,
                # keeping the dimensions so that broadcasting works
                mean = X.mean(dim=(0, 2, 3), keepdim=True)
                var = ((X - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
            # Training mode: normalize with the statistics of the current batch
            X_hat = (X - mean) / torch.sqrt(var + eps)
            # Update the moving averages
            moving_mean = momentum * moving_mean + (1.0 - momentum) * mean
            moving_var = momentum * moving_var + (1.0 - momentum) * var
        Y = gamma * X_hat + beta  # scale and shift
        return Y, moving_mean.data, moving_var.data

1.2 The batch normalization layer class

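    class BatchNorm(nn.Module):
        # num_features: number of outputs of a fully connected layer,
        #               or number of output channels of a convolutional layer
        # num_dims: 2 for a fully connected layer, 4 for a convolutional layer
        def __init__(self, num_features, num_dims):
            super().__init__()
            if num_dims == 2:
                shape = (1, num_features)
            else:
                shape = (1, num_features, 1, 1)
            # Learnable scale and shift, initialized to 1 and 0
            self.gamma = nn.Parameter(torch.ones(shape))
            self.beta = nn.Parameter(torch.zeros(shape))
            # Non-learnable moving statistics, initialized to 0 and 1
            self.moving_mean = torch.zeros(shape)
            self.moving_var = torch.ones(shape)

        def forward(self, X):
            # The moving statistics are plain tensors, so they have to be
            # moved to the device of X by hand
            if self.moving_mean.device != X.device:
                self.moving_mean = self.moving_mean.to(X.device)
                self.moving_var = self.moving_var.to(X.device)
            # Store the updated moving averages on the layer
            Y, self.moving_mean, self.moving_var = batch_norm(
                X, self.gamma, self.beta, self.moving_mean,
                self.moving_var, eps=1e-5, momentum=0.9)
            return Y

1.3 Building a network with batch normalization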

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5), BatchNorm(6, num_dims=4), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), BatchNorm(16, num_dims=4), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),
        nn.Linear(16*4*4, 120), BatchNorm(120, num_dims=2), nn.Sigmoid(),
        nn.Linear(120, 84), BatchNorm(84, num_dims=2), nn.Sigmoid(),
        nn.Linear(84, 10))

1.4 Training and results

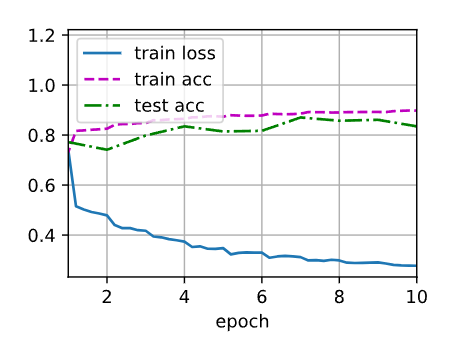

lr, num_epochs, batch_size = 1.0, 10, 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())輸出結果:

loss 0.277, train acc 0.898, test acc 0.835

28009.9 examples/sec on cuda:0訓練曲線:
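
Before moving on to the built-in layers, it can be reassuring to check that the hand-written normalization agrees with `nn.BatchNorm2d`. The sketch below is not part of the original experiment. It repeats the training-mode arithmetic of `batch_norm` on a small random tensor (the shape and seed are arbitrary assumptions) and compares it with the built-in layer. Note that PyTorch's `momentum` argument weights the new batch statistic, so `momentum=0.1` there plays the role of `momentum=0.9` in the from-scratch function.

```python
import torch
from torch import nn

torch.manual_seed(0)
X = torch.randn(8, 3, 5, 5)  # (batch, channels, height, width), arbitrary sizes
eps = 1e-5

# Hand-written training-mode normalization for a convolutional input:
# statistics are taken per channel over the batch and spatial dimensions.
mean = X.mean(dim=(0, 2, 3), keepdim=True)
var = ((X - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
Y_manual = (X - mean) / torch.sqrt(var + eps)  # gamma = 1, beta = 0

# Built-in layer in training mode; its weight and bias start at 1 and 0.
bn = nn.BatchNorm2d(3, eps=eps, momentum=0.1)
bn.train()
Y_builtin = bn(X)
print(torch.allclose(Y_manual, Y_builtin, atol=1e-5))

# Moving mean after one step: 0.9 * 0 + 0.1 * batch mean in both versions.
manual_moving_mean = 0.9 * torch.zeros(3) + 0.1 * mean.reshape(-1)
print(torch.allclose(manual_moving_mean, bn.running_mean, atol=1e-6))
```

Both checks should print `True`. The running variance is deliberately left out of the comparison: PyTorch documents that it stores the unbiased variance estimate in `running_var`, while the from-scratch code accumulates the biased one, so those two values differ slightly.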

2. Using PyTorch's built-in batch normalization

2.1 Concise network definition

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5), nn.BatchNorm2d(6), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), nn.BatchNorm2d(16), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),
        nn.Linear(256, 120), nn.BatchNorm1d(120), nn.Sigmoid(),
        nn.Linear(120, 84), nn.BatchNorm1d(84), nn.Sigmoid(),
        nn.Linear(84, 10))

2.2 Training and comparison of results

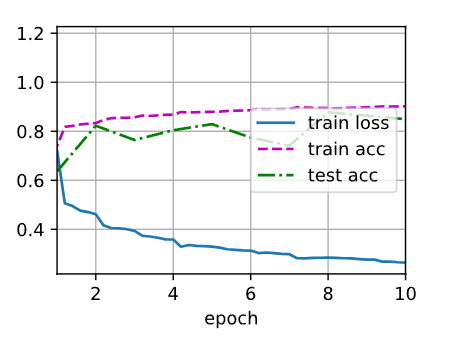

d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())輸出結果:

loss 0.264, train acc 0.902, test acc 0.849

44608.4 examples/sec on cuda:0訓練曲線:
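
The input size of the first fully connected layer (`16*4*4` in section 1, `256` in section 2) follows from how the convolution and pooling layers shrink a 28×28 Fashion-MNIST image. The following sketch, which is an addition and not part of the original experiment, rebuilds the concise network and prints the output shape after every layer. The network is put in evaluation mode because `nn.BatchNorm1d` cannot compute batch statistics from a single sample in training mode.

```python
import torch
from torch import nn

net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5), nn.BatchNorm2d(6), nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2),
    nn.Conv2d(6, 16, kernel_size=5), nn.BatchNorm2d(16), nn.Sigmoid(),
    nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),
    nn.Linear(256, 120), nn.BatchNorm1d(120), nn.Sigmoid(),
    nn.Linear(120, 84), nn.BatchNorm1d(84), nn.Sigmoid(),
    nn.Linear(84, 10))
net.eval()  # use running statistics, so a batch of one sample is allowed

X = torch.rand(1, 1, 28, 28)  # one grayscale image of Fashion-MNIST size
shapes = []
with torch.no_grad():
    for layer in net:
        X = layer(X)
        shapes.append(tuple(X.shape))
        print(f"{layer.__class__.__name__:<12} output shape: {tuple(X.shape)}")
```

The spatial size goes 28 → 24 (5×5 convolution) → 12 (pooling) → 8 (5×5 convolution) → 4 (pooling), so `nn.Flatten` yields 16 × 4 × 4 = 256 features per sample.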

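3. Key parameter analysis

Inspect the scale (gamma) and shift (beta) parameters of the first batch normalization layer. The `gamma` and `beta` attributes belong to the from-scratch `BatchNorm` class, so `net` here must be the network from section 1; the built-in `nn.BatchNorm2d` exposes the same parameters as `weight` and `bias`.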

    print((net[1].gamma.reshape((-1,)), net[1].beta.reshape((-1,))))

Output:

    (tensor([0.3957, 2.2124, 2.8581, 2.1908, 3.6253, 3.5650], device='cuda:0', grad_fn=<ReshapeAliasBackward0>),
     tensor([ 0.1832, -2.5689, -3.2450, -0.7221, 1.1290, 2.2353], device='cuda:0', grad_fn=<ReshapeAliasBackward0>))

4. Conclusion

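- Performance: in these runs the built-in implementation reached 84.9% test accuracy versus 83.5% for the from-scratch version, and it trained faster (about 44k examples/sec versus 28k examples/sec). Both versions compute the same normalization, so the accuracy gap may well be ordinary run-to-run variation.

- Implementation differences: the built-in API handles device placement and parameter initialization automatically, so the code is shorter.

- Note: use `nn.BatchNorm1d` after fully connected layers and `nn.BatchNorm2d` after convolutional layers.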
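
The last two points can be made concrete with a short sketch (an addition, using arbitrary batch sizes). It shows the input shape each built-in layer expects, lists where the built-in layer keeps gamma, beta and the running statistics, and then shows `register_buffer`, the standard `nn.Module` mechanism that lets such statistics follow the module across devices. `BufferedStats` is a hypothetical class introduced only for illustration; it is not part of the original code.

```python
import torch
from torch import nn

bn1d = nn.BatchNorm1d(120)  # for (batch, features) outputs of nn.Linear
bn2d = nn.BatchNorm2d(6)    # for (batch, channels, height, width) outputs of nn.Conv2d
out1d = bn1d(torch.rand(4, 120))
out2d = bn2d(torch.rand(4, 6, 24, 24))
print(out1d.shape, out2d.shape)  # batch normalization keeps the input shape

# gamma and beta are the trainable parameters `weight` and `bias`;
# the running statistics are buffers, not parameters.
param_names = [name for name, _ in bn2d.named_parameters()]
buffer_names = [name for name, _ in bn2d.named_buffers()]
print(param_names, buffer_names)


class BufferedStats(nn.Module):
    """Hypothetical helper: keeps moving statistics as registered buffers."""

    def __init__(self, num_features):
        super().__init__()
        # Buffers are not trained, but they are saved in state_dict and
        # moved with the module by .to(), .cuda() and .cpu().
        self.register_buffer("moving_mean", torch.zeros(1, num_features))
        self.register_buffer("moving_var", torch.ones(1, num_features))


stats = BufferedStats(4)
print(sorted(stats.state_dict().keys()))  # the buffers are part of the state dict
print(len(list(stats.parameters())))      # and there are no trainable parameters
```

In the from-scratch `BatchNorm` class the moving statistics are plain tensor attributes, which is why `forward` has to move them to the right device by hand and why they would not be included in a saved `state_dict`.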

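The complete code has been tested and can be used to reproduce the experiment, although the exact numbers will vary between runs. Batch normalization can speed up convergence and often helps generalization, which makes it a standard component in deep network design.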

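Tip: running the code requires the d2l library (`pip install d2l`). A GPU is recommended; `d2l.try_gpu()` falls back to the CPU when no GPU is available.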
