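Introduction

The Transformer, built on self-attention and well suited to sequence processing, has become a core technique in natural language processing, computer vision, and speech recognition. This article starts from the underlying theory and works through enterprise development practice to explain how the Transformer works and how to implement it. It covers model architecture design, training optimization techniques, and multimodal application cases, with code examples throughout and Mermaid flowcharts for the key concepts. It is aimed at both beginners and experienced developers who want to apply Transformers in real projects.

1. Core Principles of the Transformer Model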

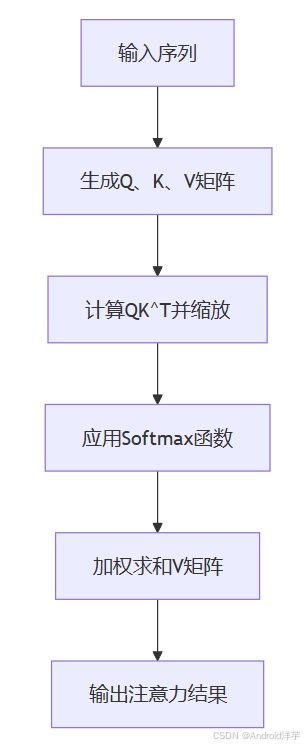

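1.1 Self-Attention

Self-attention is the core of the Transformer. For every element of the input sequence, the model computes how relevant each other element is, which lets it capture long-range dependencies. Self-attention is computed as follows: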

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V$$

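Here $Q$, $K$, and $V$ are the query, key, and value matrices, and $d_k$ is the dimension of the key vectors. Dividing by $\sqrt{d_k}$ keeps the dot products from growing with the dimension and saturating the softmax.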
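To make the formula concrete, here is a minimal sketch of scaled dot-product attention in plain Python, so that each step (scores, softmax, weighted sum) is visible. The input numbers and the helper names `softmax` and `attention` are made up for illustration.

```python
import math

def softmax(row):
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention on lists of row vectors."""
    d_k = len(K[0])
    output, weights = [], []
    for q in Q:
        # One score per key: dot(q, k) / sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        # Weighted sum of the value vectors.
        output.append([sum(wi * v[j] for wi, v in zip(w, V))
                       for j in range(len(V[0]))])
    return output, weights

# Toy input (made-up numbers): two tokens, d_k = 2.
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
out, weights = attention(Q, K, V)
```

Each row of `weights` sums to 1, and each query puts most of its weight on the key it matches, so the corresponding output row is pulled toward that key's value vector.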


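1.2 Multi-Head Attention

Multi-head attention runs several self-attention operations in parallel, so that different heads can attend to different kinds of features. Each head is computed independently, and the results are concatenated and merged by a linear projection: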

$$\text{MultiHead}(Q, K, V) = \text{Concat}(h_1, h_2, \ldots, h_h)W^O$$

Here $h_i$ is the output of the $i$-th attention head and $W^O$ is the output projection matrix that merges the heads.

Code example (PyTorch implementation):

    import torch
    import torch.nn as nn

    class MultiHeadAttention(nn.Module):
        def __init__(self, embed_dim, num_heads):
            super(MultiHeadAttention, self).__init__()
            self.embed_dim = embed_dim
            self.num_heads = num_heads
            self.head_dim = embed_dim // num_heads
            assert self.head_dim * num_heads == embed_dim, \
                "Embedding dimension must be divisible by number of heads"
            # A single projection produces Q, K and V for all heads at once
            self.qkv = nn.Linear(embed_dim, 3 * embed_dim)
            self.out = nn.Linear(embed_dim, embed_dim)

        def forward(self, x):
            batch_size, seq_len, embed_dim = x.size()
            qkv = self.qkv(x).reshape(batch_size, seq_len, 3, self.num_heads, self.head_dim)
            q, k, v = qkv.unbind(2)  # each: (batch, seq_len, heads, head_dim)
            # Compute attention scores, scaled by sqrt(head_dim)
            scores = torch.einsum("bqhd,bkhd->bhqk", q, k) / (self.head_dim ** 0.5)
            attn_weights = torch.softmax(scores, dim=-1)
            # Apply the attention weights to the values
            out = torch.einsum("bhqk,bkhd->bqhd", attn_weights, v)
            # Merge the heads back into one embedding per position
            out = out.reshape(batch_size, seq_len, embed_dim)
            out = self.out(out)
            return out
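As a quick sanity check on the dimensions used by the class above, the following sketch works out the per-head size, the score scaling factor, and the parameter counts of its two linear layers. The function name `mha_shapes` and the sizes 512 and 8 are illustrative choices, not values from the article.

```python
def mha_shapes(embed_dim, num_heads):
    if embed_dim % num_heads != 0:
        raise ValueError("embed_dim must be divisible by num_heads")
    head_dim = embed_dim // num_heads
    # A linear layer mapping n_in -> n_out holds n_in * n_out weights plus n_out biases.
    qkv_params = embed_dim * 3 * embed_dim + 3 * embed_dim   # fused Q/K/V projection
    out_params = embed_dim * embed_dim + embed_dim           # output projection
    return {
        "head_dim": head_dim,
        "scale": head_dim ** -0.5,   # multiplier applied to the raw scores
        "qkv_params": qkv_params,
        "out_params": out_params,
        "total_params": qkv_params + out_params,
    }

info = mha_shapes(embed_dim=512, num_heads=8)
```

Note that the total does not depend on `num_heads`: splitting into more heads changes how the projected vector is partitioned, not how many weights the layer holds.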

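1.3 Positional Encoding

Self-attention by itself is order-agnostic, so the Transformer injects position information through positional encoding. A common choice combines sine and cosine functions of the position $pos$, with $i$ indexing pairs of dimensions and $d$ the embedding dimension: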

$$PE_{(pos, 2i)} = \sin\left(\frac{pos}{10000^{2i/d}}\right), \quad PE_{(pos, 2i+1)} = \cos\left(\frac{pos}{10000^{2i/d}}\right)$$
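The two formulas can be sketched directly in plain Python. The function name `positional_encoding` and the small sizes are assumptions for illustration. The loop variable `i` here is the even dimension index, so it plays the role of $2i$ in the formula.

```python
import math

def positional_encoding(max_len, d_model):
    """Return a max_len x d_model table of sinusoidal position encodings."""
    pe = [[0.0] * d_model for _ in range(max_len)]
    for pos in range(max_len):
        for i in range(0, d_model, 2):          # i is the even index, i.e. "2i" in the formula
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)        # even dimensions use sine
            if i + 1 < d_model:
                pe[pos][i + 1] = math.cos(angle)  # odd dimensions use cosine
    return pe

pe = positional_encoding(max_len=4, d_model=4)
```

Position 0 encodes to alternating 0 and 1 (sine and cosine of zero), and higher dimension pairs oscillate more slowly as the position increases.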


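2. Enterprise AI Development in Practice

2.1 Model Architecture Design

Enterprise AI applications usually have to handle large datasets and complex tasks. The complete Transformer architecture is laid out below.

2.1.1 Encoder-Decoder Structure

The Transformer consists of an encoder and a decoder. The encoder turns the input sequence into an intermediate representation, and the decoder generates the target sequence conditioned on the encoder output.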

Code example (PyTorch implementation):

    # TransformerLayer is not defined in this article. It is assumed to be a
    # module whose forward accepts (x) for the encoder and (x, encoder_output)
    # for the decoder.
    class TransformerEncoder(nn.Module):
        def __init__(self, embed_dim, num_heads, ff_dim, num_layers):
            super(TransformerEncoder, self).__init__()
            self.layers = nn.ModuleList([
                TransformerLayer(embed_dim, num_heads, ff_dim)
                for _ in range(num_layers)
            ])

        def forward(self, x):
            for layer in self.layers:
                x = layer(x)
            return x

    class TransformerDecoder(nn.Module):
        def __init__(self, embed_dim, num_heads, ff_dim, num_layers):
            super(TransformerDecoder, self).__init__()
            self.layers = nn.ModuleList([
                TransformerLayer(embed_dim, num_heads, ff_dim)
                for _ in range(num_layers)
            ])

        def forward(self, x, encoder_output):
            for layer in self.layers:
                x = layer(x, encoder_output)
            return x
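The encoder and decoder above both rely on a `TransformerLayer` that the article never defines. One possible shape for it is sketched below, built on PyTorch's `nn.MultiheadAttention`. The post-norm layout, the optional cross-attention path, and the absence of any attention mask are all assumptions of this sketch, not the author's implementation.

```python
import torch
import torch.nn as nn

class TransformerLayer(nn.Module):
    """Hypothetical layer: self-attention, optional cross-attention, then FFN."""

    def __init__(self, embed_dim, num_heads, ff_dim):
        super().__init__()
        self.self_attn = nn.MultiheadAttention(embed_dim, num_heads, batch_first=True)
        # Only used when encoder_output is passed (decoder use).
        self.cross_attn = nn.MultiheadAttention(embed_dim, num_heads, batch_first=True)
        self.ffn = nn.Sequential(
            nn.Linear(embed_dim, ff_dim), nn.ReLU(), nn.Linear(ff_dim, embed_dim)
        )
        self.norm1 = nn.LayerNorm(embed_dim)
        self.norm2 = nn.LayerNorm(embed_dim)
        self.norm3 = nn.LayerNorm(embed_dim)

    def forward(self, x, encoder_output=None):
        # Self-attention with a residual connection and layer norm.
        attn_out, _ = self.self_attn(x, x, x, need_weights=False)
        x = self.norm1(x + attn_out)
        if encoder_output is not None:
            # Cross-attention: queries from the decoder, keys/values from the encoder.
            cross_out, _ = self.cross_attn(
                x, encoder_output, encoder_output, need_weights=False
            )
            x = self.norm2(x + cross_out)
        # Position-wise feed-forward network with a residual connection.
        return self.norm3(x + self.ffn(x))

layer = TransformerLayer(embed_dim=16, num_heads=4, ff_dim=32)
src = torch.randn(2, 5, 16)      # (batch, source length, embed_dim)
tgt = torch.randn(2, 3, 16)      # (batch, target length, embed_dim)
enc_out = layer(src)             # encoder-style call
dec_out = layer(tgt, enc_out)    # decoder-style call
```

A production decoder would also pass a causal mask to the self-attention call so that a position cannot attend to later positions; that is left out here to keep the sketch short.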

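2.1.2 Feed-Forward Network (FFN)

Each encoder layer contains two sublayers: a self-attention sublayer and a feed-forward sublayer. The FFN is applied to every position independently and adds non-linear capacity to the model: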

$$\text{FFN}(x) = \max(0, xW_1 + b_1)W_2 + b_2$$

Code example (PyTorch implementation):

    class FeedForward(nn.Module):
        def __init__(self, embed_dim, ff_dim):
            super(FeedForward, self).__init__()
            self.linear1 = nn.Linear(embed_dim, ff_dim)   # expand to ff_dim
            self.linear2 = nn.Linear(ff_dim, embed_dim)   # project back
            self.relu = nn.ReLU()

        def forward(self, x):
            x = self.linear1(x)
            x = self.relu(x)
            x = self.linear2(x)
            return x
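A tiny worked example may help connect the formula to the two linear layers. The sketch below evaluates the FFN by hand on a single 2-dimensional input; all weights are made-up numbers chosen so the arithmetic is easy to follow.

```python
def ffn(x, W1, b1, W2, b2):
    # First linear layer followed by ReLU: max(0, x W1 + b1).
    hidden = [
        max(0.0, sum(xi * W1[i][j] for i, xi in enumerate(x)) + b1[j])
        for j in range(len(b1))
    ]
    # Second linear layer: hidden W2 + b2.
    return [
        sum(h * W2[j][k] for j, h in enumerate(hidden)) + b2[k]
        for k in range(len(b2))
    ]

x = [1.0, -2.0]
W1 = [[1.0, 0.0, -1.0],
      [0.0, 1.0, 1.0]]          # shape 2 x 3: expands 2 -> 3
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 0.0],
      [0.0, 1.0],
      [1.0, 1.0]]               # shape 3 x 2: projects 3 -> 2
b2 = [0.5, -0.5]

y = ffn(x, W1, b1, W2, b2)
```

Here `x W1` is `[1, -2, -3]`, the ReLU zeroes the two negative entries, and the second layer maps `[1, 0, 0]` to `[1.5, -0.5]`.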

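2.2 Model Training and Optimization

2.2.1 Mixed-Precision Training

Mixed-precision training runs most operations in half precision (FP16) to speed up computation and reduce memory use, while loss scaling and FP32 weights preserve model accuracy. The following example uses PyTorch's automatic mixed precision: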

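Code example (PyTorch implementation):

    import torch
    from torch.cuda.amp import autocast, GradScaler

    # TransformerModel, device, dataloader, criterion and num_epochs are
    # assumed to be defined elsewhere; the article does not show them.
    model = TransformerModel().to(device)
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
    scaler = GradScaler()

    for epoch in range(num_epochs):
        for inputs, targets in dataloader:
            inputs, targets = inputs.to(device), targets.to(device)
            optimizer.zero_grad()
            # Run the forward pass and the loss in mixed precision
            with autocast():
                outputs = model(inputs)
                loss = criterion(outputs, targets)
            # Scale the loss so that small FP16 gradients do not underflow
            scaler.scale(loss).backward()
            scaler.step(optimizer)  # unscales gradients; skips the step on inf/NaN
            scaler.update()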
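The reason the loop scales the loss before calling `backward()` can be shown without a GPU. The sketch below uses Python's `struct` module to round values to IEEE 754 half precision. The gradient value is made up, and the scale of 65536 is chosen to match `GradScaler`'s default initial scale.

```python
import struct

def to_fp16(value):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", value))[0]

grad = 1e-8          # a small gradient, made up for illustration
scale = 65536.0      # 2**16

# Stored directly in FP16, the gradient is below the smallest representable
# magnitude and becomes zero.
unscaled = to_fp16(grad)

# Scaled first, it survives the FP16 round trip; dividing by the scale in
# full precision recovers a close approximation.
recovered = to_fp16(grad * scale) / scale
```

`unscaled` is exactly zero, while `recovered` stays within a fraction of a percent of the original gradient, which is the effect loss scaling relies on.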

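2.2.2 Distributed Training

Distributed training speeds up training by running on multiple GPUs in parallel. With PyTorch DistributedDataParallel (DDP), each process holds a replica of the model on its own GPU and gradients are averaged across processes during the backward pass: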

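Code example (PyTorch implementation):

    import os
    import torch
    import torch.distributed as dist
    from torch.nn.parallel import DistributedDataParallel as DDP

    # Assumes the script is launched with torchrun, which sets LOCAL_RANK
    # for each process.
    dist.init_process_group(backend="nccl")
    local_rank = int(os.environ["LOCAL_RANK"])
    torch.cuda.set_device(local_rank)
    device = torch.device("cuda", local_rank)

    model = TransformerModel().to(device)
    model = DDP(model, device_ids=[local_rank])
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)

    for epoch in range(num_epochs):
        for inputs, targets in dataloader:
            inputs, targets = inputs.to(device), targets.to(device)
            optimizer.zero_grad()
            outputs = model(inputs)
            loss = criterion(outputs, targets)
            loss.backward()  # DDP all-reduces the gradients here
            optimizer.step()

    dist.destroy_process_group()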
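The loop above iterates over `dataloader` without showing how the data is divided among processes. In DDP each process normally sees a different shard, typically through `torch.utils.data.distributed.DistributedSampler`. The sketch below mimics that sharding in plain Python for the non-shuffled case; the function name `shard_indices` is hypothetical and this is not the library's code.

```python
import math

def shard_indices(dataset_len, world_size, rank):
    """Indices that one process would read, without shuffling."""
    per_rank = math.ceil(dataset_len / world_size)
    total = per_rank * world_size
    # Pad by wrapping around so every rank gets the same number of samples.
    padded = [i % dataset_len for i in range(total)]
    # Rank r takes every world_size-th index starting at r.
    return padded[rank::world_size]

shards = [shard_indices(dataset_len=10, world_size=4, rank=r) for r in range(4)]
```

With 10 samples on 4 processes, each rank receives 3 indices, and two samples are repeated to make the shards equal in length. Equal shard sizes matter because every process must run the same number of gradient synchronizations.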

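2.3 Model Deployment and Acceleration

2.3.1 TensorRT Acceleration

TensorRT is NVIDIA's inference optimizer and runtime for deep learning models. The following example builds a TensorRT engine from a Transformer model that has been exported to ONNX: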

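Code example (Python implementation):

    import tensorrt as trt

    TRT_LOGGER = trt.Logger(trt.Logger.WARNING)
    builder = trt.Builder(TRT_LOGGER)
    network = builder.create_network(
        1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)
    )
    parser = trt.OnnxParser(network, TRT_LOGGER)

    # "model.onnx" is assumed to be a previously exported ONNX file
    with open("model.onnx", "rb") as f:
        if not parser.parse(f.read()):
            raise RuntimeError(f"ONNX parse failed: {parser.get_error(0)}")

    config = builder.create_builder_config()
    # max_workspace_size and build_engine belong to the older TensorRT API;
    # newer releases use config.set_memory_pool_limit(...) and
    # builder.build_serialized_network(network, config) instead.
    config.max_workspace_size = 1 << 30  # 1 GB
    engine = builder.build_engine(network, config)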

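3. Multimodal Applications of the Transformer

3.1 Machine Translation

The Transformer performs very well on machine translation. The following is an example of a Transformer-based English-German translation model: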

Code example (PyTorch implementation):

    class TransformerTranslationModel(nn.Module):
        def __init__(self, src_vocab_size, tgt_vocab_size, embed_dim, num_heads, ff_dim, num_layers):
            super(TransformerTranslationModel, self).__init__()
            # TransformerEncoder / TransformerDecoder are the classes from section 2.1.1
            self.encoder = TransformerEncoder(embed_dim, num_heads, ff_dim, num_layers)
            self.decoder = TransformerDecoder(embed_dim, num_heads, ff_dim, num_layers)
            self.src_embedding = nn.Embedding(src_vocab_size, embed_dim)
            self.tgt_embedding = nn.Embedding(tgt_vocab_size, embed_dim)
            self.fc = nn.Linear(embed_dim, tgt_vocab_size)  # projects to target-vocabulary logits

        def forward(self, src, tgt):
            src_emb = self.src_embedding(src)
            tgt_emb = self.tgt_embedding(tgt)
            encoder_output = self.encoder(src_emb)
            decoder_output = self.decoder(tgt_emb, encoder_output)
            output = self.fc(decoder_output)
            return output
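The model's `forward` takes both `src` and `tgt`, but the article does not show how `tgt` is prepared for training. A common convention is teacher forcing: the decoder input is the target shifted right by one position, and a causal mask stops each position from attending to later ones. The sketch below illustrates both in plain Python; the token ids, `BOS`, `EOS`, and the function names are hypothetical.

```python
BOS, EOS = 1, 2  # hypothetical ids for the start and end tokens

def teacher_forcing_pair(target_ids):
    """Return (decoder_input, labels) for one target sentence."""
    full = [BOS] + list(target_ids) + [EOS]
    # The decoder sees everything except the last token and is trained
    # to predict everything except the first.
    return full[:-1], full[1:]

def causal_mask(size):
    # mask[i][j] is True where position i must NOT attend to position j.
    return [[j > i for j in range(size)] for i in range(size)]

decoder_input, labels = teacher_forcing_pair([7, 8, 9])
mask = causal_mask(len(decoder_input))
```

With this pairing, the logit at position `t` of the model output is compared against `labels[t]`, which is the token that follows `decoder_input[t]`.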

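3.2 Medical Image Analysis

The Transformer is also effective for medical image analysis. The following is an example of a breast cancer classification model based on the Vision Transformer: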

Code example (PyTorch implementation):

    # PatchEmbedding and PositionalEncoding are not defined in this article;
    # they are assumed to exist with the constructor arguments used below.
    class VisionTransformer(nn.Module):
        def __init__(self, image_size, patch_size, num_classes, embed_dim, num_heads, num_layers):
            super(VisionTransformer, self).__init__()
            self.patch_emb = PatchEmbedding(image_size, patch_size, embed_dim)
            self.cls_token = nn.Parameter(torch.randn(1, 1, embed_dim))
            self.pos_emb = PositionalEncoding(embed_dim, image_size, patch_size)
            self.transformer = TransformerEncoder(embed_dim, num_heads, embed_dim * 4, num_layers)
            self.fc = nn.Linear(embed_dim, num_classes)

        def forward(self, x):
            x = self.patch_emb(x)                       # image -> sequence of patch embeddings
            cls_tokens = self.cls_token.expand(x.shape[0], -1, -1)
            x = torch.cat((cls_tokens, x), dim=1)       # prepend the class token
            x = self.pos_emb(x)
            x = self.transformer(x)
            x = x[:, 0]                                 # keep only the class-token output
            x = self.fc(x)
            return x
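To see what sequence the encoder actually receives, the sketch below works out the number of tokens and the flattened patch size for a given image. It assumes square images split into non-overlapping square patches; the function name `vit_token_layout` and the sizes 224 and 16 are illustrative rather than taken from the article.

```python
def vit_token_layout(image_size, patch_size, channels=3):
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    num_patches = patches_per_side ** 2
    return {
        "num_patches": num_patches,
        # One extra token for the class token that is prepended in forward().
        "sequence_length": num_patches + 1,
        # Values in one flattened patch before it is projected to embed_dim.
        "patch_dim": channels * patch_size * patch_size,
    }

layout = vit_token_layout(image_size=224, patch_size=16)
```

Halving the patch size quadruples the number of patches, and because attention cost grows with the square of the sequence length, patch size is one of the main levers on compute.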

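4. Enterprise AI Application Cases

4.1 Customer Service Chatbot

Combined with a business knowledge base, a Transformer model can power an efficient customer service chatbot. The following example, based on the Hugging Face Transformers library, shows the text generation step with an off-the-shelf GPT-2 model: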

Code example (Python implementation):

    from transformers import AutoTokenizer, AutoModelForCausalLM

    tokenizer = AutoTokenizer.from_pretrained("gpt2")
    model = AutoModelForCausalLM.from_pretrained("gpt2")

    def respond_to_query(query):
        input_ids = tokenizer.encode(query, return_tensors="pt")
        reply_ids = model.generate(
            input_ids,
            max_length=100,
            num_return_sequences=1,
            pad_token_id=tokenizer.eos_token_id,  # GPT-2 has no pad token of its own
        )
        reply = tokenizer.decode(reply_ids[0], skip_special_tokens=True)
        return reply

    user_query = "How can I track my order?"
    bot_reply = respond_to_query(user_query)
    print("Bot:", bot_reply)
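The example above calls the language model directly and does not show where the business knowledge base comes in. One simple way to connect the two is to retrieve the most relevant entry and place it in the prompt. The sketch below uses word overlap for retrieval; the knowledge-base entries, the prompt layout, and the function names are all hypothetical, and a production system would more likely use embedding-based search.

```python
import re

# Hypothetical knowledge base: question -> approved answer.
KNOWLEDGE_BASE = {
    "How do I track my order?": "Open Orders in your account and select Track.",
    "How do I reset my password?": "Use the Forgot password link on the sign-in page.",
}

def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query):
    """Return the (question, answer) pair with the highest Jaccard overlap."""
    q = tokenize(query)

    def overlap(question):
        k = tokenize(question)
        return len(q & k) / len(q | k)

    best = max(KNOWLEDGE_BASE, key=overlap)
    return best, KNOWLEDGE_BASE[best]

def build_prompt(query):
    _, answer = retrieve(query)
    # The retrieved answer is given to the model as reference text.
    return f"Reference: {answer}\nCustomer: {query}\nAgent:"

matched_question, _ = retrieve("How can I track my order?")
prompt = build_prompt("How can I track my order?")
```

The resulting `prompt` string could then be passed to a generation function such as `respond_to_query` in place of the raw user query.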

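4.2 Anomalous Transaction Detection

Transformer models can also be used to detect anomalous bank transactions. The following example stacks a bidirectional LSTM on top of BERT: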

Code example (PyTorch implementation):

    import torch.nn as nn
    from transformers import BertModel

    class AnomalyDetectionModel(nn.Module):
        def __init__(self, bert_model, hidden_dim):
            super(AnomalyDetectionModel, self).__init__()
            self.bert = BertModel.from_pretrained(bert_model)
            # batch_first=True matches BERT's (batch, seq_len, hidden) output
            self.lstm = nn.LSTM(self.bert.config.hidden_size, hidden_dim,
                                bidirectional=True, batch_first=True)
            self.fc = nn.Linear(hidden_dim * 2, 1)

        def forward(self, x):
            outputs = self.bert(x)
            sequence_output = outputs.last_hidden_state
            lstm_out, _ = self.lstm(sequence_output)
            out = self.fc(lstm_out)  # one anomaly logit per token position
            return out
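The model above outputs raw logits, so a decision rule is still needed to turn them into alerts. A minimal sketch of that last step follows: a sigmoid maps each logit to a probability, which is then compared with a threshold. The logit values and the 0.5 threshold are assumptions for illustration. In practice the threshold would be tuned on validation data to balance missed fraud against false alarms.

```python
import math

def sigmoid(z):
    # Numerically stable form that avoids overflow for large negative z.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def flag_anomalies(logits, threshold=0.5):
    """Return (probability, is_anomalous) for each logit."""
    return [(p, p >= threshold) for p in (sigmoid(z) for z in logits)]

results = flag_anomalies([-2.0, 0.0, 3.0])
flags = [flagged for _, flagged in results]
```

Raising the threshold flags fewer transactions: with `threshold=0.9`, only the last logit in this example would still be flagged.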

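5. Summary

With its self-attention mechanism and strong sequence modeling ability, the Transformer has become a core technology for enterprise AI applications. This article started from the underlying theory and worked through enterprise development practice, covering the model's principles, implementation, training optimizations, deployment, and application scenarios, with code examples along the way. As the technology continues to advance, Transformer models are likely to play an important role in more fields.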
