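Qwen-VL is a large vision-language model (LVLM) developed by Alibaba Cloud. It accepts images, text, and bounding boxes as input and produces text and bounding boxes as output. The Qwen-VL series supports multilingual dialogue and interleaved multi-image dialogue, as well as Chinese open-domain grounding and fine-grained image recognition and understanding.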

https://github.com/QwenLM/Qwen2.5-VL

Installation

pip install git+https://github.com/huggingface/transformers accelerate

pip install qwen-vl-utils[decord]

Model hardware requirements:

| Precision | Qwen2.5-VL-3B | Qwen2.5-VL-7B | Qwen2.5-VL-72B |
|---|---|---|---|
| FP32 | 11.5 GB | 26.34 GB | 266.21 GB |
| BF16 | 5.75 GB | 13.17 GB | 133.11 GB |
| INT8 | 2.87 GB | 6.59 GB | 66.5 GB |
| INT4 | 1.44 GB | 3.29 GB | 33.28 GB |
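The rows of the table scale with the number of bits per weight: halving the precision roughly halves the footprint. A minimal sketch of that relationship, assuming the figures count model weights only (the table does not say whether activations or framework overhead are included); `scale_from_fp32` is a hypothetical helper name.

```python
# Bits used to store one weight at each precision level listed in the table.
BITS_PER_WEIGHT = {"FP32": 32, "BF16": 16, "INT8": 8, "INT4": 4}


def scale_from_fp32(fp32_gb: float, precision: str) -> float:
    """Estimate the footprint at `precision` from the FP32 figure.

    Assumption: memory is proportional to bits per weight, which matches
    the table rows to within rounding.
    """
    return fp32_gb * BITS_PER_WEIGHT[precision] / 32


if __name__ == "__main__":
    # FP32 figure for Qwen2.5-VL-7B, taken from the table.
    for precision in BITS_PER_WEIGHT:
        print(precision, round(scale_from_fp32(26.34, precision), 2), "GB")
```

The same function can be used to sanity-check whether a given model and precision is likely to fit on a particular GPU before downloading the weights.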

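Model features

- Powerful document parsing: upgrades text recognition to full-document parsing, and handles multi-scene, multilingual documents that contain varied embedded elements (handwriting, tables, charts, chemical formulas, and music scores).

- Precise object grounding across formats: improves accuracy in detecting, pointing to, and counting objects, and supports absolute coordinates and JSON output for advanced spatial reasoning.

- Ultra-long video understanding and fine-grained video grounding: extends native dynamic resolution to the temporal dimension, improving comprehension of videos that last hours while extracting event segments at second-level granularity.

- Enhanced agent functionality for computers and mobile devices: uses advanced grounding, reasoning, and decision-making to give the model stronger agent capabilities on smartphones and computers.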

Usage examples

Basic image-text question answering

from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor
from qwen_vl_utils import process_vision_info

# Load the model; dtype and device placement are chosen automatically
model = Qwen2_5_VLForConditionalGeneration.from_pretrained(
    "Qwen/Qwen2.5-VL-7B-Instruct", torch_dtype="auto", device_map="auto"
)

# Load the matching processor (needed below for the chat template and tensor inputs)
processor = AutoProcessor.from_pretrained("Qwen/Qwen2.5-VL-7B-Instruct")

# Pass in text, images, or video
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]

# Preparation for inference
text = processor.apply_chat_template(
    messages, tokenize=False, add_generation_prompt=True
)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
inputs = inputs.to(model.device)

# Inference: generate the output, then drop the prompt tokens from each sequence
generated_ids = model.generate(**inputs, max_new_tokens=128)
generated_ids_trimmed = [
    out_ids[len(in_ids):] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)

Multi-image input

messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "file:///path/to/image1.jpg"},
            {"type": "image", "image": "file:///path/to/image2.jpg"},
            {"type": "text", "text": "Identify the similarities between these images."},
        ],
    }
]
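When the list of images is only known at run time, the `messages` structure can be generated instead of written by hand. A minimal sketch; `build_image_messages` is a hypothetical helper, not part of `transformers` or `qwen_vl_utils`, and it only assembles the dictionary layout used in this section.

```python
from typing import Any


def build_image_messages(image_uris: list[str], prompt: str) -> list[dict[str, Any]]:
    """Build a single-turn user message: N image entries followed by one text prompt."""
    content: list[dict[str, Any]] = [
        {"type": "image", "image": uri} for uri in image_uris
    ]
    content.append({"type": "text", "text": prompt})
    return [{"role": "user", "content": content}]


if __name__ == "__main__":
    messages = build_image_messages(
        ["file:///path/to/image1.jpg", "file:///path/to/image2.jpg"],
        "Identify the similarities between these images.",
    )
    print(messages)
```

The returned list can be passed to `processor.apply_chat_template` and `process_vision_info` in the same way as the single-image example.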

Video understanding

- Messages containing a list of images as a video and a text query

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "video",
                "video": [
                    "file:///path/to/frame1.jpg",
                    "file:///path/to/frame2.jpg",
                    "file:///path/to/frame3.jpg",
                    "file:///path/to/frame4.jpg",
                ],
            },
            {"type": "text", "text": "Describe this video."},
        ],
    }
]

- Messages containing a local video path and a text query

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "video",
                "video": "file:///path/to/video1.mp4",
                "max_pixels": 360 * 420,
                "fps": 1.0,
            },
            {"type": "text", "text": "Describe this video."},
        ],
    }
]

- Messages containing a video URL and a text query

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "video",
                "video": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen2-VL/space_woaudio.mp4",
                "min_pixels": 4 * 28 * 28,
                "max_pixels": 256 * 28 * 28,
                "total_pixels": 20480 * 28 * 28,
            },
            {"type": "text", "text": "Describe this video."},
        ],
    }
]
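The `min_pixels`, `max_pixels`, and `total_pixels` values in the last example are written as multiples of `28 * 28`. A short sketch of that arithmetic, assuming the budgets are meant to be read in units of 28×28-pixel blocks (for still images the upstream README describes one such block as one visual token; how the count maps to tokens for video frames is not covered here). The helper name is hypothetical.

```python
BLOCK_SIDE = 28  # side length, in pixels, of the block the budgets are expressed in


def pixels_to_blocks(pixel_budget: int) -> int:
    """Convert a pixel budget into a count of whole 28x28 blocks."""
    return pixel_budget // (BLOCK_SIDE * BLOCK_SIDE)


if __name__ == "__main__":
    budgets = {
        "min_pixels": 4 * 28 * 28,
        "max_pixels": 256 * 28 * 28,
        "total_pixels": 20480 * 28 * 28,
    }
    for name, value in budgets.items():
        print(f"{name}: {value} pixels = {pixels_to_blocks(value)} blocks")
```

Reading the budgets this way makes it easier to compare a setting such as `360 * 420` from the local-video example with the block-based values.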

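Object detection

- Locate the brown cake in the top-right corner and output its bbox coordinates in JSON format.

- Output the bbox coordinates and names of all objects in the image in JSON format, then answer the following question based on the detection results: how many objects are in the image?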

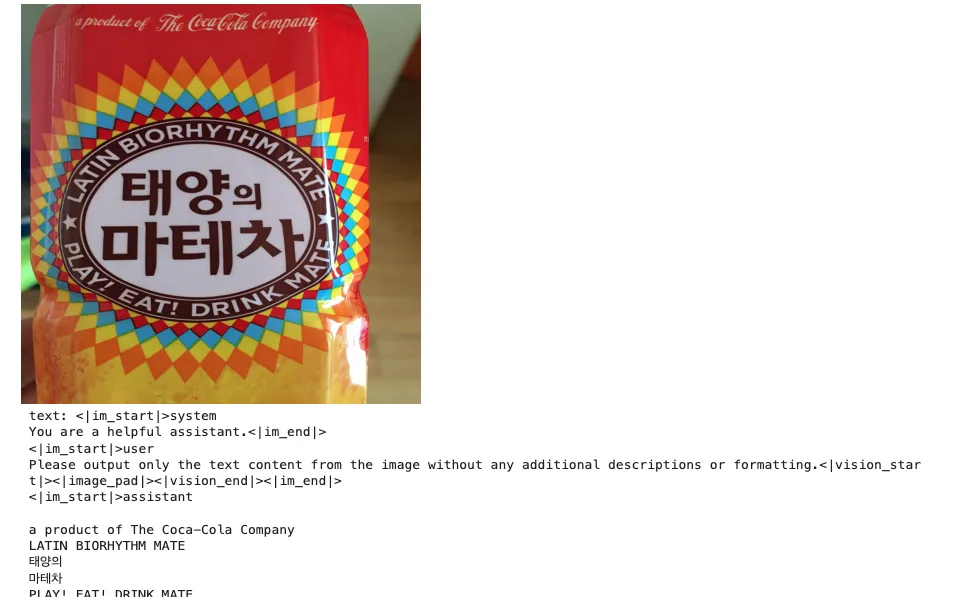

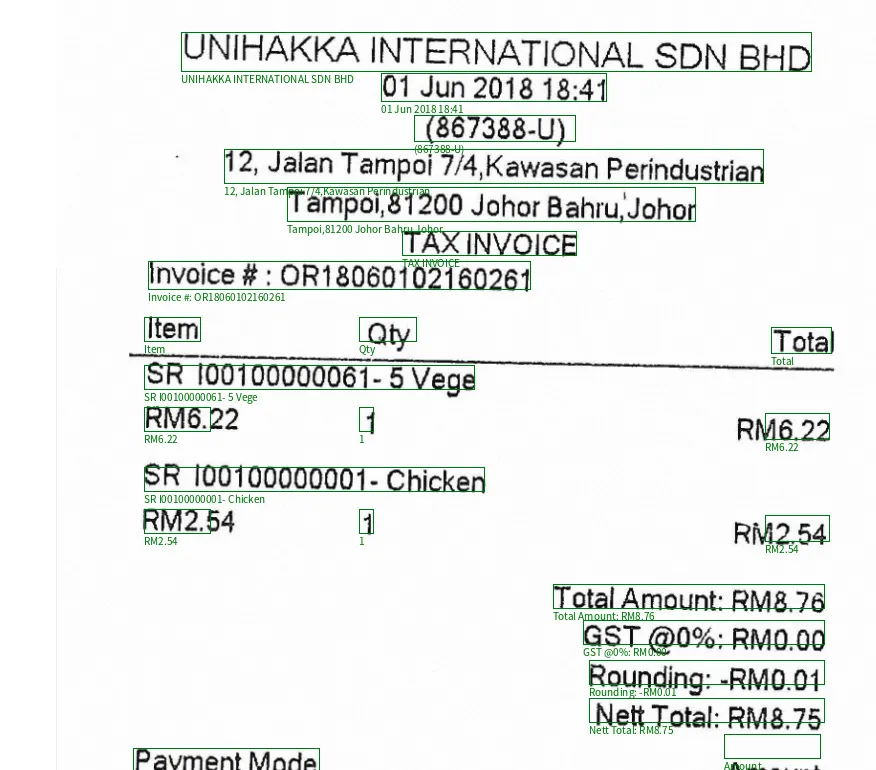

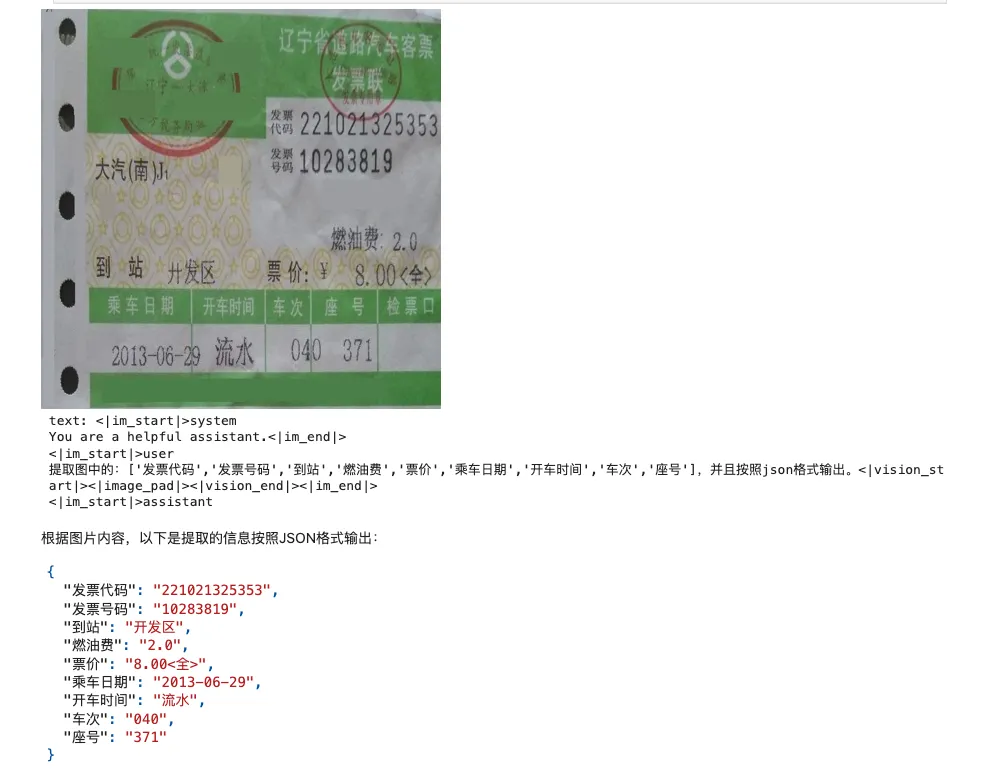
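Replies to prompts like these arrive as plain text, so the JSON has to be extracted before the boxes can be counted or drawn. A minimal sketch, assuming the reply is a JSON list of objects with `bbox_2d` and `label` keys, possibly wrapped in a Markdown code fence. Both the key names and the sample reply are assumptions for illustration, not output captured from the model.

```python
import json

FENCE = "`" * 3  # Markdown code-fence marker, built here to avoid a literal fence


def parse_detections(reply: str) -> list[dict]:
    """Strip an optional Markdown fence and parse the JSON detection list."""
    body = reply.strip()
    if body.startswith(FENCE):
        # Drop the opening fence line (the fence plus an optional language tag).
        body = body.split("\n", 1)[1] if "\n" in body else ""
        # Drop the closing fence if present.
        if body.rstrip().endswith(FENCE):
            body = body.rstrip()[: -len(FENCE)]
    detections = json.loads(body)
    if not isinstance(detections, list):
        raise ValueError("expected a JSON list of detections")
    return detections


if __name__ == "__main__":
    # Hypothetical reply, for illustration only.
    sample = (
        FENCE + "json\n"
        '[{"bbox_2d": [10, 20, 110, 220], "label": "cake"},'
        ' {"bbox_2d": [150, 30, 260, 210], "label": "plate"}]\n'
        + FENCE
    )
    boxes = parse_detections(sample)
    print(len(boxes), [box["label"] for box in boxes])
```

Counting the parsed list gives an answer to the "how many objects" question that can be checked against the model's own stated count.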

Image-text parsing (OCR)

- Recognize all the text in the image.

- Spotting all the text in the image with line-level, and output in JSON format.

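- Extract the following from the image: ['Invoice Code', 'Invoice Number', 'Destination Station', 'Fuel Surcharge', 'Ticket Price', 'Travel Date', 'Departure Time', 'Train Number', 'Seat Number'], and output the result in JSON format.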
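For key-information extraction prompts such as the last one, it helps to build the prompt from a field list and then check that every requested field appears in the reply. A sketch, assuming the reply has already been parsed into a dictionary; the helper names and sample values are hypothetical.

```python
def build_extraction_prompt(fields: list[str]) -> str:
    """Format a field list into an extraction prompt in the style used above."""
    quoted = ", ".join(f"'{name}'" for name in fields)
    return (
        f"Extract the following from the image: [{quoted}], "
        "and output the result in JSON format."
    )


def missing_fields(extracted: dict, requested: list[str]) -> list[str]:
    """Return requested field names that are absent or empty, in request order."""
    return [
        name
        for name in requested
        if name not in extracted or extracted[name] in ("", None)
    ]


if __name__ == "__main__":
    fields = ["Invoice Number", "Ticket Price", "Train Number"]
    print(build_extraction_prompt(fields))
    # Hypothetical parsed reply, for illustration only.
    parsed = {"Invoice Number": "00000000", "Ticket Price": ""}
    print(missing_fields(parsed, fields))
```

A non-empty result from `missing_fields` is a cue to retry the request or flag the document for manual review.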

Agent & Computer Use

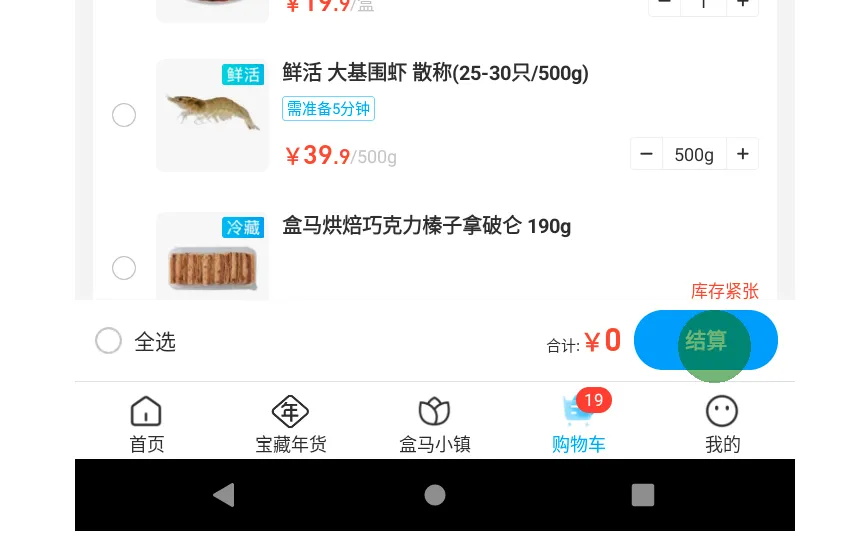

The user query: In Hema (盒馬), open the shopping cart and check out (reaching the payment page is enough) (You have done the following operation on the current device):
