FFmpeg Basics: The Simplest Audio/Video Player

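In the previous two chapters, we learned how to build a simple audio player and a simple video player:

FFmpeg Basics: The Simplest Audio Player

FFmpeg Basics: The Simplest Video Player

In this chapter, we combine what we learned in those two chapters into a complete audio/video player. Keep up with me: this chapter is the starting point for the full-featured player we will build in the following chapters.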

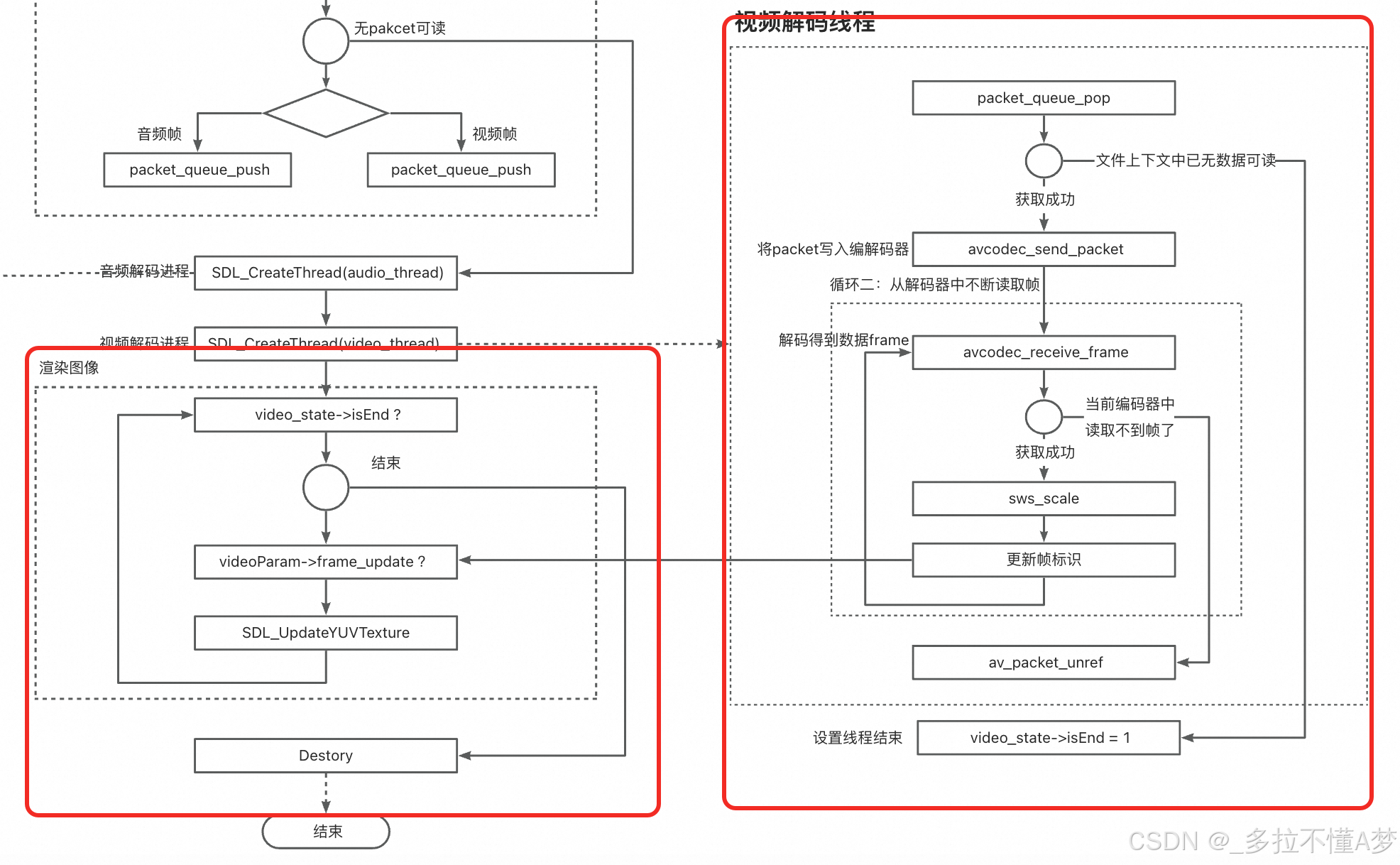

整體流程圖

話不多說,先上圖

這個圖似乎有點復雜了,這里我會分別將每個模塊拿出來講述,方便大家一步一步分析整個流程。

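Step 1: Initialization

First, look at the top part of the flowchart. This part is essentially the same as in the earlier chapters: it performs the preliminary initialization work:

1. Open the file and get the format context
2. Find the audio/video streams and set up their codec contexts
3. Open the decoders
4. Allocate output buffers to hold the decoded output data
5. Create the format-conversion contexts for audio and video frames
6. Initialize the SDL components, mainly the video window and the audio device

Code (some checks and parameter initialization are omitted for readability; the full source is at the end of the article):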

```c
/* Initialization */
init_video_state(&video_state);
audio_param = video_state->audioParam;
video_param = video_state->videoParam;
avformat_network_init();

// 1. Open the input file and get the format context
if (avformat_open_input(&video_state->formatCtx, argv[1], NULL, NULL) != 0) {
    printf("Couldn't open input stream.\n");
    return -1;
}
// 2. Probe the file for stream information
if (avformat_find_stream_info(video_state->formatCtx, NULL) < 0) {
    printf("Couldn't find stream information.\n");
    return -1;
}
// 3. Find the audio/video stream indices
video_state->audioStream = -1;
video_state->videoStream = -1;
for (int i = 0; i < video_state->formatCtx->nb_streams; i++) {
    if (video_state->formatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO) {
        video_state->audioStream = i;
    }
    if (video_state->formatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO) {
        video_state->videoStream = i;
    }
}
// 4. Copy the codec parameters of the audio/video streams into the codec contexts
AVCodecParameters* aCodecParam = video_state->formatCtx->streams[video_state->audioStream]->codecpar;
avcodec_parameters_to_context(video_state->aCodecCtx, aCodecParam);
AVCodecParameters* vCodecParam = video_state->formatCtx->streams[video_state->videoStream]->codecpar;
avcodec_parameters_to_context(video_state->vCodecCtx, vCodecParam);
// 5. Find the decoders for the streams
video_state->aCodec = avcodec_find_decoder(video_state->aCodecCtx->codec_id);
video_state->vCodec = avcodec_find_decoder(video_state->vCodecCtx->codec_id);
// 6. Open the decoders
if (avcodec_open2(video_state->aCodecCtx, video_state->aCodec, NULL) < 0) {
    printf("Could not open audio codec.\n");
    return -1;
}
if (avcodec_open2(video_state->vCodecCtx, video_state->vCodec, NULL) < 0) {
    printf("Could not open video codec.\n");
    return -1;
}
/* Set up audio output parameters */
audio_output_set(video_state);
/* Set up video output parameters */
video_output_set(video_state);

// SDL initialization
#if USE_SDL
if (SDL_Init(SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) {
    printf("Could not initialize SDL - %s\n", SDL_GetError());
    return -1;
}
/* Audio SDL device setup */
SDL_AudioSpec wanted_spec;
wanted_spec.freq = audio_param->out_sample_rate;    // sample rate
wanted_spec.format = AUDIO_S16SYS;                  // sample format: signed 16-bit, native byte order
wanted_spec.channels = audio_param->out_channels;   // channel count
wanted_spec.silence = 0;
wanted_spec.samples = audio_param->out_nb_samples;  // device buffer size in samples per channel
wanted_spec.callback = fill_audio;                  // callback
wanted_spec.userdata = video_state->aCodecCtx;      // argument passed to the callback
/* Video SDL device setup */
SDL_Window* window = NULL;
SDL_Renderer* renderer = NULL;
SDL_Texture* texture = NULL;
/* Window */
window = SDL_CreateWindow("SDL2 window", SDL_WINDOWPOS_CENTERED, SDL_WINDOWPOS_CENTERED,
                          video_state->vCodecCtx->width, video_state->vCodecCtx->height,
                          SDL_WINDOW_SHOWN);
/* Renderer */
renderer = SDL_CreateRenderer(window, -1, SDL_RENDERER_ACCELERATED | SDL_RENDERER_PRESENTVSYNC);
/* Texture */
texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YV12, SDL_TEXTUREACCESS_STREAMING,
                            video_state->vCodecCtx->width, video_state->vCodecCtx->height);
// Open the audio device
if (SDL_OpenAudio(&wanted_spec, NULL) < 0) {
    printf("can't open audio.\n");
    return -1;
}
#endif

// Audio resampling (sample-format conversion) context
swr_alloc_set_opts2(&video_state->swrCtx,
                    &audio_param->out_channel_layout,     // output layout
                    audio_param->out_sample_fmt,          // output sample format
                    audio_param->out_sample_rate,         // output sample rate
                    &video_state->aCodecCtx->ch_layout,   // input layout
                    video_state->aCodecCtx->sample_fmt,   // input sample format
                    video_state->aCodecCtx->sample_rate,  // input sample rate
                    0, NULL);
swr_init(video_state->swrCtx);
// Video pixel-format conversion context
video_state->swsCtx = sws_getContext(video_state->vCodecCtx->width,    // src width
                                     video_state->vCodecCtx->height,   // src height
                                     video_state->vCodecCtx->pix_fmt,  // src pixel format
                                     video_param->width,               // dst width
                                     video_param->height,              // dst height
                                     video_param->pix_fmt,             // dst pixel format
                                     SWS_BILINEAR, NULL, NULL, NULL);
// Start playback (unpause the audio device)
SDL_PauseAudio(0);
```
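Item 4 of this step allocates the output buffers, and their sizes follow from simple arithmetic. As a minimal sketch, assuming packed (interleaved) audio and YUV420P video with alignment 1, the helpers below reproduce the numbers that `av_samples_get_buffer_size()` and `av_image_get_buffer_size()` should return in this setup; the helper names are hypothetical, not FFmpeg API. The middle helper bounds how many samples per channel one input frame can yield after resampling, which matters because `swr_convert()` takes its output capacity in samples per channel, not bytes.

```c
#include <stdint.h>

/* Bytes for one packed (interleaved) audio chunk with align = 1:
 * samples per channel * channels * bytes per sample. */
static int packed_audio_bytes(int nb_samples, int channels, int bytes_per_sample) {
    return nb_samples * channels * bytes_per_sample;
}

/* Upper bound on output samples per channel when resampling nb_in samples
 * from in_rate to out_rate: ceil(nb_in * out_rate / in_rate) in 64-bit integers.
 * Ignores samples that may already be buffered inside the resampler. */
static int64_t resampled_samples_upper(int64_t nb_in, int64_t in_rate, int64_t out_rate) {
    return (nb_in * out_rate + in_rate - 1) / in_rate;
}

/* Bytes for one YUV420P frame with align = 1: a full-size Y plane plus
 * U and V planes subsampled by 2 in each direction (rounded up for odd sizes). */
static int yuv420p_bytes(int width, int height) {
    int chroma_w = (width + 1) / 2;
    int chroma_h = (height + 1) / 2;
    return width * height + 2 * chroma_w * chroma_h;
}
```

For example, 1024 samples × 2 channels × 2 bytes gives 4096 bytes, and 1024 input samples at 48 kHz yield at most 941 samples at 44.1 kHz, so a 1024-sample output buffer suffices there; upsampling (say from 22.05 kHz) would need a larger one. FFmpeg's own resampling example computes a similar bound with `av_rescale_rnd(swr_get_delay(ctx, in_rate) + in_samples, out_rate, in_rate, AV_ROUND_UP)`, which also accounts for samples buffered inside the resampler.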

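Step 2: Filling the Packet Queues

Once the setup is done, we read all the input packets from the source file and put each one into the audio packet queue or the video packet queue for later use. Then we start an audio decoding thread and a video decoding thread, which decode in parallel.

- Read a packet and check its type

- Push it into the audio or video packet queue according to its type

- Create the decoding threads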

```c
// Loop 1: read packets from the file
while (av_read_frame(video_state->formatCtx, packet) >= 0) {
    /* Push into the audio packet queue */
    if (packet->stream_index == video_state->audioStream) {
        packet_queue_push(video_state->aQueue, packet);
    }
    /* Push into the video packet queue */
    if (packet->stream_index == video_state->videoStream) {
        packet_queue_push(video_state->vQueue, packet);
    }
    // packet_queue_push() takes its own reference, so drop ours
    av_packet_unref(packet);
    // Only inspect `event` when SDL_PollEvent() actually returned one
    while (SDL_PollEvent(&event)) {
        if (event.type == SDL_QUIT) {
            SDL_Quit();
            exit(0);
        }
    }
}
printf("audio queue.size=%d\n", video_state->aQueue->size);
// Create and start the decoding threads
SDL_Thread* audio_tid = SDL_CreateThread(audio_thread, "audio_thread", video_state);
SDL_Thread* video_tid = SDL_CreateThread(video_thread, "video_thread", video_state);
```
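The queues in this step only work because the whole file is read before the decoding threads start: the consumers check `size > 0` and stop as soon as a queue is empty. A minimal sketch of the usual alternative, assuming POSIX threads instead of `SDL_mutex`/`SDL_cond` and a hypothetical `IntQueue` of ints standing in for packets: a bounded queue whose push blocks while it is full and whose pop blocks while it is empty, each re-checking its condition in a `while` loop after waking up. The same wait-in-a-loop pattern applies to `SDL_CondWait`.

```c
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical fixed-capacity ring buffer of ints, standing in for the packet queue. */
typedef struct IntQueue {
    int *items;
    int capacity;
    int head;                  /* index of the oldest element */
    int size;                  /* number of stored elements */
    pthread_mutex_t mutex;
    pthread_cond_t not_empty;  /* signaled after a push */
    pthread_cond_t not_full;   /* signaled after a pop */
} IntQueue;

static int int_queue_init(IntQueue *q, int capacity) {
    q->items = malloc(sizeof(int) * (size_t)capacity);
    if (!q->items) return -1;
    q->capacity = capacity;
    q->head = 0;
    q->size = 0;
    pthread_mutex_init(&q->mutex, NULL);
    pthread_cond_init(&q->not_empty, NULL);
    pthread_cond_init(&q->not_full, NULL);
    return 0;
}

/* Blocks while the queue is full, so a fast reader cannot run ahead without bound. */
static void int_queue_push(IntQueue *q, int value) {
    pthread_mutex_lock(&q->mutex);
    while (q->size == q->capacity)
        pthread_cond_wait(&q->not_full, &q->mutex);
    q->items[(q->head + q->size) % q->capacity] = value;
    q->size++;
    pthread_cond_signal(&q->not_empty);
    pthread_mutex_unlock(&q->mutex);
}

/* Blocks while the queue is empty; the loop re-checks after every wake-up,
 * which also covers spurious wake-ups. */
static int int_queue_pop(IntQueue *q) {
    pthread_mutex_lock(&q->mutex);
    while (q->size == 0)
        pthread_cond_wait(&q->not_empty, &q->mutex);
    int value = q->items[q->head];
    q->head = (q->head + 1) % q->capacity;
    q->size--;
    pthread_cond_signal(&q->not_full);
    pthread_mutex_unlock(&q->mutex);
    return value;
}

static void int_queue_destroy(IntQueue *q) {
    pthread_cond_destroy(&q->not_full);
    pthread_cond_destroy(&q->not_empty);
    pthread_mutex_destroy(&q->mutex);
    free(q->items);
    q->items = NULL;
}
```

With a blocking pop, a consumer also needs an explicit end-of-stream signal (for example a sentinel value or a "finished" flag checked under the mutex); otherwise it would wait forever after the last packet.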

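Step 3: Audio Decoding + Playback

Next, we look at the audio and video decoding threads in turn. The two threads start at the same time.

The audio decoding thread follows the same steps as in the earlier audio player article. Briefly:

- Pop a packet from the audio packet queue

- Decode the packet to get frames

- Convert each frame to the output audio format with swr_convert and write the result into the buffer

- The SDL audio device keeps reading data from the buffer through its callback and plays it

The code: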

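```c
/* Audio thread */
int audio_thread(void *arg) {
    /*
     * 1. Pop a packet from the packet queue
     * 2. Decode the packet
     * 3. Hand the converted samples to SDL's buffer
     */
    VideoState* video_state = (VideoState*) arg;
    AudioParam* audio_param = video_state->audioParam;
    PacketQueue* queue = video_state->aQueue;
    audio_param->index = 0;
    AVRational time_base = video_state->formatCtx->streams[video_state->audioStream]->time_base;
    int64_t av_start_time = av_gettime(); // playback start timestamp (microseconds)
    AVPacket packet;
    int ret;
    AVFrame* pFrame = av_frame_alloc();
    while (!video_state->isEnd) {
        if (queue->size > 0) {
            packet_queue_pop(queue, &packet);
            // Send the packet to the decoder
            ret = avcodec_send_packet(video_state->aCodecCtx, &packet);
            // Receive decoded frames
            while (!avcodec_receive_frame(video_state->aCodecCtx, pFrame)) {
                // Convert the sample format; out_count is the capacity in samples per channel, not bytes
                int out_samples = swr_convert(video_state->swrCtx, &audio_param->out_buffer,
                                              audio_param->out_nb_samples,
                                              (const uint8_t **)pFrame->data, pFrame->nb_samples);
                if (out_samples <= 0) {
                    continue;
                }
                // Bytes actually produced by this conversion
                int out_bytes = av_samples_get_buffer_size(NULL, audio_param->out_channels, out_samples,
                                                           audio_param->out_sample_fmt, 1);
                audio_param->index++;
                printf("frame %d | pts:%lld | frame size (samples):%d | actual time %.2fs | expected time %.2fs\n",
                       audio_param->index,
                       (long long)pFrame->pts,
                       pFrame->nb_samples,
                       (double)(av_gettime() - av_start_time) / AV_TIME_BASE,
                       pFrame->pts * av_q2d(time_base));
#if USE_SDL
                // Publish the converted data to the callback while holding the audio lock
                SDL_LockAudio();
                audio_info.audio_pos = (Uint8 *) audio_param->out_buffer;
                audio_info.audio_len = out_bytes;
                SDL_UnlockAudio();
                // Wait until SDL has played it (SDL_Delay takes whole milliseconds)
                while (audio_info.audio_len > 0)
                    SDL_Delay(1);
#endif
            }
            av_packet_unref(&packet);
        } else {
            break;
        }
    }
    av_frame_free(&pFrame);
    // Done
    video_state->isEnd = 1;
    return 0;
}
```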
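The hand-off between the audio thread and the SDL callback can be modeled without SDL. A minimal sketch, assuming a hypothetical `PendingAudio` struct in place of `AudioInfo` and `memcpy` in place of `SDL_MixAudio` (mixing at full volume into a buffer that was just zeroed should produce the same bytes here): each callback clears the device buffer, copies at most `len` pending bytes, and advances the read position, so the audio thread's wait loop ends once the pending length reaches 0.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical stand-in for AudioInfo: the PCM chunk waiting to be played. */
typedef struct PendingAudio {
    const uint8_t *pos; /* next byte to hand to the device */
    uint32_t len;       /* bytes still pending */
} PendingAudio;

/* Mimics one SDL audio callback: zero the device buffer (silence for signed
 * 16-bit audio), then copy at most `len` pending bytes.
 * Returns the number of bytes copied. */
static int fill_device_buffer(PendingAudio *pending, uint8_t *stream, int len) {
    memset(stream, 0, (size_t)len);
    if (pending->len == 0)
        return 0;
    int n = (uint32_t)len > pending->len ? (int)pending->len : len;
    memcpy(stream, pending->pos, (size_t)n);
    pending->pos += n;
    pending->len -= (uint32_t)n;
    return n;
}
```

Assuming SDL opened the device with the requested spec, its buffer holds 1024 samples × 2 channels × 2 bytes = 4096 bytes, so one 4096-byte chunk is drained by a single callback; a smaller device buffer just takes more callbacks, and the tail of the last one stays silent.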

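Step 4: Video Decoding (Worker Thread) + Rendering (Main Thread)

Before discussing video decoding and rendering, one point first: SDL window and rendering operations must be performed on the main thread. So we cannot render to the SDL window directly from the decoding thread; doing so is unsupported and can cause problems such as memory leaks, crashes, or a window that never refreshes (macOS is particularly strict about this). With that in mind, the following flow is easier to understand.

We split video playback into two parts: decoding + rendering.

Part 1: the decoding thread. It decodes the frames and, by setting a flag, notifies the main thread that a new frame is ready to be rendered.

Part 2: the main thread. It loops, watching the flag set by the decoding thread, and updates the window with the new frame.

The code:

Video decoding thread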

```c
/* Video thread */
int video_thread(void *arg) {
    /*
     * 1. Pop a packet from the video packet queue
     * 2. Send it to the decoder and receive frames
     * 3. Hand each frame to the main thread for SDL rendering
     * 4. Sleep according to the frame's pts (SDL_Delay)
     */
    VideoState* video_state = (VideoState*) arg;
    PacketQueue* video_queue = video_state->vQueue;
    AVCodecContext* pCodecCtx = video_state->vCodecCtx;
    AVFrame* out_frame = video_state->videoParam->out_frame;
    AVPacket packet;
    AVFrame* pFrame = av_frame_alloc();
    AVRational time_base = video_state->formatCtx->streams[video_state->videoStream]->time_base;
    int64_t av_start_time = av_gettime(); // playback start time (microseconds)
    int64_t frame_start_time = av_gettime();
    while (!video_state->isEnd) {
        if (video_queue->size > 0) {
            packet_queue_pop(video_queue, &packet);
            // Send the packet to the decoder
            int ret = avcodec_send_packet(pCodecCtx, &packet);
            // Receive raw frames from the decoder
            while (!avcodec_receive_frame(pCodecCtx, pFrame)) {
                // Compute the delay: rescale pts to microseconds in one step so rounding does not accumulate
                int64_t pts_us = av_rescale_q(pFrame->pts, time_base, AV_TIME_BASE_Q);
                int64_t target_time = av_start_time + pts_us; // wall-clock time at which this frame is due
                int64_t current_time = av_gettime();
                if (target_time > current_time) {
                    SDL_Delay((Uint32)((target_time - current_time) / 1000)); // sleep until the frame is due
                }
                // Do not overwrite out_frame until the main thread has rendered the previous frame
                while (video_state->videoParam->frame_update && !video_state->isEnd) {
                    SDL_Delay(1);
                }
                // Convert the frame to YUV420P
                sws_scale(video_state->swsCtx,                    // conversion context
                          (uint8_t const * const *)pFrame->data,  // input data
                          pFrame->linesize,                       // input bytes per row (aligned)
                          0,                                      // first input row
                          pCodecCtx->height,                      // number of input rows
                          out_frame->data,                        // output data
                          out_frame->linesize);                   // output linesize
                // Tell the main thread that a new frame is ready
                video_state->videoParam->frame_update = 1;
                video_state->videoParam->index++;
                printf("frame %i | type %s | pts %d | interval %.2fms | actual time %.2fs | expected time %.2fs\n",
                       video_state->videoParam->index,
                       get_frame_type(pFrame),
                       (int)pFrame->pts,
                       (double)(av_gettime() - frame_start_time) / 1000,
                       (double)(av_gettime() - av_start_time) / AV_TIME_BASE,
                       pFrame->pts * av_q2d(time_base));
                frame_start_time = av_gettime();
            }
            av_packet_unref(&packet);
        } else {
            break;
        }
    }
    av_frame_free(&pFrame);
    // Done
    video_state->isEnd = 1;
    return 1;
}
```
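Turning a pts into a wall-clock offset is plain rational arithmetic: the time base `num/den` is the length of one pts tick in seconds. One way is to precompute the tick length as an integer number of microseconds (`av_q2d(time_base) * AV_TIME_BASE` truncated to `int64_t`) and multiply it by pts; another is to multiply first and divide once, which is what `av_rescale_q(pts, time_base, AV_TIME_BASE_Q)` does (rounding to nearest). A minimal sketch comparing the two, with hypothetical helper names, assuming a non-negative pts small enough that `pts * num * 1000000` fits in `int64_t`:

```c
#include <stdint.h>

/* Multiply first, divide once, round to nearest: no per-tick error accumulates. */
static int64_t pts_to_us_exact(int64_t pts, int num, int den) {
    return (pts * num * 1000000 + den / 2) / den;
}

/* Truncate the tick length to whole microseconds first, then multiply:
 * the fractional part of every tick is lost, so the error grows with pts. */
static int64_t pts_to_us_truncated(int64_t pts, int num, int den) {
    int64_t per_tick = (int64_t)((double)num * 1000000.0 / den);
    return pts * per_tick;
}
```

With the common 1/90000 time base, one tick is 11.11 µs; at the 60-second mark (pts 5400000) the truncated version yields 59.4 s instead of 60 s, so frames would be shown 0.6 s early, and the gap keeps growing with playback time.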

Rendering on the main thread

```c
while (!video_state->isEnd) {
    // Handle events (must be done on the main thread)
    while (SDL_PollEvent(&event)) {
        if (event.type == SDL_QUIT) {
            video_state->isEnd = 1;
        }
    }
    if (video_state->videoParam->frame_update) {
        // Upload the AVFrame data into the texture, then render it to the window
        rect.x = 0;
        rect.y = 0;
        rect.w = video_state->vCodecCtx->width;
        rect.h = video_state->vCodecCtx->height;
        out_frame = video_state->videoParam->out_frame;
        // Update the texture
        SDL_UpdateYUVTexture(texture, &rect,
                             out_frame->data[0], out_frame->linesize[0],  // Y
                             out_frame->data[1], out_frame->linesize[1],  // U
                             out_frame->data[2], out_frame->linesize[2]); // V
        // Present the frame
        SDL_RenderClear(renderer);
        SDL_RenderCopy(renderer, texture, NULL, NULL);
        SDL_RenderPresent(renderer);
        // Reset the flag so the decoding thread can write the next frame
        video_state->videoParam->frame_update = 0;
    } else {
        SDL_Delay(1); // avoid spinning a CPU core while waiting for a frame
    }
}
```
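The `frame_update` flag is a plain `int` shared by two threads, which C11 formally treats as a data race. A minimal sketch of the same single-slot hand-off using C11 atomics, assuming exactly one producer (the decoding thread) and one consumer (the render loop); `FrameSlot` and its functions are hypothetical names, and `frame_index` stands in for the pixel data written into `out_frame`:

```c
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical single-slot mailbox between the decoder and the render loop. */
typedef struct FrameSlot {
    int frame_index;   /* stand-in for the pixels in out_frame */
    atomic_bool ready; /* true: the slot holds a frame not yet rendered */
} FrameSlot;

/* Producer: only writes when the previous frame has been consumed.
 * Returns false (and writes nothing) while the slot is still busy. */
static bool slot_try_publish(FrameSlot *slot, int frame_index) {
    if (atomic_load_explicit(&slot->ready, memory_order_acquire))
        return false;
    slot->frame_index = frame_index;
    /* release: the write above becomes visible before ready == true */
    atomic_store_explicit(&slot->ready, true, memory_order_release);
    return true;
}

/* Consumer: copies out the frame and frees the slot if one is ready. */
static bool slot_try_consume(FrameSlot *slot, int *frame_index) {
    if (!atomic_load_explicit(&slot->ready, memory_order_acquire))
        return false;
    *frame_index = slot->frame_index;
    /* release: our read finishes before the producer may overwrite the slot */
    atomic_store_explicit(&slot->ready, false, memory_order_release);
    return true;
}
```

In the player, the decoder would retry `slot_try_publish` (for example with `SDL_Delay(1)` between attempts) and the render loop would call `slot_try_consume` once per iteration. Writing the payload inside `slot_try_publish`, after the check, mirrors the rule that the decoder may only overwrite `out_frame` once the previous frame has been rendered.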

Full Source Code

sample_player.h

```c
//
//  sample_player.h
//  learning
//
//  Created by chenhuaiyi on 2025/2/26.
//

#ifndef sample_player_h
#define sample_player_h

#include <stdio.h>

// ffmpeg
#include "libavcodec/avcodec.h"
#include "libswresample/swresample.h"
#include "libavformat/avformat.h"
#include "libswscale/swscale.h"
#include "libavutil/imgutils.h"
#include "libavutil/time.h"
#include "libavutil/fifo.h"
#include "libavutil/channel_layout.h"

// SDL
#include "SDL.h"
#include "SDL_thread.h"

/* Macros */
#define USE_SDL 1

typedef struct MyAVPacketList {
    AVPacket *pkt;
    int serial;
} MyAVPacketList;

/* Packet queue */
typedef struct PacketQueue {
    AVFifo* pkt_list;   // FIFO of MyAVPacketList entries
    int size;           // number of queued packets
    SDL_mutex* mutex;   // mutex
    SDL_cond* cond;     // condition variable used to block the consumer
} PacketQueue;

/* Data type definitions */
typedef struct AudioInfo {
    Uint32 audio_len;   // bytes remaining in the buffer
    Uint8* audio_pos;   // pointer to the next byte to play
} AudioInfo;

/* Audio output parameters */
typedef struct AudioParam {
    AVChannelLayout out_channel_layout; // layout
    int out_nb_samples;                 // samples per channel per frame
    enum AVSampleFormat out_sample_fmt; // sample format
    int out_sample_rate;                // sample rate
    int out_channels;                   // output channel count
    int index;                          // number of audio frames so far
    int out_buffer_size;                // audio output buffer size (bytes)
    uint8_t* out_buffer;                // audio output buffer
} AudioParam;

/* Video output parameters */
typedef struct VideoParam {
    int width;                  // width
    int height;                 // height
    enum AVPixelFormat pix_fmt; // pixel format: YUV420P
    int num_bytes;              // bytes per frame
    int index;                  // number of video frames so far
    AVFrame* out_frame;         // output frame
    int frame_update;           // "new frame ready" flag
} VideoParam;

/* Global state */
typedef struct VideoState {
    AVFormatContext* formatCtx;   // format context
    int audioStream;              // audio stream index
    AVCodecContext* aCodecCtx;    // audio codec context
    const AVCodec* aCodec;        // audio decoder
    AudioParam* audioParam;       // audio parameters
    SwrContext* swrCtx;           // audio resampling context
    int videoStream;              // video stream index
    AVCodecContext* vCodecCtx;    // video codec context
    const AVCodec* vCodec;        // video decoder
    VideoParam* videoParam;       // video parameters
    struct SwsContext* swsCtx;    // video scaling/pixel-format conversion context
    PacketQueue* aQueue;          // audio packet queue
    PacketQueue* vQueue;          // video packet queue
    int isEnd;                    // end-of-playback flag
} VideoState;

/* Global variables */
extern AudioInfo audio_info;

#endif /* sample_player_h */
```

utils.h

```c
//
//  utils.h
//  sample_player
//
//  Created by chenhuaiyi on 2025/2/27.
//

#ifndef utils_h
#define utils_h

#include "sample_player.h"

int init_video_state(VideoState** video_state);
int destory_video_state(VideoState** video_state);
int packet_queue_push(PacketQueue* q, AVPacket* pkt);
int packet_queue_init(PacketQueue** q, size_t max_size);
int packet_queue_pop(PacketQueue* q, AVPacket* pkt);
void packet_queue_destroy(PacketQueue** q);
char* get_frame_type(AVFrame* frame);

#endif /* utils_h */
```

manager.h

```c
//
//  manager.h
//  sample_player
//
//  Created by chenhuaiyi on 2025/2/27.
//

#ifndef manager_h
#define manager_h

#include "sample_player.h"

/* Set up audio output parameters */
int audio_output_set(VideoState* video_state);

/* Set up video output parameters */
int video_output_set(VideoState* video_state);

/* Audio SDL setup */
int audio_sdl_set(VideoState* video_state, SDL_AudioSpec* wanted_spec, void (*fn)(void*, Uint8*, int));

/* Video SDL setup */
int video_sdl_set(VideoState* video_state, SDL_Window** window, SDL_Renderer** renderer, SDL_Texture** texture);

#endif /* manager_h */
```

utils.c

```c
//
//  utils.c
//  sample_player
//
//  Created by chenhuaiyi on 2025/2/27.
//

#include "utils.h"

/* Initialize VideoState */
int init_video_state(VideoState** video_state) {
    // av_mallocz zero-fills, so pointers such as swrCtx/swsCtx start out NULL
    // (swr_alloc_set_opts2() treats a non-NULL *ps as an existing context)
    *video_state = av_mallocz(sizeof(VideoState));
    (*video_state)->formatCtx = avformat_alloc_context();
    (*video_state)->audioStream = 0;
    (*video_state)->aCodecCtx = avcodec_alloc_context3(NULL);
    (*video_state)->audioParam = av_mallocz(sizeof(AudioParam));
    (*video_state)->videoStream = 0;
    (*video_state)->vCodecCtx = avcodec_alloc_context3(NULL);
    (*video_state)->videoParam = av_mallocz(sizeof(VideoParam));
    (*video_state)->videoParam->frame_update = 0;
    /* Packet queues (packet_queue_init allocates the PacketQueue itself) */
    packet_queue_init(&(*video_state)->aQueue, 1);
    packet_queue_init(&(*video_state)->vQueue, 1);
    (*video_state)->isEnd = 0;
    return 1;
}

/* Destroy VideoState */
int destory_video_state(VideoState** video_state) {
    swr_free(&(*video_state)->swrCtx);
    avcodec_free_context(&(*video_state)->aCodecCtx);
    av_freep(&(*video_state)->audioParam->out_buffer);
    av_free((*video_state)->audioParam);
    sws_freeContext((*video_state)->swsCtx);
    avcodec_free_context(&(*video_state)->vCodecCtx);
    // The pixel buffer came from av_image_alloc(), so av_frame_free() alone does not release it
    av_freep(&(*video_state)->videoParam->out_frame->data[0]);
    av_frame_free(&(*video_state)->videoParam->out_frame);
    av_free((*video_state)->videoParam);
    /* Release the queues */
    packet_queue_destroy(&(*video_state)->aQueue);
    packet_queue_destroy(&(*video_state)->vQueue);
    if ((*video_state)->formatCtx != NULL) {
        avformat_close_input(&(*video_state)->formatCtx); // also sets formatCtx to NULL
    }
    av_freep(video_state);
    return 1;
}

/* Initialize a queue */
int packet_queue_init(PacketQueue** q, size_t max_size) {
    *q = av_mallocz(sizeof(PacketQueue));
    if (!*q) {
        return -1;
    }
    // Create an AVFifo whose elements are MyAVPacketList entries; it grows automatically
    (*q)->pkt_list = av_fifo_alloc2(max_size, sizeof(MyAVPacketList), AV_FIFO_FLAG_AUTO_GROW);
    (*q)->size = 0;
    (*q)->mutex = SDL_CreateMutex();
    (*q)->cond = SDL_CreateCond();
    if (!(*q)->pkt_list) {
        return -1;
    }
    return 0;
}

/* Push onto the queue */
int packet_queue_push(PacketQueue* q, AVPacket* pkt) {
    MyAVPacketList pNode;
    if (!q || !pkt) {
        return -1;
    }
    AVPacket* pkt1 = av_packet_alloc();
    if (!pkt1) {
        av_packet_unref(pkt);
        return -1;
    }
    // Take our own reference so the caller can unref its packet right away
    if (av_packet_ref(pkt1, pkt) < 0) {
        av_packet_free(&pkt1);
        return -1;
    }
    pNode.pkt = pkt1;
    pNode.serial = 0;
    SDL_LockMutex(q->mutex);
    // Push the packet onto the FIFO
    if (av_fifo_write(q->pkt_list, &pNode, 1) < 0) {
        SDL_UnlockMutex(q->mutex);
        av_packet_free(&pkt1);
        return -1;
    }
    q->size++;
    SDL_CondSignal(q->cond);
    SDL_UnlockMutex(q->mutex);
    return 0;
}

/* Pop from the queue */
int packet_queue_pop(PacketQueue* q, AVPacket* pkt) {
    if (!q || !pkt) {
        return -1;
    }
    SDL_LockMutex(q->mutex);
    MyAVPacketList pNode;
    // Pop one element; while the queue is empty, block until a producer signals.
    // The read is retried after every wake-up, so pNode is never used unread.
    while (av_fifo_read(q->pkt_list, &pNode, 1) < 0) {
        SDL_CondWait(q->cond, q->mutex);
    }
    q->size--;
    av_packet_move_ref(pkt, pNode.pkt);
    av_packet_free(&pNode.pkt);
    SDL_UnlockMutex(q->mutex);
    return 0;
}

/* Destroy a queue */
void packet_queue_destroy(PacketQueue** q) {
    if ((*q) && (*q)->pkt_list) {
        // Free every AVPacket still in the queue
        MyAVPacketList pNode;
        SDL_LockMutex((*q)->mutex);
        while (av_fifo_read((*q)->pkt_list, &pNode, 1) >= 0) {
            av_packet_free(&pNode.pkt);
        }
        SDL_UnlockMutex((*q)->mutex);
        // Free the AVFifo itself
        (*q)->size = 0;
        av_fifo_freep2(&(*q)->pkt_list);
        SDL_DestroyMutex((*q)->mutex);
        SDL_DestroyCond((*q)->cond);
        av_freep(q);
    }
}

/* Get the picture type of a frame */
char* get_frame_type(AVFrame* frame) {
    switch (frame->pict_type) {
    case AV_PICTURE_TYPE_I:  return "I";
    case AV_PICTURE_TYPE_P:  return "P";
    case AV_PICTURE_TYPE_B:  return "B";
    case AV_PICTURE_TYPE_S:  return "S";
    case AV_PICTURE_TYPE_SI: return "SI";
    case AV_PICTURE_TYPE_SP: return "SP";
    case AV_PICTURE_TYPE_BI: return "BI";
    default:                 return "N";
    }
}
```

manager.c

```c
//
//  manager.c
//  sample_player
//
//  Created by chenhuaiyi on 2025/2/27.
//

#include "manager.h"

/* Set up audio output parameters */
int audio_output_set(VideoState* video_state) {
    AudioParam* audio_param = video_state->audioParam;
    // Output settings
    av_channel_layout_default(&audio_param->out_channel_layout, 2);
    audio_param->out_nb_samples = video_state->aCodecCtx->frame_size; // samples per channel per codec frame, e.g. AAC: 1024, MP3: 1152
    audio_param->out_sample_fmt = AV_SAMPLE_FMT_S16;  // sample format
    audio_param->out_sample_rate = 44100;             // sample rate
    audio_param->out_channels = audio_param->out_channel_layout.nb_channels; // channel count
    // Buffer size from channel count, samples per channel, and bit depth:
    // 1024 (samples per frame) * 2 (stereo) * 2 (16-bit = 2 bytes)
    audio_param->out_buffer_size = av_samples_get_buffer_size(NULL, audio_param->out_channels,
                                                              audio_param->out_nb_samples,
                                                              audio_param->out_sample_fmt, 1);
    // Allocate the buffer
    audio_param->out_buffer = NULL;
    av_samples_alloc(&audio_param->out_buffer, NULL, audio_param->out_channels,
                     audio_param->out_nb_samples, audio_param->out_sample_fmt, 1);
    return 1;
}

/* Set up video output parameters */
int video_output_set(VideoState* video_state) {
    VideoParam* video_param = video_state->videoParam;
    // Basic information
    video_param->width = video_state->vCodecCtx->width;
    video_param->height = video_state->vCodecCtx->height;
    video_param->pix_fmt = AV_PIX_FMT_YUV420P;
    // Compute the size of one frame and allocate its buffer
    video_param->num_bytes = av_image_get_buffer_size(AV_PIX_FMT_YUV420P, video_param->width, video_param->height, 1);
    video_param->out_frame = av_frame_alloc();
    av_image_alloc(video_param->out_frame->data, video_param->out_frame->linesize,
                   video_param->width, video_param->height, AV_PIX_FMT_YUV420P, 1);
    return 1;
}

/* Audio SDL setup */
int audio_sdl_set(VideoState* video_state, SDL_AudioSpec* wanted_spec, void (*fn)(void*, Uint8*, int)) {
    AudioParam* audio_param = video_state->audioParam;
    wanted_spec->freq = audio_param->out_sample_rate;    // sample rate
    wanted_spec->format = AUDIO_S16SYS;                  // sample format: signed 16-bit
    wanted_spec->channels = audio_param->out_channels;   // channel count
    wanted_spec->silence = 0;
    wanted_spec->samples = audio_param->out_nb_samples;  // device buffer size in samples per channel
    wanted_spec->callback = fn;                          // callback
    wanted_spec->userdata = video_state->aCodecCtx;      // argument passed to the callback
    return 1;
}

/* Video SDL setup: returns 1 on success, -1 on failure */
int video_sdl_set(VideoState* video_state, SDL_Window** window, SDL_Renderer** renderer, SDL_Texture** texture) {
    AVCodecContext* pCodecCtx = video_state->vCodecCtx;
    /* Window */
    *window = SDL_CreateWindow("SDL2 window", SDL_WINDOWPOS_CENTERED, SDL_WINDOWPOS_CENTERED,
                               pCodecCtx->width, pCodecCtx->height, SDL_WINDOW_SHOWN);
    if (!*window) {
        printf("SDL_CreateWindow Error: %s\n", SDL_GetError());
        SDL_Quit();
        return -1;
    }
    /* Renderer */
    *renderer = SDL_CreateRenderer(*window, -1, SDL_RENDERER_ACCELERATED | SDL_RENDERER_PRESENTVSYNC);
    if (!*renderer) {
        printf("SDL_CreateRenderer Error: %s\n", SDL_GetError());
        SDL_DestroyWindow(*window);
        SDL_Quit();
        return -1;
    }
    /* Texture */
    *texture = SDL_CreateTexture(*renderer, SDL_PIXELFORMAT_YV12, SDL_TEXTUREACCESS_STREAMING,
                                 pCodecCtx->width, pCodecCtx->height);
    return 1;
}
```

main.c

```c
//
//  main.c
//  sample_player
//
//  Created by chenhuaiyi on 2025/2/26.
//

#include "utils.h"
#include "manager.h"

AudioInfo audio_info;

/*
 * SDL audio callback
 * udata:  argument passed in through wanted_spec.userdata
 * stream: SDL's audio buffer to fill
 * len:    size of SDL's audio buffer in bytes
 */
void fill_audio(void *udata, Uint8 *stream, int len) {
    SDL_memset(stream, 0, len); // must be cleared first, otherwise stale data plays as buzzing noise
    if (audio_info.audio_len == 0) { // only mix when there is pending audio data
        return;
    }
    len = (len > audio_info.audio_len ? audio_info.audio_len : len); // copy at most what is pending
    SDL_MixAudio(stream, audio_info.audio_pos, len, SDL_MIX_MAXVOLUME);
    audio_info.audio_pos += len;
    audio_info.audio_len -= len;
}

/* Audio thread */
int audio_thread(void *arg) {
    /*
     * 1. Pop a packet from the packet queue
     * 2. Decode the packet
     * 3. Hand the converted samples to SDL's buffer
     */
    VideoState* video_state = (VideoState*) arg;
    AudioParam* audio_param = video_state->audioParam;
    PacketQueue* queue = video_state->aQueue;
    audio_param->index = 0;
    AVRational time_base = video_state->formatCtx->streams[video_state->audioStream]->time_base;
    int64_t av_start_time = av_gettime(); // playback start timestamp (microseconds)
    AVPacket packet;
    int ret;
    AVFrame* pFrame = av_frame_alloc();
    while (!video_state->isEnd) {
        if (queue->size > 0) {
            packet_queue_pop(queue, &packet);
            // Send the packet to the decoder
            ret = avcodec_send_packet(video_state->aCodecCtx, &packet);
            if (ret < 0) {
                printf("send packet error\n");
                av_packet_unref(&packet);
                break;
            }
            // Receive decoded frames
            while (!avcodec_receive_frame(video_state->aCodecCtx, pFrame)) {
                // Convert the sample format; out_count is the capacity in samples per channel, not bytes
                int out_samples = swr_convert(video_state->swrCtx, &audio_param->out_buffer,
                                              audio_param->out_nb_samples,
                                              (const uint8_t **)pFrame->data, pFrame->nb_samples);
                if (out_samples <= 0) {
                    continue;
                }
                // Bytes actually produced by this conversion
                int out_bytes = av_samples_get_buffer_size(NULL, audio_param->out_channels, out_samples,
                                                           audio_param->out_sample_fmt, 1);
                audio_param->index++;
                printf("frame %d | pts:%lld | frame size (samples):%d | actual time %.2fs | expected time %.2fs\n",
                       audio_param->index,
                       (long long)pFrame->pts,
                       pFrame->nb_samples,
                       (double)(av_gettime() - av_start_time) / AV_TIME_BASE,
                       pFrame->pts * av_q2d(time_base));
#if USE_SDL
                // Publish the converted data to the callback while holding the audio lock
                SDL_LockAudio();
                audio_info.audio_pos = (Uint8 *) audio_param->out_buffer;
                audio_info.audio_len = out_bytes;
                SDL_UnlockAudio();
                // Wait until SDL has played it (SDL_Delay takes whole milliseconds)
                while (audio_info.audio_len > 0)
                    SDL_Delay(1);
#endif
            }
            av_packet_unref(&packet);
        } else {
            break;
        }
    }
    av_frame_free(&pFrame);
    // Done
    video_state->isEnd = 1;
    return 0;
}

/* Video thread */
int video_thread(void *arg) {
    /*
     * 1. Pop a packet from the video packet queue
     * 2. Send it to the decoder and receive frames
     * 3. Hand each frame to the main thread for SDL rendering
     * 4. Sleep according to the frame's pts (SDL_Delay)
     */
    VideoState* video_state = (VideoState*) arg;
    PacketQueue* video_queue = video_state->vQueue;
    AVCodecContext* pCodecCtx = video_state->vCodecCtx;
    AVFrame* out_frame = video_state->videoParam->out_frame;
    AVPacket packet;
    AVFrame* pFrame = av_frame_alloc();
    AVRational time_base = video_state->formatCtx->streams[video_state->videoStream]->time_base;
    int64_t av_start_time = av_gettime(); // playback start time (microseconds)
    int64_t frame_start_time = av_gettime();
    while (!video_state->isEnd) {
        if (video_queue->size > 0) {
            packet_queue_pop(video_queue, &packet);
            // Send the packet to the decoder
            int ret = avcodec_send_packet(pCodecCtx, &packet);
            if (ret < 0) {
                printf("packet resolve error!\n");
                av_packet_unref(&packet);
                break;
            }
            // Receive raw frames from the decoder
            while (!avcodec_receive_frame(pCodecCtx, pFrame)) {
                // Compute the delay: rescale pts to microseconds in one step so rounding does not accumulate
                int64_t pts_us = av_rescale_q(pFrame->pts, time_base, AV_TIME_BASE_Q);
                int64_t target_time = av_start_time + pts_us; // wall-clock time at which this frame is due
                int64_t current_time = av_gettime();
                if (target_time > current_time) {
                    SDL_Delay((Uint32)((target_time - current_time) / 1000)); // sleep until the frame is due
                }
                // Do not overwrite out_frame until the main thread has rendered the previous frame
                while (video_state->videoParam->frame_update && !video_state->isEnd) {
                    SDL_Delay(1);
                }
                // Convert the frame to YUV420P
                sws_scale(video_state->swsCtx,                    // conversion context
                          (uint8_t const * const *)pFrame->data,  // input data
                          pFrame->linesize,                       // input bytes per row (aligned)
                          0,                                      // first input row
                          pCodecCtx->height,                      // number of input rows
                          out_frame->data,                        // output data
                          out_frame->linesize);                   // output linesize
                // Tell the main thread that a new frame is ready
                video_state->videoParam->frame_update = 1;
                video_state->videoParam->index++;
                printf("frame %i | type %s | pts %d | interval %.2fms | actual time %.2fs | expected time %.2fs\n",
                       video_state->videoParam->index,
                       get_frame_type(pFrame),
                       (int)pFrame->pts,
                       (double)(av_gettime() - frame_start_time) / 1000,
                       (double)(av_gettime() - av_start_time) / AV_TIME_BASE,
                       pFrame->pts * av_q2d(time_base));
                frame_start_time = av_gettime();
            }
            av_packet_unref(&packet);
        } else {
            break;
        }
    }
    av_frame_free(&pFrame);
    // Done
    video_state->isEnd = 1;
    return 1;
}

int main(int argc, char* argv[])
{
    VideoState* video_state;
    AudioParam* audio_param;
    VideoParam* video_param;
    SDL_Event event;
    SDL_Rect rect;
    if (argc < 2) {
        fprintf(stderr, "Usage: test <file>\n");
        exit(1);
    }
    /* Initialization */
    init_video_state(&video_state);
    audio_param = video_state->audioParam;
    video_param = video_state->videoParam;
    avformat_network_init();
    // 1. Open the input file and get the format context
    if (avformat_open_input(&video_state->formatCtx, argv[1], NULL, NULL) != 0) {
        printf("Couldn't open input stream.\n");
        return -1;
    }
    // 2. Probe the file for stream information
    if (avformat_find_stream_info(video_state->formatCtx, NULL) < 0) {
        printf("Couldn't find stream information.\n");
        return -1;
    }
    // Print stream information
    av_dump_format(video_state->formatCtx, 0, argv[1], 0);
    // 3. Find the audio/video stream indices
    video_state->audioStream = -1;
    video_state->videoStream = -1;
    for (int i = 0; i < video_state->formatCtx->nb_streams; i++) {
        if (video_state->formatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO) {
            video_state->audioStream = i;
        }
        if (video_state->formatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_VIDEO) {
            video_state->videoStream = i;
        }
    }
    if (video_state->audioStream == -1) {
        printf("Didn't find an audio stream.\n");
        return -1;
    }
    if (video_state->videoStream == -1) {
        printf("Didn't find a video stream.\n");
        return -1;
    }
    // 4. Copy the codec parameters of the audio/video streams into the codec contexts
    AVCodecParameters* aCodecParam = video_state->formatCtx->streams[video_state->audioStream]->codecpar;
    avcodec_parameters_to_context(video_state->aCodecCtx, aCodecParam);
    // avcodec_parameters_free(&aCodecParam); // not needed: codecpar is owned by the stream
    AVCodecParameters* vCodecParam = video_state->formatCtx->streams[video_state->videoStream]->codecpar;
    avcodec_parameters_to_context(video_state->vCodecCtx, vCodecParam);
    // avcodec_parameters_free(&vCodecParam);
    // 5. Find the decoders for the streams
    video_state->aCodec = avcodec_find_decoder(video_state->aCodecCtx->codec_id);
    if (video_state->aCodec == NULL) {
        printf("Audio codec not found.\n");
        return -1;
    }
    video_state->vCodec = avcodec_find_decoder(video_state->vCodecCtx->codec_id);
    if (video_state->vCodec == NULL) {
        printf("Video codec not found.\n");
        return -1;
    }
    // 6. Open the decoders
    if (avcodec_open2(video_state->aCodecCtx, video_state->aCodec, NULL) < 0) {
        printf("Could not open audio codec.\n");
        return -1;
    }
    if (avcodec_open2(video_state->vCodecCtx, video_state->vCodec, NULL) < 0) {
        printf("Could not open video codec.\n");
        return -1;
    }
    /* Set up audio output parameters */
    audio_output_set(video_state);
    /* Set up video output parameters */
    video_output_set(video_state);

    // SDL initialization
#if USE_SDL
    // macOS-specific hints, set before SDL_Init() so they apply during initialization
    SDL_SetHint(SDL_HINT_VIDEO_MAC_FULLSCREEN_SPACES, "0");
    SDL_SetHint(SDL_HINT_MAC_BACKGROUND_APP, "1");
    if (SDL_Init(SDL_INIT_VIDEO | SDL_INIT_AUDIO | SDL_INIT_TIMER)) {
        printf("Could not initialize SDL - %s\n", SDL_GetError());
        return -1;
    }
    /* Audio SDL device setup */
    SDL_AudioSpec wanted_spec;
    // audio_sdl_set(video_state, &wanted_spec, fill_audio);
    wanted_spec.freq = audio_param->out_sample_rate;    // sample rate
    wanted_spec.format = AUDIO_S16SYS;                  // sample format: signed 16-bit
    wanted_spec.channels = audio_param->out_channels;   // channel count
    wanted_spec.silence = 0;
    wanted_spec.samples = audio_param->out_nb_samples;  // device buffer size in samples per channel
    wanted_spec.callback = fill_audio;                  // callback
    wanted_spec.userdata = video_state->aCodecCtx;      // argument passed to the callback
    /* Video SDL device setup */
    SDL_Window* window = NULL;
    SDL_Renderer* renderer = NULL;
    SDL_Texture* texture = NULL;
    // video_sdl_set(video_state, &window, &renderer, &texture);
    /* Window */
    window = SDL_CreateWindow("SDL2 window", SDL_WINDOWPOS_CENTERED, SDL_WINDOWPOS_CENTERED,
                              video_state->vCodecCtx->width, video_state->vCodecCtx->height,
                              SDL_WINDOW_SHOWN);
    if (!window) {
        printf("SDL_CreateWindow Error: %s\n", SDL_GetError());
        SDL_Quit();
        return 1;
    }
    /* Renderer */
    renderer = SDL_CreateRenderer(window, -1, SDL_RENDERER_ACCELERATED | SDL_RENDERER_PRESENTVSYNC);
    if (!renderer) {
        printf("SDL_CreateRenderer Error: %s\n", SDL_GetError());
        SDL_DestroyWindow(window);
        SDL_Quit();
        return 1;
    }
    /* Texture */
    texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YV12, SDL_TEXTUREACCESS_STREAMING,
                                video_state->vCodecCtx->width, video_state->vCodecCtx->height);
    // Open the audio device
    if (SDL_OpenAudio(&wanted_spec, NULL) < 0) {
        printf("can't open audio.\n");
        return -1;
    }
#endif
    // Audio resampling (sample-format conversion) context
    swr_alloc_set_opts2(&video_state->swrCtx,
                        &audio_param->out_channel_layout,     // output layout
                        audio_param->out_sample_fmt,          // output sample format
                        audio_param->out_sample_rate,         // output sample rate
                        &video_state->aCodecCtx->ch_layout,   // input layout
                        video_state->aCodecCtx->sample_fmt,   // input sample format
                        video_state->aCodecCtx->sample_rate,  // input sample rate
                        0, NULL);
    swr_init(video_state->swrCtx);
    // Video pixel-format conversion context
    video_state->swsCtx = sws_getContext(video_state->vCodecCtx->width,    // src width
                                         video_state->vCodecCtx->height,   // src height
                                         video_state->vCodecCtx->pix_fmt,  // src pixel format
                                         video_param->width,               // dst width
                                         video_param->height,              // dst height
                                         video_param->pix_fmt,             // dst pixel format
                                         SWS_BILINEAR, NULL, NULL, NULL);
    // Start playback (unpause the audio device)
    SDL_PauseAudio(0);
    int64_t av_start_time = av_gettime(); // playback start timestamp
    AVPacket* packet = av_packet_alloc(); // packet allocation
    // Loop 1: read packets from the file
    while (av_read_frame(video_state->formatCtx, packet) >= 0) {
        /* Push into the audio packet queue */
        if (packet->stream_index == video_state->audioStream) {
            packet_queue_push(video_state->aQueue, packet);
        }
        /* Push into the video packet queue */
        if (packet->stream_index == video_state->videoStream) {
            packet_queue_push(video_state->vQueue, packet);
        }
        // packet_queue_push() takes its own reference, so drop ours
        av_packet_unref(packet);
        // Only inspect `event` when SDL_PollEvent() actually returned one
        while (SDL_PollEvent(&event)) {
            if (event.type == SDL_QUIT) {
                SDL_Quit();
                exit(0);
            }
        }
    }
    printf("audio queue.size=%d\n", video_state->aQueue->size);
    // Create and start the decoding threads
    SDL_Thread* audio_tid = SDL_CreateThread(audio_thread, "audio_thread", video_state);
    SDL_Thread* video_tid = SDL_CreateThread(video_thread, "video_thread", video_state);
    // video_thread(video_state);
    AVFrame* out_frame = NULL;
    while (!video_state->isEnd) {
        // Handle events (must be done on the main thread)
        while (SDL_PollEvent(&event)) {
            if (event.type == SDL_QUIT) {
                video_state->isEnd = 1;
            }
        }
        if (video_state->videoParam->frame_update) {
            // Upload the AVFrame data into the texture, then render it to the window
            rect.x = 0;
            rect.y = 0;
            rect.w = video_state->vCodecCtx->width;
            rect.h = video_state->vCodecCtx->height;
            out_frame = video_state->videoParam->out_frame;
            // Update the texture
            SDL_UpdateYUVTexture(texture, &rect,
                                 out_frame->data[0], out_frame->linesize[0],  // Y
                                 out_frame->data[1], out_frame->linesize[1],  // U
                                 out_frame->data[2], out_frame->linesize[2]); // V
            // Present the frame
            SDL_RenderClear(renderer);
            SDL_RenderCopy(renderer, texture, NULL, NULL);
            SDL_RenderPresent(renderer);
            // Reset the flag so the decoding thread can write the next frame
            video_state->videoParam->frame_update = 0;
        } else {
            SDL_Delay(1); // avoid spinning a CPU core while waiting for a frame
        }
    }
    // Wait for both decoding threads to exit before freeing anything they use
    SDL_WaitThread(audio_tid, NULL);
    SDL_WaitThread(video_tid, NULL);
    // Print parameters
    printf("Format: %s\n", video_state->formatCtx->iformat->name);
    printf("Duration: %lld us\n", (long long)video_state->formatCtx->duration);
    printf("Audio played for %.2f s, total audio frames: %d\n",
           (double)(av_gettime() - av_start_time) / AV_TIME_BASE, audio_param->index);
    printf("Bit rate: %lld\n", (long long)video_state->formatCtx->bit_rate);
    printf("Codec: %s (%s)\n", video_state->aCodecCtx->codec->long_name,
           avcodec_get_name(video_state->aCodecCtx->codec_id));
    printf("Channels: %d\n", video_state->aCodecCtx->ch_layout.nb_channels);
    printf("Sample rate: %d\n", video_state->aCodecCtx->sample_rate);
    printf("Samples per channel per frame: %d\n", video_state->aCodecCtx->frame_size);
    printf("pts unit (microseconds): %.2f\n",
           av_q2d(video_state->formatCtx->streams[video_state->audioStream]->time_base) * AV_TIME_BASE);
    // Free resources
    av_packet_free(&packet);
#if USE_SDL
    SDL_CloseAudio();
    SDL_DestroyTexture(texture);
    SDL_DestroyRenderer(renderer);
    SDL_DestroyWindow(window);
    SDL_Quit();
#endif
    destory_video_state(&video_state);
    return 0;
}
```

Conclusion and Outlook

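Finally done! Congratulations: you have built a simple audio/video player, albeit a very rough one with plenty of problems. Over the next few installments, we will improve this simple player together. I'm still a beginner myself, so we'll take it one step at a time, haha. Here are a few questions to start with:

- How do we synchronize the audio and video clocks?

- How can we decode and play frames while packets are still being read, instead of reading the whole file first?

- How do we convert the decoded output frames?

P.S. I encourage everyone to read the ffplay source code; it answers all of these questions, hahaha!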
