LSTM在股價數據集上的預測實戰

- 使用完整的JPX賽題數據,并向大家提供完整的lstm流程。

導包

import numpy as np #數據處理

import pandas as pd #數據處理

import matplotlib as mlp

import matplotlib.pyplot as plt #繪圖

from sklearn.preprocessing import MinMaxScaler #·數據預處理

from sklearn.metrics import mean_squared_error

import torch

import torch.nn as nn #導入pytorch中的基本類

from torch.autograd import Variable

from torch.utils.data import DataLoader, TensorDataset

import torch.optim as optim

import torch.utils.data as data

# typing 模塊提供了一些類型,輔助函數中的參數類型定義

from typing import Union,List,Tuple,Iterable

from sklearn.preprocessing import LabelEncoder,MinMaxScaler

from decimal import ROUND_HALF_UP, Decimal

一、數據加載與處理

# 一、數據加載與處理

# 1、查看數據集信息

stock= pd.read_csv('stock_prices.csv') # (2332531,12)

stock_list = pd.read_csv('stock_list.csv') # (4417,16)stock["SecuritiesCode"].unique().__len__() #2000支股票# 2、為了效率我們抽取其中的10支股票

selected_codes = stock['SecuritiesCode'].drop_duplicates().sample(n=10)

stock = stock[stock['SecuritiesCode'].isin(selected_codes)] # (9833,12)

stock["SecuritiesCode"].unique().__len__() #只有10支股票了stock.isnull().sum() #查看缺失值# 3、預處理數據集

#將Target名字修改為Sharpe Ratio

stock.rename(columns={'Target': 'Sharpe Ratio'}, inplace=True)#將Close列添加到最后

close_col = stock.pop('Close')

stock.loc[:,'Close'] = close_col#填補Dividend缺失值、刪除具有缺失值的行

stock["ExpectedDividend"] = stock["ExpectedDividend"].fillna(0)

stock.dropna(inplace=True)#恢復索引

stock.index = range(stock.shape[0])

二、數據分割與數據重組

# 二、數據分割與數據重組

# 1、數據分割

train_size = int(len(stock) * 0.67)

test_size = len(stock) - train_size

train, test = stock[:train_size], stock[train_size:] # train (6580,12) test(3242,12)# 2、帶標簽滑窗

def create_multivariate_dataset_2(dataset, window_size, pred_len): # """將多變量時間序列轉變為能夠用于訓練和預測的數據【帶標簽的滑窗】參數:dataset: DataFrame,其中包含特征和標簽,特征從索引3開始,最后一列是標簽window_size: 滑窗的窗口大小pred_len:多步預測的預測范圍/預測步長"""X, y, y_indices = [], [], []for i in range(len(dataset) - window_size - pred_len + 1): # (len-ws-pl+1) --> (6580-30-5+1) = 6546# 選取從第4列到最后一列的特征和標簽feature_and_label = dataset.iloc[i:i + window_size, 3:].values # (ws,fs_la) --> (30,9)# 下一個時間點的標簽作為目標target = dataset.iloc[(i + window_size):(i + window_size + pred_len), -1] # pred_len --> 5# 記錄本窗口中要預測的標簽的時間點target_indices = list(range(i + window_size, i + window_size + pred_len)) # pl*(len-ws-pl+1) --> 5*6546 = 32730 X.append(feature_and_label)y.append(target)#將每個標簽的索引添加到y_indices列表中y_indices.extend(target_indices)X = torch.FloatTensor(np.array(X, dtype=np.float32))y = torch.FloatTensor(np.array(y, dtype=np.float32))return X, y, y_indices# 3、數據重組

window_size = 30 #窗口大小

pred_len = 5 #多步預測的步數X_train_2, y_train_2, y_train_indices = create_multivariate_dataset_2(train, window_size, pred_len) # x(6546,30,9) y(6546,5) (32730,)

X_test_2, y_test_2, y_test_indices = create_multivariate_dataset_2(test, window_size, pred_len) # x(3208,30,9) y(3208,5) (16040,)

三、網絡架構與參數設置

# 三、網絡架構與參數設置

# 1、定義架構

class MyLSTM(nn.Module):def __init__(self,input_dim, seq_length, output_size, hidden_size, num_layers):super().__init__()self.lstm = nn.LSTM(input_size=input_dim, hidden_size=hidden_size, num_layers=num_layers, batch_first=True)self.linear = nn.Linear(hidden_size, output_size)def forward(self, x):x, _ = self.lstm(x)#現在我要的是最后一個時間步,而不是全部時間步了x = self.linear(x[:,-1,:])return x# 2、參數設置

input_size = 9 #輸入特征的維度

hidden_size = 20 #LSTM隱藏狀態的維度

n_epochs = 2000 #迭代epoch

learning_rate = 0.001 #學習率

num_layers = 1 #隱藏層的層數

output_size = 5#設置GPU

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(device)# 加載數據,將數據分批次

loader = data.DataLoader(data.TensorDataset(X_train_2, y_train_2), shuffle=True, batch_size=8) # 3、實例化模型

model = MyLSTM(input_size, window_size, pred_len,hidden_size, num_layers).to(device)

optimizer = optim.Adam(model.parameters(),lr=learning_rate) #定義優化器

loss_fn = nn.MSELoss() #定義損失函數

loader = data.DataLoader(data.TensorDataset(X_train_2, y_train_2)#每個表單內部是保持時間順序的即可,表單與表單之間可以shuffle, shuffle=True, batch_size=8) #將數據分批次

四、實際訓練流程

# 四、實際訓練流程

# 初始化早停參數

early_stopping_patience = 3 # 設置容忍的epoch數,即在這么多epoch后如果沒有改進就停止

early_stopping_counter = 0 # 用于跟蹤沒有改進的epoch數

best_train_rmse = float('inf') # 初始化最佳的訓練RMSEtrain_losses = []

test_losses = []for epoch in range(n_epochs):model.train()for X_batch, y_batch in loader:y_pred = model(X_batch.to(device))loss = loss_fn(y_pred, y_batch.to(device))optimizer.zero_grad()loss.backward()optimizer.step()#驗證與打印if epoch % 10 == 0:model.eval()with torch.no_grad():y_pred = model(X_train_2.to(device)).cpu()train_rmse = np.sqrt(loss_fn(y_pred, y_train_2))y_pred = model(X_test_2.to(device)).cpu()test_rmse = np.sqrt(loss_fn(y_pred, y_test_2))print("Epoch %d: train RMSE %.4f, test RMSE %.4f" % (epoch, train_rmse, test_rmse))# 將當前epoch的損失添加到列表中train_losses.append(train_rmse)test_losses.append(test_rmse)# 早停檢查if train_rmse < best_train_rmse:best_train_rmse = train_rmseearly_stopping_counter = 0 # 重置計數器else:early_stopping_counter += 1 # 增加計數器if early_stopping_counter >= early_stopping_patience:print(f"Early stopping triggered after epoch {epoch}. Training RMSE did not decrease for {early_stopping_patience} consecutive epochs.")break # 跳出訓練循環

結果顯示:

Epoch 0: train RMSE 1470.9308, test RMSE 1692.0652

Epoch 5: train RMSE 1415.7896, test RMSE 1639.1147

Epoch 10: train RMSE 1364.8196, test RMSE 1590.2207

......

Epoch 100: train RMSE 654.3458, test RMSE 904.7958

Epoch 105: train RMSE 638.2536, test RMSE 886.3511

Epoch 110: train RMSE 625.7336, test RMSE 870.9800

......

Epoch 200: train RMSE 598.3364, test RMSE 820.4078

Epoch 205: train RMSE 598.3354, test RMSE 820.3406

Epoch 210: train RMSE 598.3349, test RMSE 820.2874

......

Epoch 260: train RMSE 598.3341, test RMSE 820.1312

Epoch 265: train RMSE 598.3341, test RMSE 820.1294

Early stopping triggered after epoch 265. Training RMSE did not decrease for 3 consecutive epochs.

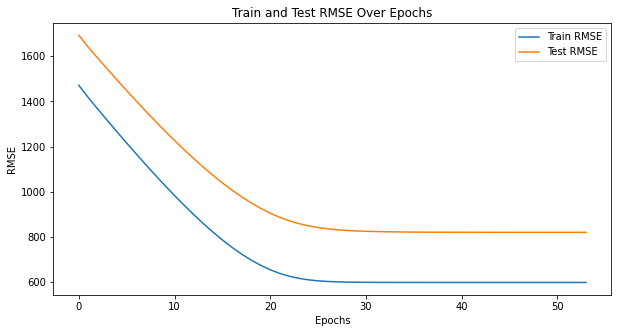

五、可視化結果

# 五、可視化結果

# 1、損失曲線

plt.figure(figsize=(10, 5))

plt.plot(train_losses, label='Train RMSE')

plt.plot(test_losses, label='Test RMSE')

plt.xlabel('Epochs')

plt.ylabel('RMSE')

plt.title('Train and Test RMSE Over Epochs')

plt.legend()

plt.show()

結果分析:預測效果不是很好,考慮進行數據預處理和特征工程

【擴展】股票數據的數據預處理與特征工程(后續更新~)

【8-18】)

—— 抽象類、接口、Object類和內部類)