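Embodied-Reasoner is a multimodal embodied model that extends o1's deep reasoning capabilities to embodied interactive tasks.

It can carry out complex tasks in the AI2-THOR simulator, such as searching for hidden objects and manipulating and transporting items.

It offers the following capabilities:

- 🤔 Deep reasoning: analysis, spatial reasoning, reflection, and planning

- 🔄 Interleaved multimodal processing, in particular long sequences of interleaved image-text context

- 🏠 Environment interaction: it can autonomously observe its surroundings, explore rooms, and find hidden objects

- Open-source models released in 7B and 2B sizes

- Open-source dataset on 🤗 Hugging Face: 9.3k interleaved observation-reasoning-action trajectories, including 64K images and 8M thought tokens

This post walks through reproducing Embodied-Reasoner: model inference, task generation, and data generation.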

1. Create the Conda environment

First create a Conda environment named embodied-reasoner with Python 3.9, then activate it:

conda create -n embodied-reasoner python=3.9

conda activate embodied-reasoner

Then download the code and enter the project directory: https://github.com/zwq2018/embodied_reasoner

git clone https://github.com/zwq2018/embodied_reasoner.git

cd embodied_reasoner

2. Install the ai2thor simulator and related dependencies

Edit requirements.txt so that it contains the following:

ai2thor==5.0.0

Flask==3.1.0

opencv-python==4.7.0.72

accelerate==1.3.0

FlagEmbedding==1.3.4

openai==1.60.0

opencv-python-headless==4.11.0.86

peft==0.14.0

qwen-vl-utils==0.0.8

safetensors==0.5.2

sentence-transformers==3.4.1

sentencepiece==0.2.0

tiktoken==0.7.0

tokenizers==0.21.0

Then install the dependencies:

pip install -r requirements.txt

3. Install torch and torchvision

First check the CUDA version with nvcc -V; the system used here has CUDA 12.1:

(embodied-reasoner) lgp@lgp-MS-7E07:~/2025_project/embodied_reasoner$ nvcc -V

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2023 NVIDIA Corporation

Built on Mon_Apr__3_17:16:06_PDT_2023

Cuda compilation tools, release 12.1, V12.1.105

Build cuda_12.1.r12.1/compiler.32688072_0

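Then install the torch build that matches the CUDA version:

pip install torch==2.4.0+cu121 torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu121

flash_attn, installed in the next step, is compiled against CUDA and torch, so the versions here must match.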
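To make the version matching concrete, here is a minimal Python sketch that extracts the release number from `nvcc -V` output and derives the matching wheel suffix. The helper names `parse_nvcc_release`, `wheel_tag`, and `torch_matches_cuda` are made up for illustration, and the sketch assumes that CUDA builds of torch report a version string of the form `2.4.0+cu121`, as in the pip command above.

```python
import re


def parse_nvcc_release(nvcc_output):
    """Return (major, minor) from the 'release X.Y' part of `nvcc -V` output."""
    match = re.search(r"release (\d+)\.(\d+)", nvcc_output)
    if match is None:
        raise ValueError("no 'release X.Y' found in nvcc output")
    return int(match.group(1)), int(match.group(2))


def wheel_tag(release):
    """Map (12, 1) to the wheel suffix 'cu121'."""
    major, minor = release
    return f"cu{major}{minor}"


def torch_matches_cuda(torch_version, release):
    """Assumption: CUDA wheels carry a '+cuXYZ' local version suffix."""
    return torch_version.endswith("+" + wheel_tag(release))
```

In practice the first argument would come from running `nvcc -V` and the torch version from `torch.__version__`; a mismatch is a hint to reinstall torch before building flash_attn.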

4. Install flash_attn

Run the following command:

pip install flash_attn==2.7.4.post1

A successful install prints:

Building wheel for flash_attn (setup.py) ... done

Created wheel for flash_attn: filename=flash_attn-2.7.4.post1-cp39-cp39-linux_x86_64.whl size=187787224 sha256=8cbee35b7faaad89436c8855e5de8881f5b04962cf066e6bc12a81947dddbe4c

Stored in directory: /home/lgp/.cache/pip/wheels/a4/e3/79/560592cf99bd2bd893a372eee64a31c0bd903bc236a1a98e00

Successfully built flash_attn

Installing collected packages: flash_attn

Successfully installed flash_attn-2.7.4.post1

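5. Install missing packages

At run time a few libraries turned out to be missing (matplotlib, huggingface_hub, and others), so install them:

pip install matplotlib huggingface_hub openai

Vulkan is also required; it is used for visualization.

# Install the Vulkan tools and runtime

sudo apt update

sudo apt install vulkan-tools vulkan-utils mesa-vulkan-drivers libvulkan-dev

# Verify the Vulkan installation

vulkaninfo --summary

6. Download the Qwen (通義千問) model weights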

This walkthrough uses Qwen2.5-VL-3B-Instruct; other models work as well.

Download it with huggingface_hub by running:

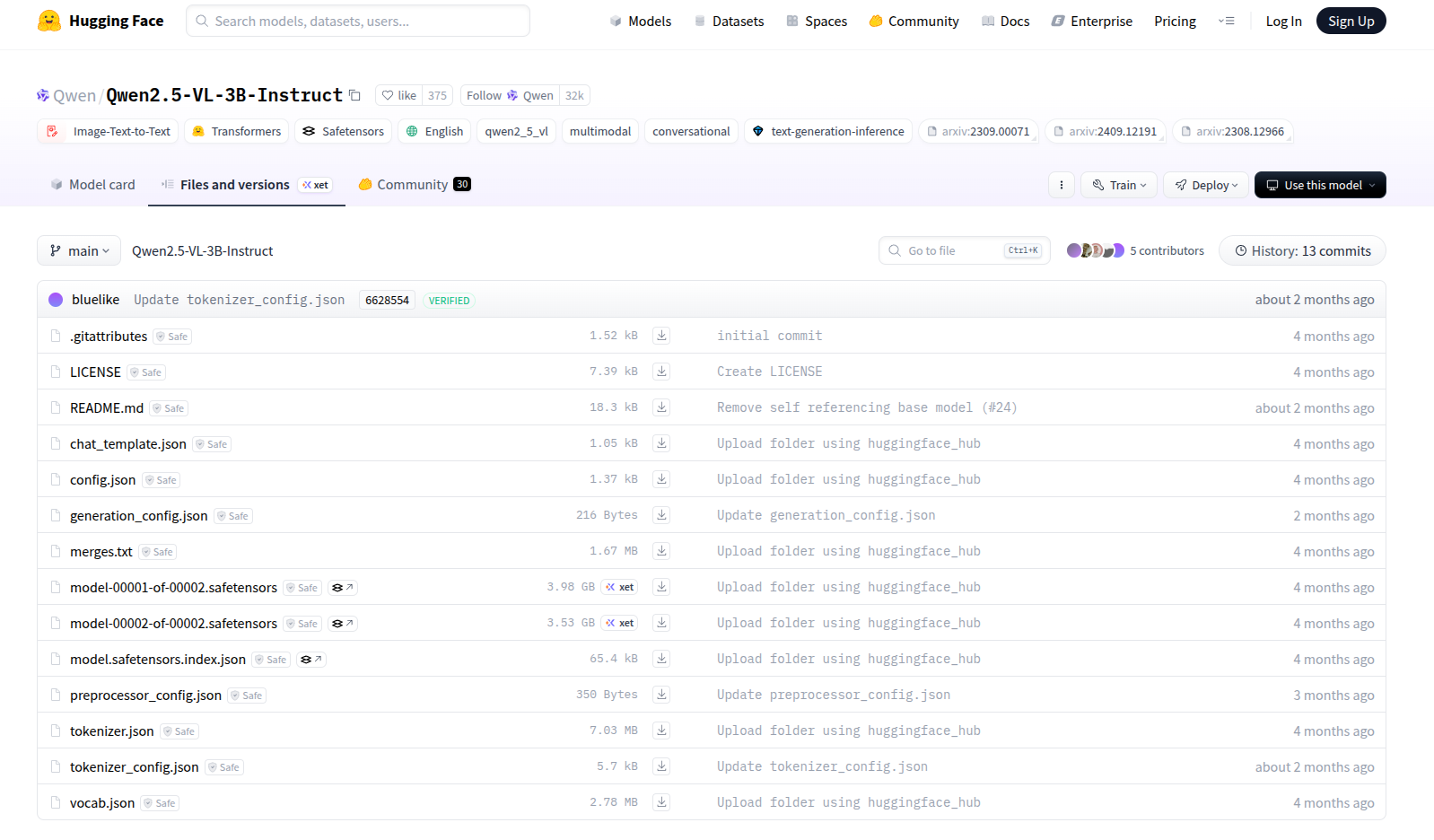

huggingface-cli download --resume-download Qwen/Qwen2.5-VL-3B-Instruct --local-dir ./Qwen2.5-VL-3B-Instruct

Model page: https://huggingface.co/Qwen/Qwen2.5-VL-3B-Instruct/tree/main

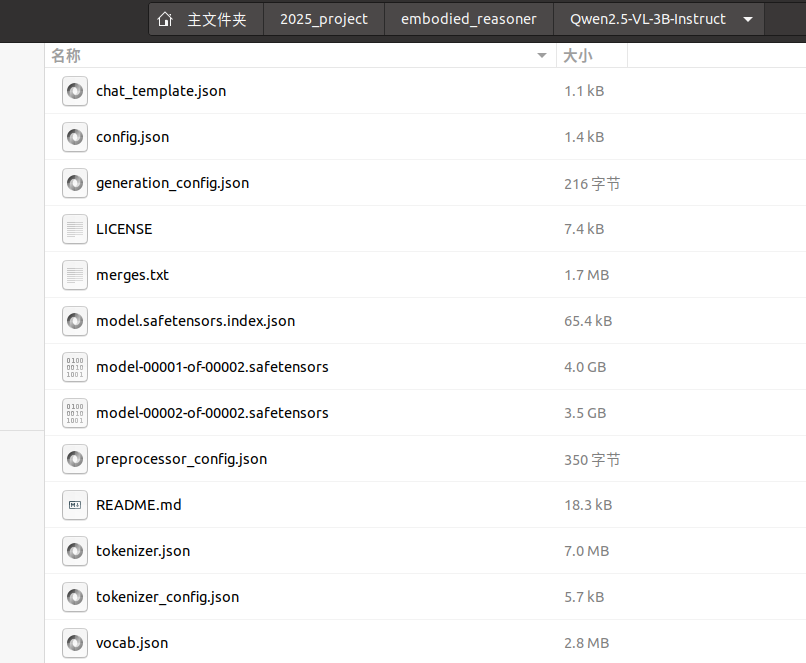

Once the download finishes, the directory structure is as shown in the figure.

Other models can be found at: https://www.modelscope.cn/models
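After the download, a quick sanity check of the local directory can catch an interrupted transfer before the model server is started. The sketch below is only an illustration: `check_model_dir` is a hypothetical helper, and it assumes the checkpoint follows the usual Hugging Face layout with a `config.json` and one or more `*.safetensors` weight files.

```python
from pathlib import Path


def check_model_dir(model_dir):
    """Return a list of problems found in a downloaded checkpoint directory."""
    root = Path(model_dir)
    problems = []
    # Assumption: the model config lives in config.json at the top level.
    if not (root / "config.json").is_file():
        problems.append("missing config.json")
    # Assumption: weights are stored as one or more .safetensors shards.
    if not list(root.glob("*.safetensors")):
        problems.append("no *.safetensors weight files")
    return problems
```

Calling `check_model_dir("./Qwen2.5-VL-3B-Instruct")` should return an empty list when both kinds of files are present.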

7. Modify the configuration

First edit evaluate/VLMCall.py, around line 52:

Replace the api_key value with your own ModelScope SDK Token.

while retry_count < retry_limit:
    try:
        t1 = time.time()
        # import pdb;pdb.set_trace()
        print(f"********* start call {self.model} *********")
        api_key = random.choice(moda_keys)
        client = OpenAI(
            api_key="xxxxxxxxxxxxxxx",  # replace with your ModelScope SDK Token
            base_url="https://api-inference.modelscope.cn/v1"
        )
        if self.model == "Qwen/Qwen2-VL-7B-Instruct":
            max_tokens = 2000
        outputs = client.chat.completions.create(
            model=self.model,
            stream=False,
            messages=messages,
            temperature=0.9,
            max_tokens=max_tokens
        )
        # ... (the rest of the retry loop is unchanged)

ModelScope SDK token page: https://www.modelscope.cn/my/myaccesstoken

Click "新建 SDK/API 令牌" (create a new SDK/API token), then copy the token into the code.
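Pasting the token into the source file works, but it is easy to commit by accident. As an alternative, here is a minimal sketch that reads the token from an environment variable and builds the keyword arguments for the OpenAI-compatible client. The variable name `MODELSCOPE_SDK_TOKEN` and the helper `modelscope_client_kwargs` are assumptions for illustration and are not part of the repository.

```python
import os

# Same endpoint as in evaluate/VLMCall.py.
MODELSCOPE_BASE_URL = "https://api-inference.modelscope.cn/v1"


def modelscope_client_kwargs(env_var="MODELSCOPE_SDK_TOKEN"):
    """Build api_key/base_url kwargs from an environment variable (hypothetical name)."""
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"Set {env_var} to your ModelScope SDK Token first")
    return {"api_key": token, "base_url": MODELSCOPE_BASE_URL}
```

The client construction would then become `client = OpenAI(**modelscope_client_kwargs())`, after running `export MODELSCOPE_SDK_TOKEN=...` in the shell.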

8. Synthesize tasks and trajectories

Start in the data_engine folder, which is used to synthesize tasks and trajectories. Its key files are:

data_engine/                        # core data engine directory
├── taskgenerate/                   # task generation dataset
│   ├── bathrooms/                  # bathroom scene data
│   ├── bedrooms/                   # bedroom scene data
│   ├── kitchens/                   # kitchen scene data
│   ├── living_rooms/               # living room scene data
│   └── pick_up_and_put.json        # pick-up-and-place task template
│
├── TaskGenerate.py                 # main task synthesis script (generates composite task flows)
├── o1StyleGenerate.py              # standard trajectory generation script (single-task trajectories)
├── o1StyleGenerate_ordered.py      # complex trajectory generation script (ordered multi-step tasks)
├── vlmCall.py                      # vision-language model call interface (wraps the VLM interaction logic)
└── vlmCallapi_keys.py              # VLM API key configuration (set your credentials here)

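Step 1. Generate tasks

TaskGenerate.py synthesizes task templates together with their key actions.

The generated task data is stored in <tasktype>_metadata folders under data_engine.

Run the following to generate tasks:

python TaskGenerate.py

Run output: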

(embodied-reasoner) lgp@lgp-MS-7E07:~/2025_project/embodied_reasoner/data_engine$ python TaskGenerate.py

save json data to path: single_search_task_metadata/FloorPlan1.json

save json data to path: single_search_task_metadata/FloorPlan2.json

save json data to path: single_search_task_metadata/FloorPlan3.json

save json data to path: single_search_task_metadata/FloorPlan4.json

save json data to path: single_search_task_metadata/FloorPlan5.json

save json data to path: single_search_task_metadata/FloorPlan6.json

....

save json data to path: single_search_task_metadata/FloorPlan429.json

save json data to path: single_search_task_metadata/FloorPlan430.json

Here is one JSON example, to show what the files contain:

[
  [
    {
      "taskname": "Identify the Apple in the room.",
      "tasktype": "single_search",
      "metadatapath": "taskgenerate/kitchens/FloorPlan11/metadata.json",
      "actions": [
        {
          "action": "navigate to",
          "objectId": "CounterTop|+00.28|+00.95|+00.46",
          "objectType": "CounterTop",
          "baseaction": "",
          "reward": 1,
          "relatedObject": [
            "CounterTop|+00.28|+00.95|+00.46",
            "Apple|-00.05|+00.95|+00.30"
          ]
        },
        {
          "action": "end",
          "objectId": "",
          "objectType": "",
          "baseaction": "",
          "reward": 1,
          "relatedObject": [
            "CounterTop|+00.28|+00.95|+00.46",
            "Apple|-00.05|+00.95|+00.30"
          ]
        }
      ],
      "totalreward": 2
    }
  ]
]

A brief breakdown of the JSON:

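1. Task metadata

| Field | Meaning |
|---|---|
| taskname | Task description: "Identify the Apple in the room." (the task goal in natural language) |
| tasktype | Task type: single_search (a single-target search task, as opposed to multi-target search) |
| metadatapath | Metadata path: points to the JSON file holding the scene layout, object attributes, and so on (here a kitchen scene) |

2. Action sequence (actions)

- Action 1: navigate to the target location
  - action: "navigate to" (navigation action type)
  - objectId: CounterTop|+00.28|+00.95|+00.46 (target object ID, in the form type|x|y|z)
  - objectType: CounterTop (object type: kitchen countertop)
  - relatedObject: list of related objects (the countertop and the apple on it, possibly used for visual grounding)
  - reward: 1 (immediate reward for completing this action)

- Action 2: end the task
  - action: "end" (signal that terminates the task)
  - reward: 1 (reward for completing the task)
  - This action may trigger follow-up evaluation logic (for example, checking whether the apple was identified)

3. Reward (totalreward)

- The total reward is 2, the sum of the two action rewards (1+1)
- It may be used for policy optimization in reinforcement learning (encouraging efficient task completion)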
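The structure described above can be checked programmatically. The following is a minimal sketch, assuming task files are nested lists of task dicts as in the example and that object IDs have the form type|x|y|z; the function names are made up for illustration and do not come from the repository.

```python
def flatten_tasks(data):
    """Flatten the nested [[task, ...], ...] layout into a single list of tasks."""
    return [task for group in data for task in group]


def parse_object_id(object_id):
    """Split 'Type|x|y|z' into the type name and an (x, y, z) tuple of floats."""
    parts = object_id.split("|")
    obj_type = parts[0]
    x, y, z = (float(value) for value in parts[1:4])
    return obj_type, (x, y, z)


def reward_is_consistent(task):
    """Check that totalreward equals the sum of the per-action rewards."""
    return sum(action["reward"] for action in task["actions"]) == task["totalreward"]
```

For the example file, `flatten_tasks(json.load(f))` (after `import json`) would yield a single task for which `reward_is_consistent` holds, since 1 + 1 = 2.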

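Step 2. Generate o1-style trajectories

This step calls the gpt-4o API, so edit data_engine/vlmCall.py first.

A provider based in mainland China is recommended for stability: https://ai.nengyongai.cn/register?aff=RQt3

First choose "添加令牌" (add token) and set a quota; clicking "view" then shows the key.

Then fill it in as OPENAI_KEY: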

import http.client
import json
import random
import base64
from datetime import datetime
from PIL import Image
import io
import time

# Drop the dependency on the original VLMCallapi_keys.py and use a fixed API key directly
OPENAI_KEY = "sk-tmlMwyAq8PQqExxxxxxxxxx"  # replace with your real API key


class VLMRequestError(Exception):
    pass


class VLMAPI:
    def __init__(self, model):
        self.model = model

    def encode_image(self, image_path):
        # Image handling logic is unchanged from the original
        with Image.open(image_path) as img:
            original_width, original_height = img.size
            if original_width == 1600 and original_height == 800:
                new_width = original_width // 2
                new_height = original_height // 2
                resized_img = img.resize((new_width, new_height), Image.Resampling.LANCZOS)
                buffered = io.BytesIO()
                resized_img.save(buffered, format="JPEG")
                base64_image = base64.b64encode(buffered.getvalue()).decode('utf-8')
            else:
                with open(image_path, "rb") as image_file:
                    base64_image = base64.b64encode(image_file.read()).decode('utf-8')
        return base64_image

    def vlm_request(self, systext, usertext, image_path1=None, image_path2=None, image_path3=None, max_tokens=1500, retry_limit=3):
        # Request body construction is unchanged
        payload_data = [{"type": "text", "text": usertext}]
        # ... (original image handling code unchanged)
        messages = [
            {"role": "system", "content": systext},
            {"role": "user", "content": payload_data}
        ]
        payload = json.dumps({
            "model": self.model,
            "stream": False,
            "messages": messages,
            "temperature": 0.9,
            "max_tokens": max_tokens
        })
        # Change 1: use the new API endpoint
        conn = http.client.HTTPSConnection("ai.nengyongai.cn")
        retry_count = 0
        while retry_count < retry_limit:
            try:
                t1 = time.time()  # define the timestamp up front
                # Change 2: use the fixed API key
                headers = {
                    'Accept': 'application/json',
                    'Authorization': f'Bearer {OPENAI_KEY}',
                    'User-Agent': 'Apifox/1.0.0 (https://apifox.com)',
                    'Content-Type': 'application/json'
                }
                print(f"********* start call {self.model} *********")
                conn.request("POST", "/v1/chat/completions", payload, headers)
                res = conn.getresponse()
                data = res.read().decode("utf-8")
                data_dict = json.loads(data)
                content = data_dict["choices"][0]["message"]["content"]
                print("****** content: \n", content)
                print(f"********* end call {self.model}: {time.time()-t1:.2f} *********")
                return content
            except Exception as ex:
                print(f"Attempt call {self.model} {retry_count + 1} failed: {ex}")
                time.sleep(300)
                retry_count += 1
        return "Failed to generate completion after multiple attempts."


if __name__ == "__main__":
    model = "gpt-4o-2024-11-20"
    llmapi = VLMAPI(model)
    # Example call
    response = llmapi.vlm_request(
        systext="你是一個AI助手,請用中文回答用戶的問題。",
        usertext="今天天氣怎么樣?",
        image_path1="example.jpg"
    )
    print(response)
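The retry loop in vlm_request returns a plain string when every attempt fails, which a caller cannot easily tell apart from a real model reply. As an illustration only, the sketch below factors the same retry idea into a standalone helper that raises instead. `call_with_retry` and `RetryExhausted` are hypothetical names, and unlike the original loop this version does not sleep after the final failed attempt.

```python
import time


class RetryExhausted(Exception):
    """Raised when every attempt failed (hypothetical name, not from the repo)."""


def call_with_retry(fn, retry_limit=3, wait_seconds=300, sleep=time.sleep):
    """Call fn() up to retry_limit times, waiting wait_seconds between failures."""
    last_error = None
    for attempt in range(1, retry_limit + 1):
        try:
            return fn()
        except Exception as ex:
            last_error = ex
            print(f"Attempt {attempt} failed: {ex}")
            # Wait only if another attempt will follow.
            if attempt < retry_limit:
                sleep(wait_seconds)
    raise RetryExhausted(f"gave up after {retry_limit} attempts") from last_error
```

The `sleep` parameter is injectable so the waiting behaviour can be exercised in tests without actually pausing for five minutes.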

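Then use o1StyleGenerate.py or o1StyleGenerate_ordered.py to synthesize trajectories for 10 different subtask types.

Note: o1StyleGenerate_ordered.py can synthesize more complex sequential object-transport tasks.

Run the following to generate trajectory data for simple tasks:

python o1StyleGenerate.py

To generate trajectory data for complex tasks (optional):

python o1StyleGenerate_ordered.py

Run output: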

(embodied-reasoner) lgp@lgp-MS-7E07:~/2025_project/embodied_reasoner/data_engine$ python o1StyleGenerate.py

metadata_path: taskgenerate/kitchens/FloorPlan1/metadata.json

task_metadata_path: single_search_task_metadata/FloorPlan1.json

*********************************************************************

Scene:FloorPlan1 Task_Type: single_search Processing_Task: 0 Trajectory_idx: a

*********************************************************************

task: {'taskname': 'Can you identify the Apple in the room, please?', 'tasktype': 'single_search', 'metadatapath': 'taskgenerate/kitchens/FloorPlan1/metadata.json', 'actions': [{'action': 'navigate to', 'objectId': 'CounterTop|-00.08|+01.15|00.00', 'objectType': 'CounterTop', 'baseaction': '', 'reward': 1, 'relatedObject': ['CounterTop|-00.08|+01.15|00.00', 'Apple|-00.47|+01.15|+00.48']}, {'action': 'end', 'objectId': '', 'objectType': '', 'baseaction': '', 'reward': 1, 'relatedObject': ['CounterTop|-00.08|+01.15|00.00', 'Apple|-00.47|+01.15|+00.48']}], 'totalreward': 2}

Initialization succeeded

Saved frame as data_single_search/FloorPlan1_single_search_0_a/0_init_observe.png.

****** begin generate selfobservation ******

round: 0 ['Book', 'Drawer', 'GarbageCan', 'Window', 'Stool', 'CounterTop', 'Cabinet', 'ShelvingUnit', 'CoffeeMachine', 'Fridge', 'HousePlant']

********* start call gpt-4o-2024-11-20 *********

****** content: <Observation> I see a CounterTop with a CoffeeMachine placed on its surface. Adjacent to it, there is a ShelvingUnit containing books. To the side, a Fridge stands near a Cabinet. A Window is visible on the wall, and a HousePlant sits nearby, adding a touch of greenery. </Observation>

********* end call gpt-4o-2024-11-20: 2.70 *********

****** end generate selfobservation ******

****** begin generate r1 plan, plan object num: 2 ******

********* start call gpt-4o-2024-11-20 *********

****** content: ['Fridge']

********* end call gpt-4o-2024-11-20: 1.45 *********

****** r1_init_plan_object_list: ['Fridge', 'CounterTop'] correct type: CounterTop

********* start call gpt-4o-2024-11-20 *********

****** content: <Planning>Based on my observation, an apple is a food item likely stored in locations where food is typically kept or prepared. The Fridge is a common place for storing perishable items, including fruits. The CounterTop, being a food preparation area, may also hold an apple if it's readily available or recently used. Thus, I will prioritize searching the Fridge first and then move to the CounterTop as the next logical location based on its function and proximity to food-related activities.</Planning>

********* end call gpt-4o-2024-11-20: 1.58 *********

****** end generate r1 plan *******

Saved frame as data_single_search/FloorPlan1_single_search_0_a/1_Fridge|-02.10|+00.00|+01.07.png.

********* start generate thinking 2 ********

************ current plan object list: ['Fridge', 'CounterTop']

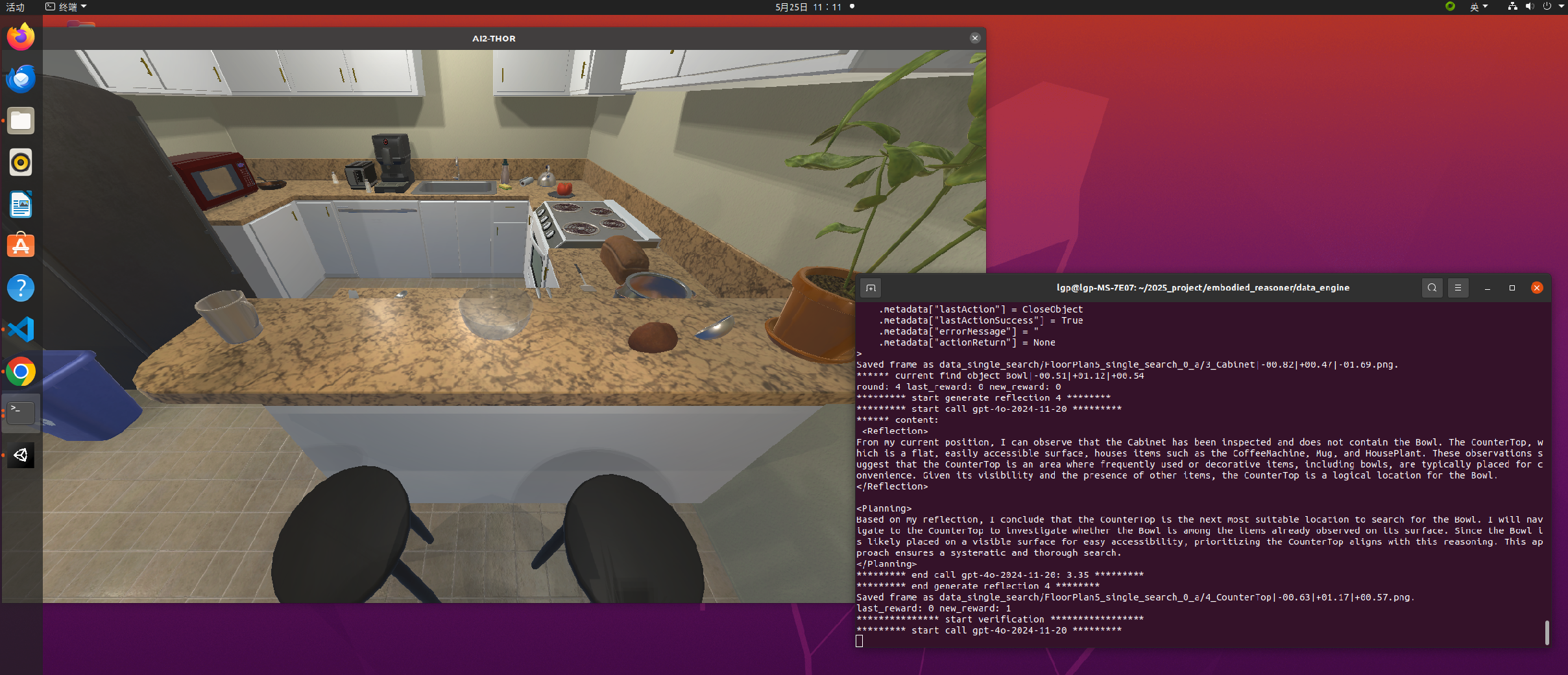

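Result of the run:

The generated trajectory folder contains a JSON file and the images associated with the trajectory.

Example of the generated file layout:

Below is an example of the JSON file contents: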

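{
  "scene": "FloorPlan1",
  "tasktype": "...",
  "taskname": "Locate the Apple in the room.",
  "trajectory": [
    "<...>...</...>",
    "<...>...</...>",
    "..."
  ],
  "images": [
    ".../init_observe.png",
    "..."
  ],
  "flag": "",
  "time": "...",
  "task_metadata": {"..."}
}

- scene: the scene in which the task is carried out.

- tasktype: the type of the task.

- taskname: the name of the task.

- trajectory: the reasoning and decision content of the trajectory.

- images: paths to the corresponding images (the first image is the initial state; each later image is the state after the corresponding action listed in the trajectory).

- time and flag: the generation timestamp and any exceptions encountered while generating the trajectory.

- task_metadata: the task information generated in Step 1.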
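To inspect a generated trajectory, the tagged entries can be split into (tag, text) pairs. This is a minimal sketch under the assumption that each entry wraps its content in a matching pair of tags such as `<Observation>...</Observation>` or `<Planning>...</Planning>`, as seen in the run output earlier; `split_steps` is a hypothetical helper name.

```python
import re

# Matches <Tag>body</Tag> where the closing tag repeats the opening tag name.
TAG_RE = re.compile(r"<(\w+)>(.*?)</\1>", re.DOTALL)


def split_steps(trajectory):
    """Turn a list of tagged strings into a flat list of (tag, text) pairs."""
    steps = []
    for entry in trajectory:
        for tag, body in TAG_RE.findall(entry):
            steps.append((tag, body.strip()))
    return steps
```

Entries that contain no tag pair are skipped, so the result may be shorter than the input list.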

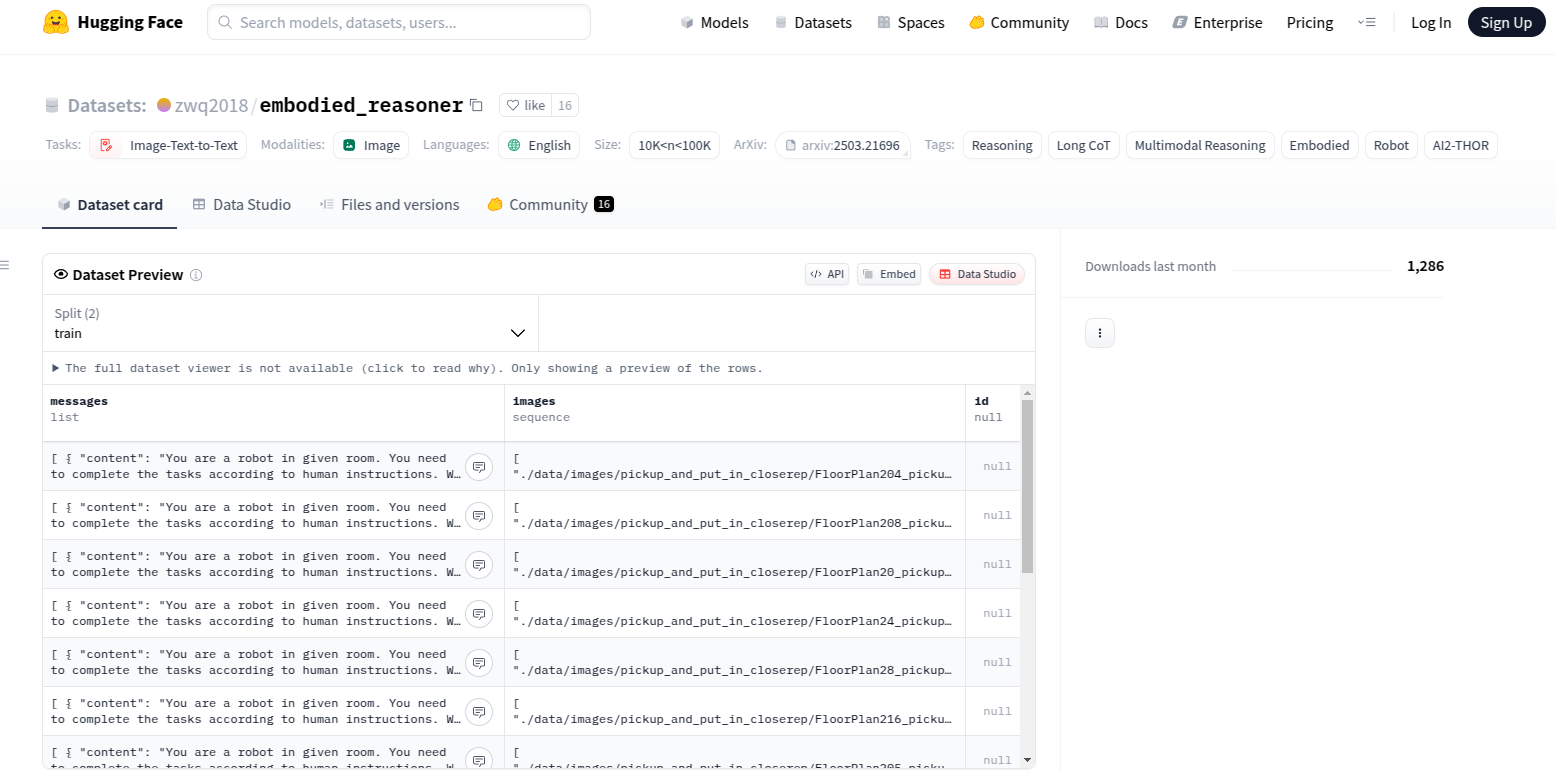

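The full trajectory data is also available here: https://huggingface.co/datasets/zwq2018/embodied_reasoner

Key features:

- 📸 Rich visual data: 64,000 first-person interaction images

- 🤔 Deep reasoning: 8 million thought tokens covering analysis, spatial reasoning, reflection, and planning

- 🏠 Diverse environments: 107 different indoor scenes (kitchens, living rooms, etc.)

- 🎯 Rich interactive objects: 2,100 interactive objects and 2,600 container objects

- 🔄 Complete interaction trajectories: each sample contains a full observation-thought-action sequence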

10. Model inference and evaluation

scripts/eval.sh needs to be modified; use the following as a reference:

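# ========================
# Model path configuration
# ========================
# Default model settings (matching the model that local_deploy.py loads by default)
DEFAULT_MODEL_PATH="Qwen2.5-VL-3B-Instruct"  # default model path used by local_deploy.py
DEFAULT_MODEL_NAME="Qwen2.5-VL-3B-Instruct"  # default model name used by evaluate.py

# Argument precedence: command-line arguments > defaults
# Usage: ./script.sh [custom model path] [custom model name]
MODEL_PATH=${1:-$DEFAULT_MODEL_PATH}  # use the first argument if given, otherwise the default path
MODEL_NAME=${2:-$DEFAULT_MODEL_NAME}  # use the second argument if given, otherwise the default name

# ========================
# Image processing parameters
# ========================
export IMAGE_RESOLUTION=351232  # maximum resolution of input images
export MIN_PIXELS=3136          # minimum pixel threshold
export MAX_PIXELS=351232        # maximum pixel threshold
MODEL_TYPE="qwen2_5_vl"         # model type identifier (used by the framework)

# ========================
# Environment variables
# ========================
export PYTHONUNBUFFERED=1  # disable Python output buffering so logs appear in real time

# ========================
# Start the embedding service (background)
# ========================
# Start the text embedding model service (used for object matching)
# --embedding 1 enables embedding mode
# Uses port 20006 and runs in the background (the trailing &)
python ./inference/local_deploy.py \
    --embedding 1 \
    --port 20006 &

# ========================
# Start the multimodal inference service (background)
# ========================
# Run the vision-language model service on GPU 1
#   --frame "hf"    use the HuggingFace backend
#   --model_type    model type
#   --model_name    model to load
#   --port 10002    serve on port 10002, in the background
CUDA_VISIBLE_DEVICES=1 python inference/local_deploy.py \
    --frame "hf" \
    --model_type $MODEL_TYPE \
    --model_name $MODEL_PATH \
    --port 10002 &

# ========================
# Wait for the services to come up
# ========================
echo "Waiting for ports ..."
# Block until port 20006 accepts connections (embedding service)
while ! nc -z localhost 20006; do
    sleep 1
done
# Block until port 10002 accepts connections (multimodal service)
while ! nc -z localhost 10002; do
    sleep 1
done

# ========================
# Start the AI2-THOR evaluation
# ========================
# Run the evaluation script on GPU 0
#   --model_name    model used for inference
#   --input_path    input test dataset
#   --batch_size    batch size
#   --cur_count     index of the current job
#   --port          port of the multimodal service
#   --total_count   total number of jobs
CUDA_VISIBLE_DEVICES=0 python evaluate/evaluate.py \
    --model_name $MODEL_NAME \
    --input_path "data/test_809.json" \
    --batch_size 200 \
    --cur_count 1 \
    --port 10002 \
    --total_count 1

# ========================
# Show the final results
# ========================
wait  # wait for all background processes to finish
# Show the evaluation results for the given model
python evaluate/show_result.py \
    --model_name $MODEL_NAME

Log output: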

(embodied-reasoner) (base) lgp@lgp-MS-7E07:~/2025_project/embodied_reasoner$ bash scripts/eval.sh

Waiting for ports ...

INFO 05-24 17:39:18 __init__.py:190] Automatically detected platform cuda.

INFO 05-24 17:39:18 __init__.py:190] Automatically detected platform cuda.

TP: 1

gmu None <class 'NoneType'>

TP: 1

gmu None <class 'NoneType'>

Loading checkpoint shards: 100%|█████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 2/2 [00:00<00:00, 12.06it/s]

* Serving Flask app 'local_deploy'

* Debug mode: off

WARNING: This is a development server. Do not use it in a production deployment. Use a production WSGI server instead.

* Running on http://127.0.0.1:10002

Press CTRL+C to quit

127.0.0.1 - - [24/May/2025 17:39:28] "GET / HTTP/1.1" 404 -

127.0.0.1 - - [24/May/2025 17:39:28] "GET /favicon.ico HTTP/1.1" 404 -

* Serving Flask app 'local_deploy'

* Debug mode: off

WARNING: This is a development server. Do not use it in a production deployment. Use a production WSGI server instead.

* Running on http://127.0.0.1:20006

Press CTRL+C to quit

No module named 'VLMCallapi_keys'

evaluate utils:4

evaluate utils:None

Namespace(input_path='data/test_809.json', model_name='Qwen2.5-VL-3B-Instruct', batch_size=200, port=10002, cur_count=1, total_count=1)

--total task count:809

--cache:0---remaining evaluation tasks:809

--Current process evaluation data:809

0%| | 0/809 [00:00<?, ?it/s]

******** Task Name: Do you find it overly troublesome to put the potato in the refrigerator and then take the apple out of the refrigerator, rinse it clean, and set it on a plate? *** Max Steps: 36 ********

******** Task Record: ./data/Qwen2.5-VL-3B-Instruct/809_long-range tasks with dependency relationships_FloorPlan2_4 ********

RoctAgent Initialization successful!!!

0 ****** begin exec action: init None ***

1 ****** end exec action: init None ***

url: http://127.0.0.1:10002/chat

predictor:utils:preprocess_image:image.width: 812

predictor:utils:preprocess_image:image.height: 448

predictor:utils:preprocess_image:image.width: 797

predictor:utils:preprocess_image:image.height: 440

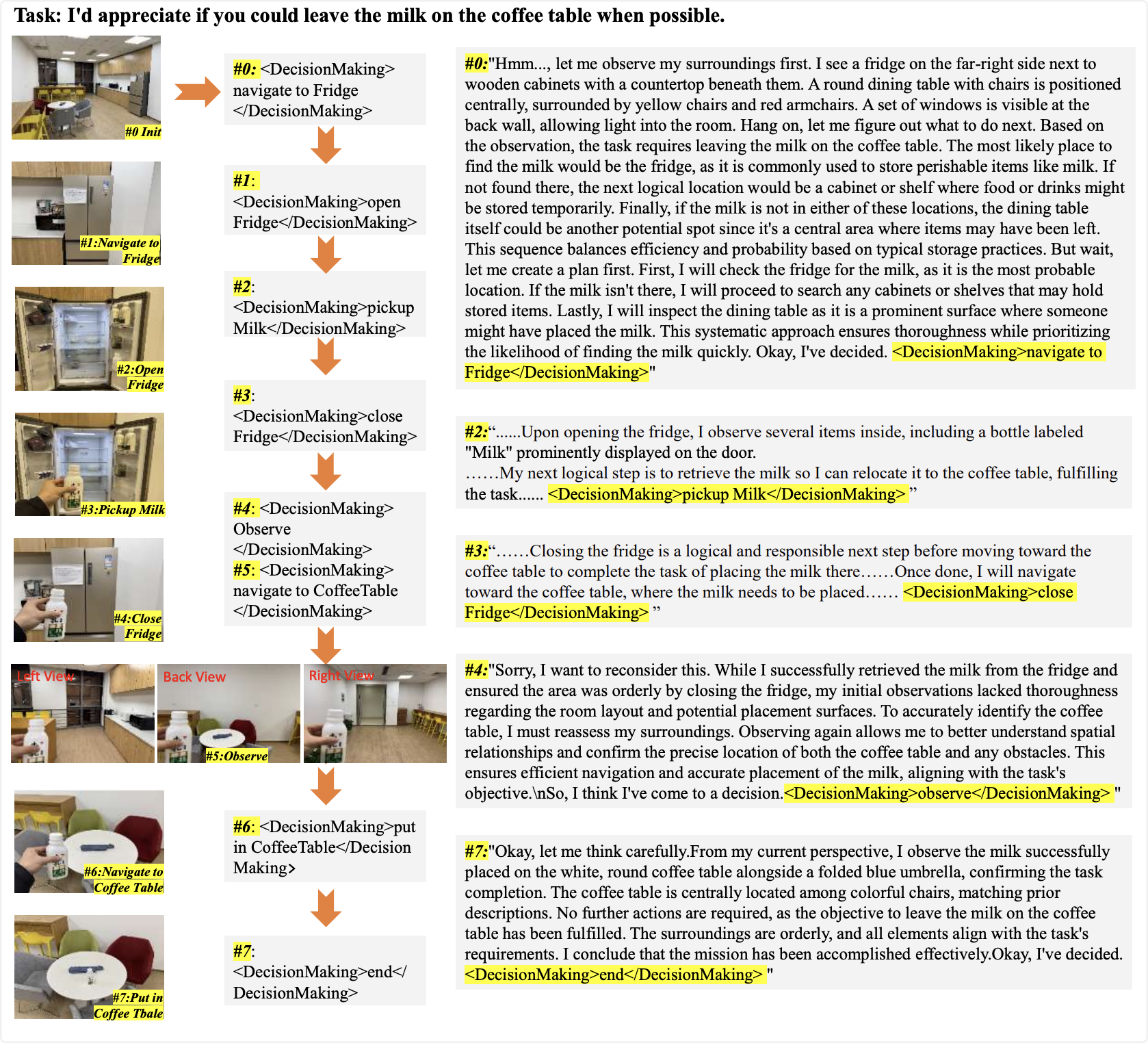

input tokens shape: torch.Size([1, 761])

Below is an example showing the embodied reasoning process:

More updates will follow.

That's all for now~

