I. Experiment Objectives

1. Learn how to use SkLearn and TensorFlow.

2. Learn how to use SkLearn and Keras.

II. Experiment Tools:

1. SkLearn

III. Experiment Content (source code and results)

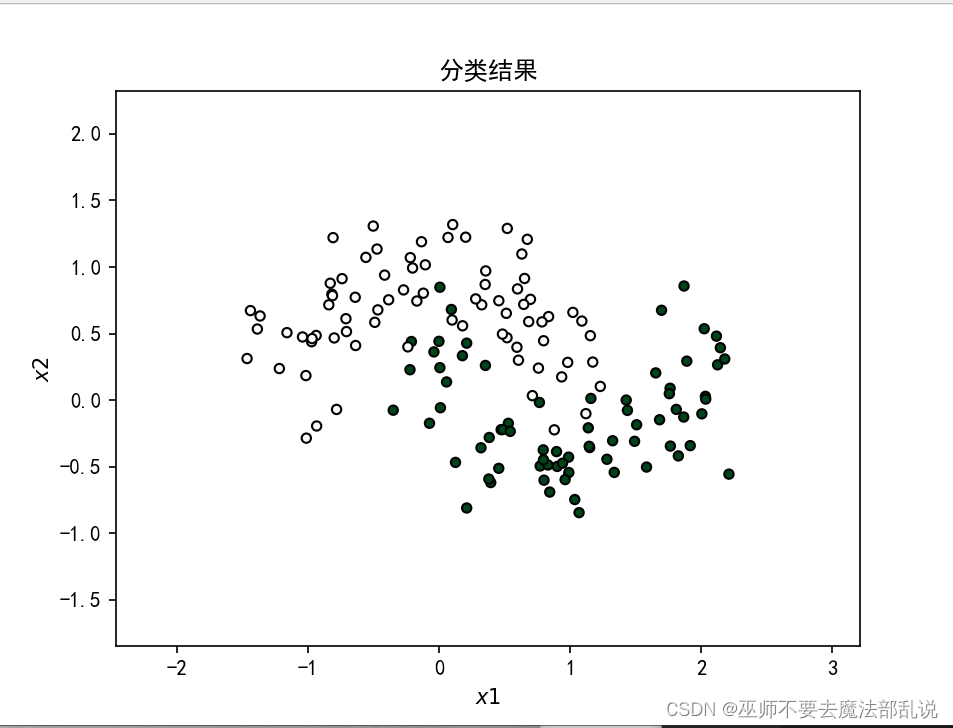

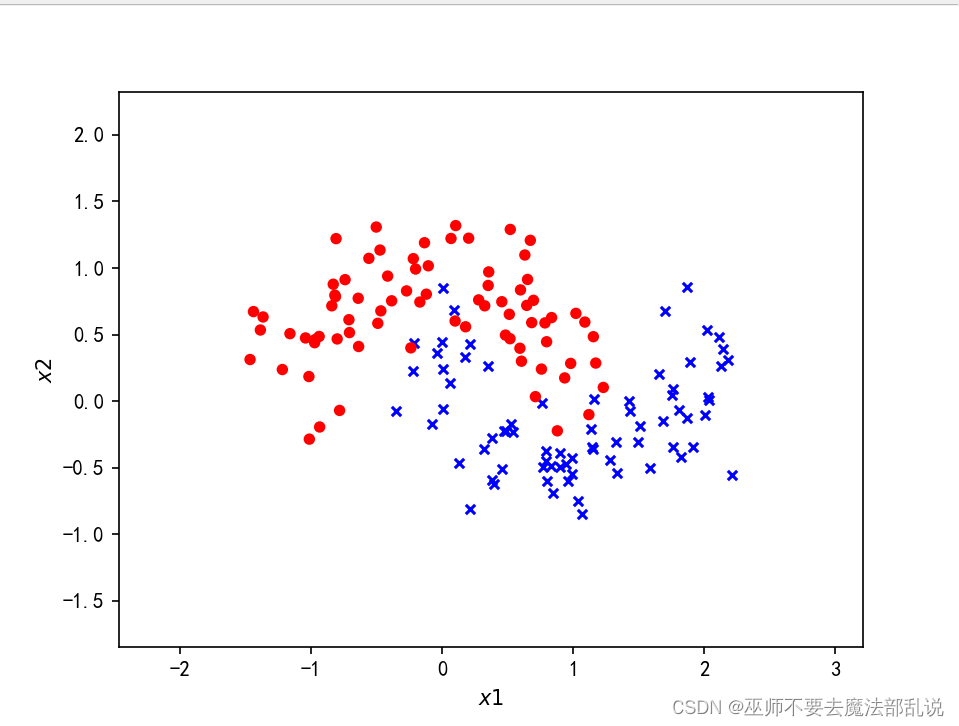

Classifying a half-moon dataset with TensorFlow

```python
# encoding: utf-8
import numpy as np
import matplotlib.pyplot as plt
import tensorflow as tf
from sklearn.datasets import make_moons
from sklearn.model_selection import train_test_split
from tensorflow.keras import layers, Sequential, optimizers, losses, metrics
from tensorflow.keras.layers import Dense

# Generate a half-moon (two interleaving crescents) dataset
X, y = make_moons(200, noise=0.25, random_state=100)
# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=2)
print(X.shape, y.shape)


def make_plot(X, y, plot_name, XX=None, YY=None, preds=None):
    plt.figure()
    axes = plt.gca()
    x_min = X[:, 0].min() - 1
    x_max = X[:, 0].max() + 1
    y_min = X[:, 1].min() - 1
    y_max = X[:, 1].max() + 1
    axes.set_xlim([x_min, x_max])
    axes.set_ylim([y_min, y_max])
    axes.set(xlabel="$x_1$", ylabel="$x_2$")
    if XX is None and YY is None and preds is None:
        # Raw data: class 1 as blue crosses, class 0 as red circles
        yr = y.ravel()
        for step in range(X[:, 0].size):
            if yr[step] == 1:
                plt.scatter(X[step, 0], X[step, 1], c='b', s=20, marker='x')
            else:
                plt.scatter(X[step, 0], X[step, 1], c='r', s=30, edgecolors='none', marker='o')
        plt.show()
    else:
        # Decision boundary drawn over the samples
        plt.contour(XX, YY, preds, cmap=plt.cm.spring, alpha=0.8)
        plt.scatter(X[:, 0], X[:, 1], c=y, s=20, cmap=plt.cm.Greens, edgecolors='k')
        plt.rcParams['font.sans-serif'] = ['SimHei']  # only needed for CJK titles
        plt.rcParams['axes.unicode_minus'] = False
        plt.title(plot_name)
        plt.show()


make_plot(X, y, None)

# Create the model container
model = Sequential()
# First hidden layer (2 input features)
model.add(Dense(8, input_dim=2, activation='relu'))
for _ in range(3):
    model.add(Dense(32, activation='relu'))
# Output layer: one sigmoid unit for binary classification
model.add(Dense(1, activation='sigmoid'))
model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])
history = model.fit(X_train, y_train, epochs=30, verbose=1)

# Draw the decision boundary on a dense grid
x_min = X[:, 0].min() - 1
x_max = X[:, 0].max() + 1
y_min = X[:, 1].min() - 1
y_max = X[:, 1].max() + 1
XX, YY = np.meshgrid(np.arange(x_min, x_max, 0.01), np.arange(y_min, y_max, 0.01))
# The model outputs one sigmoid probability per point, so threshold it at 0.5
# (np.argmax over a length-1 axis would always return 0)
Z = (model.predict(np.c_[XX.ravel(), YY.ravel()]) > 0.5).astype(int)
preds = Z.reshape(XX.shape)
title = "Classification result"
make_plot(X_train, y_train, title, XX, YY, preds)
```
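This classifier ends in a single sigmoid unit. Each grid point's class therefore comes from thresholding that one probability at 0.5. Taking `np.argmax(..., axis=-1)` over a one-column output returns 0 for every point, whatever the probability. The plain-Python sketch below illustrates both behaviours. The helper names (`sigmoid`, `label_from_sigmoid`, `argmax`) and the logit values are hypothetical and chosen only for illustration.

```python
import math


def sigmoid(z):
    """Logistic function, as applied by a Dense(1, activation='sigmoid') unit."""
    return 1.0 / (1.0 + math.exp(-z))


def label_from_sigmoid(p, threshold=0.5):
    """Map one sigmoid probability to a class label 0 or 1."""
    return 1 if p > threshold else 0


def argmax(values):
    """Index of the largest entry, like np.argmax on a 1-D sequence."""
    return max(range(len(values)), key=lambda i: values[i])


# Hypothetical raw outputs (logits) for three grid points
logits = [-2.0, 0.3, 4.0]
probs = [sigmoid(z) for z in logits]
labels = [label_from_sigmoid(p) for p in probs]
# argmax over a one-element output row is always 0
argmax_labels = [argmax([p]) for p in probs]
print(labels)         # [0, 1, 1]
print(argmax_labels)  # [0, 0, 0]
```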

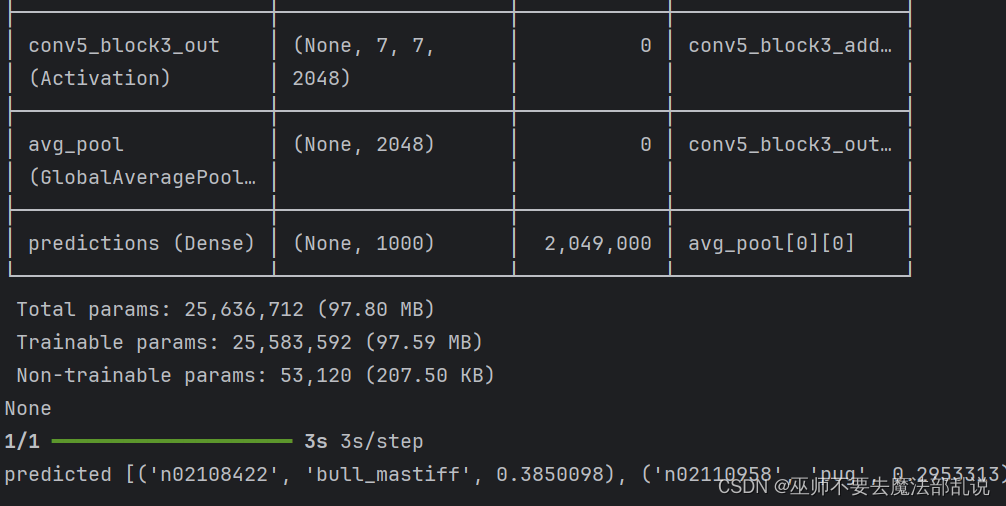

Recognizing cats and dogs with a pretrained ImageNet model (the code uses ResNet50 rather than VGGNet)

```python
from tensorflow import keras
from keras.applications.resnet import ResNet50
from keras.preprocessing import image
from keras.applications.resnet import preprocess_input, decode_predictions
import numpy as np
from PIL import ImageFont, ImageDraw, Image

# Test images (replace img1 with img2 or img3 to classify the cat or the deer)
img1 = r'C:\Users\PDHuang\Downloads\ch11\dog.jpg'
img2 = r'C:\Users\PDHuang\Downloads\ch11\cat.jpg'
img3 = r'C:\Users\PDHuang\Downloads\ch11\deer.jpg'
# Local ResNet50 weight file (the file name is truncated in the original listing)
weight_path = r'C:\Users\PDHuang\Downloads\ch11\resnet50_weight...'

# Load the image at the 224x224 input size ResNet50 expects, then add a batch axis
img = image.load_img(img1, target_size=(224, 224))
x = image.img_to_array(img)
x = np.expand_dims(x, axis=0)
x = preprocess_input(x)


def get_model():
    model = ResNet50(weights=weight_path)
    model.summary()
    return model


model = get_model()
# Predict the image
preds = model.predict(x)
# Print the top-5 results
print('predicted', decode_predictions(preds, top=5)[0])
```
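For ResNet50, Keras's `preprocess_input` follows the "caffe" convention. It flips each pixel from RGB to BGR and subtracts the ImageNet per-channel mean, without scaling to [0, 1]. The sketch below reproduces that step for a single pixel in plain Python, using the BGR means 103.939, 116.779 and 123.68. The helper `caffe_preprocess_pixel` and the sample pixel are hypothetical. The real function operates on the whole `(1, 224, 224, 3)` array at once.

```python
# ImageNet per-channel means in B, G, R order (Keras 'caffe'-mode preprocessing)
IMAGENET_MEAN_BGR = (103.939, 116.779, 123.68)


def caffe_preprocess_pixel(rgb):
    """Convert one RGB pixel (0-255 floats) to a zero-centred BGR pixel, without scaling."""
    r, g, b = rgb
    bgr = (b, g, r)  # channel order flipped: RGB -> BGR
    return tuple(c - m for c, m in zip(bgr, IMAGENET_MEAN_BGR))


pixel = (255.0, 128.0, 0.0)  # a hypothetical orange pixel
print(caffe_preprocess_pixel(pixel))
```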

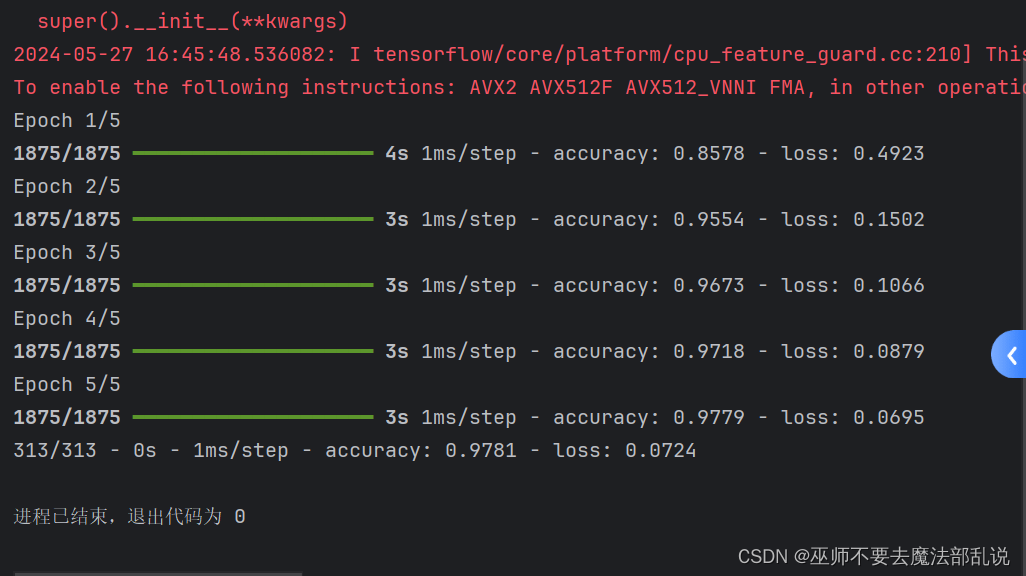

Handwritten digit recognition with deep learning

```python
# Handwritten digit recognition
import tensorflow as tf

# Load the MNIST dataset
mnist = tf.keras.datasets.mnist
# Split into training and test sets
(x_train, y_train), (x_test, y_test) = mnist.load_data()
# Preprocess: convert the integer pixel values to floats in [0, 1]
x_train, x_test = x_train / 255.0, x_test / 255.0
# Stack the model's layers with tf.keras.Sequential and set their parameters
model = tf.keras.models.Sequential([
    tf.keras.layers.Flatten(input_shape=(28, 28)),
    tf.keras.layers.Dense(128, activation='relu'),
    tf.keras.layers.Dropout(0.2),
    tf.keras.layers.Dense(10, activation='softmax')
])
# Set the optimizer and loss function
model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])
# Train and evaluate the model
model.fit(x_train, y_train, epochs=5)
model.evaluate(x_test, y_test, verbose=2)
```
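The last `Dense(10, activation='softmax')` layer turns ten scores into class probabilities. Sparse categorical cross-entropy takes the integer label directly (no one-hot encoding) and returns the negative log of that class's probability. The plain-Python sketch below shows both on hypothetical 3-class scores. The helper names are illustrative, not Keras APIs.

```python
import math


def softmax(logits):
    """Numerically stable softmax: subtract the max score before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sparse_categorical_crossentropy(label, probs):
    """Loss for one sample whose label is an integer class index."""
    return -math.log(probs[label])


# Hypothetical scores for a 3-class toy case
probs = softmax([2.0, 1.0, 0.1])
loss_correct = sparse_categorical_crossentropy(0, probs)  # label matches the top class
loss_wrong = sparse_categorical_crossentropy(2, probs)    # label is the least likely class
print(probs, loss_correct, loss_wrong)
```

A confident, correct prediction gives a small loss. The same probabilities scored against an unlikely label give a much larger one.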

Face recognition with TensorFlow + Keras

```python
from os import listdir
import numpy as np
from PIL import Image
import cv2
from tensorflow.keras.models import Sequential, load_model
from tensorflow.keras.layers import Dense, Activation, Conv2D, MaxPooling2D, Flatten
from tensorflow.keras.utils import to_categorical
from sklearn.model_selection import train_test_split


# Read one face image as a 57x47 grayscale array
def img2vector(fileNamestr):
    image = Image.open(fileNamestr).convert('L')
    img = np.asarray(image).reshape(57, 47)
    return img


# Build the face dataset: one sub-directory per person, the directory index is the label
def GetDataset(imgDataDir):
    print('| Step1 |: Get dataset...')
    FileDir = sorted(listdir(imgDataDir))  # sorted so labels are stable between runs
    m = len(FileDir)
    hwLabels = []
    hwdata = []
    # Read the sub-directories one by one
    for i in range(m):
        className = i
        subdirName = imgDataDir + str(FileDir[i]) + '/'
        # Read every image file in the sub-directory
        for fileName in sorted(listdir(subdirName)):
            fileNamestr = subdirName + fileName
            hwLabels.append(className)
            hwdata.append(img2vector(fileNamestr))
    hwdata = np.array(hwdata)
    return hwdata, hwLabels, m  # m = number of people (6 in this experiment)


# CNN model class
class MyCNN(object):
    # Where the trained model is saved / loaded
    FILE_PATH = 'C:/Users/PDHuang/Downloads/ch11/face_recognition.h5'
    # Face images are 47 pixels wide and 57 pixels high
    picHeight = 57
    picwidth = 47

    def __init__(self):
        self.model = None

    # Attach the training dataset
    def read_trainData(self, dataset):
        self.dataset = dataset

    # Build the Sequential model and set its parameters
    def build_model(self):
        print('| Step2 |: Init CNN model...')
        self.model = Sequential()
        print('self.dataset.X_train.shape[1:]', self.dataset.X_train.shape[1:])
        # Channels-last input (57, 47, 1); the Keras 1 argument dim_ordering='th' no longer exists
        self.model.add(Conv2D(filters=32, kernel_size=(5, 5), padding='same',
                              input_shape=self.dataset.X_train.shape[1:]))
        self.model.add(Activation('relu'))
        self.model.add(MaxPooling2D(pool_size=(2, 2), strides=(2, 2), padding='same'))
        self.model.add(Conv2D(filters=64, kernel_size=(5, 5), padding='same'))
        self.model.add(Activation('relu'))
        self.model.add(MaxPooling2D(pool_size=(2, 2), strides=(2, 2), padding='same'))
        self.model.add(Flatten())
        self.model.add(Dense(512))
        self.model.add(Activation('relu'))
        self.model.add(Dense(self.dataset.num_classes))
        self.model.add(Activation('softmax'))
        self.model.summary()

    # Train the model
    def train_model(self):
        print('| Step3 |: Train CNN model...')
        self.model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
        # epochs: number of training passes; batch_size: samples per gradient update
        self.model.fit(self.dataset.X_train, self.dataset.Y_train, epochs=10, batch_size=20)

    def evaluate_model(self):
        loss, accuracy = self.model.evaluate(self.dataset.X_test, self.dataset.Y_test)
        print('| Step4 |: Evaluate performance...')
        print('==================================')
        print('Loss Value is:', loss)
        print('Accuracy Value is:', accuracy)

    def save(self, file_path=FILE_PATH):
        print('| Step5 |: Save model...')
        self.model.save(file_path)
        print('Model', file_path, 'is successfully saved.')

    def predict(self, input_data):
        prediction = self.model.predict(input_data)
        return prediction


# Class that stores the training data and formats it for the model
class DataSet(object):
    def __init__(self, path):
        self.num_classes = None
        self.X_train = None
        self.X_test = None
        self.Y_train = None
        self.Y_test = None
        self.picwidth = 47
        self.picHeight = 57
        # Read the training data under `path` when the object is created
        self.makeDataSet(path)

    def makeDataSet(self, path):
        # Read images, labels and the number of classes from the given path
        imgs, labels, classNum = GetDataset(path)
        # Shuffle and split into training and test sets
        X_train, X_test, y_train, y_test = train_test_split(imgs, labels, test_size=0.2, random_state=1)
        # Reshape to (samples, height, width, channels) and scale to [0, 1]
        X_train = X_train.reshape(X_train.shape[0], self.picHeight, self.picwidth, 1) / 255.0
        X_test = X_test.reshape(X_test.shape[0], self.picHeight, self.picwidth, 1) / 255.0
        X_train = X_train.astype('float32')
        X_test = X_test.astype('float32')
        # Convert labels to binary class matrices (one-hot)
        Y_train = to_categorical(y_train, num_classes=classNum)
        Y_test = to_categorical(y_test, num_classes=classNum)
        # Store the formatted data on the instance
        self.X_train = X_train
        self.X_test = X_test
        self.Y_train = Y_train
        self.Y_test = Y_test
        self.num_classes = classNum


# Face image directory
dataset = DataSet('C:/Users/PDHuang/Downloads/ch11/faces_4/')
model = MyCNN()
model.read_trainData(dataset)
model.build_model()
model.train_model()
model.evaluate_model()
model.save()
```

```python
import os
import cv2
import numpy as np
from tensorflow.keras.models import load_model

hwdata = []
hwLabels = []
classNum = 0     # person labels (0-5)
picHeight = 57   # image height
picwidth = 47    # image width
# Read images, labels and the number of classes from the dataset directory
hwdata, hwLabels, classNum = GetDataset('C:/Users/PDHuang/Downloads/ch11/faces_4/')
# Load the model saved by MyCNN.save() (same path as MyCNN.FILE_PATH)
model_path = 'C:/Users/PDHuang/Downloads/ch11/face_recognition.h5'
if os.path.exists(model_path):
    model = load_model(model_path)
else:
    raise SystemExit('build model first')
# Load the image to identify
photo = cv2.imread('C:/Users/PDHuang/Downloads/ch11/who.jpg')
# Resize; cv2.resize takes (width, height)
resized_photo = cv2.resize(photo, (picwidth, picHeight))
# Convert to a grayscale image
recolord_photo = cv2.cvtColor(resized_photo, cv2.COLOR_BGR2GRAY)
recolord_photo = recolord_photo.reshape((1, picHeight, picwidth, 1))
recolord_photo = recolord_photo / 255
# Predict the person
print('| Step3 |: Predicting......')
result = model.predict(recolord_photo)
max_index = np.argmax(result)
# Show the result
print('The predict result is Person', max_index + 1)
cv2.namedWindow("testperson", 0)
cv2.resizeWindow("testperson", 300, 350)
cv2.imshow('testperson', photo)
cv2.namedWindow("PredictResult", 0)
cv2.resizeWindow("PredictResult", 300, 350)
# Show a face of the predicted person (index assumes 10 images per person folder)
cv2.imshow("PredictResult", hwdata[max_index * 10])
k = cv2.waitKey(0)
# Press Esc to close the windows
if k == 27:
    cv2.destroyAllWindows()
```
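Both `Conv2D` layers use `padding='same'` with stride 1, so they keep the 57 x 47 spatial size. Each `MaxPooling2D(pool_size=(2, 2), strides=(2, 2), padding='same')` halves it, rounding up. This lets the input size of `Flatten` and `Dense(512)` be worked out by hand. The plain-Python sketch below does that arithmetic. It assumes TensorFlow's "same" rule, output length = ceil(input / stride). The helper `same_pool` is hypothetical.

```python
import math


def same_pool(n, stride=2):
    """Output length of a 'same'-padded pooling layer: ceil(n / stride)."""
    return math.ceil(n / stride)


height, width = 57, 47                        # picHeight, picwidth in the listing
# 'same' convolutions keep the size; each pooling layer halves it (rounded up)
h1, w1 = same_pool(height), same_pool(width)  # after the first MaxPooling2D
h2, w2 = same_pool(h1), same_pool(w1)         # after the second MaxPooling2D
flat = h2 * w2 * 64                           # 64 filters in the second Conv2D
dense_params = flat * 512 + 512               # weights + biases of Dense(512)
print((h1, w1), (h2, w2), flat, dense_params)
```

Most of the model's parameters sit in this single `Dense(512)` layer, which is worth keeping in mind with only a few dozen face images per person.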

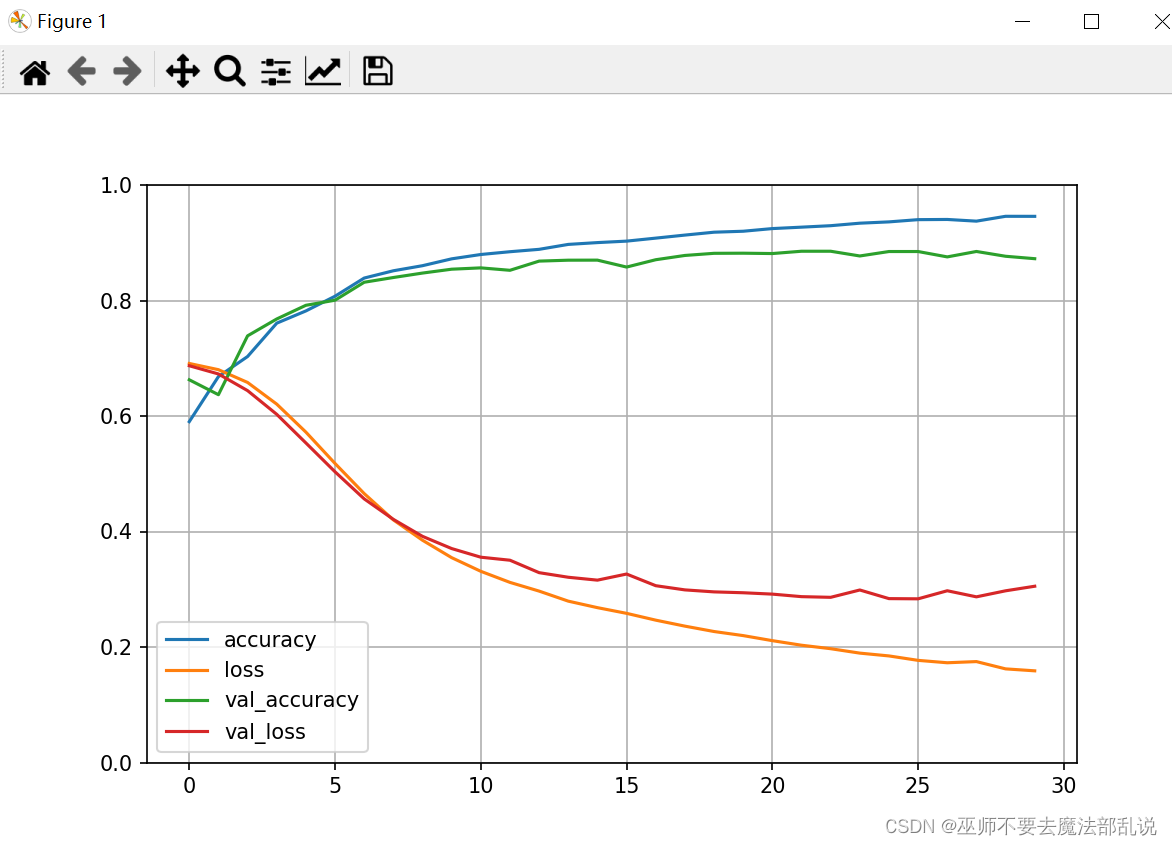

Movie review sentiment classification with TensorFlow + Keras

```python
# Imports
import matplotlib as mpl
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import os
# Import TensorFlow
import tensorflow as tf
from tensorflow import keras

print(tf.__version__)
print(keras.__version__)

# Load the dataset
# num_words: keep only the 10,000 most frequent words as the vocabulary
imdb = keras.datasets.imdb
(train_x_all, train_y_all), (test_x, test_y) = imdb.load_data(num_words=10000)

# Number of samples
print("Train entries: {}, labels: {}".format(len(train_x_all), len(train_y_all)))
print("Test entries: {}, labels: {}".format(len(test_x), len(test_y)))
print(train_x_all[0])       # contents of the first sample
print(len(train_x_all[0]))  # the first and second training samples have different lengths
print(len(train_x_all[1]))

# Build two dictionaries: word -> id and id -> word
word_index = imdb.get_word_index()
word2id = {k: (v + 3) for k, v in word_index.items()}
word2id['<PAD>'] = 0
word2id['START'] = 1
word2id['<UNK>'] = 2
word2id['UNUSED'] = 3
id2word = {v: k for k, v in word2id.items()}


def get_words(sent_ids):
    return ' '.join([id2word.get(i, '?') for i in sent_ids])


sent = get_words(train_x_all[0])
print(sent)

# Pad every review to the same length
train_x_all = keras.preprocessing.sequence.pad_sequences(
    train_x_all,
    value=word2id['<PAD>'],
    padding='post',  # 'pre' pads at the start of the review, 'post' at the end
    maxlen=256)
test_x = keras.preprocessing.sequence.pad_sequences(
    test_x, value=word2id['<PAD>'], padding='post', maxlen=256)
print(train_x_all[0])
print(len(train_x_all[0]))
print(len(train_x_all[1]))

# Define the model
vocab_size = 10000
model = keras.Sequential()
model.add(keras.layers.Embedding(vocab_size, 16))
model.add(keras.layers.GlobalAveragePooling1D())
model.add(keras.layers.Dense(16, activation='relu'))
model.add(keras.layers.Dense(1, activation='sigmoid'))
model.summary()
model.compile(optimizer='adam', loss=keras.losses.binary_crossentropy, metrics=['accuracy'])

# Hold out the first 10,000 training reviews for validation
train_x, valid_x = train_x_all[10000:], train_x_all[:10000]
train_y, valid_y = train_y_all[10000:], train_y_all[:10000]

# Callbacks: TensorBoard, EarlyStopping, ModelCheckpoint
# Create a folder for the log files
logdir = os.path.join("callbacks")
if not os.path.exists(logdir):
    os.mkdir(logdir)
output_model_file = os.path.join(logdir, "imdb_model.keras")
# The callbacks decide when to stop training; they can also be omitted
callbacks = [
    # Log directory (inspect with: tensorboard --logdir callbacks)
    keras.callbacks.TensorBoard(logdir),
    # Keep only the best model
    keras.callbacks.ModelCheckpoint(filepath=output_model_file, save_best_only=True),
    # Stop when val_loss (the default monitored value) has not improved by at least 1e-3 for 5 epochs
    keras.callbacks.EarlyStopping(patience=5, min_delta=1e-3)
]
history = model.fit(train_x, train_y,
                    epochs=40,
                    batch_size=512,
                    validation_data=(valid_x, valid_y),
                    callbacks=callbacks,
                    verbose=1)  # 1 prints the training log to the console, 0 prints nothing


def plot_learing_show(history):
    pd.DataFrame(history.history).plot(figsize=(8, 5))
    plt.grid(True)
    plt.gca().set_ylim(0, 1)
    plt.show()


plot_learing_show(history)

result = model.evaluate(test_x, test_y)
print(result)
# Sequential.predict_classes was removed in TF 2.6; threshold the sigmoid output instead
test_classes_list = (model.predict(test_x) > 0.5).astype("int32")
print(test_classes_list[1][0])
print(test_y[1])
```

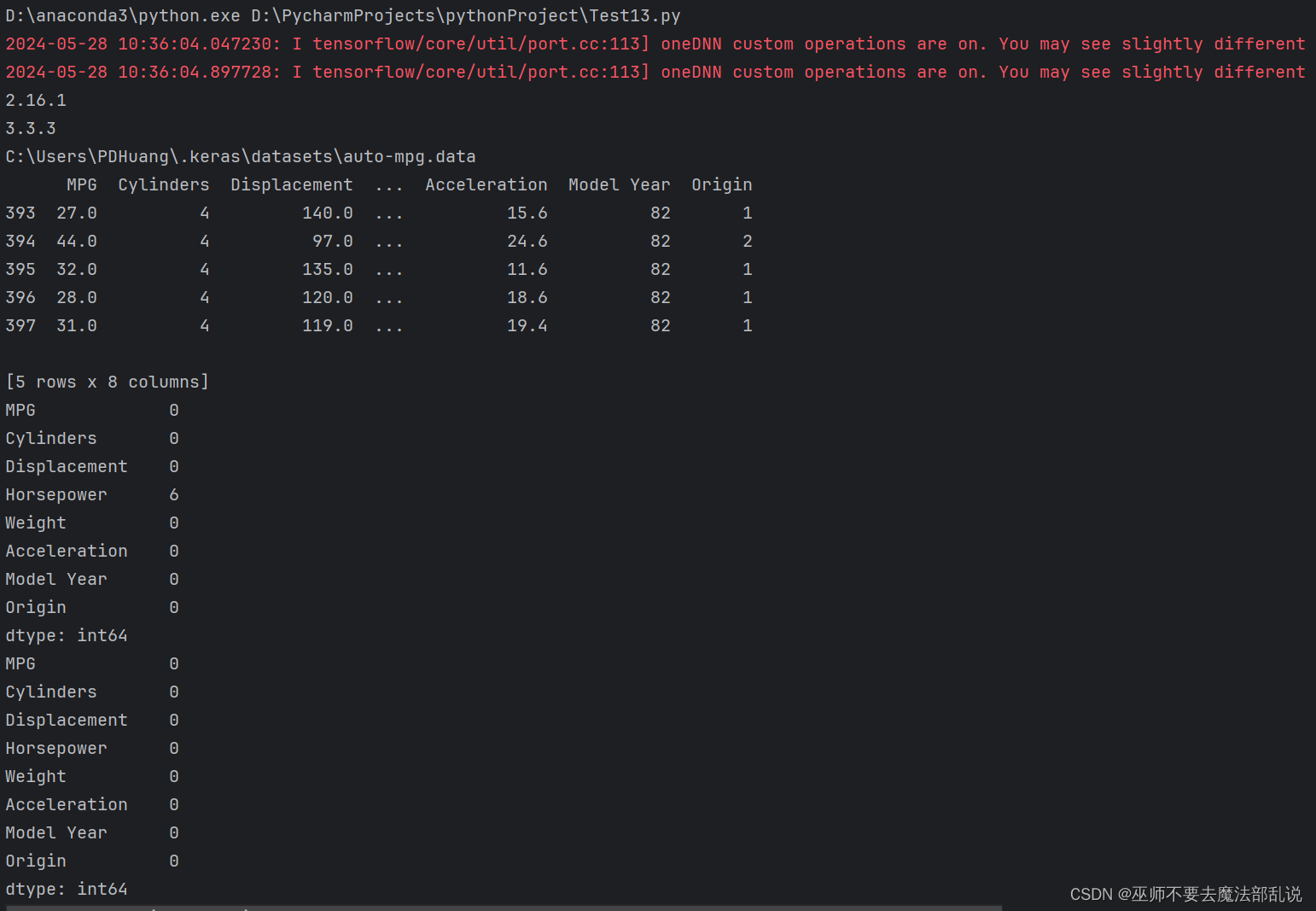

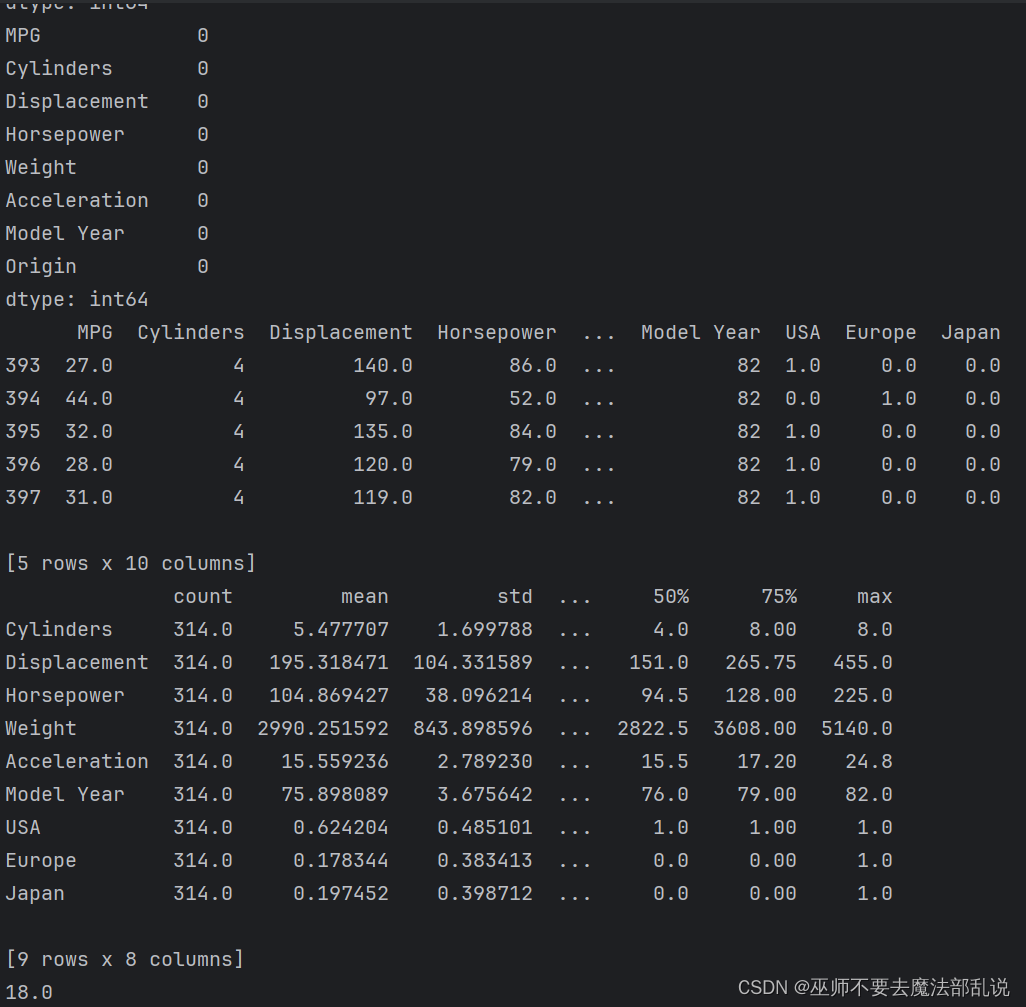

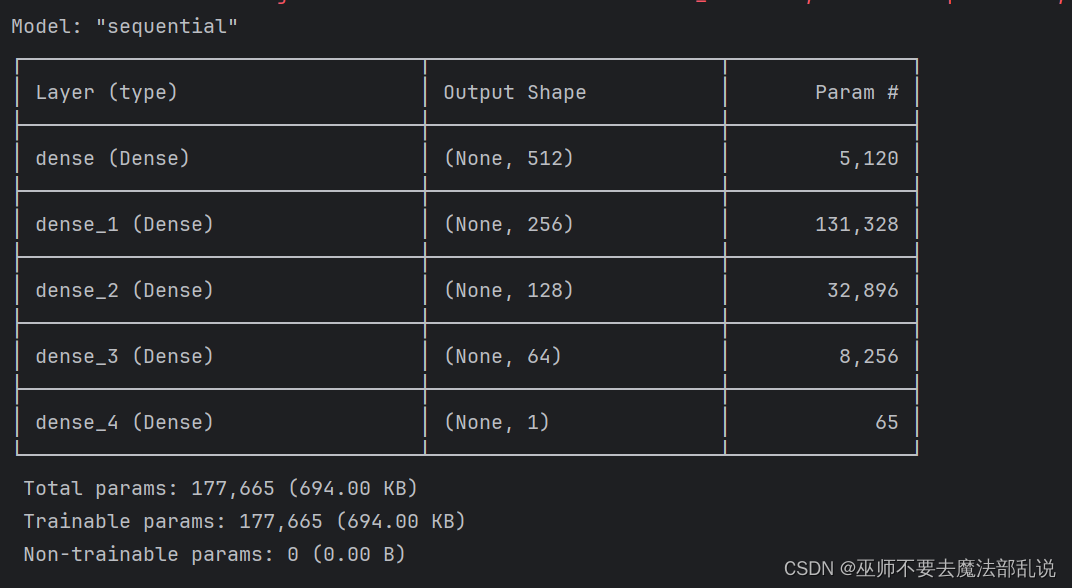

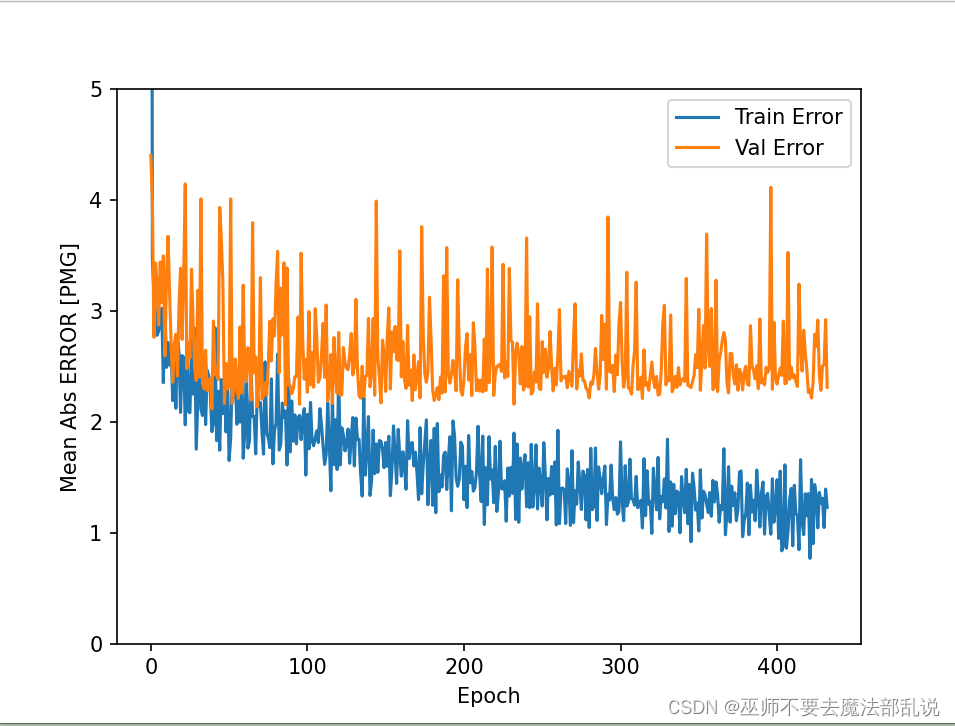

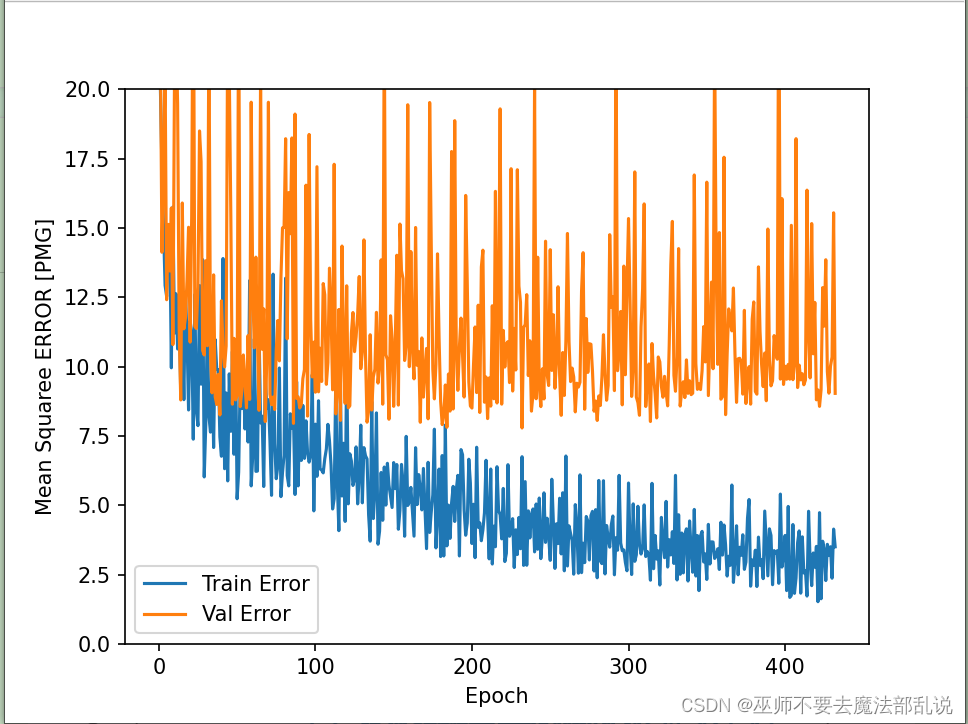

Solving a regression problem with TensorFlow + Keras: predicting car fuel efficiency

```python
# Imports
import matplotlib as mpl
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import os
import pathlib
import seaborn as sns
# Import TensorFlow
import tensorflow as tf
from tensorflow import keras

print(tf.__version__)
print(keras.__version__)

# Download the dataset
dataset_path = keras.utils.get_file('auto-mpg.data', "http://archive.ics.uci.edu/ml/machine-learning-databases/auto-mpg/auto-mpg.data")
print(dataset_path)

# Load the dataset with pandas
column_names = ['MPG', 'Cylinders', 'Displacement', 'Horsepower', 'Weight',
                'Acceleration', 'Model Year', 'Origin']
raw_dataset = pd.read_csv(dataset_path, names=column_names, na_values='?',
                          comment='\t', sep=' ', skipinitialspace=True)
dataset = raw_dataset.copy()
print(dataset.tail())

# Data cleaning: drop rows with missing values
print(dataset.isna().sum())
dataset = dataset.dropna()
print(dataset.isna().sum())

# One-hot encode Origin
origin = dataset.pop('Origin')
dataset['USA'] = (origin == 1) * 1.0
dataset['Europe'] = (origin == 2) * 1.0
dataset['Japan'] = (origin == 3) * 1.0
print(dataset.tail())

# Split into training and test sets
train_dataset = dataset.sample(frac=0.8, random_state=0)
test_dataset = dataset.drop(train_dataset.index)

# Overall statistics of the training features
train_stats = train_dataset.describe()
train_stats.pop("MPG")
train_stats = train_stats.transpose()
print(train_stats)

# Separate the target (MPG) from the features
train_labels = train_dataset.pop('MPG')
test_labels = test_dataset.pop('MPG')
print(train_labels.iloc[0])  # first training label (index label 0 may not be in the sample)


# Normalise with the training-set statistics
def norm(x):
    return (x - train_stats['mean']) / train_stats['std']


norm_train_data = norm(train_dataset)
norm_test_data = norm(test_dataset)


# Baseline model
def build_model():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', input_shape=[len(train_dataset.keys())]),
        keras.layers.Dense(256, activation='relu'),
        keras.layers.Dense(128, activation='relu'),
        keras.layers.Dense(64, activation='relu'),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


# Model with L1 + L2 regularisation against overfitting
# (the positional argument of l1_l2 is l1; l2 keeps its default value)
def build_model2():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001),
                           input_shape=[len(train_dataset.keys())]),
        keras.layers.Dense(256, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dense(128, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dense(64, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


# Model with L1 regularisation against overfitting
def build_model3():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', kernel_regularizer=keras.regularizers.l1(0.001),
                           input_shape=[len(train_dataset.keys())]),
        keras.layers.Dense(256, activation='relu', kernel_regularizer=keras.regularizers.l1(0.001)),
        keras.layers.Dense(128, activation='relu', kernel_regularizer=keras.regularizers.l1(0.001)),
        keras.layers.Dense(64, activation='relu', kernel_regularizer=keras.regularizers.l1(0.001)),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


# Model with L2 regularisation against overfitting
def build_model4():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', kernel_regularizer=keras.regularizers.l2(0.001),
                           input_shape=[len(train_dataset.keys())]),
        keras.layers.Dense(256, activation='relu', kernel_regularizer=keras.regularizers.l2(0.001)),
        keras.layers.Dense(128, activation='relu', kernel_regularizer=keras.regularizers.l2(0.001)),
        keras.layers.Dense(64, activation='relu', kernel_regularizer=keras.regularizers.l2(0.001)),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


# Model using dropout against overfitting
def build_model5():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', input_shape=[len(train_dataset.keys())]),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(256, activation='relu'),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(128, activation='relu'),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(64, activation='relu'),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


# Model combining L1 + L2 regularisation and dropout
def build_model6():
    model = keras.Sequential([
        keras.layers.Dense(512, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001),
                           input_shape=[len(train_dataset.keys())]),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(256, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(128, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(64, activation='relu', kernel_regularizer=keras.regularizers.l1_l2(0.001)),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(1)
    ])
    optimizer = keras.optimizers.RMSprop(0.001)
    model.compile(loss='mse', optimizer=optimizer, metrics=['mae', 'mse'])
    return model


model = build_model()
model.summary()
early_stop = keras.callbacks.EarlyStopping(monitor='val_loss', patience=200)
# Train the model
history = model.fit(norm_train_data, train_labels, epochs=1000, validation_split=0.2,
                    verbose=0, callbacks=[early_stop])


def plot_history(history):
    hist = pd.DataFrame(history.history)
    hist['epoch'] = history.epoch
    plt.figure()
    plt.xlabel('Epoch')
    plt.ylabel('Mean Abs Error [MPG]')
    plt.plot(hist['epoch'], hist['mae'], label='Train Error')
    plt.plot(hist['epoch'], hist['val_mae'], label='Val Error')
    plt.ylim([0, 5])
    plt.legend()
    plt.figure()
    plt.xlabel('Epoch')
    plt.ylabel('Mean Square Error [$MPG^2$]')
    plt.plot(hist['epoch'], hist['mse'], label='Train Error')
    plt.plot(hist['epoch'], hist['val_mse'], label='Val Error')
    plt.ylim([0, 20])
    plt.legend()
    plt.show()


plot_history(history)

# Performance on the test set
loss, mae, mse = model.evaluate(norm_test_data, test_labels, verbose=2)
print(loss)
print(mae)
print(mse)

# Predict
test_preditions = model.predict(norm_test_data)
test_preditions = test_preditions.flatten()
plt.figure()
plt.scatter(test_labels, test_preditions)
plt.xlabel('True Values [MPG]')
plt.ylabel('Predictions [MPG]')
plt.axis('equal')
plt.axis('square')
plt.xlim([0, plt.xlim()[1]])
plt.ylim([0, plt.ylim()[1]])
_ = plt.plot([-100, 100], [-100, 100])

# Distribution of the prediction error
error = test_preditions - test_labels
plt.figure()
plt.hist(error, bins=25)
plt.xlabel("Prediction Error [MPG]")
_ = plt.ylabel('Count')
plt.show()
```
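`norm` standardises every feature with the training split's mean and standard deviation, and the test split reuses those same statistics. This way no information from the test set leaks into preprocessing. The plain-Python sketch below shows the idea on one column. It assumes sample standard deviation (ddof=1), which is what pandas `describe()` reports. The `Weight` values and helper names are hypothetical.

```python
import statistics


def fit_stats(column):
    """Mean and sample standard deviation (ddof=1), as pandas describe() reports."""
    return statistics.mean(column), statistics.stdev(column)


def norm(values, mean, std):
    """Z-score normalisation using statistics taken from the training split only."""
    return [(v - mean) / std for v in values]


train_weights = [3000.0, 3500.0, 4000.0]  # hypothetical 'Weight' values in the training split
test_weights = [3250.0, 4500.0]           # hypothetical test values
mean, std = fit_stats(train_weights)
print(norm(train_weights, mean, std))     # [-1.0, 0.0, 1.0]
print(norm(test_weights, mean, std))      # [-0.5, 2.0]
```

Test values may fall outside the training range (here 2.0 standard deviations). That is expected and is not a sign the normalisation is wrong.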
